H. Jaap van den Herik

Fair Tree Learning

Oct 25, 2021

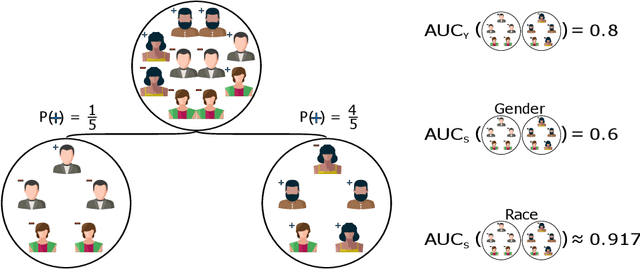

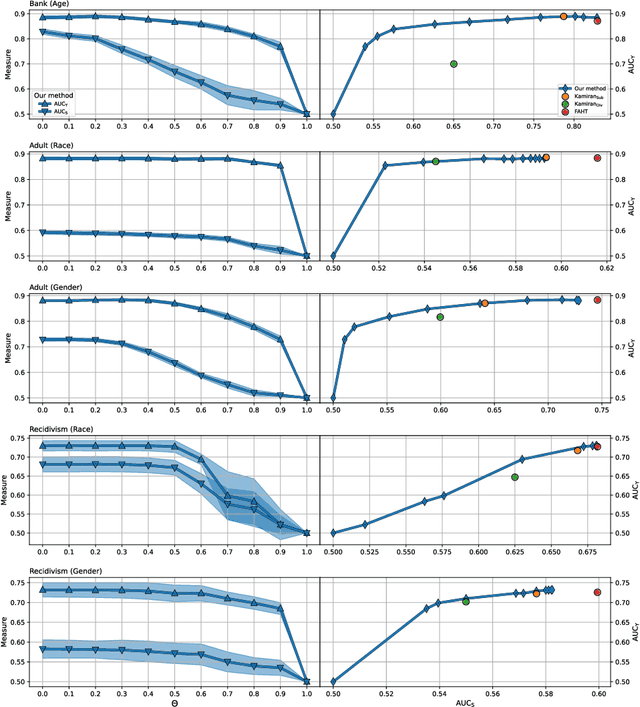

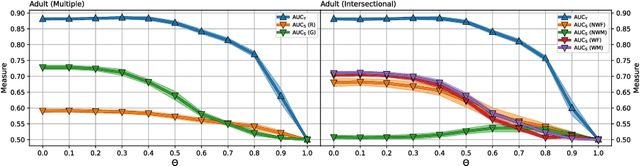

Abstract: When dealing with sensitive data in automated data-driven decision-making, an important concern is to learn predictors with high performance towards a class label, whilst minimising discrimination towards some sensitive attribute, like gender or race, induced from biased data. Various hybrid optimisation criteria exist which combine classification performance with a fairness metric. However, while the threshold-free ROC-AUC is the standard for measuring traditional classification model performance, current fair decision tree methods only optimise for a fixed threshold on both the classification task and the fairness metric. Moreover, current tree learning frameworks do not allow for fair treatment with respect to multiple categories or multiple sensitive attributes. Lastly, the end-users of a fair model should be able to balance fairness and classification performance according to their specific ethical, legal, and societal needs. In this paper we address these shortcomings by proposing a threshold-independent fairness metric termed uniform demographic parity, and a derived splitting criterion entitled SCAFF -- Splitting Criterion AUC for Fairness -- towards fair decision tree learning, which extends to bagged and boosted frameworks. Compared to the state-of-the-art, our method provides three main advantages: (1) classifier performance and fairness are defined continuously instead of relying upon an often arbitrary decision threshold; (2) it leverages multiple sensitive attributes simultaneously, of which the values may be multicategorical; and (3) the unavoidable performance-fairness trade-off is tunable during learning. In our experiments, we demonstrate how SCAFF attains high predictive performance towards the class label and low discrimination with respect to binary, multicategorical, and multiple sensitive attributes, further substantiating our claims.
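To make the idea of a threshold-free, tunable splitting criterion more concrete, the sketch below scores a candidate split by trading off the ROC-AUC towards the class label against the ROC-AUC towards a sensitive attribute. It is an illustration only and not the paper's definition of SCAFF: the function names, the linear trade-off with the parameter `theta`, and the restriction to a single binary sensitive attribute are all assumptions made here for brevity.

```python
from typing import Sequence


def roc_auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Threshold-free ROC-AUC: chance that a random positive outranks a
    random negative, with ties counted as one half."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        return 0.5  # AUC is undefined for a single class; treat as uninformative
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def symmetric_auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Fold the AUC around 0.5 so separation in either direction counts."""
    auc = roc_auc(scores, labels)
    return max(auc, 1.0 - auc)


def split_score(feature: Sequence[float], threshold: float,
                y: Sequence[int], s: Sequence[int], theta: float) -> float:
    """Hypothetical trade-off score for the split `feature <= threshold`.

    theta = 0 rewards only separation of the class label y;
    theta = 1 only penalises separation of the sensitive attribute s.
    (Assumed form, not taken from the paper.)
    """
    side = [1.0 if x <= threshold else 0.0 for x in feature]
    return (1.0 - theta) * symmetric_auc(side, y) - theta * symmetric_auc(side, s)


if __name__ == "__main__":
    feature = [1.0, 2.0, 3.0, 4.0]
    y = [0, 0, 1, 1]   # class label
    s = [0, 1, 0, 1]   # binary sensitive attribute (toy data)
    for theta in (0.0, 0.5, 1.0):
        print(theta, split_score(feature, 2.0, y, s, theta))
```

In this toy data the split at 2.0 separates the class label perfectly while carrying no information about the sensitive attribute, so it scores well for any `theta` below 1. Handling multicategorical or multiple sensitive attributes, as the abstract describes, would need an aggregation step that this sketch omits.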

Genetic Algorithms for Evolving Computer Chess Programs

Nov 21, 2017

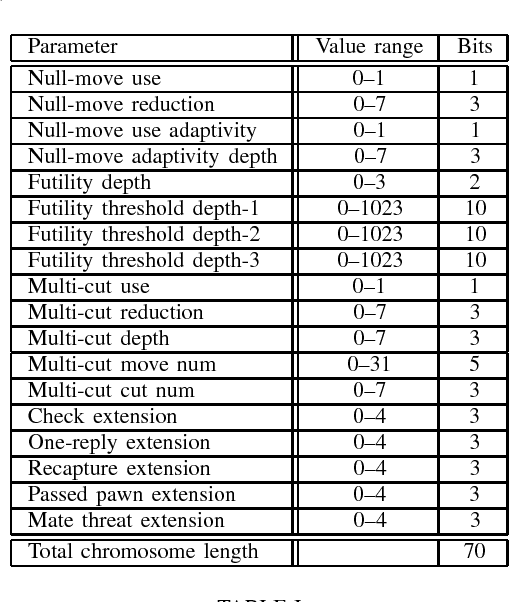

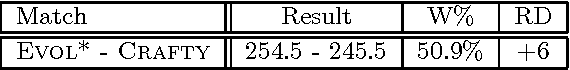

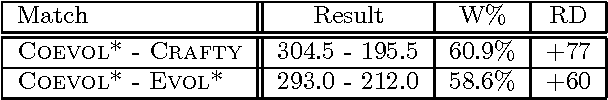

Abstract: This paper demonstrates the use of genetic algorithms for evolving: 1) a grandmaster-level evaluation function, and 2) a search mechanism for a chess program, the parameter values of which are initialized randomly. The evaluation function of the program is evolved by learning from databases of (human) grandmaster games. At first, the organisms are evolved to mimic the behavior of human grandmasters, and then these organisms are further improved upon by means of coevolution. The search mechanism is evolved by learning from tactical test suites. Our results show that the evolved program outperforms a two-time world computer chess champion and is on par with the other leading computer chess programs.

* Winner of Gold Award in 11th Annual "Humies" Awards for Human-Competitive Results. arXiv admin note: substantial text overlap with arXiv:1711.06840, arXiv:1711.06841, arXiv:1711.06839
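The first learning phase described in the abstract above, in which randomly initialised organisms are evolved to mimic recorded expert moves, can be illustrated with a small genetic algorithm. This is a toy sketch under stated assumptions, not the authors' implementation: the linear evaluation over made-up feature vectors, the synthetic positions, the truncation selection, the one-point crossover, and every parameter value are hypothetical choices for illustration.

```python
import random


def pick_move(weights, moves):
    """Index of the move whose feature vector scores highest under a
    linear evaluation with the given weights."""
    return max(range(len(moves)),
               key=lambda i: sum(w * f for w, f in zip(weights, moves[i])))


def fitness(weights, positions):
    """Number of positions where the organism picks the recorded move."""
    return sum(pick_move(weights, moves) == played for moves, played in positions)


def toy_positions(n, n_weights, n_moves, rng):
    """Synthetic stand-in for a game database: a hidden weight vector
    plays the role of the expert whose choices are recorded."""
    hidden = [rng.uniform(-1, 1) for _ in range(n_weights)]
    positions = []
    for _ in range(n):
        moves = [[rng.uniform(-1, 1) for _ in range(n_weights)]
                 for _ in range(n_moves)]
        positions.append((moves, pick_move(hidden, moves)))
    return positions


def evolve(positions, n_weights, pop_size=30, generations=40,
           mutation_rate=0.1, rng=None):
    """Evolve weight vectors (n_weights >= 2) to match the recorded moves."""
    rng = rng or random.Random(0)
    # Parameter values start out random, as in the abstract.
    pop = [[rng.uniform(-1, 1) for _ in range(n_weights)] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        pop.sort(key=lambda w: fitness(w, positions), reverse=True)
        history.append(fitness(pop[0], positions))
        parents = pop[: pop_size // 2]      # truncation selection
        children = [pop[0][:]]              # elitism: keep the best unchanged
        while len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            cut = rng.randrange(1, n_weights)   # one-point crossover
            child = a[:cut] + b[cut:]
            child = [w + rng.gauss(0, 0.3) if rng.random() < mutation_rate else w
                     for w in child]
            children.append(child)
        pop = children
    best = max(pop, key=lambda w: fitness(w, positions))
    return best, history


if __name__ == "__main__":
    rng = random.Random(1)
    positions = toy_positions(50, 4, 5, rng)
    best, history = evolve(positions, 4, rng=rng)
    print("matches per generation:", history)
    print("final matches:", fitness(best, positions), "of", len(positions))
```

Because the best organism is copied unchanged into each new generation, the number of matched moves never decreases. The coevolution phase and the evolution of the search mechanism mentioned in the abstract are not covered by this sketch.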

Simulating Human Grandmasters: Evolution and Coevolution of Evaluation Functions

Nov 18, 2017

Abstract: This paper demonstrates the use of genetic algorithms for evolving a grandmaster-level evaluation function for a chess program. This is achieved by combining supervised and unsupervised learning. In the supervised learning phase the organisms are evolved to mimic the behavior of human grandmasters, and in the unsupervised learning phase these evolved organisms are further improved upon by means of coevolution. While past attempts succeeded in creating a grandmaster-level program by mimicking the behavior of existing computer chess programs, this paper presents the first successful attempt at evolving a state-of-the-art evaluation function by learning only from databases of games played by humans. Our results demonstrate that the evolved program outperforms a two-time World Computer Chess Champion.

* arXiv admin note: substantial text overlap with arXiv:1711.06839, arXiv:1711.06841
