Gottfried Munda

Scalable Full Flow with Learned Binary Descriptors

Jul 20, 2017

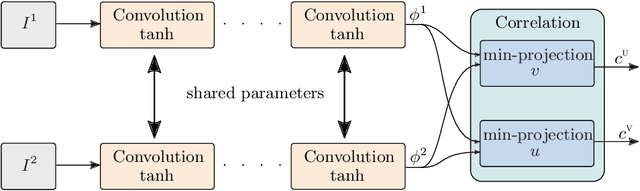

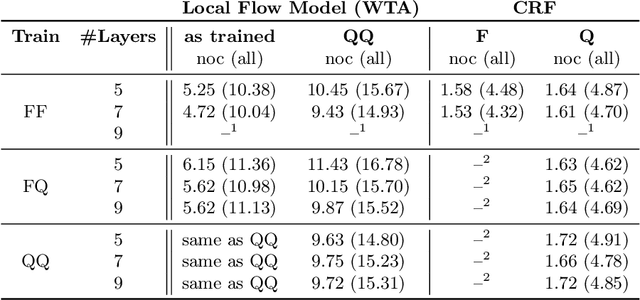

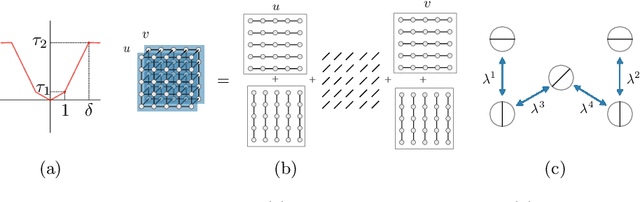

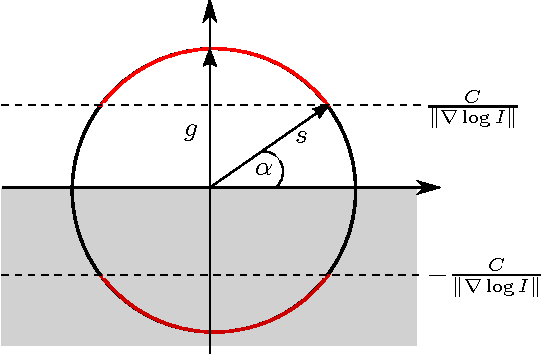

Abstract:We propose a method for large displacement optical flow in which local matching costs are learned by a convolutional neural network (CNN) and a smoothness prior is imposed by a conditional random field (CRF). We tackle the computation- and memory-intensive operations on the 4D cost volume by a min-projection which reduces memory complexity from quadratic to linear and binary descriptors for efficient matching. This enables evaluation of the cost on the fly and allows to perform learning and CRF inference on high resolution images without ever storing the 4D cost volume. To address the problem of learning binary descriptors we propose a new hybrid learning scheme. In contrast to current state of the art approaches for learning binary CNNs we can compute the exact non-zero gradient within our model. We compare several methods for training binary descriptors and show results on public available benchmarks.

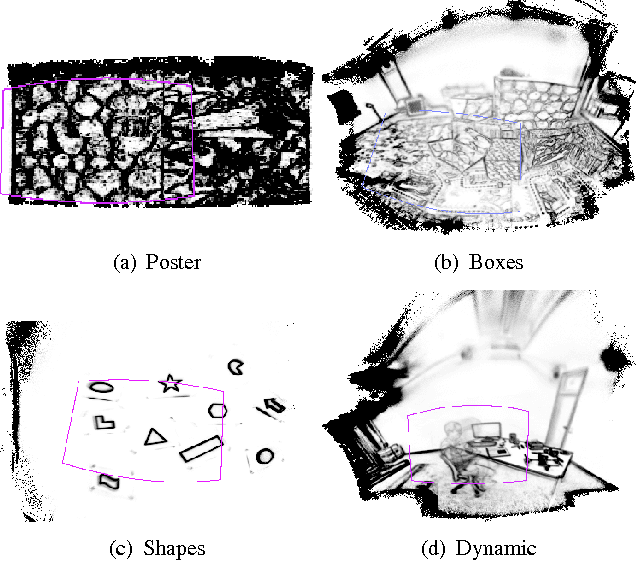

Real-Time Panoramic Tracking for Event Cameras

Mar 21, 2017

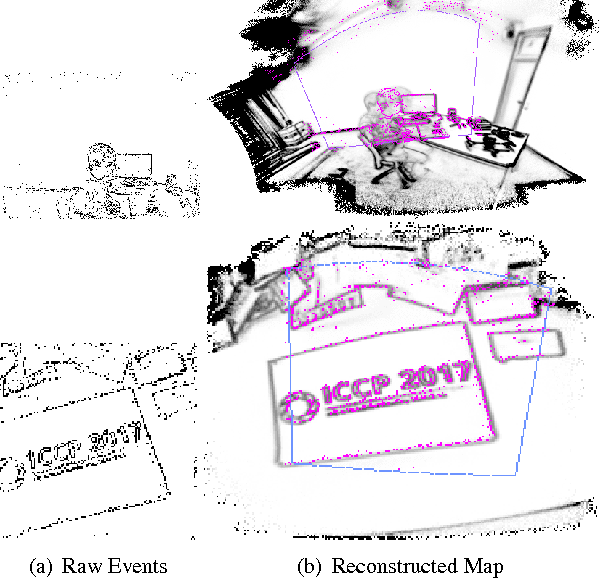

Abstract:Event cameras are a paradigm shift in camera technology. Instead of full frames, the sensor captures a sparse set of events caused by intensity changes. Since only the changes are transferred, those cameras are able to capture quick movements of objects in the scene or of the camera itself. In this work we propose a novel method to perform camera tracking of event cameras in a panoramic setting with three degrees of freedom. We propose a direct camera tracking formulation, similar to state-of-the-art in visual odometry. We show that the minimal information needed for simultaneous tracking and mapping is the spatial position of events, without using the appearance of the imaged scene point. We verify the robustness to fast camera movements and dynamic objects in the scene on a recently proposed dataset and self-recorded sequences.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge