Ghazal Saheb Jam

Developing a Data-Driven Categorical Taxonomy of Emotional Expressions in Real World Human Robot Interactions

Mar 07, 2021

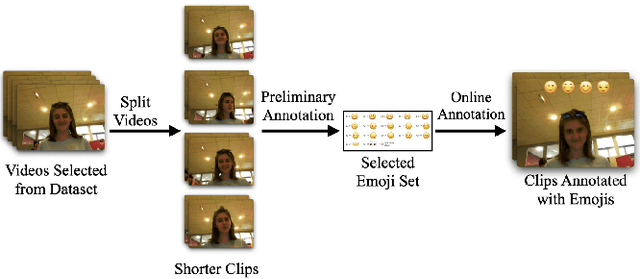

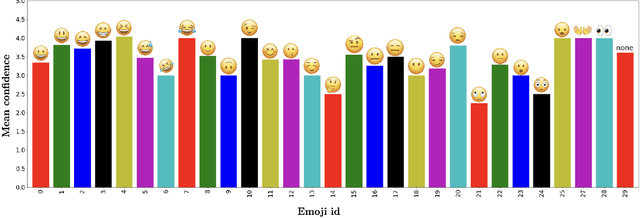

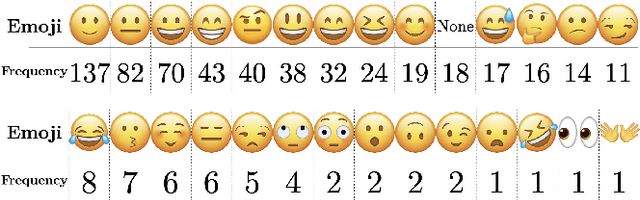

Abstract: Emotions are reactions that can be expressed through a variety of social signals. For example, anger can be expressed through a scowl, narrowed eyes, a long stare, or many other expressions. This complexity is problematic when attempting to recognize a human's expression in a human-robot interaction (HRI): categorical emotion models used in HRI typically use only a few prototypical classes and do not cover the wide array of expressions found in the wild. We propose a data-driven method for increasing the number of known emotion classes present in human-robot interactions to 28 or more. The method automatically segments video streams into short (<10 s) clips and annotates them using the large set of widely understood emojis as categories. In this work, we showcase our initial results on a large in-the-wild HRI dataset (UE-HRI), with 61 clips randomly sampled from the dataset and labeled with 28 different emojis. In particular, our results show that the "skeptical" emoji was a common expression in our dataset, although it is not often considered in typical emotion taxonomies. This is the first step in developing a rich taxonomy of emotional expressions that can be used in the future as labels for training machine learning models, towards more accurate perception of humans by robots.
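The two steps named in the abstract, cutting a stream into clips shorter than 10 s and tallying emoji labels, can be illustrated with a minimal sketch. The abstract does not say how the automatic segmentation works, so the equal-length windows used here are purely an assumption, and the function names `split_into_windows` and `label_frequencies` are hypothetical, not taken from the paper.

```python
from collections import Counter


def split_into_windows(duration_s, max_len_s=10.0):
    """Split [0, duration_s] into equal windows, each strictly shorter than max_len_s.

    Assumption: equal-length windows stand in for the paper's (unspecified)
    automatic segmentation.
    """
    if duration_s <= 0:
        return []
    # One more window than the number of full max_len_s spans keeps every
    # window strictly below the limit.
    n = int(duration_s // max_len_s) + 1
    step = duration_s / n
    return [(i * step, (i + 1) * step) for i in range(n)]


def label_frequencies(labels):
    """Count how often each emoji category was assigned, most frequent first."""
    return Counter(labels).most_common()


if __name__ == "__main__":
    # Hypothetical 25-second stream and made-up labels, for illustration only.
    print(split_into_windows(25.0))
    print(label_frequencies(["skeptical", "smile", "skeptical"]))
```

A frequency table of this kind is what would surface a category such as "skeptical" as common in a sample of labeled clips.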
