Georgiana Neculae

Retrieve to Explain: Evidence-driven Predictions with Language Models

Feb 06, 2024

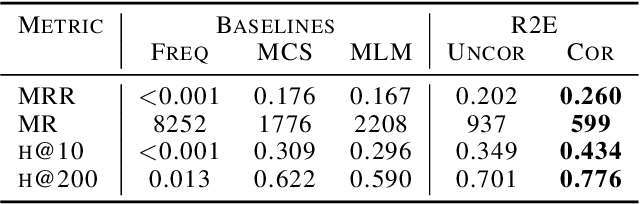

Abstract: Machine learning models, particularly language models, are notoriously difficult to introspect. Black-box models can mask both issues in model training and harmful biases. For human-in-the-loop processes, opaque predictions can erode trust, limiting a model's impact even when it performs effectively. To address these issues, we introduce Retrieve to Explain (R2E). R2E is a retrieval-based language model that prioritizes amongst a pre-defined set of possible answers to a research question based on the evidence in a document corpus, using Shapley values to identify the relative importance of each piece of evidence to the final prediction. R2E can adapt to new evidence without retraining, and can incorporate structured data by templating it into natural language. We assess R2E on the use case of drug target identification from published scientific literature, where we show that the model outperforms an industry-standard genetics-based approach on predicting clinical trial outcomes.
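The idea of attributing a prediction to individual pieces of evidence with Shapley values can be illustrated with a minimal sketch. Everything here is an assumption for illustration: `shapley_values` and `toy_score` are hypothetical names, the passages are made up, and the scoring function is a toy stand-in for a model, not R2E's actual scorer. The sketch enumerates all subsets exactly, which is only feasible for a handful of passages.

```python
from itertools import combinations
from math import factorial


def shapley_values(evidence, score):
    """Exact Shapley value of each evidence item under `score`.

    `score` maps a list of evidence items to a number (hypothetical
    stand-in for a model's prediction given that evidence).
    """
    n = len(evidence)
    values = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for k in range(n):  # size of the coalition that excludes item i
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                without_i = score([evidence[j] for j in subset])
                with_i = score([evidence[j] for j in sorted(subset + (i,))])
                # Weighted marginal contribution of item i to this coalition
                values[i] += weight * (with_i - without_i)
    return values


def toy_score(passages):
    """Toy saturating score: supportive passages raise it more (assumption)."""
    remaining = 1.0
    for p in passages:
        strength = 0.5 if "inhibits" in p else 0.1
        remaining *= 1.0 - strength
    return 1.0 - remaining


evidence = [
    "Gene A inhibits pathway B in disease C.",
    "Gene A is expressed in many tissues.",
    "Knockdown of gene A inhibits tumour growth.",
]
attributions = shapley_values(evidence, toy_score)
```

By the efficiency property of Shapley values, the attributions sum to the difference between the score with all evidence and the score with none, so each value can be read as that passage's share of the prediction.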

Ensembles of Spiking Neural Networks

Oct 15, 2020

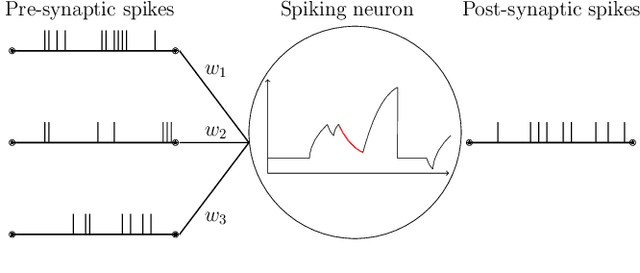

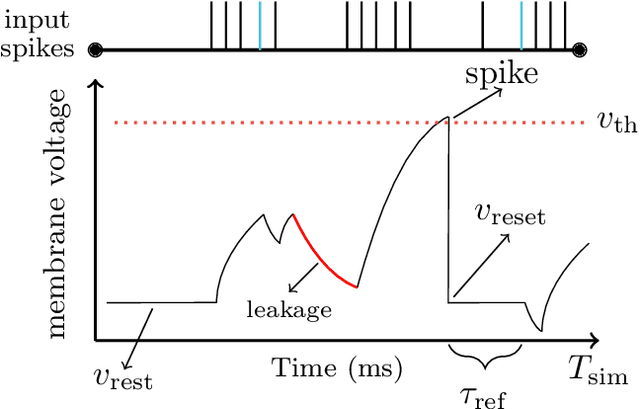

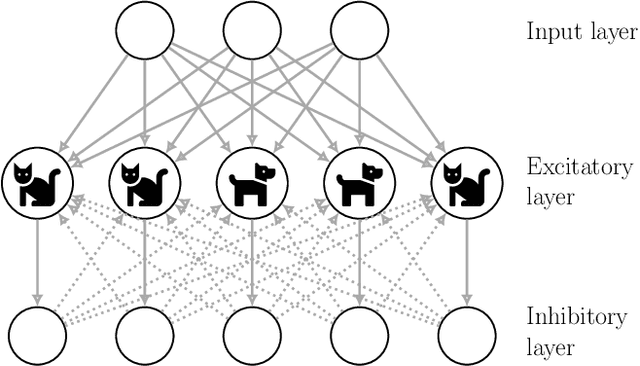

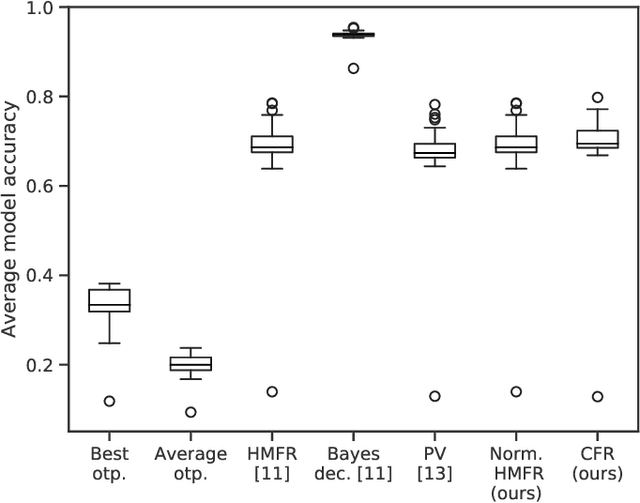

Abstract: This paper demonstrates that ensembles of spiking neural networks can be constructed so that the ensemble performance is guaranteed to be better than the average performance of a single model. Spiking neural networks have not challenged the performance obtained by conventional neural networks on the same problems. Ensemble learning is a framework that has been used extensively to improve the performance of machine learning models. In this paper, we show how to construct ensembles of spiking neural networks that both produce state-of-the-art results and achieve this with less than 50% of the parameters of the original models. We establish a methodology for combining model predictions such that performance improvements are guaranteed for spiking ensembles. For this, we formalize spiking neural networks as GLM predictors, identifying a suitable representation for their target domain. Further, we show how the diversity of our spiking ensembles can be measured using the Ambiguity Decomposition.
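The guarantee mentioned above can be made concrete with the classic Ambiguity Decomposition for squared error and a simple averaging ensemble. This is a minimal sketch under that assumption only: the paper works with GLM predictors, whose loss and combination rule may differ, and the function name and numbers below are hypothetical.

```python
def ambiguity_decomposition(predictions, target):
    """Squared-error ambiguity decomposition for an averaging ensemble.

    Returns (ensemble_error, average_error, ambiguity), which satisfy
    ensemble_error == average_error - ambiguity.
    """
    m = len(predictions)
    ensemble = sum(predictions) / m
    # Error of the combined prediction
    ensemble_error = (ensemble - target) ** 2
    # Mean error of the individual members
    average_error = sum((p - target) ** 2 for p in predictions) / m
    # Spread of the members around the ensemble prediction (diversity)
    ambiguity = sum((p - ensemble) ** 2 for p in predictions) / m
    return ensemble_error, average_error, ambiguity


# Hypothetical member outputs for a single example with target 0.5
ens_err, avg_err, amb = ambiguity_decomposition([0.2, 0.6, 0.7], 0.5)
```

Because the ambiguity term is a mean of squares, it is never negative, so under these assumptions the ensemble error cannot exceed the average member error, and the gap grows with the diversity of the members.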
