Ensembles of Spiking Neural Networks


Oct 15, 2020

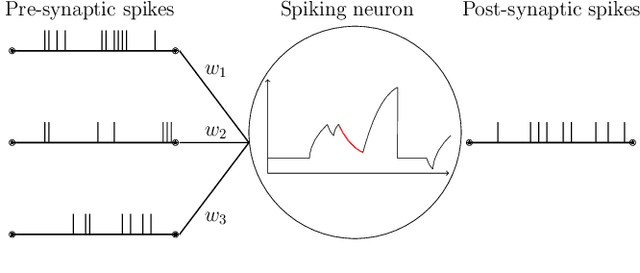

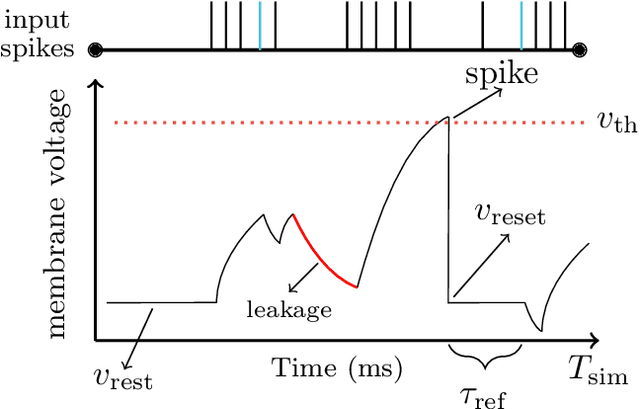

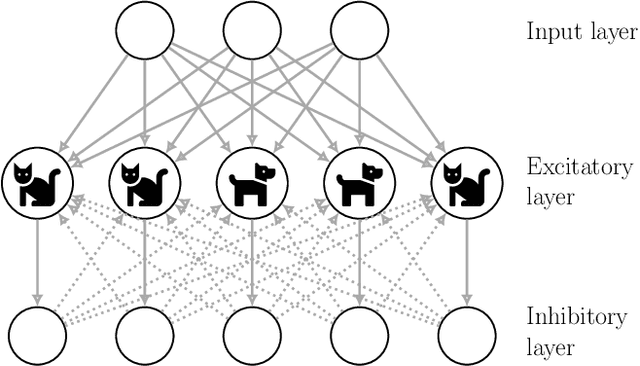

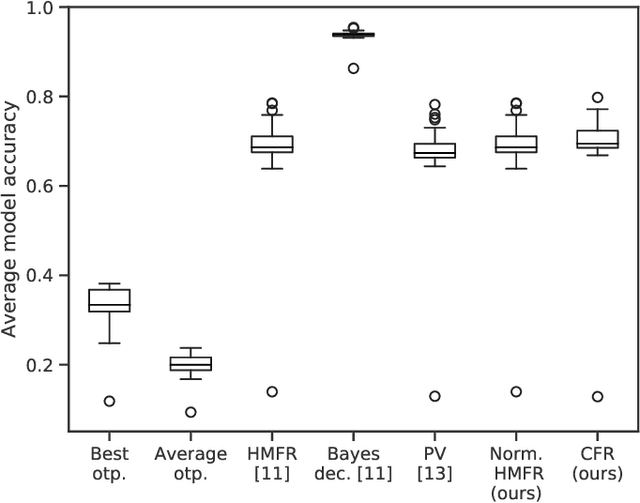

This paper demonstrates that ensembles of spiking neural networks can be constructed so that the ensemble's performance is guaranteed to be better than the average performance of its individual models. To date, spiking neural networks have not rivaled the performance that conventional neural networks obtain on the same problems. Ensemble learning is a framework that has been used extensively to improve the performance of machine learning models. In this paper, we show how to construct ensembles of spiking neural networks that produce state-of-the-art results while using fewer than 50% of the parameters of the original models. We establish a methodology for combining model predictions such that performance improvements are guaranteed for spiking ensembles. To do so, we formalize spiking neural networks as GLM predictors and identify a suitable representation for their target domain. Further, we show how the diversity of our spiking ensembles can be measured using the Ambiguity Decomposition.
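As an illustration of the guarantee described above, the following minimal sketch checks the Ambiguity Decomposition numerically in its classic squared-error form: for a convex combination of predictors, the ensemble error equals the weighted average member error minus the ambiguity (the weighted spread of the members around the ensemble prediction). Because the ambiguity is never negative, the ensemble error can never exceed the average member error. The function name `ambiguity_decomposition`, the scalar regression setting, and the synthetic predictions are assumptions made for this example; the paper works with spiking networks formalized as GLM predictors, and its exact formulation may differ.

```python
import random


def ambiguity_decomposition(member_preds, target, weights=None):
    """Return (ensemble_error, mean_member_error, ambiguity) for one example.

    member_preds: scalar predictions, one per ensemble member.
    target: scalar ground-truth value.
    weights: optional convex weights (non-negative, summing to 1);
             defaults to a uniform average.
    """
    m = len(member_preds)
    if weights is None:
        weights = [1.0 / m] * m

    # Ensemble prediction is the convex combination of the member predictions.
    ensemble_pred = sum(w * f for w, f in zip(weights, member_preds))

    # Squared error of the combined prediction.
    ensemble_error = (ensemble_pred - target) ** 2

    # Weighted average of the members' individual squared errors.
    mean_member_error = sum(
        w * (f - target) ** 2 for w, f in zip(weights, member_preds)
    )

    # Ambiguity: weighted spread of the members around the ensemble prediction.
    ambiguity = sum(
        w * (f - ensemble_pred) ** 2 for w, f in zip(weights, member_preds)
    )
    return ensemble_error, mean_member_error, ambiguity


# Hypothetical data: five noisy predictors of the same target value.
rng = random.Random(0)
target = 1.0
member_preds = [target + rng.gauss(0.0, 0.5) for _ in range(5)]

ens_err, avg_err, amb = ambiguity_decomposition(member_preds, target)
print(f"ensemble error      : {ens_err:.6f}")
print(f"mean member error   : {avg_err:.6f}")
print(f"ambiguity           : {amb:.6f}")
print(f"mean error - ambig. : {avg_err - amb:.6f}")
```

The last two printed quantities show the identity directly: the ensemble error matches the mean member error minus the ambiguity, so greater disagreement among members (larger ambiguity) widens the gap between the ensemble and its average member.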
