Gary S. W. Goh

Understanding Integrated Gradients with SmoothTaylor for Deep Neural Network Attribution

Apr 22, 2020

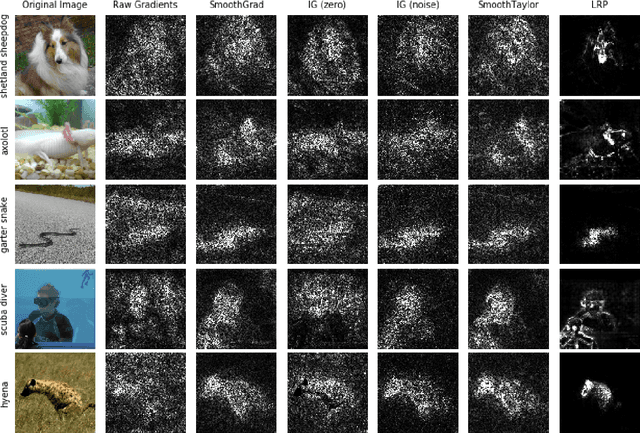

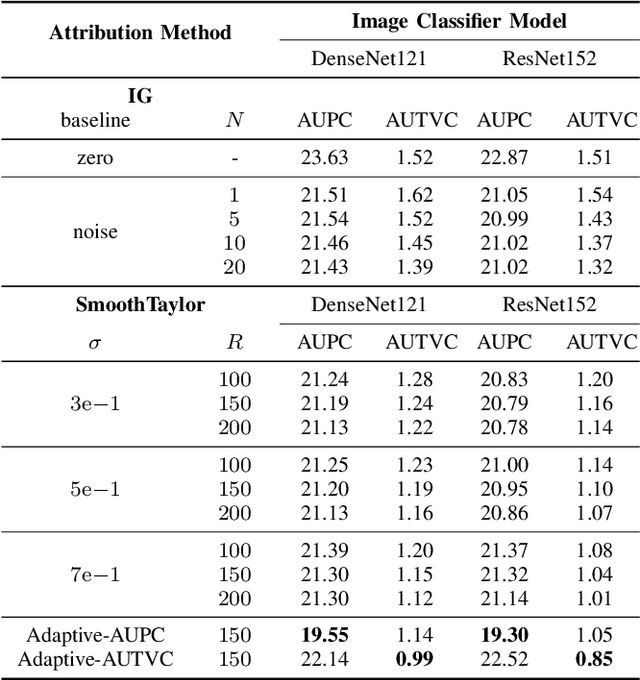

Abstract: Integrated gradients as an attribution method for deep neural network models offers simple implementability. However, it also suffers from noisy explanations, which makes them harder to interpret. In this paper, we present Smooth Integrated Gradients as a statistically improved attribution method inspired by Taylor's theorem, which does not require a fixed baseline to be chosen. We apply both methods to the image classification problem, using the ILSVRC2012 ImageNet object recognition dataset and a couple of pretrained image models to generate attribution maps of their predictions. These attribution maps are visualized as saliency maps, which can be evaluated qualitatively. We also evaluate them empirically using quantitative metrics such as perturbation-based score drops and multi-scaled total variance. We further propose adaptive noising to optimize the noise scale hyperparameter in our proposed method. From our experiments, we find that the Smooth Integrated Gradients approach together with adaptive noising generates better-quality saliency maps with less noise and higher sensitivity to the relevant points in the input space.
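For readers unfamiliar with the baseline method, the following is a minimal sketch of standard integrated gradients, not the Smooth Integrated Gradients method proposed in the paper. It assumes a toy single-unit model with an analytic gradient; the names `f`, `grad_f`, `integrated_gradients`, the weights `w`, and the all-zeros baseline are hypothetical choices for illustration only. It shows the fixed baseline that the standard method requires, and checks that the attributions sum to the difference in model output between the input and the baseline.

```python
import numpy as np

def f(x, w):
    """Toy differentiable 'model' (hypothetical): a single tanh unit."""
    return np.tanh(w @ x)

def grad_f(x, w):
    """Analytic gradient of f with respect to the input x."""
    return (1.0 - np.tanh(w @ x) ** 2) * w

def integrated_gradients(x, baseline, w, steps=200):
    """Approximate integrated gradients along the straight path baseline -> x."""
    # Midpoint Riemann sum over the interpolation coefficient alpha in (0, 1).
    alphas = (np.arange(steps) + 0.5) / steps
    grads = np.array([grad_f(baseline + a * (x - baseline), w) for a in alphas])
    # Scale the path-averaged gradient by the input difference, per feature.
    return (x - baseline) * grads.mean(axis=0)

# Illustrative values only (assumed, not taken from the paper).
w = np.array([0.5, -1.0, 2.0])
x = np.array([1.0, 0.3, -0.2])
baseline = np.zeros_like(x)  # the fixed baseline that standard IG needs

attributions = integrated_gradients(x, baseline, w)

# Attributions should sum to f(x) - f(baseline), up to integration error.
completeness_gap = abs(attributions.sum() - (f(x, w) - f(baseline, w)))
print(attributions, completeness_gap)
```

In practice the gradient would come from automatic differentiation of a pretrained network rather than a hand-written formula, and the choice of baseline (here all zeros) noticeably affects the resulting attribution map.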
