Francois Rivest

Monte Carlo Tree Search Satellite Scheduling Under Cloud Cover Uncertainty

May 31, 2024

Abstract: Efficient utilization of satellite resources in dynamic environments remains a challenging problem in satellite scheduling. This paper addresses the multi-satellite collection scheduling problem (m-SatCSP), aiming to optimize task scheduling over a constellation of satellites under uncertain conditions such as cloud cover. Leveraging Monte Carlo Tree Search (MCTS), a stochastic search algorithm, two versions of MCTS are explored to schedule satellites effectively. Hyperparameter tuning is conducted to optimize the algorithm's performance. Experimental results demonstrate the effectiveness of the MCTS approach, outperforming existing methods in both solution quality and efficiency. Comparative analysis against other scheduling algorithms showcases competitive performance, positioning MCTS as a promising solution for satellite task scheduling in dynamic environments.
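The abstract does not say which selection rule the two MCTS versions use. As a rough illustration only, the sketch below shows the UCB1 rule that many MCTS implementations use to choose which child node to explore. The function names, the `(action, total_reward, visits)` tuple layout, and the default exploration constant `c` are assumptions made for this example, not details from the paper. The constant `c` is the kind of hyperparameter that tuning would typically adjust.

```python
import math


def ucb1_score(total_reward, visits, parent_visits, c=math.sqrt(2)):
    """UCB1 score: average reward plus an exploration bonus (illustrative, not the paper's rule)."""
    if visits == 0:
        return math.inf  # unvisited children are always tried first
    exploit = total_reward / visits
    explore = c * math.sqrt(math.log(parent_visits) / visits)
    return exploit + explore


def select_child(children, parent_visits, c=math.sqrt(2)):
    """Pick the action with the highest UCB1 score.

    children: list of (action, total_reward, visits) tuples (hypothetical layout).
    """
    best = max(children, key=lambda ch: ucb1_score(ch[1], ch[2], parent_visits, c))
    return best[0]
```

A larger `c` favors rarely visited scheduling actions, while a smaller `c` favors actions that have already returned high rewards.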

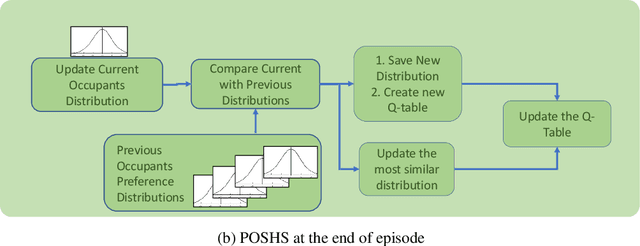

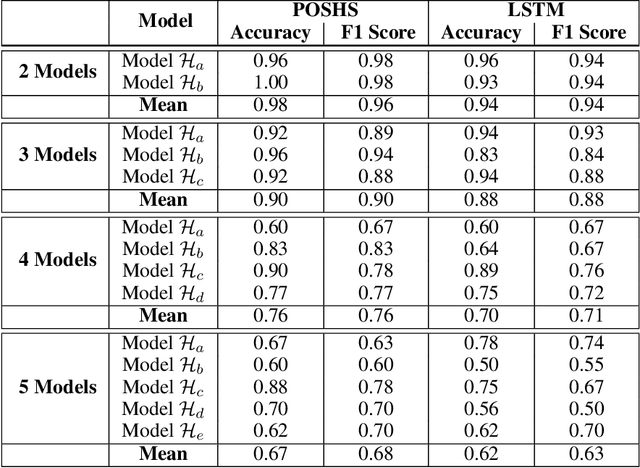

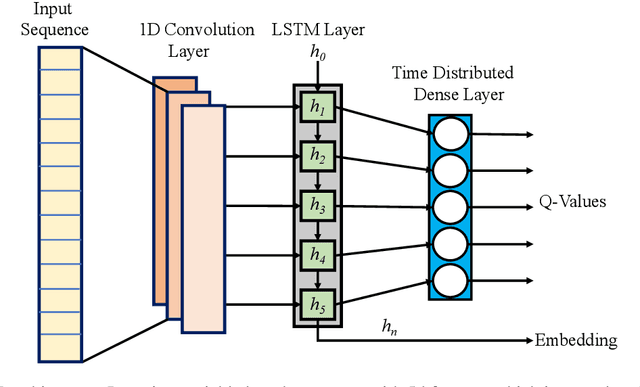

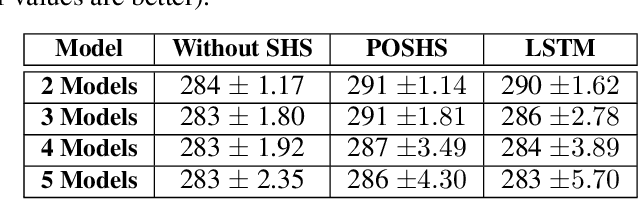

Towards Personalization of User Preferences in Partially Observable Smart Home Environments

Dec 15, 2021

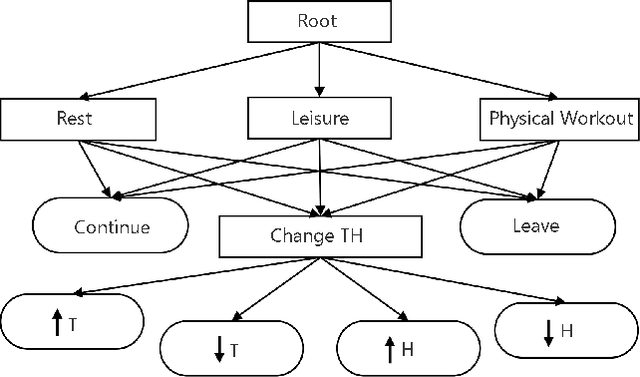

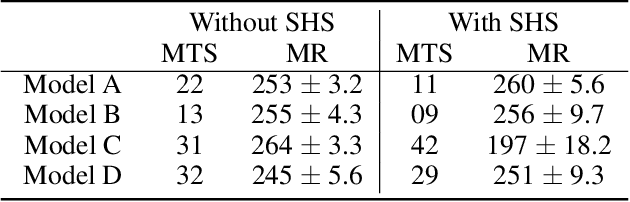

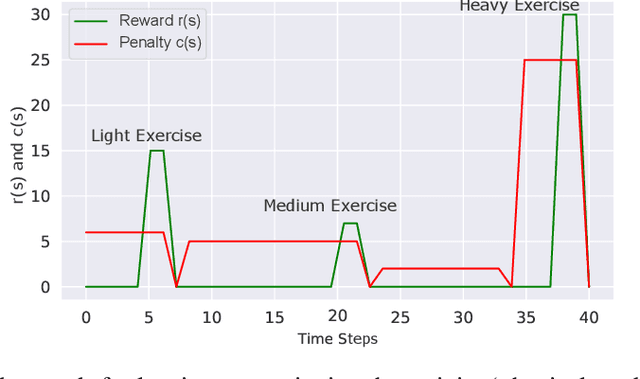

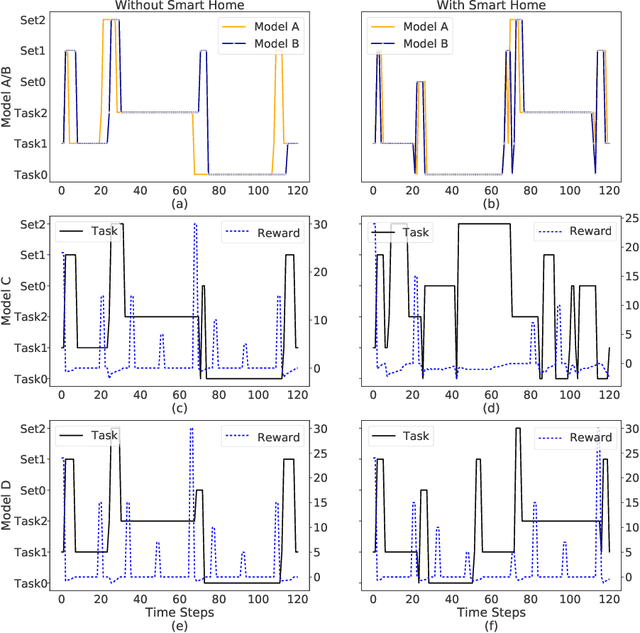

Abstract: The technologies used in smart homes have recently improved to learn user preferences from feedback in order to enhance user convenience and quality of experience. Most smart homes learn a uniform model to represent the thermal preferences of users, which generally fails when the pool of occupants includes people with different sensitivities to temperature, for instance due to age and physiological factors. Thus, a smart home with a single optimal policy may fail to provide comfort when a new user with a different preference is integrated into the home. In this paper, we propose a Bayesian reinforcement learning framework that can approximate the current occupant state in a partially observable smart home environment using the occupant's thermal preference, and then identify the occupant as a new user or someone already known to the system. Our proposed framework can be used to identify users based on the temperature and humidity preferences of the occupant when performing different activities to enable personalization and improve comfort. We then compare the proposed framework with a baseline long short-term memory learner that learns the thermal preference of the user from the sequence of actions which it takes. We perform these experiments with up to 5 simulated human models, each based on hierarchical reinforcement learning. The results show that our framework can approximate the belief state of the current user with a high degree of accuracy, using only the user's temperature and humidity preferences across different activities.
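To make the idea of a belief state over occupants concrete, here is a minimal sketch of a single Bayes-rule update over candidate user identities. It is not the paper's framework: the function name, the dictionary layout, and the use of per-user likelihoods for an observed thermal setting are assumptions chosen for illustration.

```python
def update_belief(belief, likelihoods):
    """One Bayes-rule step: posterior is proportional to prior times likelihood.

    belief: dict mapping user id -> prior probability (hypothetical layout).
    likelihoods: dict mapping user id -> probability of the observed
        temperature/humidity choice if that user were the occupant.
    """
    unnormalized = {user: belief[user] * likelihoods[user] for user in belief}
    total = sum(unnormalized.values())
    if total == 0:
        # The observation is impossible under every known user; keep the prior.
        return dict(belief)
    return {user: p / total for user, p in unnormalized.items()}
```

Repeating this update as the occupant sets temperature and humidity across activities concentrates the belief on the user whose preferences best explain the observations. A system that also needs to detect new users would need an extra hypothesis for "unknown occupant", which this sketch omits.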

Latent Time-Adaptive Drift-Diffusion Model

Jun 04, 2021

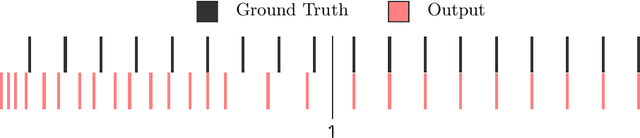

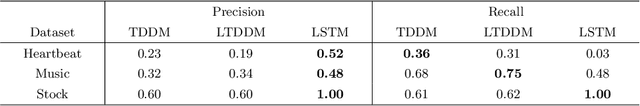

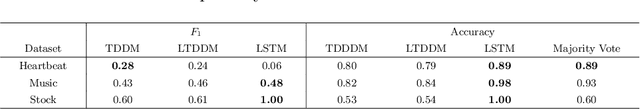

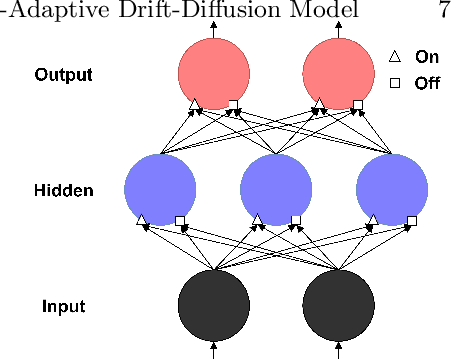

Abstract: Animals can quickly learn the timing of events with fixed intervals, and their rate of acquisition does not depend on the length of the interval. In contrast, recurrent neural networks that use gradient-based learning have difficulty predicting the timing of events that depend on stimuli that occurred long ago. We present the latent time-adaptive drift-diffusion model (LTDDM), an extension to the time-adaptive drift-diffusion model (TDDM), a model for animal learning of timing that exhibits behavioural properties consistent with experimental data from animals. The performance of LTDDM is compared to that of a state-of-the-art long short-term memory (LSTM) recurrent neural network across three timing tasks. Differences in the relative performance of these two models are discussed, and it is shown how LTDDM can learn these event time series orders of magnitude faster than recurrent neural networks.

Potential Impacts of Smart Homes on Human Behavior: A Reinforcement Learning Approach

Mar 16, 2021

Abstract: We aim to investigate the potential impacts of smart homes on human behavior. To this end, we simulate a series of human models capable of performing various activities inside a reinforcement learning-based smart home. We then investigate the possibility of human behavior being altered as a result of the smart home and the human model adapting to one another. We design a semi-Markov decision process human task interleaving model based on hierarchical reinforcement learning that learns to make decisions to either pursue or leave an activity. We then integrate our human model in the smart home, which is based on Q-learning. We show that a smart home trained on a generic human model is able to anticipate and learn the thermal preferences of human models with intrinsic rewards similar to the generic model. The hierarchical human model learns to complete each activity and set optimal thermal settings for maximum comfort. With the smart home, the number of time steps required to change the thermal settings is reduced for the human models. Interestingly, we observe that small variations in the human model reward structures can lead to the opposite behavior in the form of unexpected switching between activities, which signals changes in human behavior due to the presence of the smart home.
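The abstract states that the smart home is based on Q-learning. For readers unfamiliar with it, the sketch below shows the standard tabular Q-learning update. The state and action names, the table layout, and the values of the learning rate `alpha` and discount factor `gamma` are assumptions for illustration and do not come from the paper.

```python
from collections import defaultdict


def q_update(q, state, action, reward, next_state, actions, alpha=0.1, gamma=0.9):
    """Apply one tabular Q-learning update and return the new Q-value.

    q: mapping from (state, action) to estimated return; a defaultdict(float)
       starts every entry at 0.0 (hypothetical layout).
    """
    # Value of the best action available from the next state.
    best_next = max(q[(next_state, a)] for a in actions)
    td_target = reward + gamma * best_next
    # Move the current estimate a fraction alpha toward the target.
    q[(state, action)] += alpha * (td_target - q[(state, action)])
    return q[(state, action)]


# Hypothetical usage: the home heats a cold room and receives a comfort reward.
q_table = defaultdict(float)
q_update(q_table, "cold", "heat", 1.0, "comfortable", ["heat", "cool", "hold"])
```

In a setting like the one described, the reward would reflect occupant comfort, so repeated updates push the home toward thermal settings the human model would otherwise have to choose itself.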
