Francisco Leiva

Data-driven control of hydraulic impact hammers under strict operational and control constraints

Jan 12, 2026Abstract:This paper presents a data-driven methodology for the control of static hydraulic impact hammers, also known as rock breakers, which are commonly used in the mining industry. The task addressed in this work is that of controlling the rock-breaker so its end-effector reaches arbitrary target poses, which is required in normal operation to place the hammer on top of rocks that need to be fractured. The proposed approach considers several constraints, such as unobserved state variables due to limited sensing and the strict requirement of using a discrete control interface at the joint level. First, the proposed methodology addresses the problem of system identification to obtain an approximate dynamic model of the hydraulic arm. This is done via supervised learning, using only teleoperation data. The learned dynamic model is then exploited to obtain a controller capable of reaching target end-effector poses. For policy synthesis, both reinforcement learning (RL) and model predictive control (MPC) algorithms are utilized and contrasted. As a case study, we consider the automation of a Bobcat E10 mini-excavator arm with a hydraulic impact hammer attached as end-effector. Using this machine, both the system identification and policy synthesis stages are studied in simulation and in the real world. The best RL-based policy consistently reaches target end-effector poses with position errors below 12 cm and pitch angle errors below 0.08 rad in the real world. Considering that the impact hammer has a 4 cm diameter chisel, this level of precision is sufficient for breaking rocks. Notably, this is accomplished by relying only on approximately 68 min of teleoperation data to train and 8 min to evaluate the dynamic model, and without performing any adjustments for a successful policy Sim2Real transfer. A demonstration of policy execution in the real world can be found in https://youtu.be/e-7tDhZ4ZgA.

Sistema de navegación de cobertura para vehículos no holonómicos en ambientes de exterior

Dec 28, 2025Abstract:In mobile robotics, coverage navigation refers to the deliberate movement of a robot with the purpose of covering a certain area or volume. Performing this task properly is fundamental for the execution of several activities, for instance, cleaning a facility with a robotic vacuum cleaner. In the mining industry, it is required to perform coverage in several unit processes related with material movement using industrial machinery, for example, in cleaning tasks, in dumps, and in the construction of tailings dam walls. The automation of these processes is fundamental to enhance the security associated with their execution. In this work, a coverage navigation system for a non-holonomic robot is presented. This work is intended to be a proof of concept for the potential automation of various unit processes that require coverage navigation like the ones mentioned before. The developed system includes the calculation of routes that allow a mobile platform to cover a specific area, and incorporates recovery behaviors in case that an unforeseen event occurs, such as the arising of dynamic or previously unmapped obstacles in the terrain to be covered, e.g., other machines or pedestrians passing through the area, being able to perform evasive maneuvers and post-recovery to ensure a complete coverage of the terrain. The system was tested in different simulated and real outdoor environments, obtaining results near 90% of coverage in the majority of experiments. The next step of development is to scale up the utilized robot to a mining machine/vehicle whose operation will be validated in a real environment. The result of one of the tests performed in the real world can be seen in the video available in https://youtu.be/gK7_3bK1P5g.

Autonomous loading of ore piles with Load-Haul-Dump machines using Deep Reinforcement Learning

Sep 11, 2024

Abstract:This work presents a deep reinforcement learning-based approach to train controllers for the autonomous loading of ore piles with a Load-Haul-Dump (LHD) machine. These controllers must perform a complete loading maneuver, filling the LHD's bucket with material while avoiding wheel drift, dumping material, or getting stuck in the pile. The training process is conducted entirely in simulation, using a simple environment that leverages the Fundamental Equation of Earth-Moving Mechanics so as to achieve a low computational cost. Two different types of policies are trained: one with a hybrid action space and another with a continuous action space. The RL-based policies are evaluated both in simulation and in the real world using a scaled LHD and a scaled muck pile, and their performance is compared to that of a heuristics-based controller and human teleoperation. Additional real-world experiments are performed to assess the robustness of the RL-based policies to measurement errors in the characterization of the piles. Overall, the RL-based controllers show good performance in the real world, achieving fill factors between 71-94%, and less wheel drift than the other baselines during the loading maneuvers. A video showing the training environment and the learned behavior in simulation, as well as some of the performed experiments in the real world, can be found in https://youtu.be/jOpA1rkwhDY.

Combining RL and IL using a dynamic, performance-based modulation over learning signals and its application to local planning

May 16, 2024

Abstract:This paper proposes a method to combine reinforcement learning (RL) and imitation learning (IL) using a dynamic, performance-based modulation over learning signals. The proposed method combines RL and behavioral cloning (IL), or corrective feedback in the action space (interactive IL/IIL), by dynamically weighting the losses to be optimized, taking into account the backpropagated gradients used to update the policy and the agent's estimated performance. In this manner, RL and IL/IIL losses are combined by equalizing their impact on the policy's updates, while modulating said impact such that IL signals are prioritized at the beginning of the learning process, and as the agent's performance improves, the RL signals become progressively more relevant, allowing for a smooth transition from pure IL/IIL to pure RL. The proposed method is used to learn local planning policies for mobile robots, synthesizing IL/IIL signals online by means of a scripted policy. An extensive evaluation of the application of the proposed method to this task is performed in simulations, and it is empirically shown that it outperforms pure RL in terms of sample efficiency (achieving the same level of performance in the training environment utilizing approximately 4 times less experiences), while consistently producing local planning policies with better performance metrics (achieving an average success rate of 0.959 in an evaluation environment, outperforming pure RL by 12.5% and pure IL by 13.9%). Furthermore, the obtained local planning policies are successfully deployed in the real world without performing any major fine tuning. The proposed method can extend existing RL algorithms, and is applicable to other problems for which generating IL/IIL signals online is feasible. A video summarizing some of the real world experiments that were conducted can be found in https://youtu.be/mZlaXn9WGzw.

Learning to Play Soccer From Scratch: Sample-Efficient Emergent Coordination through Curriculum-Learning and Competition

Mar 09, 2021

Abstract:This work proposes a scheme that allows learning complex multi-agent behaviors in a sample efficient manner, applied to 2v2 soccer. The problem is formulated as a Markov game, and solved using deep reinforcement learning. We propose a basic multi-agent extension of TD3 for learning the policy of each player, in a decentralized manner. To ease learning, the task of 2v2 soccer is divided in three stages: 1v0, 1v1 and 2v2. The process of learning in multi-agent stages (1v1 and 2v2) uses agents trained on a previous stage as fixed opponents. In addition, we propose using experience sharing, a method that shares experience from a fixed opponent, trained in a previous stage, for training the agent currently learning, and a form of frame-skipping, to raise performance significantly. Our results show that high quality soccer play can be obtained with our approach in just under 40M interactions. A summarized video of the resulting game play can be found in https://youtu.be/f25l1j1U9RM.

Playing Soccer without Colors in the SPL: A Convolutional Neural Network Approach

Nov 29, 2018

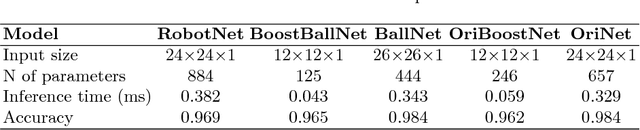

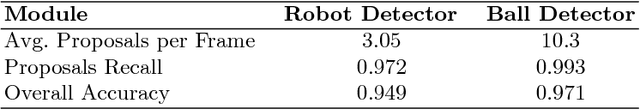

Abstract:The goal of this paper is to propose a vision system for humanoid robotic soccer that does not use any color information. The main features of this system are: (i) real-time operation in the NAO robot, and (ii) the ability to detect the ball, the robots, their orientations, the lines and key field features robustly. Our ball detector, robot detector, and robot's orientation detector obtain the highest reported detection rates. The proposed vision system is tested in a SPL field with several NAO robots under realistic and highly demanding conditions. The obtained results are: robot detection rate of 94.90%, ball detection rate of 97.10%, and a completely perceived orientation rate of 99.88% when the observed robot is static, and 95.52% when the observed robot is moving.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge