Finn M. Sherry

Flow Matching on Lie Groups

Apr 01, 2025Abstract:Flow Matching (FM) is a recent generative modelling technique: we aim to learn how to sample from distribution $\mathfrak{X}_1$ by flowing samples from some distribution $\mathfrak{X}_0$ that is easy to sample from. The key trick is that this flow field can be trained while conditioning on the end point in $\mathfrak{X}_1$: given an end point, simply move along a straight line segment to the end point (Lipman et al. 2022). However, straight line segments are only well-defined on Euclidean space. Consequently, Chen and Lipman (2023) generalised the method to FM on Riemannian manifolds, replacing line segments with geodesics or their spectral approximations. We take an alternative point of view: we generalise to FM on Lie groups by instead substituting exponential curves for line segments. This leads to a simple, intrinsic, and fast implementation for many matrix Lie groups, since the required Lie group operations (products, inverses, exponentials, logarithms) are simply given by the corresponding matrix operations. FM on Lie groups could then be used for generative modelling with data consisting of sets of features (in $\mathbb{R}^n$) and poses (in some Lie group), e.g. the latent codes of Equivariant Neural Fields (Wessels et al. 2025).

Orientation Scores should be a Piece of Cake

Apr 01, 2025

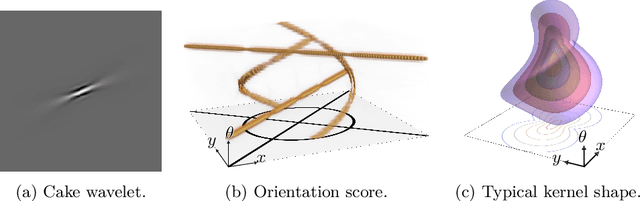

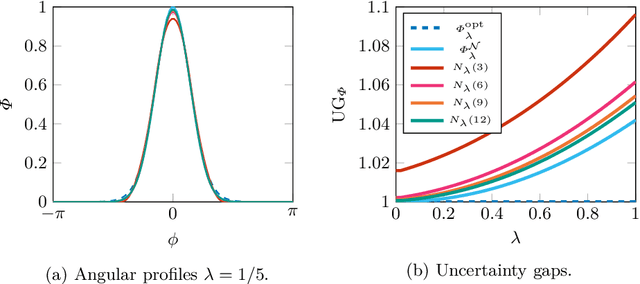

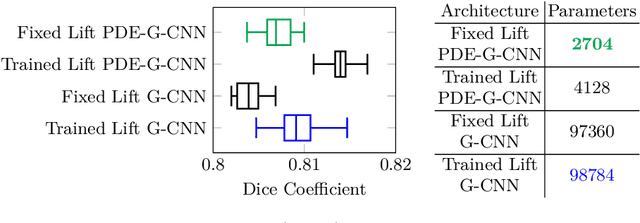

Abstract:We axiomatically derive a family of wavelets for an orientation score, lifting from position space $\mathbb{R}^2$ to position and orientation space $\mathbb{R}^2\times S^1$, with fast reconstruction property, that minimise position-orientation uncertainty. We subsequently show that these minimum uncertainty states are well-approximated by cake wavelets: for standard parameters, the uncertainty gap of cake wavelets is less than 1.1, and in the limit, we prove the uncertainty gap tends to the minimum of 1. Next, we complete a previous theoretical argument that one does not have to train the lifting layer in (PDE-)G-CNNs, but can instead use cake wavelets. Finally, we show experimentally that in this way we can reduce the network complexity and improve the interpretability of (PDE-)G-CNNs, with only a slight impact on the model's performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge