Faraz S. Ahmad

A Comparative Study of Pretrained Language Models for Long Clinical Text

Jan 27, 2023

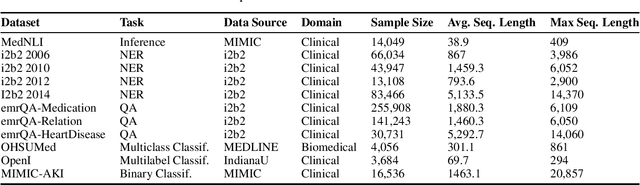

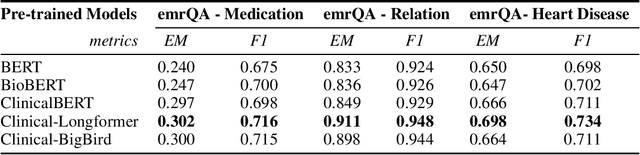

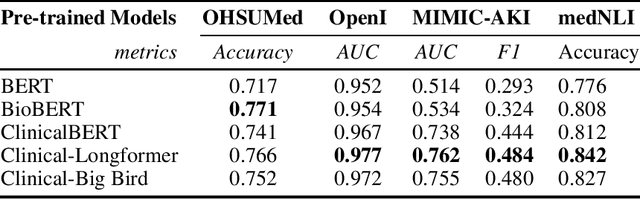

Abstract:Objective: Clinical knowledge enriched transformer models (e.g., ClinicalBERT) have state-of-the-art results on clinical NLP (natural language processing) tasks. One of the core limitations of these transformer models is the substantial memory consumption due to their full self-attention mechanism, which leads to the performance degradation in long clinical texts. To overcome this, we propose to leverage long-sequence transformer models (e.g., Longformer and BigBird), which extend the maximum input sequence length from 512 to 4096, to enhance the ability to model long-term dependencies in long clinical texts. Materials and Methods: Inspired by the success of long sequence transformer models and the fact that clinical notes are mostly long, we introduce two domain enriched language models, Clinical-Longformer and Clinical-BigBird, which are pre-trained on a large-scale clinical corpus. We evaluate both language models using 10 baseline tasks including named entity recognition, question answering, natural language inference, and document classification tasks. Results: The results demonstrate that Clinical-Longformer and Clinical-BigBird consistently and significantly outperform ClinicalBERT and other short-sequence transformers in all 10 downstream tasks and achieve new state-of-the-art results. Discussion: Our pre-trained language models provide the bedrock for clinical NLP using long texts. We have made our source code available at https://github.com/luoyuanlab/Clinical-Longformer, and the pre-trained models available for public download at: https://huggingface.co/yikuan8/Clinical-Longformer. Conclusion: This study demonstrates that clinical knowledge enriched long-sequence transformers are able to learn long-term dependencies in long clinical text. Our methods can also inspire the development of other domain-enriched long-sequence transformers.

Clinical-Longformer and Clinical-BigBird: Transformers for long clinical sequences

Feb 12, 2022

Abstract:Transformers-based models, such as BERT, have dramatically improved the performance for various natural language processing tasks. The clinical knowledge enriched model, namely ClinicalBERT, also achieved state-of-the-art results when performed on clinical named entity recognition and natural language inference tasks. One of the core limitations of these transformers is the substantial memory consumption due to their full self-attention mechanism. To overcome this, long sequence transformer models, e.g. Longformer and BigBird, were proposed with the idea of sparse attention mechanism to reduce the memory usage from quadratic to the sequence length to a linear scale. These models extended the maximum input sequence length from 512 to 4096, which enhanced the ability of modeling long-term dependency and consequently achieved optimal results in a variety of tasks. Inspired by the success of these long sequence transformer models, we introduce two domain enriched language models, namely Clinical-Longformer and Clinical-BigBird, which are pre-trained from large-scale clinical corpora. We evaluate both pre-trained models using 10 baseline tasks including named entity recognition, question answering, and document classification tasks. The results demonstrate that Clinical-Longformer and Clinical-BigBird consistently and significantly outperform ClinicalBERT as well as other short-sequence transformers in all downstream tasks. We have made our source code available at [https://github.com/luoyuanlab/Clinical-Longformer] the pre-trained models available for public download at: [https://huggingface.co/yikuan8/Clinical-Longformer].

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge