Fangqin Zhou

HyTAS: A Hyperspectral Image Transformer Architecture Search Benchmark and Analysis

Jul 23, 2024

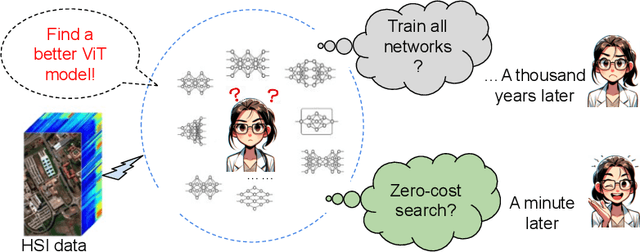

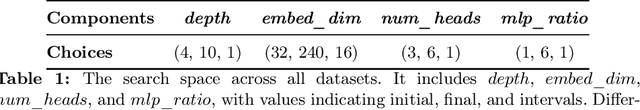

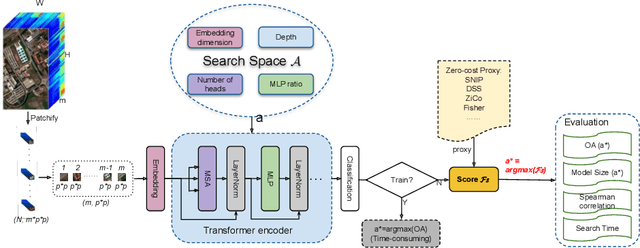

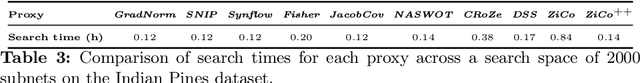

Abstract:Hyperspectral Imaging (HSI) plays an increasingly critical role in precise vision tasks within remote sensing, capturing a wide spectrum of visual data. Transformer architectures have significantly enhanced HSI task performance, while advancements in Transformer Architecture Search (TAS) have improved model discovery. To harness these advancements for HSI classification, we make the following contributions: i) We propose HyTAS, the first benchmark on transformer architecture search for Hyperspectral imaging, ii) We comprehensively evaluate 12 different methods to identify the optimal transformer over 5 different datasets, iii) We perform an extensive factor analysis on the Hyperspectral transformer search performance, greatly motivating future research in this direction. All benchmark materials are available at HyTAS.

Locality-Aware Hyperspectral Classification

Sep 04, 2023

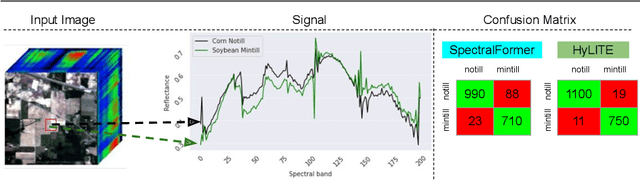

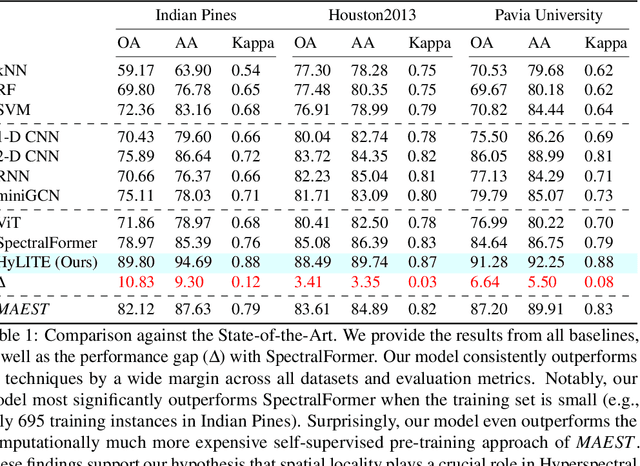

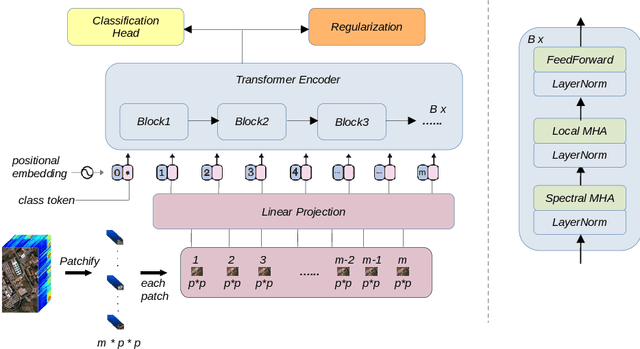

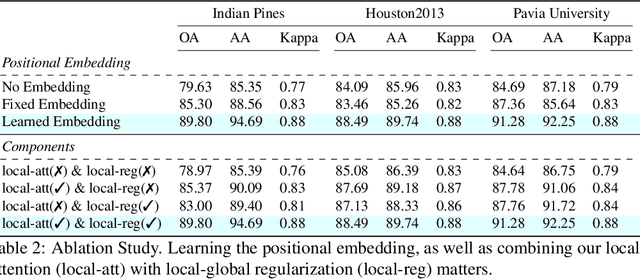

Abstract:Hyperspectral image classification is gaining popularity for high-precision vision tasks in remote sensing, thanks to their ability to capture visual information available in a wide continuum of spectra. Researchers have been working on automating Hyperspectral image classification, with recent efforts leveraging Vision-Transformers. However, most research models only spectra information and lacks attention to the locality (i.e., neighboring pixels), which may be not sufficiently discriminative, resulting in performance limitations. To address this, we present three contributions: i) We introduce the Hyperspectral Locality-aware Image TransformEr (HyLITE), a vision transformer that models both local and spectral information, ii) A novel regularization function that promotes the integration of local-to-global information, and iii) Our proposed approach outperforms competing baselines by a significant margin, achieving up to 10% gains in accuracy. The trained models and the code are available at HyLITE.

Open-Ended Learning Strategies for Learning Complex Locomotion Skills

Jun 14, 2022

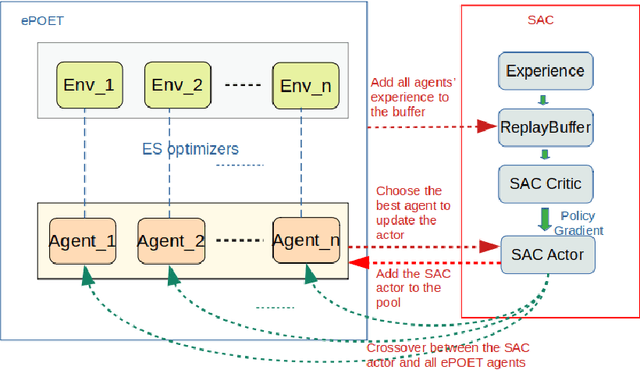

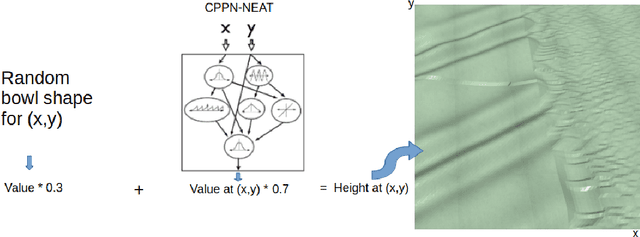

Abstract:Teaching robots to learn diverse locomotion skills under complex three-dimensional environmental settings via Reinforcement Learning (RL) is still challenging. It has been shown that training agents in simple settings before moving them on to complex settings improves the training process, but so far only in the context of relatively simple locomotion skills. In this work, we adapt the Enhanced Paired Open-Ended Trailblazer (ePOET) approach to train more complex agents to walk efficiently on complex three-dimensional terrains. First, to generate more rugged and diverse three-dimensional training terrains with increasing complexity, we extend the Compositional Pattern Producing Networks - Neuroevolution of Augmenting Topologies (CPPN-NEAT) approach and include randomized shapes. Second, we combine ePOET with Soft Actor-Critic off-policy optimization, yielding ePOET-SAC, to ensure that the agent could learn more diverse skills to solve more challenging tasks. Our experimental results show that the newly generated three-dimensional terrains have sufficient diversity and complexity to guide learning, that ePOET successfully learns complex locomotion skills on these terrains, and that our proposed ePOET-SAC approach slightly improves upon ePOET.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge