Fabio Merizzi

Evaluating Adversarial Attacks on Federated Learning for Temperature Forecasting

Dec 16, 2025

Abstract:Deep learning and federated learning (FL) are becoming powerful partners for next-generation weather forecasting. Deep learning enables high-resolution spatiotemporal forecasts that can surpass traditional numerical models, while FL allows institutions in different locations to collaboratively train models without sharing raw data, addressing efficiency and security concerns. While FL has shown promise across heterogeneous regions, its distributed nature introduces new vulnerabilities. In particular, data poisoning attacks, in which compromised clients inject manipulated training data, can degrade performance or introduce systematic biases. These threats are amplified by spatial dependencies in meteorological data, allowing localized perturbations to influence broader regions through global model aggregation. In this study, we investigate how adversarial clients distort federated surface temperature forecasts trained on the Copernicus European Regional ReAnalysis (CERRA) dataset. We simulate geographically distributed clients and evaluate patch-based and global biasing attacks on regional temperature forecasts. Our results show that even a small fraction of poisoned clients can mislead predictions across large, spatially connected areas. A global temperature bias attack from a single compromised client shifts predictions by up to -1.7 K, while coordinated patch attacks more than triple the mean squared error and produce persistent regional anomalies exceeding +3.5 K. Finally, we assess trimmed mean aggregation as a defense mechanism, showing that it successfully defends against global bias attacks (2-13% degradation) but fails against patch attacks (281-603% amplification), exposing limitations of outlier-based defenses for spatially correlated data.
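
As a rough illustration of the trimmed mean aggregation mentioned in the abstract, the sketch below implements the generic coordinate-wise rule: for each model parameter, the lowest and highest client values are dropped before averaging. The function name `trimmed_mean_aggregate`, the `trim_k` parameter, and the flat list-of-floats representation of client updates are assumptions made for this example; the abstract does not specify the authors' implementation or trimming fraction.

```python
from typing import List, Sequence


def trimmed_mean_aggregate(
    client_updates: Sequence[Sequence[float]], trim_k: int
) -> List[float]:
    """Coordinate-wise trimmed mean over client parameter vectors.

    For every parameter index, sort the values submitted by all clients,
    discard the trim_k smallest and trim_k largest, and average the rest.
    (Hypothetical helper for illustration, not the paper's code.)
    """
    n_clients = len(client_updates)
    if n_clients == 0:
        raise ValueError("at least one client update is required")
    if trim_k < 0 or 2 * trim_k >= n_clients:
        raise ValueError("trim_k must satisfy 0 <= 2 * trim_k < number of clients")

    n_params = len(client_updates[0])
    if any(len(update) != n_params for update in client_updates):
        raise ValueError("all client updates must have the same length")

    aggregated = []
    for j in range(n_params):
        values = sorted(update[j] for update in client_updates)
        kept = values[trim_k:n_clients - trim_k]
        aggregated.append(sum(kept) / len(kept))
    return aggregated


# Five clients report one parameter each; the last one is heavily biased.
updates = [[1.0], [2.0], [3.0], [4.0], [100.0]]
plain_mean = [sum(u[0] for u in updates) / len(updates)]
robust = trimmed_mean_aggregate(updates, trim_k=1)
print(plain_mean, robust)
```

In this toy case the single extreme value is trimmed away, which matches the intuition behind using the rule against a global bias. The sketch shows only the aggregation step and says nothing about the patch attacks, which the abstract reports this defense fails to stop.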

Deep Learning for Sea Surface Temperature Reconstruction under Cloud Occlusion

Dec 04, 2024

Abstract:Sea Surface Temperature (SST) is crucial for understanding Earth's oceans and climate, significantly influencing weather patterns, ocean currents, marine ecosystem health, and the global energy balance. Large-scale SST monitoring relies on satellite infrared radiation detection, but cloud cover presents a major challenge, creating extensive observational gaps and hampering our ability to fully capture large-scale ocean temperature patterns. Efforts to address these gaps in existing L4 datasets have been made, but they often exhibit notable local and seasonal biases, compromising data reliability and accuracy. To tackle this challenge, we employed deep neural networks to reconstruct cloud-covered portions of satellite imagery while preserving the integrity of observed values in cloud-free areas, using MODIS satellite-derived observations of SST. Our best-performing architecture showed significant skill improvements over established methodologies, achieving substantial reductions in error metrics when benchmarked against widely used approaches and datasets. These results underscore the potential of advanced AI techniques to enhance the completeness of satellite observations in Earth-science remote sensing, providing more accurate and reliable datasets for environmental assessments, data-driven model training, climate research, and seamless integration into model data assimilation workflows.
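
One simple way to reconstruct cloud-covered pixels while leaving cloud-free observations untouched is to composite the two fields with the cloud mask. The sketch below is an assumption about how such preservation could be done, not a description of the authors' pipeline; the function name `composite_reconstruction`, the nested-list grids, and the boolean mask convention (`True` means cloud-covered) are all hypothetical.

```python
from typing import List, Optional

Grid = List[List[Optional[float]]]


def composite_reconstruction(
    observed: Grid, predicted: Grid, cloud_mask: List[List[bool]]
) -> Grid:
    """Merge observed and model-predicted SST on a per-pixel basis.

    Where cloud_mask is False the observed value is copied unchanged;
    where it is True the model prediction fills the gap.
    (Hypothetical helper for illustration only.)
    """
    result: Grid = []
    for obs_row, pred_row, mask_row in zip(observed, predicted, cloud_mask):
        row = [
            pred if is_cloudy else obs
            for obs, pred, is_cloudy in zip(obs_row, pred_row, mask_row)
        ]
        result.append(row)
    return result


# 2x2 toy grid in kelvin; None marks pixels hidden by cloud.
observed = [[290.0, None], [None, 288.5]]
predicted = [[291.0, 289.0], [287.0, 288.0]]
cloud_mask = [[False, True], [True, False]]
filled = composite_reconstruction(observed, predicted, cloud_mask)
print(filled)
```

With this convention the output equals the observation wherever one exists, so any model error is confined to the occluded pixels.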

Wind speed super-resolution and validation: from ERA5 to CERRA via diffusion models

Jan 31, 2024

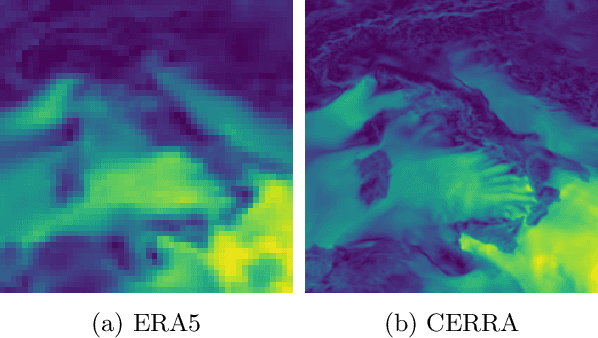

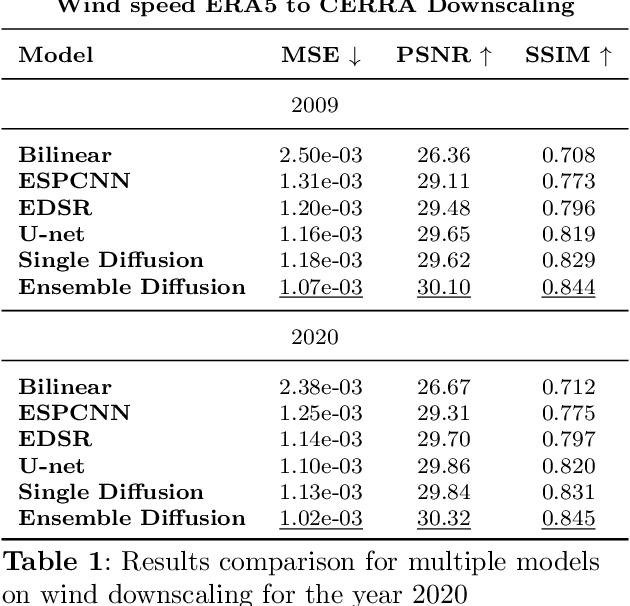

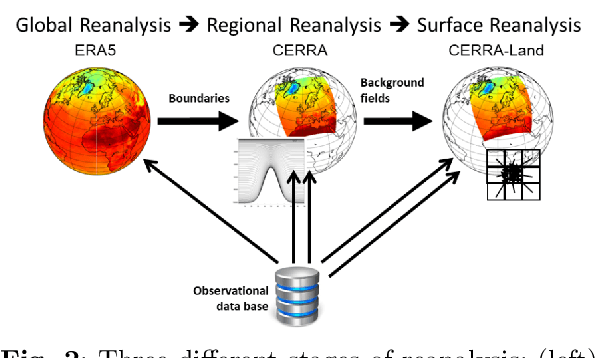

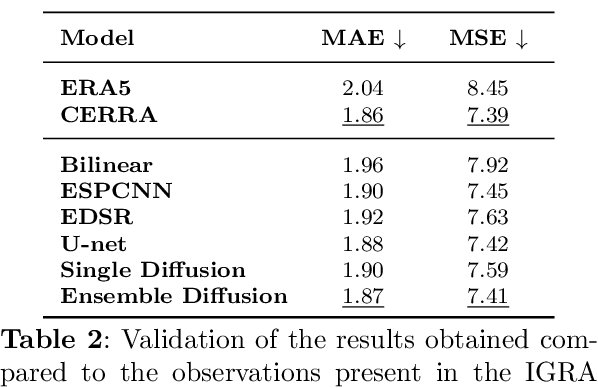

Abstract:The Copernicus Regional Reanalysis for Europe, CERRA, is a high-resolution regional reanalysis dataset for the European domain. In recent years it has shown significant utility across various climate-related tasks, ranging from forecasting and climate change research to renewable energy prediction, resource management, air quality risk assessment, and the forecasting of rare events, among others. Unfortunately, the availability of CERRA is lagging two years behind the current date, due to constraints in acquiring the requisite external data and the intensive computational demands inherent in its generation. As a solution, this paper introduces a novel method using diffusion models to approximate CERRA downscaling in a data-driven manner, without additional information. By leveraging the lower resolution ERA5 dataset, which provides boundary conditions for CERRA, we approach this as a super-resolution task. Focusing on wind speed around Italy, our model, trained on existing CERRA data, shows promising results, closely mirroring original CERRA data. Validation with in-situ observations further confirms the model's accuracy in approximating ground measurements.

Precipitation nowcasting with generative diffusion models

Aug 13, 2023

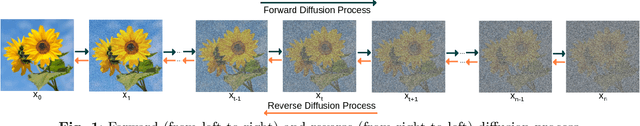

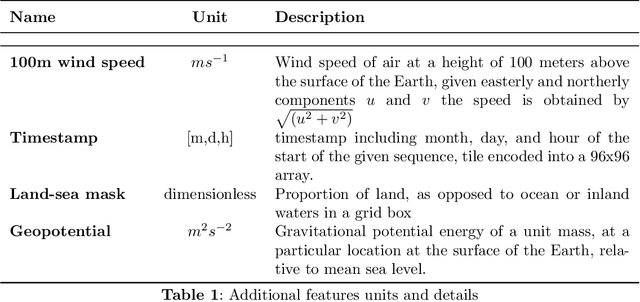

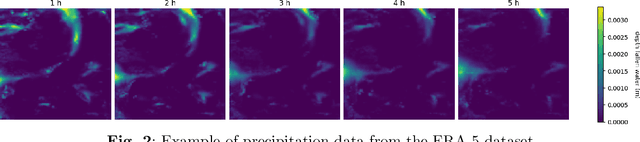

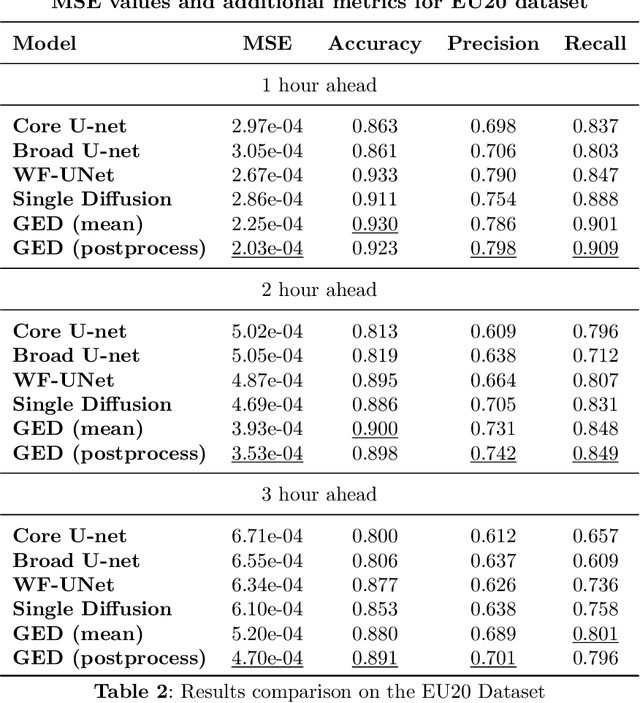

Abstract:In recent years traditional numerical methods for accurate weather prediction have been increasingly challenged by deep learning methods. Numerous historical datasets used for short and medium-range weather forecasts are typically organized into a regular spatial grid structure. This arrangement closely resembles images: each weather variable can be visualized as a map or, when considering the temporal axis, as a video. Several classes of generative models, including Generative Adversarial Networks, Variational Autoencoders, and the recent Denoising Diffusion Models, have largely proved their applicability to the next-frame prediction problem, and it is thus natural to test their performance on weather prediction benchmarks. Diffusion models are particularly appealing in this context, due to the intrinsically probabilistic nature of weather forecasting: what we are really interested in modeling is the probability distribution of weather indicators, whose expected value is the most likely prediction. In our study, we focus on a specific subset of the ERA-5 dataset, which includes hourly data pertaining to Central Europe from the years 2016 to 2021. Within this context, we examine the efficacy of diffusion models in handling the task of precipitation nowcasting. We compare our results against well-established U-Net models, as documented in the existing literature. Our proposed approach of Generative Ensemble Diffusion (GED) utilizes a diffusion model to generate a set of possible weather scenarios which are then amalgamated into a probable prediction via the use of a post-processing network. This approach substantially outperformed recent deep learning models in terms of overall performance.

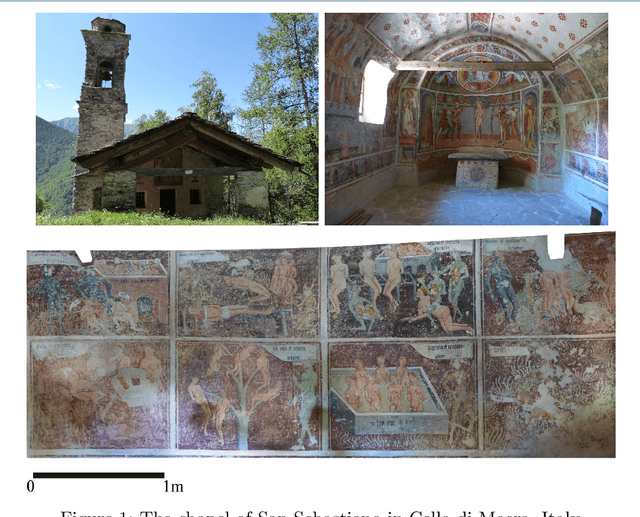

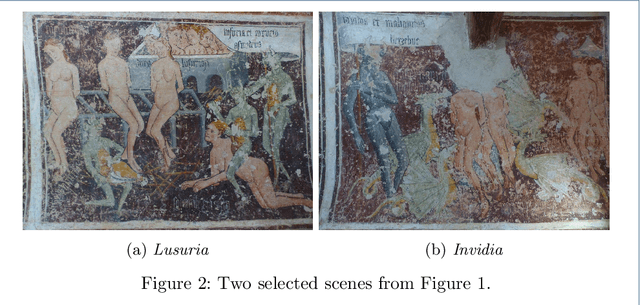

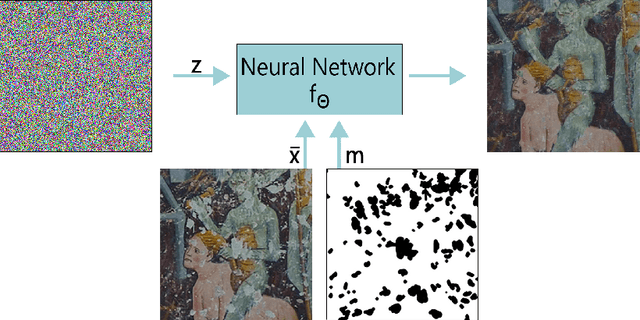

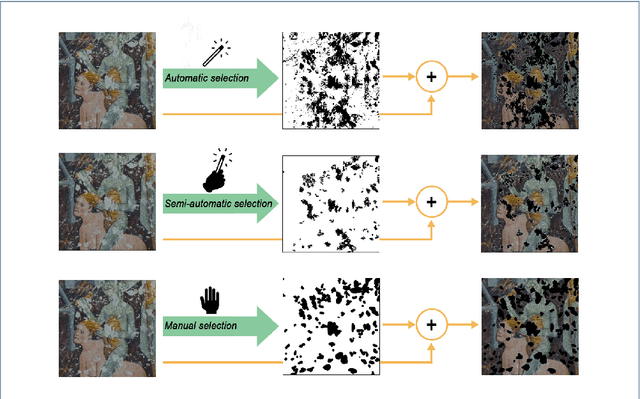

Deep image prior inpainting of ancient frescoes in the Mediterranean Alpine arc

Jun 25, 2023

Abstract:The unprecedented success of image reconstruction approaches based on deep neural networks has revolutionised both the processing and the analysis paradigms in several applied disciplines. In the field of digital humanities, the task of digital reconstruction of ancient frescoes is particularly challenging due to the scarce amount of available training data caused by ageing, wear, tear and retouching over time. To overcome these difficulties, we consider the Deep Image Prior (DIP) inpainting approach which computes appropriate reconstructions by relying on the progressive updating of an untrained convolutional neural network so as to match the reliable piece of information in the image at hand while promoting regularisation elsewhere. In comparison with state-of-the-art approaches (based on variational/PDEs and patch-based methods), DIP-based inpainting reduces artefacts and better adapts to contextual/non-local information, thus providing a valuable and effective tool for art historians. As a case study, we apply this approach to reconstruct missing image contents in a dataset of highly damaged digital images of medieval paintings located in several chapels in the Mediterranean Alpine Arc and provide a detailed description of how visible and invisible (e.g., infrared) information can be integrated for identifying and reconstructing damaged image regions.

Detecting Anomalous Cryptocurrency Transactions: an AML/CFT Application of Machine Learning-based Forensics

Jun 07, 2022

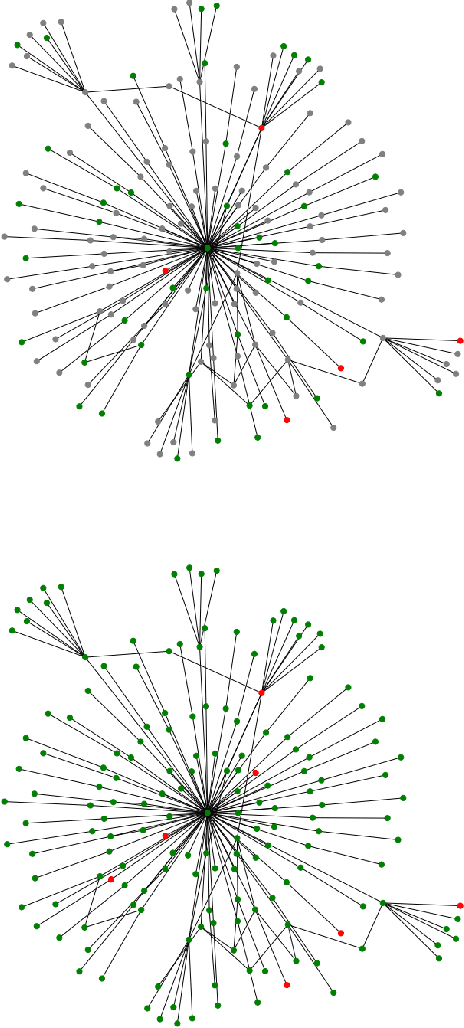

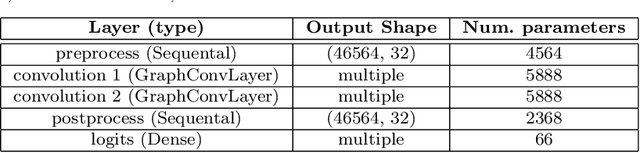

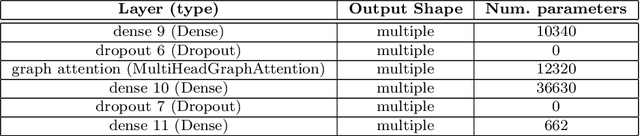

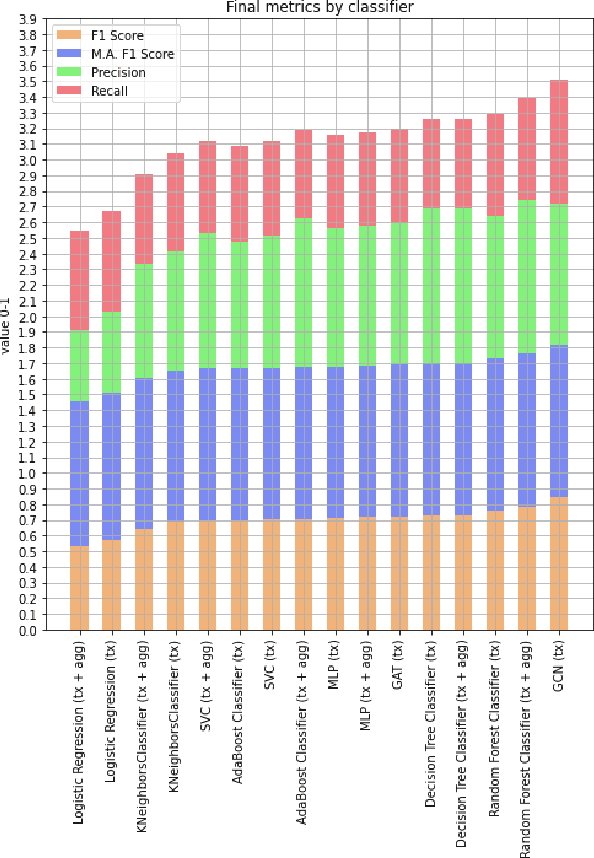

Abstract:The rise of blockchain and distributed ledger technologies (DLTs) in the financial sector has generated a socio-economic shift that triggered legal concerns and regulatory initiatives. While the anonymity of DLTs may safeguard the right to privacy, data protection and other civil liberties, lack of identification hinders accountability, investigation and enforcement. The resulting challenges extend to the rules to combat money laundering and the financing of terrorism and proliferation (AML/CFT). As law enforcement agencies and analytics companies have begun to successfully apply forensics to track currency across blockchain ecosystems, in this paper we focus on the increasing relevance of these techniques. In particular, we offer insights into the application to the Internet of Money (IoM) of machine learning, network and transaction graph analysis. After providing some background on the notion of anonymity in the IoM and on the interplay between AML/CFT and blockchain forensics, we focus on anomaly detection approaches leading to our experiments. Namely, we analyzed a real-world dataset of Bitcoin transactions represented as a directed graph network through various machine learning techniques. Our claim is that the AML/CFT domain could benefit from novel graph analysis methods in machine learning. Indeed, our findings show that the Graph Convolutional Networks (GCN) and Graph Attention Networks (GAT) neural network types represent a promising solution for AML/CFT compliance.
