En-Te Lin

Diffusion Suction Grasping with Large-Scale Parcel Dataset

Feb 11, 2025

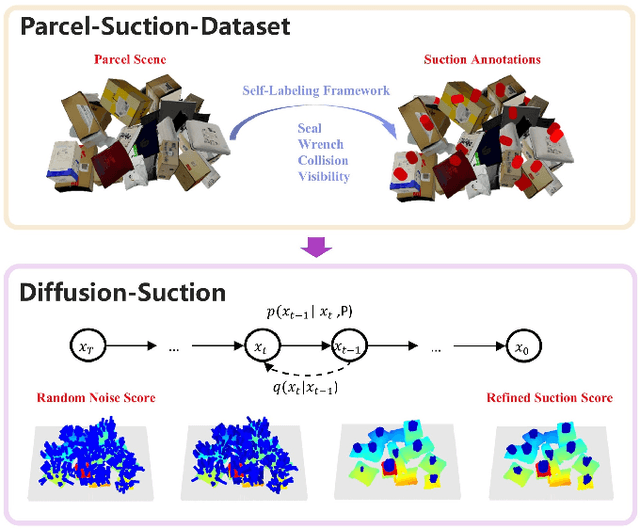

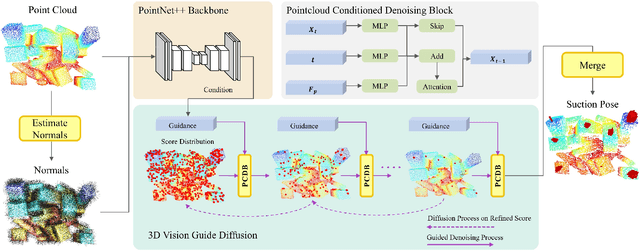

Abstract: While object suction grasping has progressed remarkably in recent years, significant challenges persist, particularly in cluttered and complex parcel handling scenarios. Two fundamental limitations hinder current approaches: (1) the lack of a comprehensive suction grasp dataset tailored for parcel manipulation tasks, and (2) insufficient adaptability to diverse object characteristics, including size variations, geometric complexity, and textural diversity. To address these challenges, we present Parcel-Suction-Dataset, a large-scale synthetic dataset containing 25 thousand cluttered scenes with 410 million precision-annotated suction grasp poses. The dataset is generated by our novel geometric sampling algorithm, which efficiently produces optimal suction grasps while incorporating both physical constraints and material properties. We further propose Diffusion-Suction, a framework that reformulates suction grasp prediction as a conditional generation task using denoising diffusion probabilistic models. Our method iteratively refines random noise into suction grasp score maps, guided by visual conditioning on point cloud observations, and thereby learns spatial point-wise affordances from our synthetic dataset. Extensive experiments demonstrate that the simple yet efficient Diffusion-Suction achieves new state-of-the-art performance compared to previous models on both Parcel-Suction-Dataset and the public SuctionNet-1Billion benchmark.
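
To make the idea of refining random noise into a per-point score map more concrete, here is a minimal sketch of a standard DDPM reverse (sampling) loop in plain Python. It is not the authors' implementation: the linear noise schedule, the function names, and especially `toy_noise_predictor` (a hypothetical stand-in for a trained, point-cloud-conditioned denoising network) are assumptions made for illustration only.

```python
import math
import random


def linear_betas(num_steps, beta_start=1e-4, beta_end=0.02):
    """Linear noise schedule (an assumption; the abstract does not specify one)."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def toy_noise_predictor(noisy_scores, point_features, t, alpha_bar_t):
    """Hypothetical stand-in for a trained denoising network.

    It pretends the clean score of each point equals its scalar feature and
    returns the noise that would explain the current noisy score. A real
    model would be a neural network conditioned on point cloud features.
    """
    return [
        (x - math.sqrt(alpha_bar_t) * f) / math.sqrt(1.0 - alpha_bar_t)
        for x, f in zip(noisy_scores, point_features)
    ]


def sample_score_map(point_features, predictor, num_steps=50, seed=0):
    """Run the DDPM reverse process to get one score per point."""
    rng = random.Random(seed)
    betas = linear_betas(num_steps)
    alphas = [1.0 - b for b in betas]

    # Cumulative products of alphas (alpha-bar in DDPM notation).
    alpha_bars = []
    running = 1.0
    for a in alphas:
        running *= a
        alpha_bars.append(running)

    # Start from pure Gaussian noise, one value per point.
    scores = [rng.gauss(0.0, 1.0) for _ in point_features]

    for t in reversed(range(num_steps)):
        eps_hat = predictor(scores, point_features, t, alpha_bars[t])
        coef = betas[t] / math.sqrt(1.0 - alpha_bars[t])
        # No noise is added at the final step (t == 0).
        sigma = math.sqrt(betas[t]) if t > 0 else 0.0
        scores = [
            (x - coef * e) / math.sqrt(alphas[t]) + sigma * rng.gauss(0.0, 1.0)
            for x, e in zip(scores, eps_hat)
        ]
    return scores


if __name__ == "__main__":
    features = [0.1, 0.5, 0.9]  # toy scalar "features", one per point
    print(sample_score_map(features, toy_noise_predictor, num_steps=20))
```

With the toy predictor, the loop recovers the feature values as scores, which only shows that the sampling arithmetic is consistent; any real behavior depends entirely on the trained network.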

SD-Net: Symmetric-Aware Keypoint Prediction and Domain Adaptation for 6D Pose Estimation In Bin-picking Scenarios

Mar 14, 2024

Abstract: Despite the success of 6D pose estimation in bin-picking scenarios, existing methods still struggle to produce accurate predictions for symmetric objects and in real-world scenarios. The primary bottlenecks are 1) ambiguous keypoints caused by object symmetries and 2) the domain gap between real and synthetic data. To address these problems, we propose a new 6D pose estimation network with symmetric-aware keypoint prediction and self-training domain adaptation (SD-Net). SD-Net builds on pointwise keypoint regression and deep Hough voting to detect keypoints reliably under clutter and occlusion. Specifically, at the keypoint prediction stage, we design a robust 3D keypoint selection strategy that considers the symmetry class of objects and their equivalent keypoints, which facilitates locating 3D keypoints even in highly occluded scenes. Additionally, we build an effective filtering algorithm on the predicted keypoints to dynamically eliminate ambiguous and outlier keypoint candidates. At the domain adaptation stage, we propose a self-training framework using a student-teacher training scheme. To carefully distinguish reliable predictions, we use a tailored heuristic for 3D geometry pseudo-labelling based on the semi-chamfer distance. On the public Siléane dataset, SD-Net achieves state-of-the-art results, obtaining an average precision of 96%. When testing learning and generalization abilities on the public Parametric dataset, SD-Net scores 8% higher than the state-of-the-art method. The code is available at https://github.com/dingthuang/SD-Net.
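
The pseudo-labelling step can be illustrated with a small sketch. The abstract does not define the semi-chamfer distance, so the code below assumes it is a one-directional chamfer distance from the observed points to the object model placed at the predicted pose. The function names, the candidate tuple layout, and the threshold are hypothetical and are not taken from the SD-Net repository.

```python
import math


def one_sided_chamfer(source, target):
    """Mean distance from each source point to its nearest target point.

    Assumption: this one-directional form stands in for the paper's
    "semi-chamfer distance", which the abstract does not define.
    """
    total = 0.0
    for p in source:
        total += min(math.dist(p, q) for q in target)
    return total / len(source)


def select_pseudo_labels(candidates, threshold):
    """Keep teacher predictions whose posed model explains the observation.

    candidates: list of (observed_points, posed_model_points, pose) tuples,
    where posed_model_points is the object model already transformed by the
    predicted pose. Returns the poses accepted as pseudo labels.
    """
    kept = []
    for observed, posed_model, pose in candidates:
        if one_sided_chamfer(observed, posed_model) <= threshold:
            kept.append(pose)
    return kept


if __name__ == "__main__":
    model = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
    candidates = [
        ([(0.01, 0.0, 0.0), (1.0, 0.01, 0.0)], model, "pose_a"),  # close fit
        ([(0.5, 0.5, 0.5)], model, "pose_b"),                     # poor fit
    ]
    print(select_pseudo_labels(candidates, threshold=0.05))
```

Measuring only from observed points to the model is a natural fit for occluded scenes, because unobserved parts of the model do not penalize an otherwise correct pose; whether SD-Net uses exactly this direction is not stated in the abstract.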

NormNet: Scale Normalization for 6D Pose Estimation in Stacked Scenarios

Nov 15, 2023

Abstract: Existing Object Pose Estimation (OPE) methods for stacked scenarios are not robust to changes in object scale. This paper proposes a new 6DoF OPE network (NormNet) for objects of different scales in stacked scenarios. Specifically, each object's scale is first learned with point-wise regression. Then, all objects in the stacked scenario are normalized to the same scale through semantic segmentation and affine transformation. Finally, they are fed into a shared pose estimator to recover their 6D poses. In addition, we introduce a new Sim-to-Real transfer pipeline that combines style transfer and domain randomization. This improves NormNet's performance on real data even when it is trained only on synthetic data. Extensive experiments demonstrate that the proposed method achieves state-of-the-art performance on public benchmarks and on the MultiScale dataset we constructed. Real-world experiments show that our method can robustly estimate the 6D pose of objects at different scales.
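
The normalization step described above can be sketched as follows. This is an illustration under stated assumptions, not NormNet's code: it assumes per-point scale predictions are averaged per segmented object, and that the affine transformation is a centroid shift followed by isotropic scaling. The function name and the returned dictionary layout are hypothetical.

```python
from collections import defaultdict


def normalize_instances(points, instance_ids, point_scales):
    """Bring every segmented object to a common scale.

    points:       list of (x, y, z) tuples for the whole scene
    instance_ids: per-point object label (from segmentation)
    point_scales: per-point predicted scale (from point-wise regression)

    Assumption: each object is centered on its centroid and divided by the
    mean of its predicted per-point scales. The scale and centroid are kept
    so a pose estimated in normalized space can be mapped back.
    """
    groups = defaultdict(list)
    for idx, inst in enumerate(instance_ids):
        groups[inst].append(idx)

    normalized = {}
    for inst, indices in groups.items():
        n = len(indices)
        scale = sum(point_scales[i] for i in indices) / n
        centroid = tuple(sum(points[i][k] for i in indices) / n for k in range(3))
        normalized[inst] = {
            "scale": scale,
            "centroid": centroid,
            "points": [
                tuple((points[i][k] - centroid[k]) / scale for k in range(3))
                for i in indices
            ],
        }
    return normalized


if __name__ == "__main__":
    # Two objects with the same shape, the second twice as large.
    points = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (10.0, 0.0, 0.0), (12.0, 0.0, 0.0)]
    instance_ids = [0, 0, 1, 1]
    point_scales = [1.0, 1.0, 2.0, 2.0]
    for inst, data in normalize_instances(points, instance_ids, point_scales).items():
        print(inst, data["points"])
```

After normalization the two toy objects have identical coordinates, which is the property that lets a single shared pose estimator handle objects of different sizes.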
