Emma Hughson

Good Things Come in Trees: Emotion and Context Aware Behaviour Trees for Ethical Robotic Decision-Making

May 10, 2024Abstract:Emotions guide our decision making process and yet have been little explored in practical ethical decision making scenarios. In this challenge, we explore emotions and how they can influence ethical decision making in a home robot context: which fetch requests should a robot execute, and why or why not? We discuss, in particular, two aspects of emotion: (1) somatic markers: objects to be retrieved are tagged as negative (dangerous, e.g. knives or mind-altering, e.g. medicine with overdose potential), providing a quick heuristic for where to focus attention to avoid the classic Frame Problem of artificial intelligence, (2) emotion inference: users' valence and arousal levels are taken into account in defining how and when a robot should respond to a human's requests, e.g. to carefully consider giving dangerous items to users experiencing intense emotions. Our emotion-based approach builds a foundation for the primary consideration of Safety, and is complemented by policies that support overriding based on Context (e.g. age of user, allergies) and Privacy (e.g. administrator settings). Transparency is another key aspect of our solution. Our solution is defined using behaviour trees, towards an implementable design that can provide reasoning information in real-time.

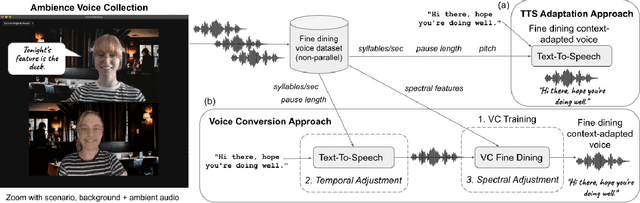

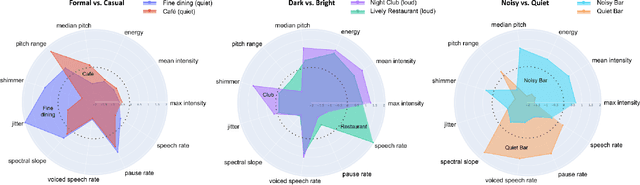

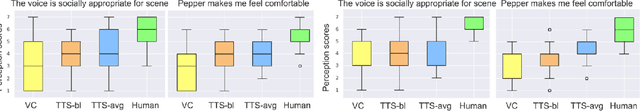

Read the Room: Adapting a Robot's Voice to Ambient and Social Contexts

May 10, 2022

Abstract:Adapting one's voice to different ambient environments and social interactions is required for human social interaction. In robotics, the ability to recognize speech in noisy and quiet environments has received significant attention, but considering ambient cues in the production of social speech features has been little explored. Our research aims to modify a robot's speech to maximize acceptability in various social and acoustic contexts, starting with a use case for service robots in varying restaurants. We created an original dataset collected over Zoom with participants conversing in scripted and unscripted tasks given 7 different ambient sounds and background images. Voice conversion methods, in addition to altered Text-to-Speech that matched ambient specific data, were used for speech synthesis tasks. We conducted a subjective perception study that showed humans prefer synthetic speech that matches ambience and social context, ultimately preferring more human-like voices. This work provides three solutions to ambient and socially appropriate synthetic voices: (1) a novel protocol to collect real contextual audio voice data, (2) tools and directions to manipulate robot speech for appropriate social and ambient specific interactions, and (3) insight into voice conversion's role in flexibly altering robot speech to match different ambient environments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge