Elena Pagani

PLIERS: a Popularity-Based Recommender System for Content Dissemination in Online Social Networks

Jul 06, 2023

Abstract:In this paper, we propose a novel tag-based recommender system called PLIERS, which relies on the assumption that users are mainly interested in items and tags with similar popularity to those they already own. PLIERS is aimed at reaching a good tradeoff between algorithmic complexity and the level of personalization of recommended items. To evaluate PLIERS, we performed a set of experiments on real OSN datasets, demonstrating that it outperforms state-of-the-art solutions in terms of personalization, relevance, and novelty of recommendations.

Transfer Learning for the Efficient Detection of COVID-19 from Smartphone Audio Data

Jul 06, 2023

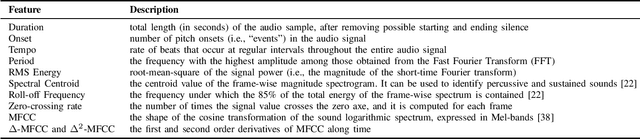

Abstract:Disease detection from smartphone data represents an open research challenge in mobile health (m-health) systems. COVID-19 and its respiratory symptoms are an important case study in this area and their early detection is a potential real instrument to counteract the pandemic situation. The efficacy of this solution mainly depends on the performances of AI algorithms applied to the collected data and their possible implementation directly on the users' mobile devices. Considering these issues, and the limited amount of available data, in this paper we present the experimental evaluation of 3 different deep learning models, compared also with hand-crafted features, and of two main approaches of transfer learning in the considered scenario: both feature extraction and fine-tuning. Specifically, we considered VGGish, YAMNET, and L\textsuperscript{3}-Net (including 12 different configurations) evaluated through user-independent experiments on 4 different datasets (13,447 samples in total). Results clearly show the advantages of L\textsuperscript{3}-Net in all the experimental settings as it overcomes the other solutions by 12.3\% in terms of Precision-Recall AUC as features extractor, and by 10\% when the model is fine-tuned. Moreover, we note that to fine-tune only the fully-connected layers of the pre-trained models generally leads to worse performances, with an average drop of 6.6\% with respect to feature extraction. %highlighting the need for further investigations. Finally, we evaluate the memory footprints of the different models for their possible applications on commercial mobile devices.

A Transfer Learning and Explainable Solution to Detect mpox from Smartphones images

May 29, 2023

Abstract:In recent months, the monkeypox (mpox) virus -- previously endemic in a limited area of the world -- has started spreading in multiple countries until being declared a ``public health emergency of international concern'' by the World Health Organization. The alert was renewed in February 2023 due to a persisting sustained incidence of the virus in several countries and worries about possible new outbreaks. Low-income countries with inadequate infrastructures for vaccine and testing administration are particularly at risk. A symptom of mpox infection is the appearance of skin rashes and eruptions, which can drive people to seek medical advice. A technology that might help perform a preliminary screening based on the aspect of skin lesions is the use of Machine Learning for image classification. However, to make this technology suitable on a large scale, it should be usable directly on mobile devices of people, with a possible notification to a remote medical expert. In this work, we investigate the adoption of Deep Learning to detect mpox from skin lesion images. The proposal leverages Transfer Learning to cope with the scarce availability of mpox image datasets. As a first step, a homogenous, unpolluted, dataset is produced by manual selection and preprocessing of available image data. It will also be released publicly to researchers in the field. Then, a thorough comparison is conducted amongst several Convolutional Neural Networks, based on a 10-fold stratified cross-validation. The best models are then optimized through quantization for use on mobile devices; measures of classification quality, memory footprint, and processing times validate the feasibility of our proposal. Additionally, the use of eXplainable AI is investigated as a suitable instrument to both technically and clinically validate classification outcomes.

L3-Net Deep Audio Embeddings to Improve COVID-19 Detection from Smartphone Data

May 16, 2022

Abstract:Smartphones and wearable devices, along with Artificial Intelligence, can represent a game-changer in the pandemic control, by implementing low-cost and pervasive solutions to recognize the development of new diseases at their early stages and by potentially avoiding the rise of new outbreaks. Some recent works show promise in detecting diagnostic signals of COVID-19 from voice and coughs by using machine learning and hand-crafted acoustic features. In this paper, we decided to investigate the capabilities of the recently proposed deep embedding model L3-Net to automatically extract meaningful features from raw respiratory audio recordings in order to improve the performances of standard machine learning classifiers in discriminating between COVID-19 positive and negative subjects from smartphone data. We evaluated the proposed model on 3 datasets, comparing the obtained results with those of two reference works. Results show that the combination of L3-Net with hand-crafted features overcomes the performance of the other works of 28.57% in terms of AUC in a set of subject-independent experiments. This result paves the way to further investigation on different deep audio embeddings, also for the automatic detection of different diseases.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge