Elad Vromen

Language Models as Semiotic Machines: Reconceptualizing AI Language Systems through Structuralist and Post-Structuralist Theories of Language

Oct 16, 2024

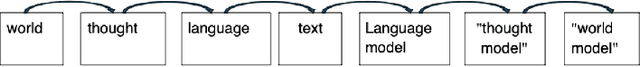

Abstract: This paper proposes a novel framework for understanding large language models (LLMs) by reconceptualizing them as semiotic machines rather than as imitations of human cognition. Drawing on structuralist and post-structuralist theories of language, specifically the works of Ferdinand de Saussure and Jacques Derrida, I argue that LLMs should be understood as models of language itself, aligning with Derrida's concept of 'writing' (l'écriture). The paper is structured in three parts. First, I lay the theoretical groundwork by explaining how the word2vec embedding algorithm operates within Saussure's framework of language as a relational system of signs. Second, I apply Derrida's critique of Saussure to position 'writing' as the object modeled by LLMs, offering a view of the machine's 'mind' as a statistical approximation of sign behavior. Finally, the third part addresses how modern LLMs reflect post-structuralist notions of unfixed meaning, arguing that the 'next-token generation' mechanism effectively captures the dynamic nature of meaning. By reconceptualizing LLMs as semiotic machines rather than cognitive models, this framework provides an alternative lens through which to assess the strengths and limitations of LLMs and opens new avenues for future research.
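
The first part of the argument reads word2vec through Saussure's view of meaning as relational. As a minimal illustrative sketch (not the paper's own code), the skip-gram variant of word2vec is trained on (target, context) pairs drawn from a sliding window, so each word's learned vector is shaped only by the words that surround it. The function name `skipgram_pairs` and the window size are hypothetical choices; full word2vec training additionally uses techniques such as negative sampling, which are omitted here.

```python
from typing import List, Tuple


def skipgram_pairs(tokens: List[str], window: int = 2) -> List[Tuple[str, str]]:
    """Return the (target, context) pairs a skip-gram model would be trained on."""
    pairs: List[Tuple[str, str]] = []
    for i, target in enumerate(tokens):
        # The context is every token within `window` positions on either side,
        # excluding the target itself; clamp the range at the sentence edges.
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i:
                pairs.append((target, tokens[j]))
    return pairs


if __name__ == "__main__":
    sentence = "the cat sat on the mat".split()
    # Each word is characterized purely by its neighbours, never by a referent.
    for pair in skipgram_pairs(sentence, window=1):
        print(pair)
```

Note that "the" appears with different neighbours in different positions; word2vec pools all of these pairs, so the word's vector reflects its differences from and relations to other signs across the corpus.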

Generating Piano Practice Policy with a Gaussian Process

Jun 07, 2024

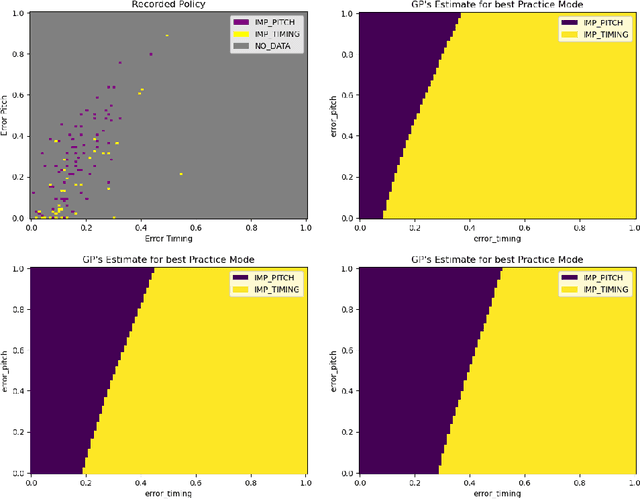

Abstract: Learning to play a piece on the piano typically consists of progressing through a series of practice units, each focusing on an individual dimension of the skill: the so-called practice modes. Practice modes in learning to play music comprise a particularly large set of possibilities, such as hand coordination, posture, articulation, the ability to read a music score, and correct timing or pitch. Self-guided practice is known to be suboptimal, and no model yet exists that schedules practice optimally to maximize a learner's progress. Because each of us learns differently and there are many possible piano practice tasks and methods, the set of practice modes should be dynamically adapted to the individual learner, a process typically guided by a teacher. However, having a human teacher guide individual practice is not always feasible, since it is time-consuming and expensive, and teachers are often unavailable. In this work, we present a modeling framework that guides the learner through the learning process by choosing practice modes generated by a policy model. To this end, we present a computational architecture building on a Gaussian process that incorporates 1) the learner state, 2) a policy that selects a suitable practice mode, 3) performance evaluation, and 4) expert knowledge. The proposed policy model is trained to approximate the expert-learner interaction during a practice session. In future work, we will test different Bayesian optimization techniques, e.g., different acquisition functions, and evaluate their effect on learning progress.
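
To make the Gaussian-process idea concrete, here is a minimal sketch, under stated assumptions, of how a GP could score candidate practice modes and pick the next one. The abstract does not specify the kernel, the feature encoding, or the acquisition function, so everything below is hypothetical: each candidate is an assumed numeric feature vector, the observed targets are assumed progress scores, the kernel is a squared-exponential (RBF) kernel, and upper confidence bound (UCB) is swapped in as one example acquisition function. The names `rbf_kernel`, `gp_posterior`, and `select_practice_mode` are illustrative, and this sketch does not reproduce the paper's training on expert-learner interaction.

```python
import numpy as np


def rbf_kernel(A, B, length_scale=1.0, variance=1.0):
    """Squared-exponential kernel k(a, b) = variance * exp(-||a - b||^2 / (2 l^2))."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return variance * np.exp(-0.5 * np.maximum(sq, 0.0) / length_scale**2)


def gp_posterior(X_train, y_train, X_test, noise=1e-2):
    """Posterior mean and variance of a zero-mean GP at X_test (Cholesky-based)."""
    K = rbf_kernel(X_train, X_train) + noise * np.eye(len(X_train))
    K_s = rbf_kernel(X_train, X_test)
    K_ss = rbf_kernel(X_test, X_test)
    L = np.linalg.cholesky(K)
    # alpha = K^{-1} y, computed via two solves against the Cholesky factor.
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.diag(K_ss) - np.sum(v**2, axis=0)
    return mean, np.maximum(var, 0.0)


def select_practice_mode(X_train, y_train, candidates, beta=2.0):
    """Hypothetical policy: pick the candidate mode with the highest UCB score."""
    mean, var = gp_posterior(X_train, y_train, candidates)
    ucb = mean + beta * np.sqrt(var)  # trade off expected progress vs. uncertainty
    return int(np.argmax(ucb)), ucb


if __name__ == "__main__":
    # Hypothetical 1-D encodings of three practice modes; two have been tried.
    X_seen = np.array([[0.0], [1.0]])
    progress = np.array([0.1, 0.2])  # assumed progress scores for those sessions
    modes = np.array([[0.0], [1.0], [5.0]])
    best, scores = select_practice_mode(X_seen, progress, modes)
    print(best, np.round(scores, 3))
```

With these toy numbers, the untried mode wins because its high posterior uncertainty outweighs the modest progress observed for the tried modes; a smaller `beta` would favour exploiting known modes instead. Swapping the `ucb` line for another criterion (for example, expected improvement) is the kind of acquisition-function comparison the abstract lists as future work.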
