Douglas Gillespie

Variational autoencoders stabilise TCN performance when classifying weakly labelled bioacoustics data

Oct 22, 2024

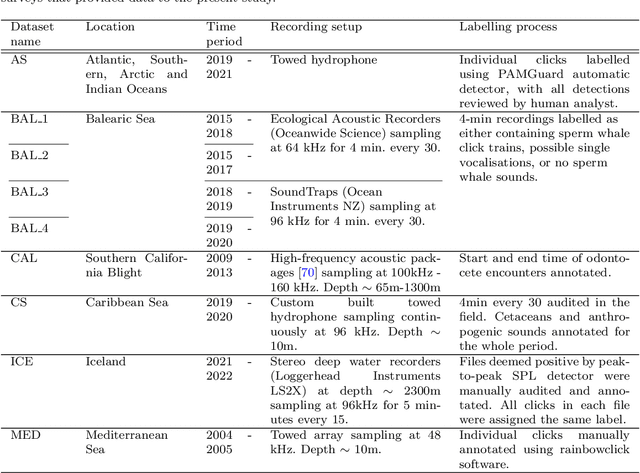

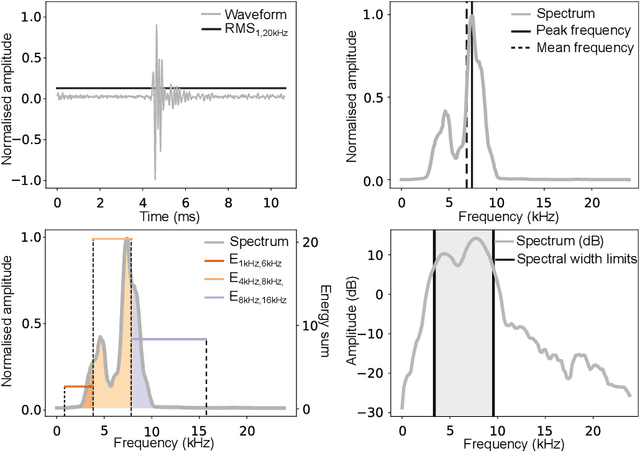

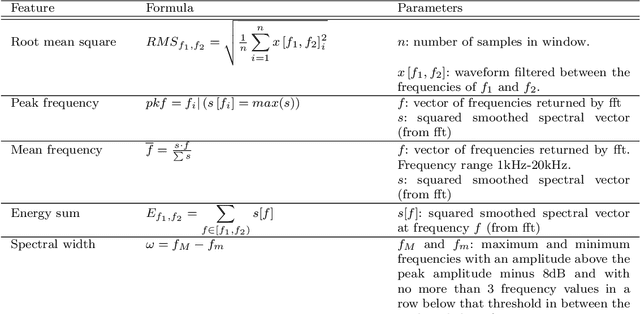

Abstract: Passive acoustic monitoring (PAM) data is often weakly labelled: it is audited only for the presence or absence of detections over timescales of minutes to hours. Moreover, this data varies greatly from one deployment to the next, owing to differences in ambient noise and in signals across sources and geographies. This study proposes a two-step solution to leverage weakly annotated data for training deep learning (DL) detection models. Our case study involves binary classification of the presence/absence of sperm whale (*Physeter macrocephalus*) click trains in 4-minute-long recordings from a dataset comprising diverse sources and deployment conditions, chosen to maximise generalisability. We tested methods for extracting acoustic features from lengthy audio segments and integrated Temporal Convolutional Networks (TCNs) trained on the extracted features for sequence classification. For feature extraction, we introduced a new approach using Variational AutoEncoders (VAEs) to extract information from both waveforms and spectrograms, which eliminates the need for manual threshold setting or time-consuming strong labelling. For classification, TCNs were trained separately on sequences of either VAE embeddings or handpicked acoustic features extracted from the waveform and spectrogram representations using classical methods, to compare the efficacy of the two approaches. The TCN demonstrated robust classification capabilities on a validation set, achieving accuracies exceeding 85% on 4-minute acoustic recordings. Notably, TCNs trained on handpicked acoustic features showed greater variability in performance across recordings from diverse deployment conditions, whereas those trained on VAE embeddings performed more consistently, highlighting the robust transferability of VAEs for feature extraction across different deployment conditions.
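
The sequence classifier in this abstract is a TCN over per-frame features. The sketch below, in plain Python with no deep learning library, shows only the core mechanics of such a network as it is commonly described: stacked causal convolutions with exponentially growing dilation, residual connections, global average pooling over time and a sigmoid output for presence/absence. It is not the authors' model. The frame rate, channel count, kernel size, dilations and every function name are hypothetical. Each residual block is simplified to a single convolution, and the weights are random rather than trained, so the printed probability carries no meaning. The point is how the receptive field, 1 + (K - 1) times the sum of the dilations, can cover a long recording cheaply.

```python
import math
import random


def relu(vec):
    return [max(0.0, a) for a in vec]


def causal_dilated_conv(seq, weights, bias, dilation):
    """Causal 1-D convolution over a sequence of feature vectors.

    seq: T frames, each a list of C_in floats (e.g. one VAE embedding per frame).
    weights: K taps, each a C_out x C_in matrix; tap i looks i * dilation frames back.
    Frames before the start are treated as zeros, so output t never sees frames > t.
    """
    out = []
    for t in range(len(seq)):
        acc = list(bias)
        for i, tap in enumerate(weights):
            src = t - i * dilation
            if src < 0:
                continue  # implicit left zero padding keeps the layer causal
            x = seq[src]
            for o, row in enumerate(tap):
                acc[o] += sum(w * xc for w, xc in zip(row, x))
        out.append(acc)
    return out


def random_layer(kernel_size, channels, rng, scale=0.1):
    # Hypothetical untrained weights, for illustrating shapes only.
    weights = [[[rng.gauss(0.0, scale) for _ in range(channels)]
                for _ in range(channels)] for _ in range(kernel_size)]
    return weights, [0.0] * channels


def tcn_presence_probability(features, layers, head_w, head_b):
    h = features
    for dilation, (weights, bias) in layers:
        y = [relu(v) for v in causal_dilated_conv(h, weights, bias, dilation)]
        h = [[a + b for a, b in zip(yt, ht)] for yt, ht in zip(y, h)]  # residual add
    # Global average pooling over time: one vector summarising the whole recording.
    pooled = [sum(frame[c] for frame in h) / len(h) for c in range(len(h[0]))]
    logit = head_b + sum(w * p for w, p in zip(head_w, pooled))
    return 1.0 / (1.0 + math.exp(-logit))  # probability that click trains are present


def receptive_field(kernel_size, dilations):
    # Frames visible to the last output: 1 + (K - 1) * sum of dilations.
    return 1 + (kernel_size - 1) * sum(dilations)


rng = random.Random(0)
C, K, dilations = 8, 3, [1, 2, 4]
layers = [(d, random_layer(K, C, rng)) for d in dilations]
head_w = [rng.gauss(0.0, 0.1) for _ in range(C)]
# Assumed framing: 240 frames, e.g. one feature vector per second of a 4-minute file.
features = [[rng.random() for _ in range(C)] for _ in range(240)]
p = tcn_presence_probability(features, layers, head_w, 0.0)
print(f"p(present) = {p:.3f}, receptive field = {receptive_field(K, dilations)} frames")
```

Doubling the dilation at each layer makes the receptive field grow exponentially with depth while the cost per layer stays constant, which is one reason TCNs are a reasonable fit for sequences as long as a 4-minute recording.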

Learning Stage-wise GANs for Whistle Extraction in Time-Frequency Spectrograms

Apr 05, 2023

Abstract: Whistle contour extraction aims to derive animal whistles from time-frequency spectrograms as polylines. For toothed whales, whistle extraction results can serve as the basis for analyzing animal abundance, species identity, and social activities. Over the last few decades, as long-term recording systems have become affordable, automated whistle extraction algorithms have been proposed to process large volumes of recording data. Recently, a deep learning-based method demonstrated superior performance in extracting whistles under varying noise conditions. However, training such networks requires a large amount of labor-intensive annotation, which is not available for many species. To overcome this limitation, we present a framework of stage-wise generative adversarial networks (GANs), which compiles new whistle data suitable for deep model training via three stages: generation of background noise in the spectrogram, generation of whistle contours, and generation of whistle signals. By separating the generation of different components in the samples, our framework composes visually promising whistle data and labels even when little expert-annotated data is available. Regardless of the amount of human-annotated data, the proposed data augmentation framework leads to a consistent improvement in the performance of the whistle extraction model, with a maximum increase of 1.69 in the whistle extraction mean F1-score. Our stage-wise GAN also outperforms a single GAN in improving whistle extraction models with augmented data. The data and code will be available at https://github.com/Paul-LiPu/CompositeGAN_WhistleAugment.
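
The central idea of the stage-wise framework is that each component of a training sample is generated separately, so the whistle's label is known exactly at the moment the sample is composed. The sketch below illustrates only that composition step, under explicit assumptions. Each of the three GANs is replaced by a trivial random stand-in: Gaussian background noise, a sinusoidal contour with one frequency bin per time column, and added energy along that contour. The function names, spectrogram size and SNR value are hypothetical and are not taken from the CompositeGAN_WhistleAugment code.

```python
import math
import random


def sample_background(n_freq, n_time, rng):
    # Stand-in for stage 1 (background-noise GAN): Gaussian noise per time-frequency bin.
    return [[rng.gauss(0.0, 1.0) for _ in range(n_time)] for _ in range(n_freq)]


def sample_contour(n_freq, n_time, rng):
    # Stand-in for stage 2 (contour GAN): a smooth polyline, one frequency bin per time column.
    start = rng.randrange(n_time // 4)
    length = n_time // 2
    f0 = rng.uniform(0.3, 0.7) * (n_freq - 1)
    depth = rng.uniform(0.05, 0.2) * n_freq
    contour = []
    for j in range(length):
        f = f0 + depth * math.sin(2 * math.pi * j / length)
        contour.append((start + j, min(n_freq - 1, max(0, round(f)))))
    return contour


def render_whistle(background, contour, snr, rng):
    # Stand-in for stage 3 (signal GAN): add energy along the contour on top of the noise.
    n_freq, n_time = len(background), len(background[0])
    sample = [row[:] for row in background]
    label = [[0] * n_time for _ in range(n_freq)]
    for t, f in contour:
        sample[f][t] += snr * rng.uniform(0.8, 1.2)
        label[f][t] = 1  # the label comes for free: it is the contour we drew
    return sample, label


rng = random.Random(1)
n_freq, n_time = 64, 100
background = sample_background(n_freq, n_time, rng)
contour = sample_contour(n_freq, n_time, rng)
sample, label = render_whistle(background, contour, snr=6.0, rng=rng)
print(len(contour), sum(map(sum, label)))
```

Because the label mask is written from the same contour that places the signal, sample and label are aligned bin for bin. Swapping any stand-in for a trained generator leaves that guarantee intact.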
