Dorian F. Henning

GloPro: Globally-Consistent Uncertainty-Aware 3D Human Pose Estimation & Tracking in the Wild

Sep 20, 2023

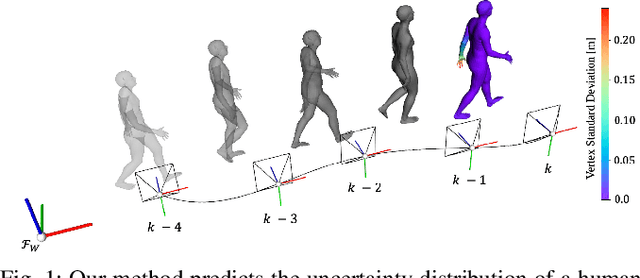

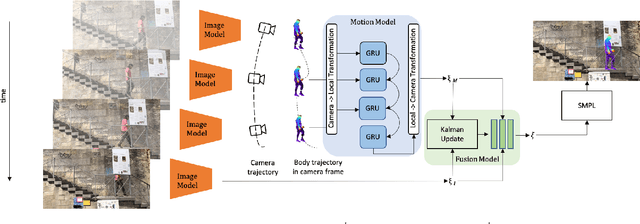

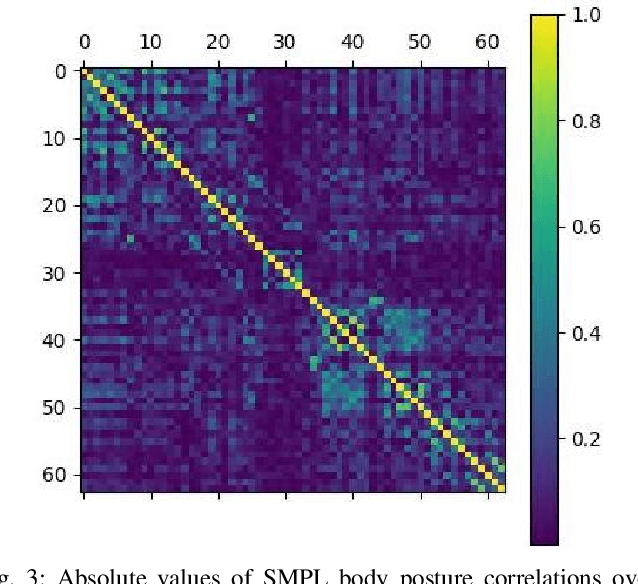

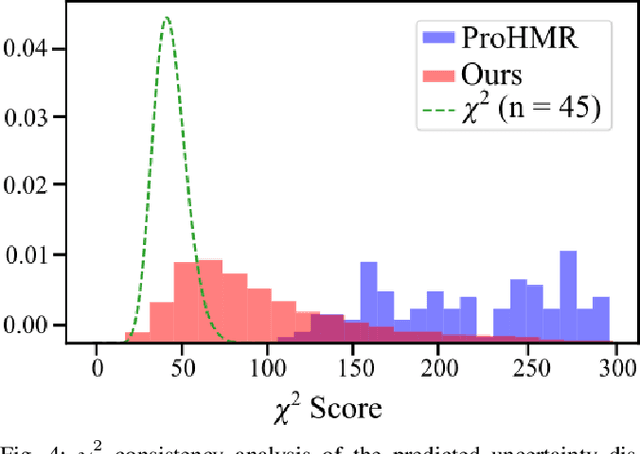

Abstract:An accurate and uncertainty-aware 3D human body pose estimation is key to enabling truly safe but efficient human-robot interactions. Current uncertainty-aware methods in 3D human pose estimation are limited to predicting the uncertainty of the body posture, while effectively neglecting the body shape and root pose. In this work, we present GloPro, which to the best of our knowledge the first framework to predict an uncertainty distribution of a 3D body mesh including its shape, pose, and root pose, by efficiently fusing visual clues with a learned motion model. We demonstrate that it vastly outperforms state-of-the-art methods in terms of human trajectory accuracy in a world coordinate system (even in the presence of severe occlusions), yields consistent uncertainty distributions, and can run in real-time.

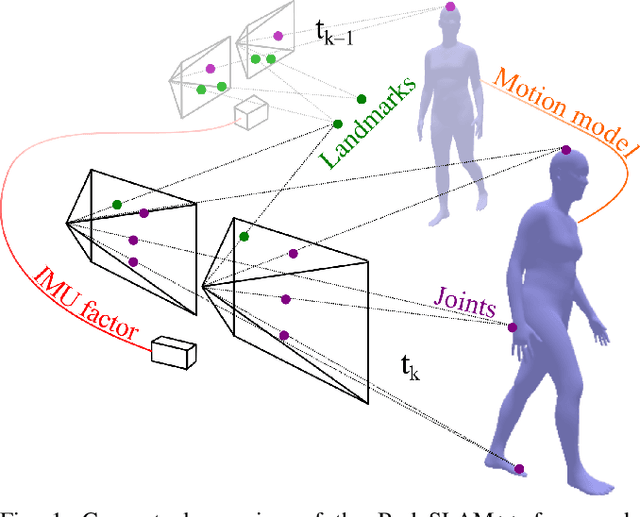

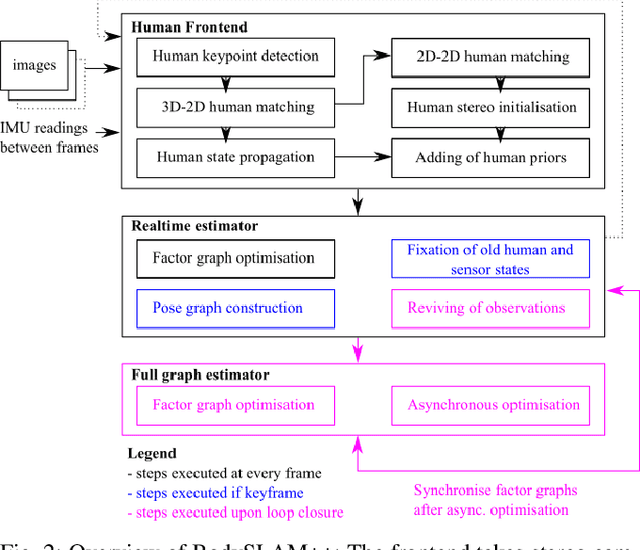

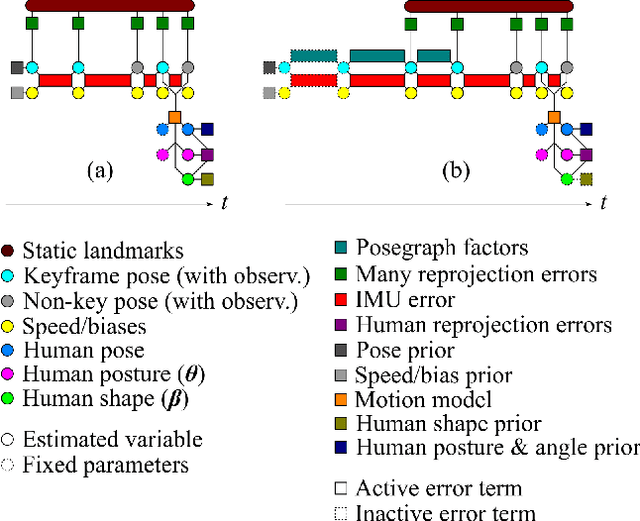

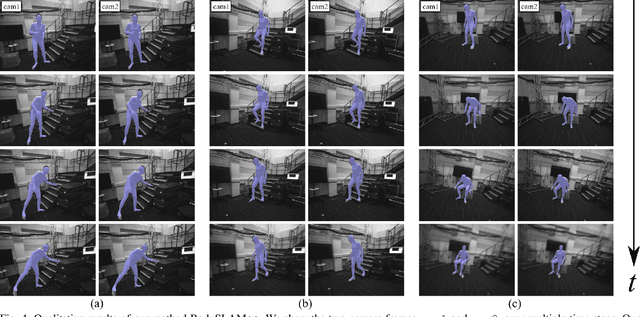

BodySLAM++: Fast and Tightly-Coupled Visual-Inertial Camera and Human Motion Tracking

Sep 03, 2023

Abstract:Robust, fast, and accurate human state - 6D pose and posture - estimation remains a challenging problem. For real-world applications, the ability to estimate the human state in real-time is highly desirable. In this paper, we present BodySLAM++, a fast, efficient, and accurate human and camera state estimation framework relying on visual-inertial data. BodySLAM++ extends an existing visual-inertial state estimation framework, OKVIS2, to solve the dual task of estimating camera and human states simultaneously. Our system improves the accuracy of both human and camera state estimation with respect to baseline methods by 26% and 12%, respectively, and achieves real-time performance at 15+ frames per second on an Intel i7-model CPU. Experiments were conducted on a custom dataset containing both ground truth human and camera poses collected with an indoor motion tracking system.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge