Dongzeng Tan

Unleashing the Power of Emojis in Texts via Self-supervised Graph Pre-Training

Sep 26, 2024

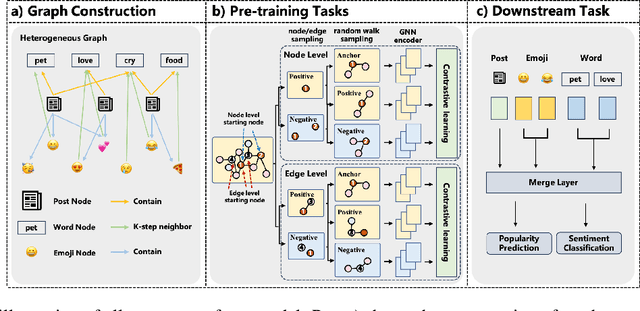

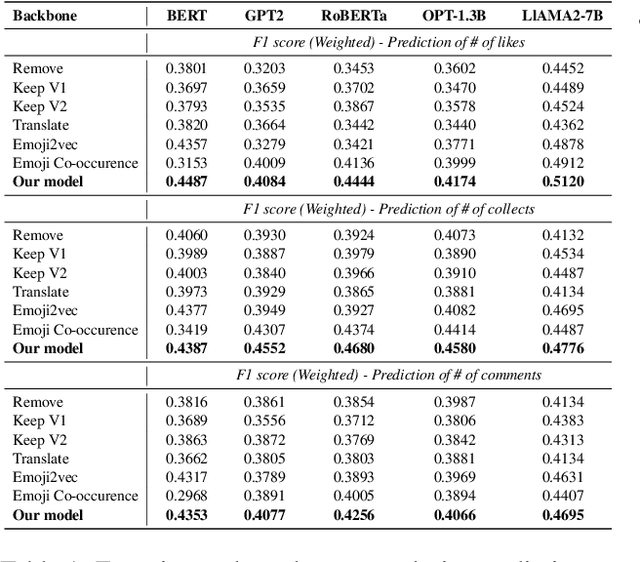

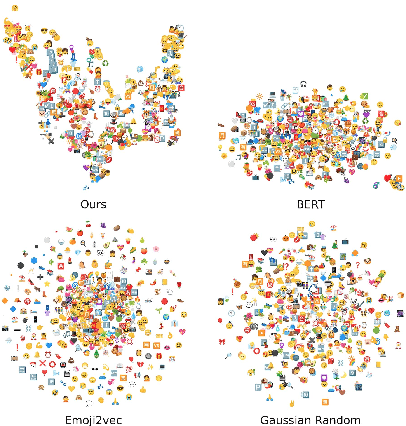

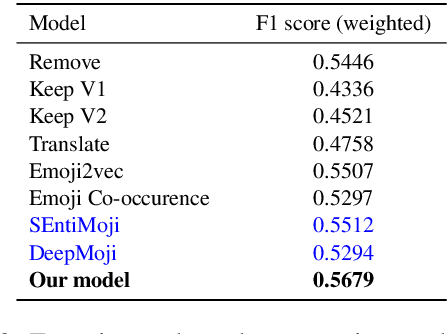

Abstract:Emojis have gained immense popularity on social platforms, serving as a common means to supplement or replace text. However, existing data mining approaches generally either completely ignore or simply treat emojis as ordinary Unicode characters, which may limit the model's ability to grasp the rich semantic information in emojis and the interaction between emojis and texts. Thus, it is necessary to release the emoji's power in social media data mining. To this end, we first construct a heterogeneous graph consisting of three types of nodes, i.e. post, word and emoji nodes to improve the representation of different elements in posts. The edges are also well-defined to model how these three elements interact with each other. To facilitate the sharing of information among post, word and emoji nodes, we propose a graph pre-train framework for text and emoji co-modeling, which contains two graph pre-training tasks: node-level graph contrastive learning and edge-level link reconstruction learning. Extensive experiments on the Xiaohongshu and Twitter datasets with two types of downstream tasks demonstrate that our approach proves significant improvement over previous strong baseline methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge