Dmitriy Kulikov

Applicability limitations of differentiable full-reference image-quality

Dec 11, 2022

Abstract:Subjective image-quality measurement plays a critical role in the development of image-processing applications. The purpose of a visual-quality metric is to approximate the results of subjective assessment. In this regard, more and more metrics are under development, but little research has considered their limitations. This paper addresses that deficiency: we show how image preprocessing before compression can artificially increase the quality scores provided by the popular metrics DISTS, LPIPS, HaarPSI, and VIF as well as how these scores are inconsistent with subjective-quality scores. We propose a series of neural-network preprocessing models that increase DISTS by up to 34.5%, LPIPS by up to 36.8%, VIF by up to 98.0%, and HaarPSI by up to 22.6% in the case of JPEG-compressed images. A subjective comparison of preprocessed images showed that for most of the metrics we examined, visual quality drops or stays unchanged, limiting the applicability of these metrics.

Video compression dataset and benchmark of learning-based video-quality metrics

Nov 22, 2022

Abstract:Video-quality measurement is a critical task in video processing. Nowadays, many implementations of new encoding standards - such as AV1, VVC, and LCEVC - use deep-learning-based decoding algorithms with perceptual metrics that serve as optimization objectives. But investigations of the performance of modern video- and image-quality metrics commonly employ videos compressed using older standards, such as AVC. In this paper, we present a new benchmark for video-quality metrics that evaluates video compression. It is based on a new dataset consisting of about 2,500 streams encoded using different standards, including AVC, HEVC, AV1, VP9, and VVC. Subjective scores were collected using crowdsourced pairwise comparisons. The list of evaluated metrics includes recent ones based on machine learning and neural networks. The results demonstrate that new no-reference metrics exhibit a high correlation with subjective quality and approach the capability of top full-reference metrics.
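As a rough illustration of what "correlation with subjective quality" can mean in a benchmark like this, the sketch below computes a Spearman rank correlation between metric scores and subjective scores. The abstract does not say which correlation coefficient the benchmark uses, so Spearman is an assumption here, and the function names and sample scores are hypothetical.

```python
import math

def average_ranks(values):
    """Rank values from 1..n, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson linear correlation of two equally long sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(vx * vy)

def spearman(x, y):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical data: one metric score and one subjective score per stream.
metric_scores = [0.2, 0.4, 0.5, 0.9]
subjective_scores = [1.0, 2.5, 2.0, 4.0]
rho = spearman(metric_scores, subjective_scores)
print(f"Spearman correlation: {rho:.3f}")
```

A rank-based coefficient only asks whether the metric orders the streams the same way viewers do, which is why it is a common choice when metric and subjective scores live on different scales.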

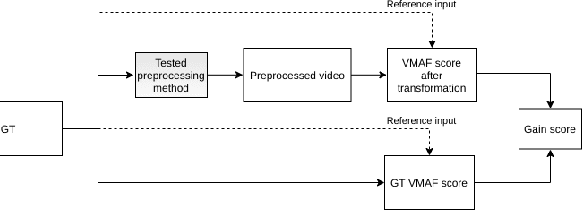

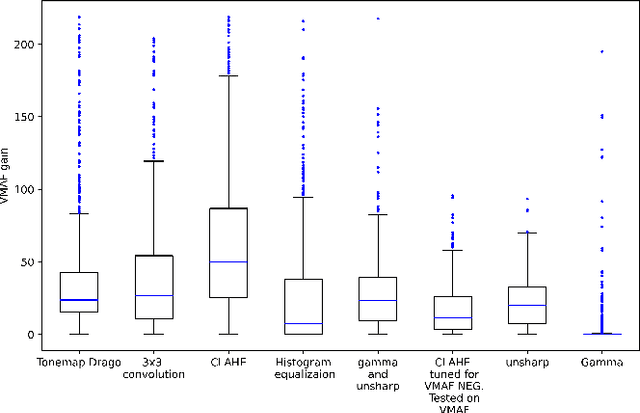

Hacking VMAF and VMAF NEG: vulnerability to different preprocessing methods

Aug 16, 2021

Abstract:Video-quality measurement plays a critical role in the development of video-processing applications. In this paper, we show how video preprocessing can artificially increase the popular quality metric VMAF and its tuning-resistant version, VMAF NEG. We propose a pipeline that tunes processing-algorithm parameters to increase VMAF by up to 218.8%. A subjective comparison revealed that for most preprocessing methods, a video's visual quality drops or stays unchanged. We also show that some preprocessing methods can increase VMAF NEG scores by up to 23.6%.

Objective video quality metrics application to video codecs comparisons: choosing the best for subjective quality estimation

Jul 21, 2021

Abstract:Quality assessment plays a key role in creating and comparing video compression algorithms. Despite the development of a large number of new methods for assessing quality, generally accepted and well-known codec comparisons mainly use classical methods such as PSNR and SSIM and the newer method VMAF. These methods can be calculated following different rules: they can use different frame-by-frame averaging techniques or different summation of color components. In this paper, a fundamental comparison of various versions of generally accepted metrics is carried out to find the most relevant and recommended versions of video quality metrics to be used in codec comparisons. For comparison, we used a set of videos encoded with video codecs of different standards, and visual quality scores collected for the resulting set of streams from 2018 to 2021.
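To make the point about frame-by-frame averaging concrete, here is a minimal sketch of two common ways to turn per-frame errors into a single PSNR value for a video. It is not taken from the paper: the function names and the toy pixel data are hypothetical, and frames are represented as flat lists of 8-bit values for simplicity.

```python
import math

def mse(ref, dist):
    """Mean squared error between two equally sized frames (flat pixel lists)."""
    return sum((r - d) ** 2 for r, d in zip(ref, dist)) / len(ref)

def psnr_from_mse(err, peak=255.0):
    """PSNR in dB for a given MSE; infinite when the frames are identical."""
    return math.inf if err == 0 else 10.0 * math.log10(peak ** 2 / err)

def psnr_mean_of_frames(ref_frames, dist_frames, peak=255.0):
    """Rule A: compute PSNR for each frame, then take the arithmetic mean."""
    scores = [psnr_from_mse(mse(r, d), peak)
              for r, d in zip(ref_frames, dist_frames)]
    return sum(scores) / len(scores)

def psnr_of_mean_mse(ref_frames, dist_frames, peak=255.0):
    """Rule B: average the MSE over all frames, then convert to PSNR once."""
    errs = [mse(r, d) for r, d in zip(ref_frames, dist_frames)]
    return psnr_from_mse(sum(errs) / len(errs), peak)

# Toy two-frame "video": the first frame is lightly distorted, the second heavily.
ref = [[100, 100, 100, 100], [100, 100, 100, 100]]
dist = [[101, 99, 101, 99], [110, 90, 110, 90]]

rule_a = psnr_mean_of_frames(ref, dist)
rule_b = psnr_of_mean_mse(ref, dist)
print(f"mean of per-frame PSNR: {rule_a:.2f} dB")
print(f"PSNR of mean MSE:       {rule_b:.2f} dB")
```

The two rules disagree whenever frame quality varies: averaging in the dB domain lets good frames mask bad ones, while averaging MSE first is dominated by the worst frames. The same kind of choice arises when combining color components.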

Barriers towards no-reference metrics application to compressed video quality analysis: on the example of no-reference metric NIQE

Aug 29, 2019

Abstract:This paper analyses the application of the no-reference metric NIQE to the task of video-codec comparison. A number of issues in the metric's behaviour on videos were detected and described. The metric has outlying scores on black and solid-coloured frames. The proposed averaging technique for metric quality scores helped to improve the results in some cases. Also, NIQE has low-quality scores for videos with detailed textures and higher scores for videos of lower bitrates due to the blurring of these textures after compression. Although NIQE showed natural results for many tested videos, it is not universal and currently cannot be used for video-codec comparisons.
