Dingjie Peng

Time Step Generating: A Universal Synthesized Deepfake Image Detector

Nov 20, 2024Abstract:Currently, high-fidelity text-to-image models are developed in an accelerating pace. Among them, Diffusion Models have led to a remarkable improvement in the quality of image generation, making it vary challenging to distinguish between real and synthesized images. It simultaneously raises serious concerns regarding privacy and security. Some methods are proposed to distinguish the diffusion model generated images through reconstructing. However, the inversion and denoising processes are time-consuming and heavily reliant on the pre-trained generative model. Consequently, if the pre-trained generative model meet the problem of out-of-domain, the detection performance declines. To address this issue, we propose a universal synthetic image detector Time Step Generating (TSG), which does not rely on pre-trained models' reconstructing ability, specific datasets, or sampling algorithms. Our method utilizes a pre-trained diffusion model's network as a feature extractor to capture fine-grained details, focusing on the subtle differences between real and synthetic images. By controlling the time step t of the network input, we can effectively extract these distinguishing detail features. Then, those features can be passed through a classifier (i.e. Resnet), which efficiently detects whether an image is synthetic or real. We test the proposed TSG on the large-scale GenImage benchmark and it achieves significant improvements in both accuracy and generalizability.

Evaluating Large Language Models on Financial Report Summarization: An Empirical Study

Nov 11, 2024

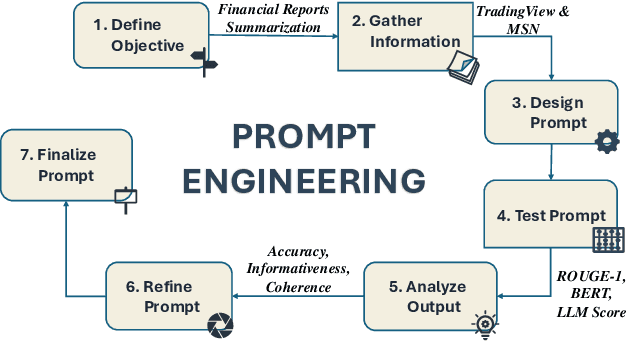

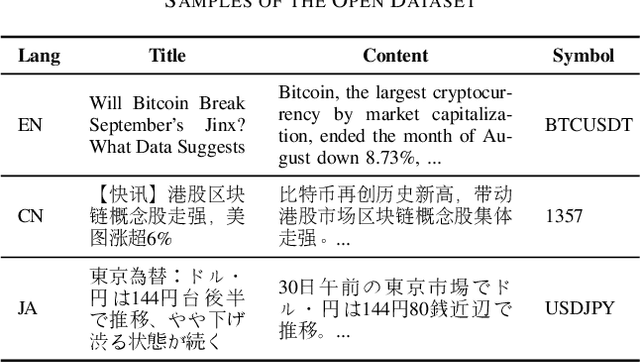

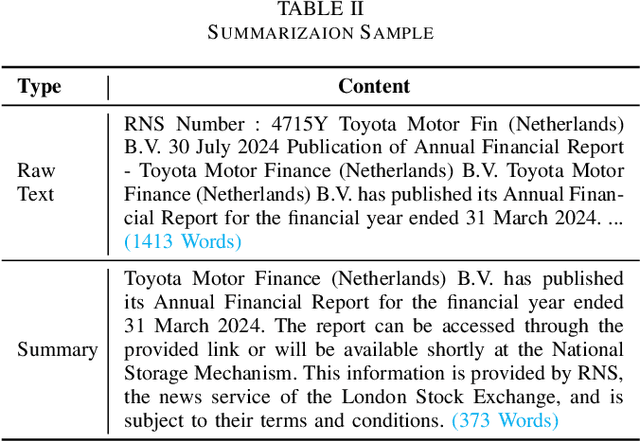

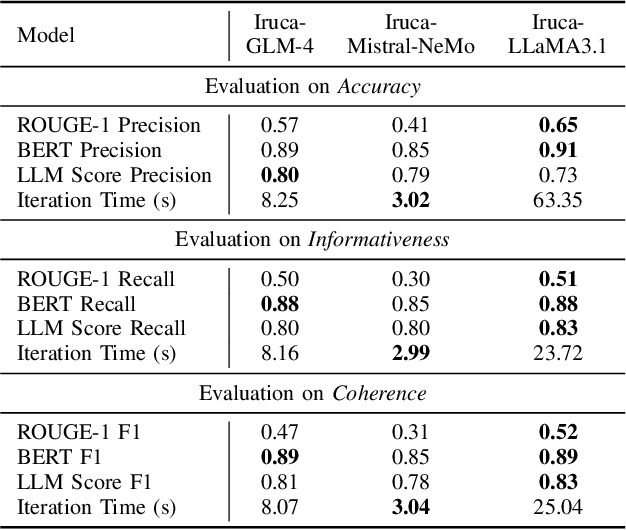

Abstract:In recent years, Large Language Models (LLMs) have demonstrated remarkable versatility across various applications, including natural language understanding, domain-specific knowledge tasks, etc. However, applying LLMs to complex, high-stakes domains like finance requires rigorous evaluation to ensure reliability, accuracy, and compliance with industry standards. To address this need, we conduct a comprehensive and comparative study on three state-of-the-art LLMs, GLM-4, Mistral-NeMo, and LLaMA3.1, focusing on their effectiveness in generating automated financial reports. Our primary motivation is to explore how these models can be harnessed within finance, a field demanding precision, contextual relevance, and robustness against erroneous or misleading information. By examining each model's capabilities, we aim to provide an insightful assessment of their strengths and limitations. Our paper offers benchmarks for financial report analysis, encompassing proposed metrics such as ROUGE-1, BERT Score, and LLM Score. We introduce an innovative evaluation framework that integrates both quantitative metrics (e.g., precision, recall) and qualitative analyses (e.g., contextual fit, consistency) to provide a holistic view of each model's output quality. Additionally, we make our financial dataset publicly available, inviting researchers and practitioners to leverage, scrutinize, and enhance our findings through broader community engagement and collaborative improvement. Our dataset is available on huggingface.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge