Dimitri Staufer

Lost in Moderation: How Commercial Content Moderation APIs Over- and Under-Moderate Group-Targeted Hate Speech and Linguistic Variations

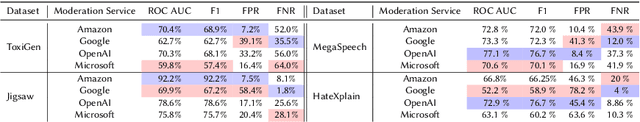

Mar 03, 2025

Abstract: Commercial content moderation APIs are marketed as scalable solutions to combat online hate speech. However, the reliance on these APIs risks both silencing legitimate speech, called over-moderation, and failing to protect online platforms from harmful speech, known as under-moderation. To assess such risks, this paper introduces a framework for auditing black-box NLP systems. Using the framework, we systematically evaluate five widely used commercial content moderation APIs. Analyzing five million queries based on four datasets, we find that APIs frequently rely on group identity terms, such as "black", to predict hate speech. While OpenAI's and Amazon's services perform slightly better, all providers under-moderate implicit hate speech, which uses codified messages, especially against LGBTQIA+ individuals. Simultaneously, they over-moderate counter-speech, reclaimed slurs and content related to Black, LGBTQIA+, Jewish, and Muslim people. We recommend that API providers offer better guidance on API implementation and threshold setting and more transparency on their APIs' limitations. Warning: This paper contains offensive and hateful terms and concepts. We have chosen to reproduce these terms for reasons of transparency.

Watching the Watchers: A Comparative Fairness Audit of Cloud-based Content Moderation Services

Jun 20, 2024

Abstract: Online platforms face the challenge of moderating an ever-increasing volume of content, including harmful hate speech. In the absence of clear legal definitions and a lack of transparency regarding the role of algorithms in shaping decisions on content moderation, there is a critical need for external accountability. Our study contributes to filling this gap by systematically evaluating four leading cloud-based content moderation services through a third-party audit, highlighting issues such as biases against minorities and vulnerable groups that may arise through over-reliance on these services. Using a black-box audit approach and four benchmark data sets, we measure performance in explicit and implicit hate speech detection as well as counterfactual fairness through perturbation sensitivity analysis and present disparities in performance for certain target identity groups and data sets. Our analysis reveals that all services had difficulties detecting implicit hate speech, which relies on more subtle and codified messages. Moreover, our results point to the need to remove group-specific bias. It seems that biases towards some groups, such as Women, have been mostly rectified, while biases towards other groups, such as LGBTQ+ and PoC, remain.

Silencing the Risk, Not the Whistle: A Semi-automated Text Sanitization Tool for Mitigating the Risk of Whistleblower Re-Identification

May 02, 2024

Abstract: Whistleblowing is essential for ensuring transparency and accountability in both public and private sectors. However, (potential) whistleblowers often fear or face retaliation, even when reporting anonymously. The specific content of their disclosures and their distinct writing style may re-identify them as the source. Legal measures, such as the EU WBD, are limited in their scope and effectiveness. Therefore, computational methods to prevent re-identification are important complementary tools for encouraging whistleblowers to come forward. However, current text sanitization tools follow a one-size-fits-all approach and take an overly limited view of anonymity. They aim to mitigate identification risk by replacing typical high-risk words (such as person names and other NE labels) and combinations thereof with placeholders. Such an approach, however, is inadequate for the whistleblowing scenario since it neglects further re-identification potential in textual features, including writing style. Therefore, we propose, implement, and evaluate a novel classification and mitigation strategy for rewriting texts that involves the whistleblower in the assessment of the risk and utility. Our prototypical tool semi-automatically evaluates risk at the word/term level and applies risk-adapted anonymization techniques to produce a grammatically disjointed yet appropriately sanitized text. We then use an LLM that we fine-tuned for paraphrasing to render this text coherent and style-neutral. We evaluate our tool's effectiveness using court cases from the ECHR and excerpts from a real-world whistleblower testimony and measure the protection against authorship attribution (AA) attacks and utility loss statistically using the popular IMDb62 movie reviews dataset. Our method can significantly reduce AA accuracy from 98.81% to 31.22%, while preserving up to 73.1% of the original content's semantics.
