Dexin Duan

An Online Adaptation Method for Robust Depth Estimation and Visual Odometry in the Open World

Apr 16, 2025Abstract:Recently, learning-based robotic navigation systems have gained extensive research attention and made significant progress. However, the diversity of open-world scenarios poses a major challenge for the generalization of such systems to practical scenarios. Specifically, learned systems for scene measurement and state estimation tend to degrade when the application scenarios deviate from the training data, resulting to unreliable depth and pose estimation. Toward addressing this problem, this work aims to develop a visual odometry system that can fast adapt to diverse novel environments in an online manner. To this end, we construct a self-supervised online adaptation framework for monocular visual odometry aided by an online-updated depth estimation module. Firstly, we design a monocular depth estimation network with lightweight refiner modules, which enables efficient online adaptation. Then, we construct an objective for self-supervised learning of the depth estimation module based on the output of the visual odometry system and the contextual semantic information of the scene. Specifically, a sparse depth densification module and a dynamic consistency enhancement module are proposed to leverage camera poses and contextual semantics to generate pseudo-depths and valid masks for the online adaptation. Finally, we demonstrate the robustness and generalization capability of the proposed method in comparison with state-of-the-art learning-based approaches on urban, in-house datasets and a robot platform. Code is publicly available at: https://github.com/jixingwu/SOL-SLAM.

Brain-Inspired Online Adaptation for Remote Sensing with Spiking Neural Network

Sep 03, 2024

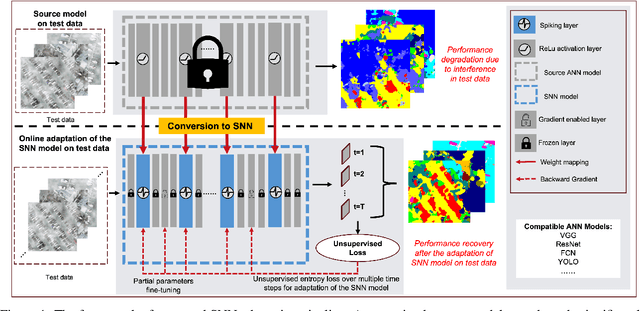

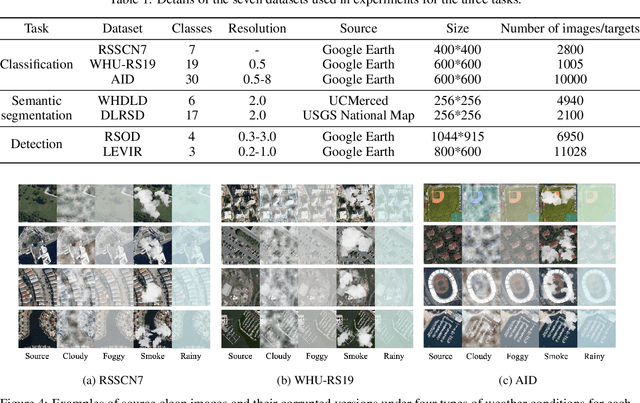

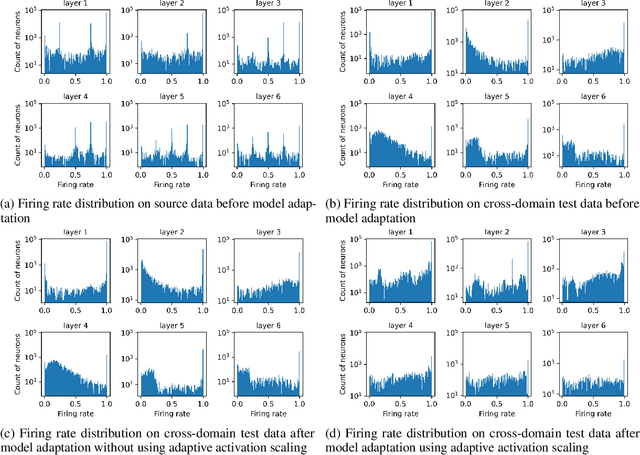

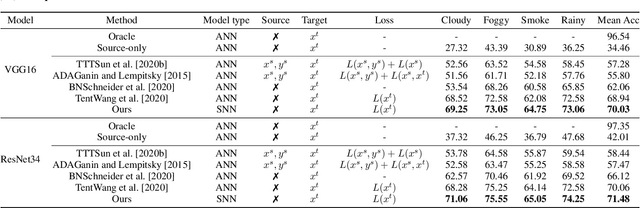

Abstract:On-device computing, or edge computing, is becoming increasingly important for remote sensing, particularly in applications like deep network-based perception on on-orbit satellites and unmanned aerial vehicles (UAVs). In these scenarios, two brain-like capabilities are crucial for remote sensing models: (1) high energy efficiency, allowing the model to operate on edge devices with limited computing resources, and (2) online adaptation, enabling the model to quickly adapt to environmental variations, weather changes, and sensor drift. This work addresses these needs by proposing an online adaptation framework based on spiking neural networks (SNNs) for remote sensing. Starting with a pretrained SNN model, we design an efficient, unsupervised online adaptation algorithm, which adopts an approximation of the BPTT algorithm and only involves forward-in-time computation that significantly reduces the computational complexity of SNN adaptation learning. Besides, we propose an adaptive activation scaling scheme to boost online SNN adaptation performance, particularly in low time-steps. Furthermore, for the more challenging remote sensing detection task, we propose a confidence-based instance weighting scheme, which substantially improves adaptation performance in the detection task. To our knowledge, this work is the first to address the online adaptation of SNNs. Extensive experiments on seven benchmark datasets across classification, segmentation, and detection tasks demonstrate that our proposed method significantly outperforms existing domain adaptation and domain generalization approaches under varying weather conditions. The proposed method enables energy-efficient and fast online adaptation on edge devices, and has much potential in applications such as remote perception on on-orbit satellites and UAV.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge