Dejan Kostic

CASTILLO: Characterizing Response Length Distributions of Large Language Models

May 22, 2025Abstract:Efficiently managing compute resources for Large Language Model (LLM) inference remains challenging due to the inherently stochastic and variable lengths of autoregressive text generation. Accurately estimating response lengths in advance enables proactive resource allocation, yet existing approaches either bias text generation towards certain lengths or rely on assumptions that ignore model- and prompt-specific variability. We introduce CASTILLO, a dataset characterizing response length distributions across 13 widely-used open-source LLMs evaluated on seven distinct instruction-following corpora. For each $\langle$prompt, model$\rangle$ sample pair, we generate 10 independent completions using fixed decoding hyper-parameters, record the token length of each response, and publish summary statistics (mean, std-dev, percentiles), along with the shortest and longest completions, and the exact generation settings. Our analysis reveals significant inter- and intra-model variability in response lengths (even under identical generation settings), as well as model-specific behaviors and occurrences of partial text degeneration in only subsets of responses. CASTILLO enables the development of predictive models for proactive scheduling and provides a systematic framework for analyzing model-specific generation behaviors. We publicly release the dataset and code to foster research at the intersection of generative language modeling and systems.

Priority-Aware Preemptive Scheduling for Mixed-Priority Workloads in MoE Inference

Mar 12, 2025

Abstract:Large Language Models have revolutionized natural language processing, yet serving them efficiently in data centers remains challenging due to mixed workloads comprising latency-sensitive (LS) and best-effort (BE) jobs. Existing inference systems employ iteration-level first-come-first-served scheduling, causing head-of-line blocking when BE jobs delay LS jobs. We introduce QLLM, a novel inference system designed for Mixture of Experts (MoE) models, featuring a fine-grained, priority-aware preemptive scheduler. QLLM enables expert-level preemption, deferring BE job execution while minimizing LS time-to-first-token (TTFT). Our approach removes iteration-level scheduling constraints, enabling the scheduler to preempt jobs at any layer based on priority. Evaluations on an Nvidia A100 GPU show that QLLM significantly improves performance. It reduces LS TTFT by an average of $65.5\times$ and meets the SLO at up to $7$ requests/sec, whereas the baseline fails to do so under the tested workload. Additionally, it cuts LS turnaround time by up to $12.8\times$ without impacting throughput. QLLM is modular, extensible, and seamlessly integrates with Hugging Face MoE models.

Deriving Coding-Specific Sub-Models from LLMs using Resource-Efficient Pruning

Jan 09, 2025

Abstract:Large Language Models (LLMs) have demonstrated their exceptional performance in various complex code generation tasks. However, their broader adoption is limited by significant computational demands and high resource requirements, particularly memory and processing power. To mitigate such requirements, model pruning techniques are used to create more compact models with significantly fewer parameters. However, current approaches do not focus on the efficient extraction of programming-language-specific sub-models. In this work, we explore the idea of efficiently deriving coding-specific sub-models through unstructured pruning (i.e., Wanda). We investigate the impact of different domain-specific calibration datasets on pruning outcomes across three distinct domains and extend our analysis to extracting four language-specific sub-models: Python, Java, C++, and JavaScript. We are the first to efficiently extract programming-language-specific sub-models using appropriate calibration datasets while maintaining acceptable accuracy w.r.t. full models. We are also the first to provide analytical evidence that domain-specific tasks activate distinct regions within LLMs, supporting the creation of specialized sub-models through unstructured pruning. We believe that this work has significant potential to enhance LLM accessibility for coding by reducing computational requirements to enable local execution on consumer-grade hardware, and supporting faster inference times critical for real-time development feedback.

Automating the Detection of Code Vulnerabilities by Analyzing GitHub Issues

Jan 09, 2025Abstract:In today's digital landscape, the importance of timely and accurate vulnerability detection has significantly increased. This paper presents a novel approach that leverages transformer-based models and machine learning techniques to automate the identification of software vulnerabilities by analyzing GitHub issues. We introduce a new dataset specifically designed for classifying GitHub issues relevant to vulnerability detection. We then examine various classification techniques to determine their effectiveness. The results demonstrate the potential of this approach for real-world application in early vulnerability detection, which could substantially reduce the window of exploitation for software vulnerabilities. This research makes a key contribution to the field by providing a scalable and computationally efficient framework for automated detection, enabling the prevention of compromised software usage before official notifications. This work has the potential to enhance the security of open-source software ecosystems.

Robust Generalization of Graph Neural Networks for Carrier Scheduling

Jul 11, 2024Abstract:Battery-free sensor tags are devices that leverage backscatter techniques to communicate with standard IoT devices, thereby augmenting a network's sensing capabilities in a scalable way. For communicating, a sensor tag relies on an unmodulated carrier provided by a neighboring IoT device, with a schedule coordinating this provisioning across the network. Carrier scheduling--computing schedules to interrogate all sensor tags while minimizing energy, spectrum utilization, and latency--is an NP-Hard optimization problem. Recent work introduces learning-based schedulers that achieve resource savings over a carefully-crafted heuristic, generalizing to networks of up to 60 nodes. However, we find that their advantage diminishes in networks with hundreds of nodes, and degrades further in larger setups. This paper introduces RobustGANTT, a GNN-based scheduler that improves generalization (without re-training) to networks up to 1000 nodes (100x training topology sizes). RobustGANTT not only achieves better and more consistent generalization, but also computes schedules requiring up to 2x less resources than existing systems. Our scheduler exhibits average runtimes of hundreds of milliseconds, allowing it to react fast to changing network conditions. Our work not only improves resource utilization in large-scale backscatter networks, but also offers valuable insights in learning-based scheduling.

FMM-Head: Enhancing Autoencoder-based ECG anomaly detection with prior knowledge

Oct 06, 2023Abstract:Detecting anomalies in electrocardiogram data is crucial to identifying deviations from normal heartbeat patterns and providing timely intervention to at-risk patients. Various AutoEncoder models (AE) have been proposed to tackle the anomaly detection task with ML. However, these models do not consider the specific patterns of ECG leads and are unexplainable black boxes. In contrast, we replace the decoding part of the AE with a reconstruction head (namely, FMM-Head) based on prior knowledge of the ECG shape. Our model consistently achieves higher anomaly detection capabilities than state-of-the-art models, up to 0.31 increase in area under the ROC curve (AUROC), with as little as half the original model size and explainable extracted features. The processing time of our model is four orders of magnitude lower than solving an optimization problem to obtain the same parameters, thus making it suitable for real-time ECG parameters extraction and anomaly detection.

Fast Server Learning Rate Tuning for Coded Federated Dropout

Jan 26, 2022

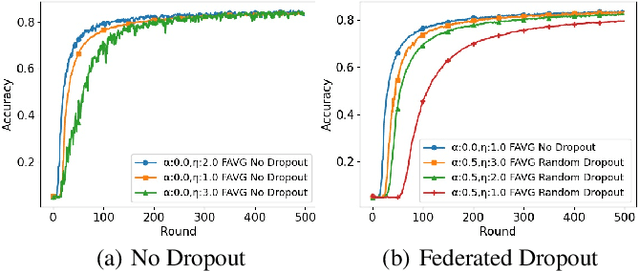

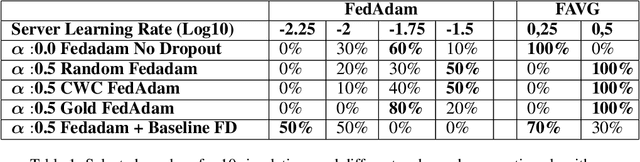

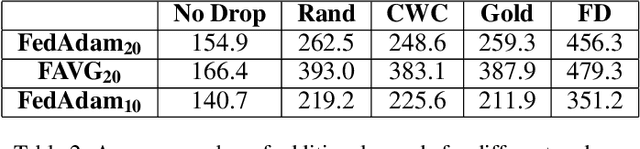

Abstract:In cross-device Federated Learning (FL), clients with low computational power train a common machine model by exchanging parameters updates instead of potentially private data. Federated Dropout (FD) is a technique that improves the communication efficiency of a FL session by selecting a subset of model variables to be updated in each training round. However, FD produces considerably lower accuracy and higher convergence time compared to standard FL. In this paper, we leverage coding theory to enhance FD by allowing a different sub-model to be used at each client. We also show that by carefully tuning the server learning rate hyper-parameter, we can achieve higher training speed and up to the same final accuracy of the no dropout case. For the EMNIST dataset, our mechanism achieves 99.6 % of the final accuracy of the no dropout case while requiring 2.43x less bandwidth to achieve this accuracy level.

DeepGANTT: A Scalable Deep Learning Scheduler for Backscatter Networks

Dec 24, 2021

Abstract:Recent backscatter communication techniques enable ultra low power wireless devices that operate without batteries while interoperating directly with unmodified commodity wireless devices. Commodity devices cooperate in providing the unmodulated carrier that the battery-free nodes need to communicate while collecting energy from their environment to perform sensing, computation, and communication tasks. The optimal provision of the unmodulated carrier limits the size of the network because it is an NP-hard combinatorial optimization problem. Consequently, previous works either ignore carrier optimization altogether or resort to suboptimal heuristics, wasting valuable energy and spectral resources. We present DeepGANTT, a deep learning scheduler for battery-free devices interoperating with wireless commodity ones. DeepGANTT leverages graph neural networks to overcome variable input and output size challenges inherent to this problem. We train our deep learning scheduler with optimal schedules of relatively small size obtained from a constraint optimization solver. DeepGANTT not only outperforms a carefully crafted heuristic solution but also performs within ~3% of the optimal scheduler on trained problem sizes. Finally, DeepGANTT generalizes to problems more than four times larger than the maximum used for training, therefore breaking the scalability limitations of the optimal scheduler and paving the way for more efficient backscatter networks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge