Decai Chen

Adaptive and Temporally Consistent Gaussian Surfels for Multi-view Dynamic Reconstruction

Nov 10, 2024Abstract:3D Gaussian Splatting has recently achieved notable success in novel view synthesis for dynamic scenes and geometry reconstruction in static scenes. Building on these advancements, early methods have been developed for dynamic surface reconstruction by globally optimizing entire sequences. However, reconstructing dynamic scenes with significant topology changes, emerging or disappearing objects, and rapid movements remains a substantial challenge, particularly for long sequences. To address these issues, we propose AT-GS, a novel method for reconstructing high-quality dynamic surfaces from multi-view videos through per-frame incremental optimization. To avoid local minima across frames, we introduce a unified and adaptive gradient-aware densification strategy that integrates the strengths of conventional cloning and splitting techniques. Additionally, we reduce temporal jittering in dynamic surfaces by ensuring consistency in curvature maps across consecutive frames. Our method achieves superior accuracy and temporal coherence in dynamic surface reconstruction, delivering high-fidelity space-time novel view synthesis, even in complex and challenging scenes. Extensive experiments on diverse multi-view video datasets demonstrate the effectiveness of our approach, showing clear advantages over baseline methods. Project page: \url{https://fraunhoferhhi.github.io/AT-GS}

Dynamic Multi-View Scene Reconstruction Using Neural Implicit Surface

Feb 28, 2023Abstract:Reconstructing general dynamic scenes is important for many computer vision and graphics applications. Recent works represent the dynamic scene with neural radiance fields for photorealistic view synthesis, while their surface geometry is under-constrained and noisy. Other works introduce surface constraints to the implicit neural representation to disentangle the ambiguity of geometry and appearance field for static scene reconstruction. To bridge the gap between rendering dynamic scenes and recovering static surface geometry, we propose a template-free method to reconstruct surface geometry and appearance using neural implicit representations from multi-view videos. We leverage topology-aware deformation and the signed distance field to learn complex dynamic surfaces via differentiable volume rendering without scene-specific prior knowledge like template models. Furthermore, we propose a novel mask-based ray selection strategy to significantly boost the optimization on challenging time-varying regions. Experiments on different multi-view video datasets demonstrate that our method achieves high-fidelity surface reconstruction as well as photorealistic novel view synthesis.

Recovering Fine Details for Neural Implicit Surface Reconstruction

Nov 21, 2022

Abstract:Recent works on implicit neural representations have made significant strides. Learning implicit neural surfaces using volume rendering has gained popularity in multi-view reconstruction without 3D supervision. However, accurately recovering fine details is still challenging, due to the underlying ambiguity of geometry and appearance representation. In this paper, we present D-NeuS, a volume rendering-base neural implicit surface reconstruction method capable to recover fine geometry details, which extends NeuS by two additional loss functions targeting enhanced reconstruction quality. First, we encourage the rendered surface points from alpha compositing to have zero signed distance values, alleviating the geometry bias arising from transforming SDF to density for volume rendering. Second, we impose multi-view feature consistency on the surface points, derived by interpolating SDF zero-crossings from sampled points along rays. Extensive quantitative and qualitative results demonstrate that our method reconstructs high-accuracy surfaces with details, and outperforms the state of the art.

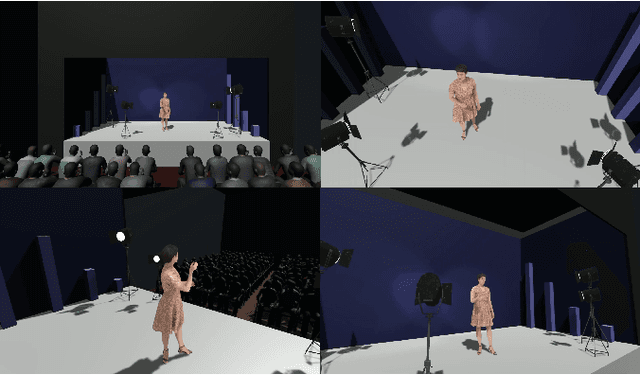

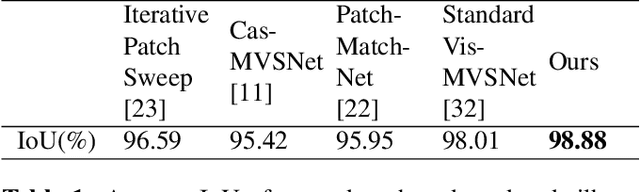

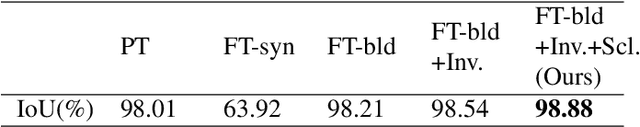

Accurate Human Body Reconstruction for Volumetric Video

Feb 26, 2022

Abstract:In this work, we enhance a professional end-to-end volumetric video production pipeline to achieve high-fidelity human body reconstruction using only passive cameras. While current volumetric video approaches estimate depth maps using traditional stereo matching techniques, we introduce and optimize deep learning-based multi-view stereo networks for depth map estimation in the context of professional volumetric video reconstruction. Furthermore, we propose a novel depth map post-processing approach including filtering and fusion, by taking into account photometric confidence, cross-view geometric consistency, foreground masks as well as camera viewing frustums. We show that our method can generate high levels of geometric detail for reconstructed human bodies.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge