David R. Walton

Blind Augmentation: Calibration-free Camera Distortion Model Estimation for Real-time Mixed-reality Consistency

Mar 03, 2025

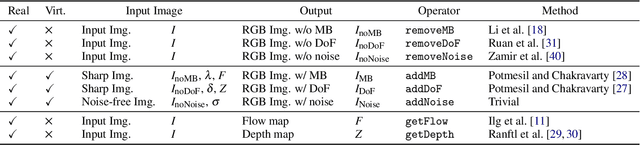

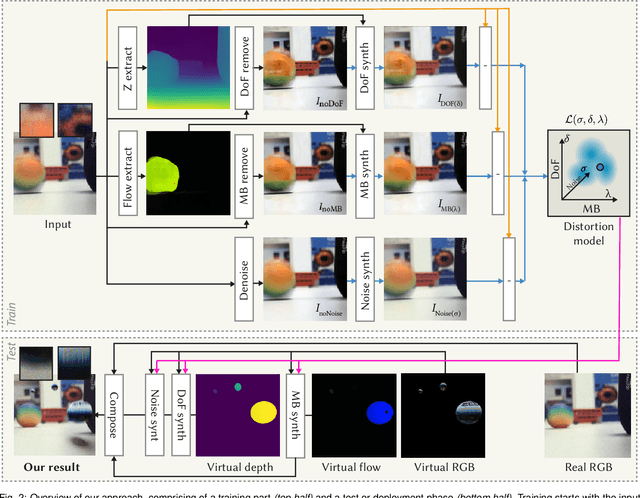

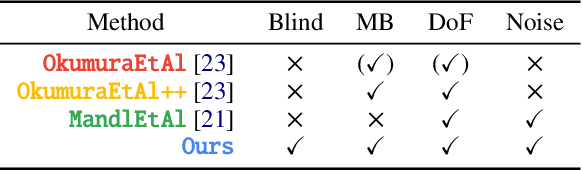

Abstract: Real camera footage is subject to noise, motion blur (MB) and depth of field (DoF). In some applications these might be considered distortions to be removed, but in others it is important to model them, because simply removing them would be ineffective or would interfere with an aesthetic choice. In augmented-reality applications, where virtual content is composited into a live video feed, we can model noise, MB and DoF to make the virtual content visually consistent with the video. Existing methods for this typically suffer from two main limitations. First, they require a camera calibration step to relate a known calibration target to the specific camera's response. Second, existing work requires methods that can be (differentiably) tuned to the calibration, such as slow and specialized neural networks. We propose a method that estimates parameters for noise, MB and DoF instantly, which allows off-the-shelf real-time simulation methods, e.g., from a game engine, to be used when compositing augmented content. Our main idea is to unlock both features by showing how to use modern computer vision methods that can remove noise, MB and DoF from the video stream, essentially providing self-calibration. This allows us to auto-tune any black-box real-time noise+MB+DoF method to deliver fast and high-fidelity augmentation consistency.
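To make the self-calibration idea concrete for the noise component only, here is a minimal sketch, not the paper's method. It assumes that an off-the-shelf denoiser has already produced a clean estimate of the frame. The residual between the frame and that estimate gives a target noise level, and a black-box noise effect is tuned by simple grid search until its output shows the same residual statistics. The names `estimate_noise_sigma`, `black_box_noise` and `tune_strength`, the Gaussian noise model and the hidden 0.5 scale factor are all hypothetical illustrations.

```python
import random
import statistics


def estimate_noise_sigma(noisy, denoised):
    """Estimate noise std from the residual between a frame and its denoised
    version (the denoiser is assumed to be an off-the-shelf model)."""
    residual = [n - d for n, d in zip(noisy, denoised)]
    return statistics.pstdev(residual)


def black_box_noise(image, strength, seed=0):
    """Hypothetical stand-in for a game-engine noise effect. The tuner only
    observes inputs and outputs, never the internal 0.5 scale factor."""
    rng = random.Random(seed)
    return [p + rng.gauss(0.0, 0.5 * strength) for p in image]


def tune_strength(target_sigma, clean_render, candidates):
    """Grid search: pick the parameter whose simulated noise, measured the
    same way as on the camera frame, best matches target_sigma."""
    def measured(strength):
        noisy = black_box_noise(clean_render, strength)
        return estimate_noise_sigma(noisy, clean_render)
    return min(candidates, key=lambda s: abs(measured(s) - target_sigma))


if __name__ == "__main__":
    rng = random.Random(42)
    clean = [rng.random() for _ in range(10_000)]        # synthetic scene
    camera = [p + rng.gauss(0.0, 0.05) for p in clean]   # camera noise, sigma = 0.05
    # Assumption: the ideal denoiser output is exactly the clean frame.
    sigma = estimate_noise_sigma(camera, clean)
    candidates = [i / 100 for i in range(1, 31)]
    strength = tune_strength(sigma, [0.5] * 10_000, candidates)
    print(round(sigma, 3), strength)
```

Because the hypothetical black box scales its noise by 0.5, the recovered strength should land near 0.1. The tuner never needs that internal factor, which is the point of treating the effect as a black box. Motion blur and DoF parameters could in principle be matched the same way with different residual statistics.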

MaskFlow: Object-Aware Motion Estimation

Nov 21, 2023

Abstract: We introduce a novel motion estimation method, MaskFlow, that is capable of estimating accurate motion fields, even in very challenging cases with small objects, large displacements and drastic appearance changes. In addition to the lower-level features used in other Deep Neural Network (DNN)-based motion estimation methods, MaskFlow draws on object-level features and segmentations. These features and segmentations are used to approximate the objects' translation motion field. We propose a novel and effective way of incorporating the incomplete translation motion field into a subsequent motion estimation network for refinement and completion. We also produced a new challenging synthetic dataset with motion field ground truth, and provide extra ground truth for the object-instance matchings and corresponding segmentation masks. We demonstrate that MaskFlow outperforms state-of-the-art methods when evaluated on our new challenging dataset, whilst still producing comparable results on the popular FlyingThings3D benchmark dataset.
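To illustrate what an incomplete, object-level translation motion field might look like, here is a minimal sketch under stated assumptions. It is not MaskFlow's actual approximation, and it does not include the refinement network. The sketch assumes that instance masks for both frames and an object-instance matching are already available. Each matched object is assigned the displacement of its mask centroid, and every other pixel is left unknown. The centroid heuristic and the names `centroid` and `translation_field` are hypothetical.

```python
from typing import Dict, List, Optional, Set, Tuple

Pixel = Tuple[int, int]               # (row, col)
Flow = Optional[Tuple[float, float]]  # (dy, dx), or None where unknown


def centroid(pixels: Set[Pixel]) -> Tuple[float, float]:
    """Mean (row, col) position of a non-empty mask."""
    n = len(pixels)
    return (sum(p[0] for p in pixels) / n, sum(p[1] for p in pixels) / n)


def translation_field(
    height: int,
    width: int,
    masks1: Dict[int, Set[Pixel]],
    masks2: Dict[int, Set[Pixel]],
    matches: Dict[int, int],
) -> List[List[Flow]]:
    """Assign each matched object's frame-1 pixels the displacement of its
    mask centroid between frames. Pixels outside any matched object stay
    None, so the field is incomplete and would need later completion."""
    field: List[List[Flow]] = [[None] * width for _ in range(height)]
    for id1, id2 in matches.items():
        (y1, x1), (y2, x2) = centroid(masks1[id1]), centroid(masks2[id2])
        for r, c in masks1[id1]:
            field[r][c] = (y2 - y1, x2 - x1)
    return field


if __name__ == "__main__":
    square1 = {(0, 0), (0, 1), (1, 0), (1, 1)}
    square2 = {(r, c + 3) for r, c in square1}  # same object shifted 3 px right
    field = translation_field(4, 6, {1: square1}, {7: square2}, {1: 7})
    print(field[0][0], field[3][5])  # → (0.0, 3.0) None
```

A pure translation per object cannot express rotation, deformation or background motion. This is one reason such a field is only a partial prior that a subsequent network would refine and complete.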
