David C. Schedl

The Way Up: A Dataset for Hold Usage Detection in Sport Climbing

May 19, 2025

Abstract: Detecting an athlete's position on a route and identifying hold usage are crucial in various climbing-related applications. However, to our knowledge, no climbing dataset with detailed hold usage annotations exists. To address this gap, we introduce a dataset of 22 annotated climbing videos, providing ground-truth labels for hold locations, usage order, and time of use. Furthermore, we explore the application of keypoint-based 2D pose-estimation models for detecting hold usage in sport climbing. We determine usage by analyzing the keypoints of certain joints and their overlap with climbing holds. We evaluate multiple state-of-the-art models and analyze their accuracy on our dataset, identifying climbing-specific challenges. Our dataset and results highlight key challenges in climbing-specific pose estimation and establish a foundation for future research toward AI-assisted systems for sport climbing.
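
The keypoint/hold overlap rule can be illustrated with a minimal sketch. It assumes holds are given as axis-aligned bounding boxes, that the pose model outputs COCO-style wrist and ankle keypoints as `(x, y, confidence)`, and that a hold counts as used once a limb keypoint stays inside it for `min_frames` consecutive frames. The names `Hold`, `detect_hold_usage`, `LIMB_JOINTS`, `min_frames`, and `min_conf` are hypothetical and not taken from the paper; the actual usage criterion may differ.

```python
from dataclasses import dataclass

# Hypothetical limb joints; COCO-style naming is an assumption, not the paper's spec.
LIMB_JOINTS = ("left_wrist", "right_wrist", "left_ankle", "right_ankle")


@dataclass
class Hold:
    hold_id: int
    x0: float
    y0: float
    x1: float
    y1: float

    def contains(self, x: float, y: float) -> bool:
        # Axis-aligned bounding-box test (assumed hold representation).
        return self.x0 <= x <= self.x1 and self.y0 <= y <= self.y1


def detect_hold_usage(frames, holds, min_frames=5, min_conf=0.5):
    """Return [(hold_id, first_frame)] sorted by time of first use.

    frames: one dict per video frame, mapping joint name -> (x, y, confidence).
    A hold counts as used once any confident limb keypoint stays inside it
    for min_frames consecutive frames (hypothetical criterion).
    """
    streak = {h.hold_id: 0 for h in holds}
    first_use = {}
    for t, keypoints in enumerate(frames):
        for hold in holds:
            touched = any(
                joint in keypoints
                and keypoints[joint][2] >= min_conf
                and hold.contains(keypoints[joint][0], keypoints[joint][1])
                for joint in LIMB_JOINTS
            )
            # Count consecutive frames of contact; reset on any gap.
            streak[hold.hold_id] = streak[hold.hold_id] + 1 if touched else 0
            if streak[hold.hold_id] >= min_frames and hold.hold_id not in first_use:
                # Record the frame where the qualifying streak began.
                first_use[hold.hold_id] = t - min_frames + 1
    return sorted(first_use.items(), key=lambda item: item[1])
```

Sorting by first-use frame yields both the usage order and the time of use, the two temporal labels the dataset annotates. The consecutive-frame requirement is one simple way to ignore keypoints that only brush past a hold.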

Touch Sensing on Semi-Elastic Textiles with Border-Based Sensors

May 17, 2023

Abstract: This study presents a novel approach to touch sensing on semi-elastic textile surfaces that requires no additional sensors in the sensing area and instead relies on sensors located on the border of the textile. The approach is demonstrated through experiments with an elastic jersey fabric and a variety of machine-learning models. The performance of one particular border-based sensor design is evaluated in depth. Using visual markers, the best-performing visual sensor arrangement predicts a single touch point with a mean squared error of 1.36 mm on an area of 125 mm by 125 mm. We built a textile-only prototype that classifies touch at three indentation levels (0, 15, and 20 mm) with an accuracy of 82.85%. Our results suggest that this approach has potential applications in wearable technology and smart textiles, making it a promising avenue for further exploration.

Through-Foliage Tracking with Airborne Optical Sectioning

Nov 30, 2021

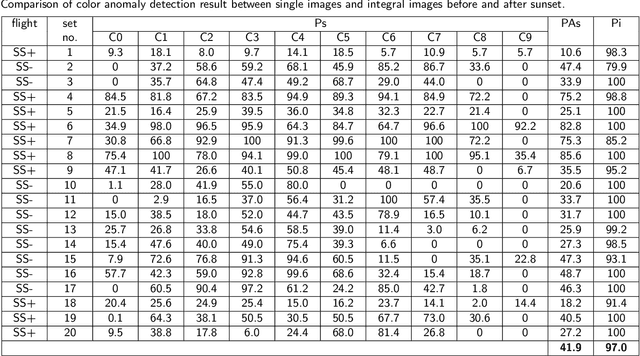

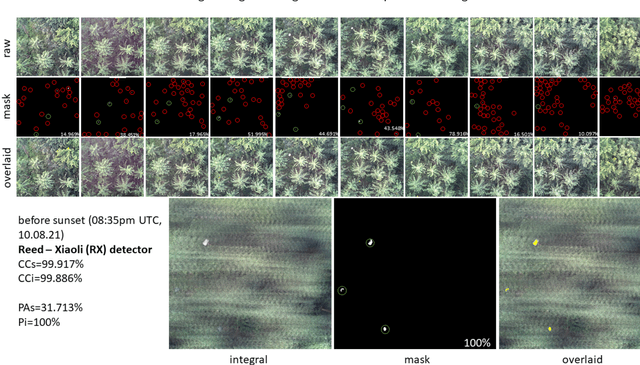

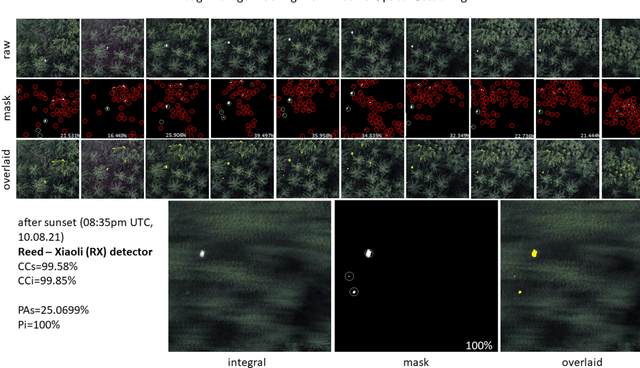

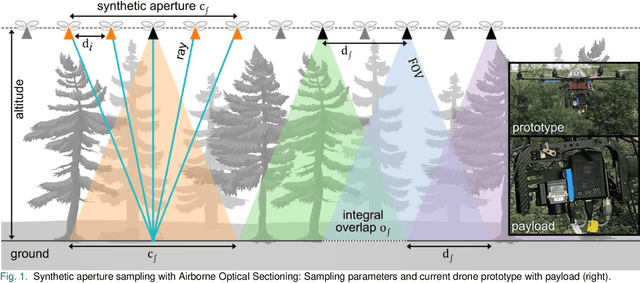

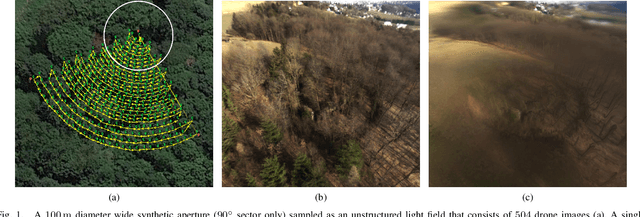

Abstract: Detecting and tracking moving targets through foliage is difficult, and in many cases even impossible, in regular aerial images and videos. We present an initial lightweight, drone-operated 1D camera array that supports parallel synthetic aperture aerial imaging. Our main finding is that color anomaly detection benefits significantly from image integration compared to conventional raw images or video frames (on average 97% vs. 42% precision in our field experiments). We demonstrate that these two contributions can lead to the detection and tracking of moving people through densely occluding forest.
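
The pairing of image integration with color anomaly detection can be sketched as follows. This assumes the camera-array images have already been registered to a common focal plane, so integration reduces to a per-pixel average. The anomaly score is a generic global RX-style detector (Mahalanobis distance of each pixel color from the image's mean color), swapped in for illustration; the abstract does not state which anomaly detector was used. `integrate` and `rx_anomaly_scores` are hypothetical names.

```python
import numpy as np


def integrate(registered_images):
    """Average a stack of H x W x C images already registered to one focal plane.

    Occluders that shift between views are averaged out, while a target lying
    on the focal plane stays aligned across views (registration is assumed).
    """
    stack = np.stack([img.astype(np.float64) for img in registered_images])
    return stack.mean(axis=0)


def rx_anomaly_scores(image):
    """Global RX-style score: squared Mahalanobis distance of each pixel color
    from the mean color of the whole image."""
    h, w, c = image.shape
    pixels = image.reshape(-1, c)
    mean = pixels.mean(axis=0)
    cov = np.cov(pixels, rowvar=False)
    inv_cov = np.linalg.pinv(cov)  # pseudo-inverse guards against singular covariance
    centered = pixels - mean
    # Per-pixel quadratic form centered_i^T * inv_cov * centered_i.
    scores = np.einsum("ij,jk,ik->i", centered, inv_cov, centered)
    return scores.reshape(h, w)
```

Running the detector on the integral image instead of on each raw frame is the design choice the abstract evaluates. Averaging also shrinks per-pixel background noise, so a consistently colored target stands out more clearly against the background statistics.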

Combined Person Classification with Airborne Optical Sectioning

Jun 18, 2021

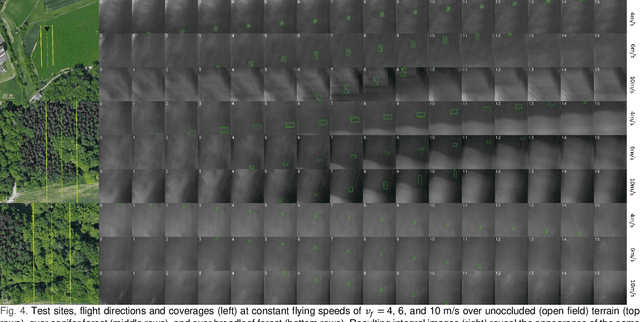

Abstract: Fully autonomous drones have been demonstrated to find lost or injured persons under strongly occluding forest canopy. Airborne Optical Sectioning (AOS), a novel synthetic aperture imaging technique, together with deep-learning-based classification enables high detection rates under realistic search-and-rescue conditions. We demonstrate that false detections can be significantly suppressed and true detections boosted by combining classifications from multiple AOS integral images rather than from a single one. This improves classification rates, especially in the presence of occlusion. To make this possible, we modified the AOS imaging process to support large overlaps between subsequent integrals, enabling real-time, on-board scanning and processing at ground speeds of up to 10 m/s.
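
One way to combine classifications from overlapping integral images is sketched below. It assumes every integral covers the same ground area and yields detections as `(x, y, confidence)` in shared ground coordinates. Detections are grouped greedily within a `radius`, and each group is scored by summing its best confidence per integral and dividing by the number of integrals. The clustering scheme, the scoring rule, and the names `fuse_detections`, `radius`, and `threshold` are assumptions for illustration; the abstract does not describe the actual fusion rule.

```python
import math
from typing import Dict, List, Tuple

Detection = Tuple[float, float, float]  # (x, y, confidence) in shared ground coordinates


def fuse_detections(per_integral: List[List[Detection]], radius: float = 1.0,
                    threshold: float = 0.5) -> List[Tuple[float, float, float]]:
    """Fuse detections from several overlapping integral images (hypothetical rule)."""
    clusters: List[Dict] = []
    for k, detections in enumerate(per_integral):
        for x, y, conf in detections:
            for c in clusters:
                # Greedy assignment: cluster position stays at its first detection.
                if math.hypot(c["x"] - x, c["y"] - y) <= radius:
                    c["scores"][k] = max(c["scores"].get(k, 0.0), conf)
                    break
            else:
                clusters.append({"x": x, "y": y, "scores": {k: conf}})
    n = len(per_integral)
    fused = []
    for c in clusters:
        # Integrals that missed this location contribute 0, penalizing one-off hits.
        score = sum(c["scores"].values()) / n
        if score >= threshold:
            fused.append((c["x"], c["y"], score))
    return fused
```

A person who is detected repeatedly keeps a high fused score. A confident false positive that appears in only one integral is divided down by the number of integrals and drops below the threshold. This mirrors the suppress-and-boost effect the abstract reports.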

Pose Error Reduction for Focus Enhancement in Thermal Synthetic Aperture Visualization

Dec 15, 2020

Abstract: Airborne optical sectioning, an effective aerial synthetic aperture imaging technique for revealing artifacts occluded by forests, requires precise measurements of drone poses. In this article, we present a new approach for reducing pose estimation errors beyond the possibilities of conventional Perspective-n-Point solutions by treating the underlying optimization as a focusing problem. We present an efficient image integration technique that also reduces the parameter search space to achieve realistic processing times and improves the quality of the resulting synthetic integral images.

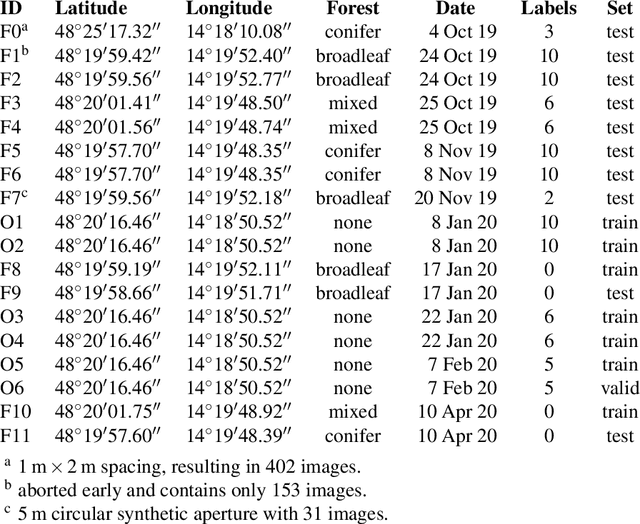

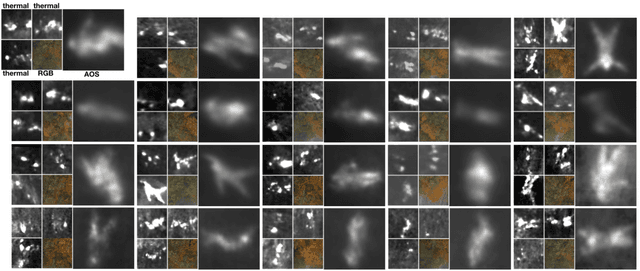

Search and Rescue with Airborne Optical Sectioning

Sep 18, 2020

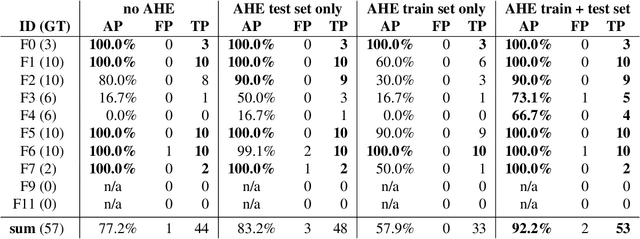

Abstract: We show that automated person detection under occlusion can be significantly improved by combining multi-perspective images before classification. Here, we employed image integration by Airborne Optical Sectioning (AOS), a synthetic aperture imaging technique that uses camera drones to capture unstructured thermal light fields, to achieve this with a precision and recall of 96% and 93%, respectively. Finding lost or injured people in dense forests is generally not feasible with thermal recordings, but becomes practical with the use of AOS integral images. Our findings lay the foundation for effective future search and rescue technologies that can be applied in combination with autonomous or manned aircraft. They can also benefit other fields that currently suffer from inaccurate classification of partially occluded people, animals, or objects.

Fast Automatic Visibility Optimization for Thermal Synthetic Aperture Visualization

May 08, 2020

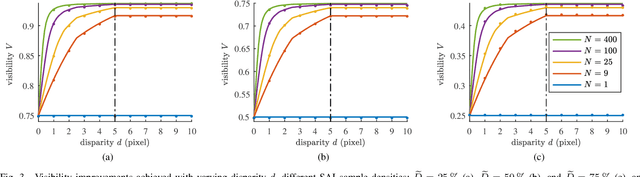

Abstract: In this article, we describe and validate the first fully automatic parameter optimization for thermal synthetic aperture visualization. It replaces the previous manual exploration of the parameter space, which is time-consuming and error-prone. We prove that the visibility of targets in thermal integral images is proportional to the variance of the targets' image. Since this is invariant to occlusion, it represents a suitable objective function for optimization. Our findings have the potential to enable fully autonomous search and rescue operations with camera drones.
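
Using variance as the objective function can be sketched with a plain grid search. The sketch assumes a caller-supplied `render_integral(p)` callback that produces a thermal integral image for one parameter value (for example, a focal-plane distance), and an optional `region` restricting the variance to the target area. Both names are hypothetical. The exhaustive search only stands in for the paper's fast optimizer, which the abstract does not describe.

```python
import numpy as np


def region_variance(image, region=None):
    """Pixel variance of the image, optionally restricted to region = (r0, r1, c0, c1)."""
    if region is not None:
        r0, r1, c0, c1 = region
        image = image[r0:r1, c0:c1]
    return float(np.var(image))


def optimize_parameter(render_integral, candidates, region=None):
    """Return (best_candidate, scores), maximizing variance over the candidates.

    render_integral is a hypothetical callback: parameter value -> integral image.
    Brute-force evaluation of every candidate is used here for clarity only.
    """
    scores = [region_variance(render_integral(p), region) for p in candidates]
    best = int(np.argmax(scores))
    return candidates[best], scores
```

The intuition is that a well-focused target keeps its contrast in the integral image, while a defocused one is smeared and averaged toward a flat value. Maximizing variance therefore favors parameters that make the target most visible.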

A Statistical View on Synthetic Aperture Imaging for Occlusion Removal

Jun 15, 2019

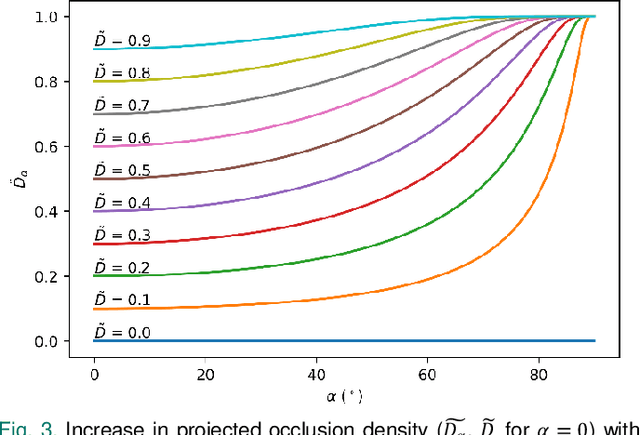

Abstract: Synthetic apertures find applications in many fields, such as radar, radio telescopes, microscopy, sonar, ultrasound, LiDAR, and optical imaging. They approximate the signal of a single hypothetical wide-aperture sensor with either an array of static small-aperture sensors or a single moving small-aperture sensor. The common assumption in synthetic aperture sampling is that a dense sampling pattern within a wide aperture is required to reconstruct a clear signal. In this article, we show that there are practical limits to both synthetic aperture size and number of samples for the application of occlusion removal. This leads to an understanding of how to design synthetic aperture sampling patterns and sensors in an optimal and practically efficient way. We apply our findings to airborne optical sectioning, which uses camera drones and synthetic aperture imaging to computationally remove occluding vegetation or trees for inspecting ground surfaces.
