Daniel Nyga

Joint Probability Trees

Feb 14, 2023

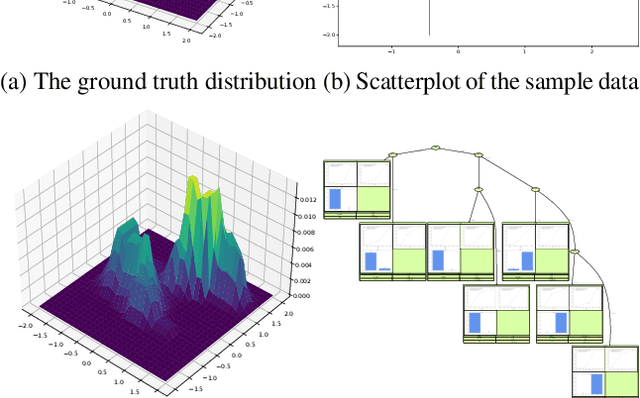

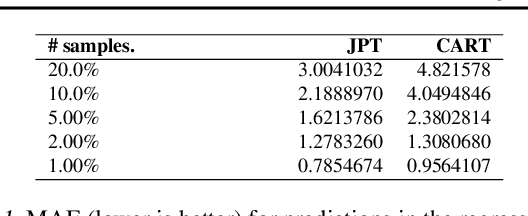

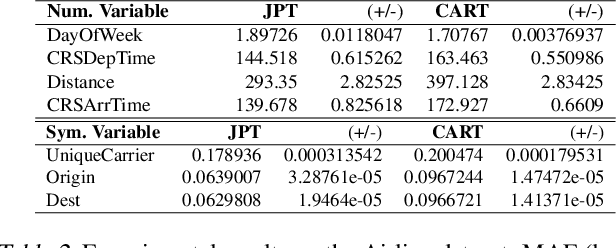

Abstract: We introduce Joint Probability Trees (JPT), a novel approach that makes learning of and reasoning about joint probability distributions tractable for practical applications. JPTs support both symbolic and subsymbolic variables in a single hybrid model, and they do not rely on prior knowledge about variable dependencies or families of distributions. JPT representations build on tree structures that partition the problem space into relevant subregions that are elicited from the training data instead of postulating a rigid dependency model prior to learning. Learning and reasoning scale linearly in JPTs, and the tree structure allows white-box reasoning about any posterior probability $P(Q|E)$, such that interpretable explanations can be provided for any inference result. Our experiments showcase the practical applicability of JPTs in high-dimensional heterogeneous probability spaces with millions of training samples, making them a promising alternative to classic probabilistic graphical models.

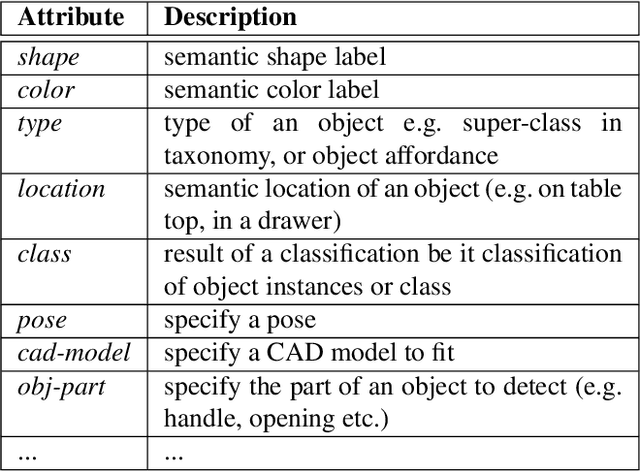

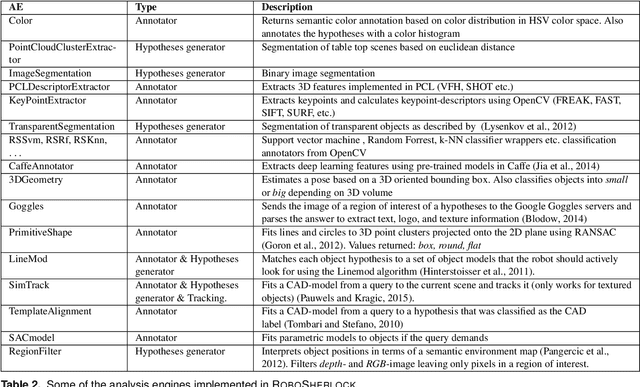

RoboSherlock: Cognition-enabled Robot Perception for Everyday Manipulation Tasks

Nov 22, 2019

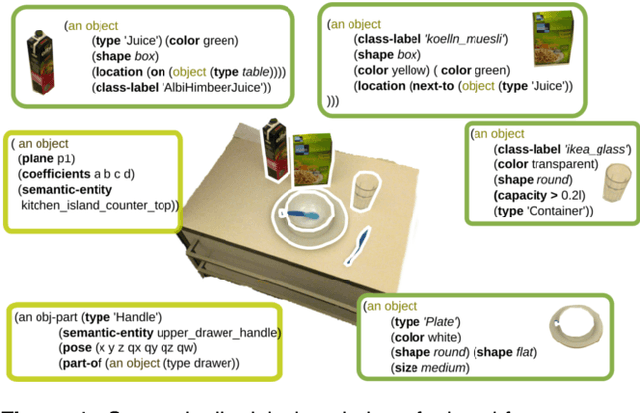

Abstract: A pressing question when designing intelligent autonomous systems is how to integrate the various subsystems concerned with complementary tasks. More specifically, robotic vision must provide task-relevant information about the environment and the objects in it to various planning-related modules. In most implementations of the traditional Perception-Cognition-Action paradigm, these tasks are treated as quasi-independent modules that function as black boxes for each other. It is our view that perception can benefit tremendously from a tight collaboration with cognition. We present RoboSherlock, a knowledge-enabled cognitive perception system for mobile robots performing human-scale everyday manipulation tasks. In RoboSherlock, perception and interpretation of realistic scenes are formulated as an unstructured information management (UIM) problem. The application of the UIM principle supports the implementation of perception systems that can answer task-relevant queries about objects in a scene, boost object recognition performance by combining the strengths of multiple perception algorithms, support knowledge-enabled reasoning about objects, and enable automatic and knowledge-driven generation of processing pipelines. We demonstrate the potential of the proposed framework through feasibility studies of systems for real-world scene perception that have been built on top of the framework.

Reasoning about Unmodelled Concepts - Incorporating Class Taxonomies in Probabilistic Relational Models

Apr 21, 2015

Abstract: A key problem in the application of first-order probabilistic methods is the enormous size of the graphical models they imply. The size results from the possible worlds that can be generated by a domain of objects and relations. One of the reasons for this explosion is that existing approaches do not sufficiently exploit the structure and similarity of possible worlds in order to encode the models more compactly. We propose fuzzy inference in Markov logic networks, which enables the use of taxonomic knowledge as a means of imposing structure onto possible worlds. We show that by exploiting this structure, probability distributions can be represented more compactly and that the reasoning systems become capable of reasoning about concepts not contained in the probabilistic knowledge base.
