Get our free extension to see links to code for papers anywhere online!Free add-on: code for papers everywhere!Free add-on: See code for papers anywhere!

Damián A. Furman

RoBERTuito: a pre-trained language model for social media text in Spanish

Nov 18, 2021Figures and Tables:

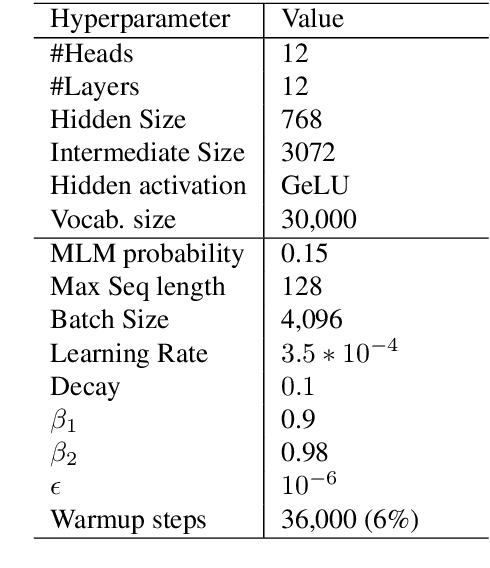

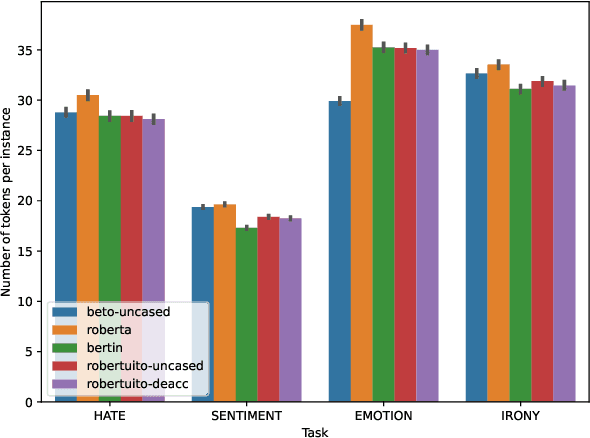

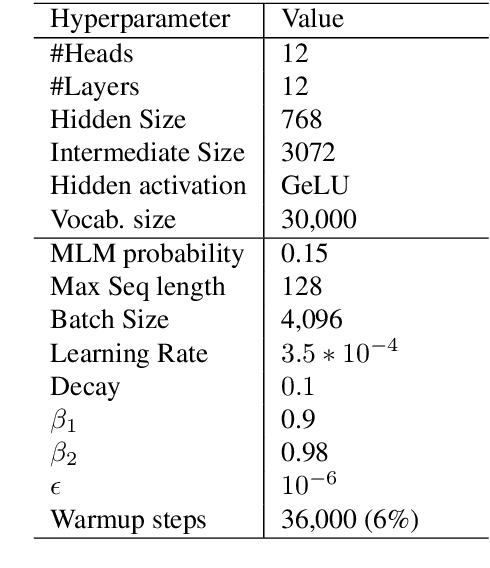

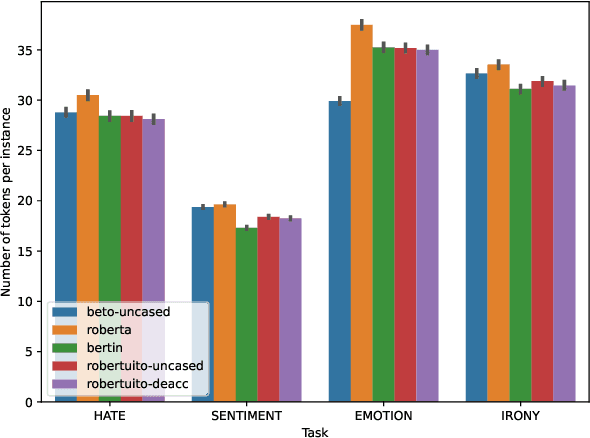

Abstract:Since BERT appeared, Transformer language models and transfer learning have become state-of-the-art for Natural Language Understanding tasks. Recently, some works geared towards pre-training, specially-crafted models for particular domains, such as scientific papers, medical documents, and others. In this work, we present RoBERTuito, a pre-trained language model for user-generated content in Spanish. We trained RoBERTuito on 500 million tweets in Spanish. Experiments on a benchmark of 4 tasks involving user-generated text showed that RoBERTuito outperformed other pre-trained language models for Spanish. In order to help further research, we make RoBERTuito publicly available at the HuggingFace model hub.

* 4 pages, 2 figures

Via

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge