Daehun Nyang

ML-based IoT Malware Detection Under Adversarial Settings: A Systematic Evaluation

Aug 30, 2021

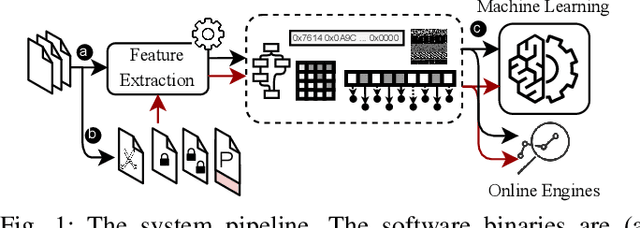

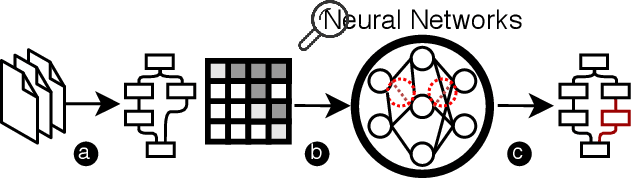

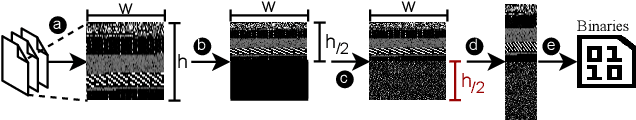

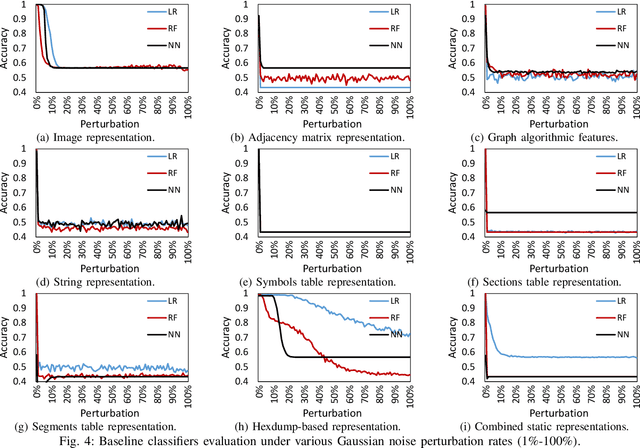

Abstract:The rapid growth of the Internet of Things (IoT) devices is paralleled by them being on the front-line of malicious attacks. This has led to an explosion in the number of IoT malware, with continued mutations, evolution, and sophistication. These malicious software are detected using machine learning (ML) algorithms alongside the traditional signature-based methods. Although ML-based detectors improve the detection performance, they are susceptible to malware evolution and sophistication, making them limited to the patterns that they have been trained upon. This continuous trend motivates the large body of literature on malware analysis and detection research, with many systems emerging constantly, and outperforming their predecessors. In this work, we systematically examine the state-of-the-art malware detection approaches, that utilize various representation and learning techniques, under a range of adversarial settings. Our analyses highlight the instability of the proposed detectors in learning patterns that distinguish the benign from the malicious software. The results exhibit that software mutations with functionality-preserving operations, such as stripping and padding, significantly deteriorate the accuracy of such detectors. Additionally, our analysis of the industry-standard malware detectors shows their instability to the malware mutations.

Hate, Obscenity, and Insults: Measuring the Exposure of Children to Inappropriate Comments in YouTube

Mar 03, 2021

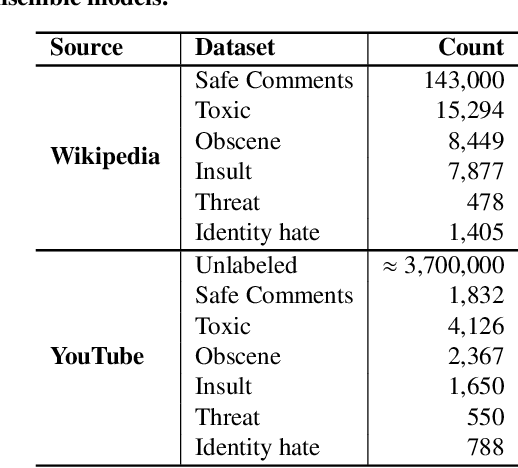

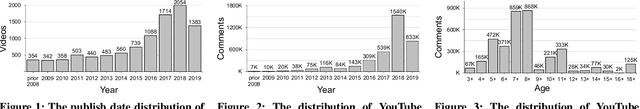

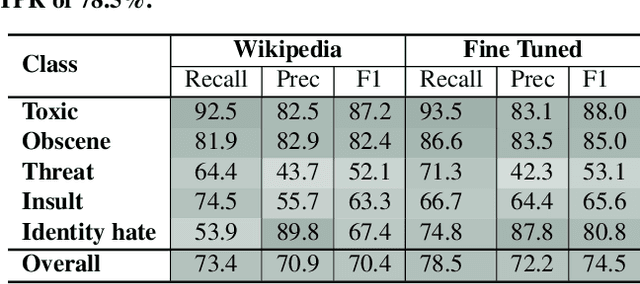

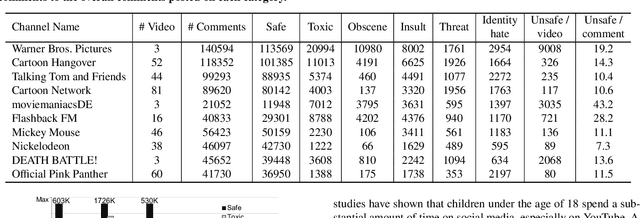

Abstract:Social media has become an essential part of the daily routines of children and adolescents. Moreover, enormous efforts have been made to ensure the psychological and emotional well-being of young users as well as their safety when interacting with various social media platforms. In this paper, we investigate the exposure of those users to inappropriate comments posted on YouTube videos targeting this demographic. We collected a large-scale dataset of approximately four million records and studied the presence of five age-inappropriate categories and the amount of exposure to each category. Using natural language processing and machine learning techniques, we constructed ensemble classifiers that achieved high accuracy in detecting inappropriate comments. Our results show a large percentage of worrisome comments with inappropriate content: we found 11% of the comments on children's videos to be toxic, highlighting the importance of monitoring comments, particularly on children's platforms.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge