Craig Gin

Deep Learning Models for Global Coordinate Transformations that Linearize PDEs

Nov 07, 2019

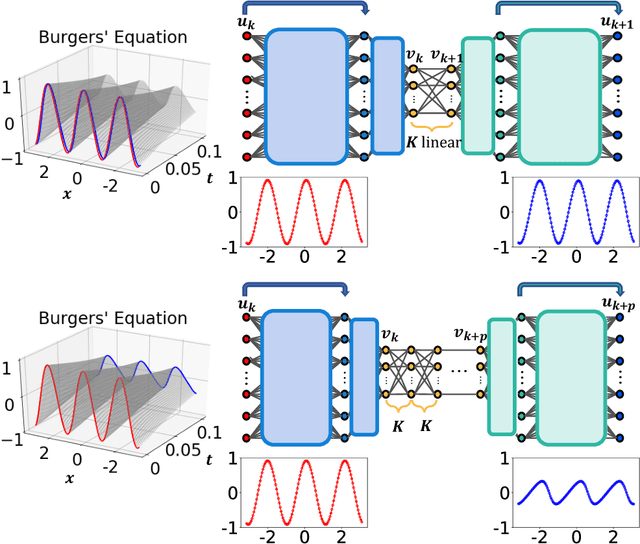

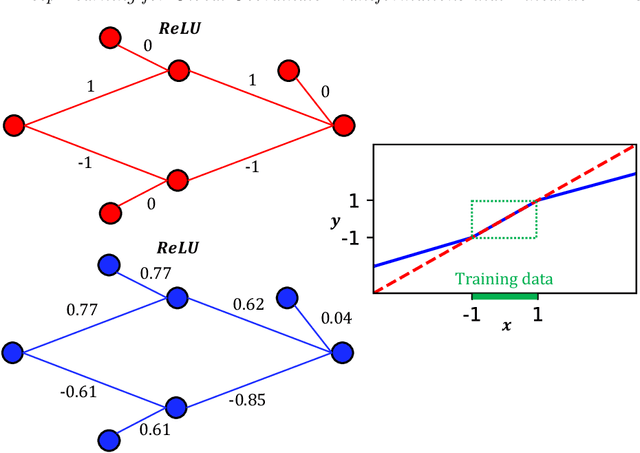

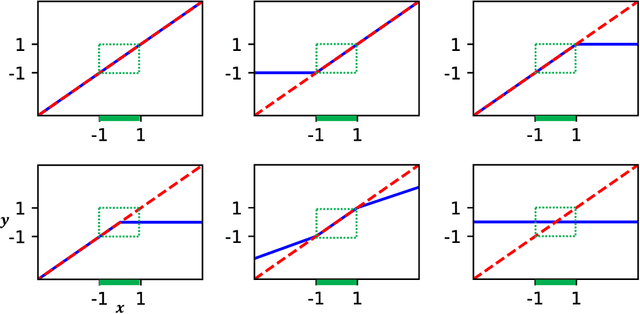

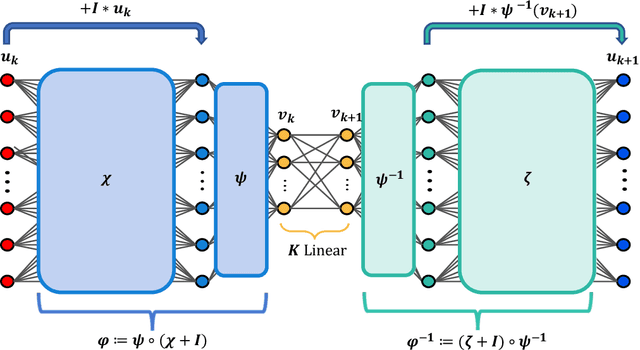

Abstract:We develop a deep autoencoder architecture that can be used to find a coordinate transformation which turns a nonlinear PDE into a linear PDE. Our architecture is motivated by the linearizing transformations provided by the Cole-Hopf transform for Burgers equation and the inverse scattering transform for completely integrable PDEs. By leveraging a residual network architecture, a near-identity transformation can be exploited to encode intrinsic coordinates in which the dynamics are linear. The resulting dynamics are given by a Koopman operator matrix $\mathbf{K}$. The decoder allows us to transform back to the original coordinates as well. Multiple time step prediction can be performed by repeated multiplication by the matrix $\mathbf{K}$ in the intrinsic coordinates. We demonstrate our method on a number of examples, including the heat equation and Burgers equation, as well as the substantially more challenging Kuramoto-Sivashinsky equation, showing that our method provides a robust architecture for discovering interpretable, linearizing transforms for nonlinear PDEs.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge