Ciprian Paduraru

A Trace-Based Assurance Framework for Agentic AI Orchestration: Contracts, Testing, and Governance

Mar 18, 2026

Abstract: In Agentic AI, Large Language Models (LLMs) are increasingly used in the orchestration layer to coordinate multiple agents and to interact with external services, retrieval components, and shared memory. In this setting, failures are not limited to incorrect final outputs. They also arise from long-horizon interaction, stochastic decisions, and external side effects (such as API calls, database writes, and message sends). Common failures include non-termination, role drift, propagation of unsupported claims, and attacks via untrusted context or external channels. This paper presents an assurance framework for such Agentic AI systems. Executions are instrumented as Message-Action Traces (MAT) with explicit step and trace contracts. Contracts provide machine-checkable verdicts, localize the first violating step, and support deterministic replay. The framework includes stress testing, formulated as a budgeted counterexample search over bounded perturbations. It also supports structured fault injection at service, retrieval, and memory boundaries to assess containment under realistic operational faults and degraded conditions. Finally, governance is treated as a runtime component, enforcing per-agent capability limits and action mediation (allow, rewrite, block) at the language-to-action boundary. To support comparative evaluations across stochastic seeds, models, and orchestration configurations, the paper defines trace-based metrics for task success, termination reliability, contract compliance, factuality indicators, containment rate, and governance outcome distributions. More broadly, the framework is intended as a common abstraction to support testing and evaluation of multi-agent LLM systems, and to facilitate reproducible comparison across orchestration designs and configurations.
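The abstract does not give the MAT schema or the contract language, so the following is only a hypothetical sketch of the idea. It assumes a trace is an ordered list of steps, a step contract is a per-step predicate, a trace contract is a whole-trace predicate, and governance is a per-agent capability set plus a rewrite table. `Step`, `Verdict`, `check_trace`, `mediate`, and `REWRITES` are invented names, not the paper's API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Set, Tuple


@dataclass
class Step:
    """One hypothetical MAT entry: who acted, what was said, which side effect (if any) was requested."""
    index: int
    agent: str
    message: str
    action: Optional[str] = None  # e.g. "db_read", "send_email"; None for message-only steps


@dataclass
class Verdict:
    passed: bool
    first_violation: Optional[int] = None  # index of the earliest step that broke a step contract
    violated: List[str] = field(default_factory=list)


StepContract = Callable[[Step], bool]
TraceContract = Callable[[List[Step]], bool]


def check_trace(trace: List[Step],
                step_contracts: Dict[str, StepContract],
                trace_contracts: Dict[str, TraceContract]) -> Verdict:
    violated: List[str] = []
    first: Optional[int] = None
    # Steps are checked in execution order, so the first recorded failure is the earliest one.
    for step in trace:
        for name, contract in step_contracts.items():
            if not contract(step):
                violated.append(f"{name}@{step.index}")
                if first is None:
                    first = step.index
    # Whole-trace properties, such as bounded termination.
    for name, contract in trace_contracts.items():
        if not contract(trace):
            violated.append(name)
    return Verdict(passed=not violated, first_violation=first, violated=violated)


# Hypothetical rewrite table: a risky action may be downgraded to a safer variant.
REWRITES = {"send_email": "draft_email", "db_write": "db_write_dry_run"}


def mediate(agent: str, action: str,
            capabilities: Dict[str, Set[str]]) -> Tuple[str, Optional[str]]:
    """Allow actions within the agent's capabilities, rewrite if a permitted safer variant exists, else block."""
    allowed = capabilities.get(agent, set())
    if action in allowed:
        return ("allow", action)
    safer = REWRITES.get(action)
    if safer is not None and safer in allowed:
        return ("rewrite", safer)
    return ("block", None)


# Usage on a toy trace (illustrative data only).
step_contracts = {"planner_no_actions": lambda s: s.agent != "planner" or s.action is None}
trace_contracts = {"terminates_within_10": lambda t: len(t) <= 10 and t[-1].message == "FINAL"}
trace = [
    Step(0, "planner", "plan: look up the order"),
    Step(1, "worker", "querying orders", action="db_read"),
    Step(2, "planner", "also notify the customer", action="send_email"),  # role drift
    Step(3, "worker", "FINAL"),
]
verdict = check_trace(trace, step_contracts, trace_contracts)
caps = {"worker": {"db_read", "draft_email"}, "planner": set()}
```

On this toy trace, `check_trace` reports step 2 as the first violation (the planner requested a side effect), while the termination contract passes. `mediate` rewrites the worker's `send_email` into the permitted `draft_email`. Keeping contracts as named predicates makes each verdict machine-checkable and easy to report per contract.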

Stochastic Proximal Gradient Algorithm with Minibatches. Application to Large Scale Learning Models

Mar 30, 2020

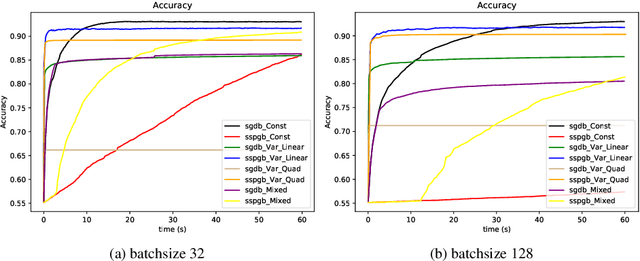

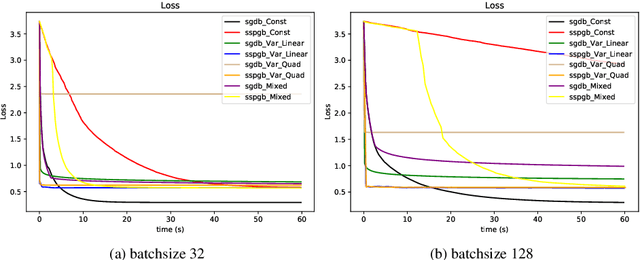

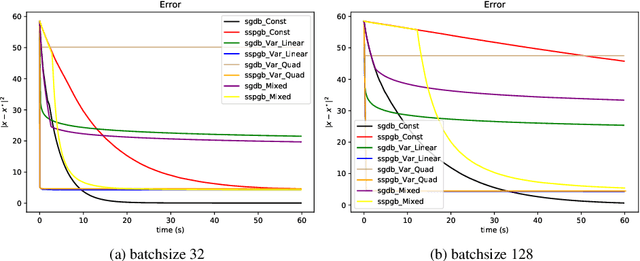

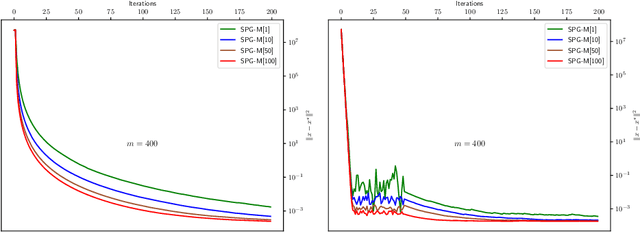

Abstract: Stochastic optimization lies at the core of most statistical learning models. Recent developments in stochastic algorithmic tools have focused largely on proximal gradient iterations, seeking efficient approaches for nonsmooth (composite) population risk functions. The complexity of finding optimal predictors by minimizing regularized risk is largely understood for simple regularizers such as $\ell_1/\ell_2$ norms. However, more complex properties desired of the predictor require considerably harder regularizers, such as those used in group lasso or graph trend filtering. In this chapter we develop and analyze minibatch variants of the stochastic proximal gradient algorithm for general composite objective functions with stochastic nonsmooth components. We provide iteration complexity bounds for constant and variable stepsize policies, showing that, for minibatch size $N$, $\epsilon$-suboptimality in the expected quadratic distance to the optimal solution is attained after $\mathcal{O}(\frac{1}{N\epsilon})$ iterations. Numerical tests on $\ell_2$-regularized SVMs and parametric sparse representation problems confirm the theoretical behaviour and show that the method outperforms minibatch SGD.
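The abstract does not spell out the update rule. The sketch below assumes the standard minibatch proximal gradient step: average the sampled gradients of the smooth loss, take a gradient step, then apply the proximal operator of the regularizer. It uses a hypothetical lasso problem whose deterministic $\ell_1$ regularizer has soft thresholding as its prox. The paper's setting, with stochastic nonsmooth components, is more general than this. The names `minibatch_prox_sgd`, `soft_threshold`, and `objective`, as well as the data, stepsize, minibatch size, and iteration count, are illustrative assumptions.

```python
import math
import random


def soft_threshold(v, t):
    """Proximal operator of t * ||.||_1, applied coordinate-wise."""
    return [math.copysign(max(abs(x) - t, 0.0), x) for x in v]


def minibatch_prox_sgd(A, b, lam, step, batch, iters, seed=0):
    """Sketch of minibatch proximal SGD for 0.5*mean((a_i^T x - b_i)^2) + lam*||x||_1 (constant stepsize)."""
    rng = random.Random(seed)
    m, d = len(A), len(A[0])
    x = [0.0] * d
    for _ in range(iters):
        idx = rng.sample(range(m), batch)  # minibatch of size N = batch, drawn without replacement
        # Average the per-sample gradients of f_i(x) = 0.5 * (a_i^T x - b_i)^2.
        g = [0.0] * d
        for i in idx:
            r = sum(a * xj for a, xj in zip(A[i], x)) - b[i]
            for j in range(d):
                g[j] += r * A[i][j] / batch
        # Gradient step on the smooth part, then prox step on lam * ||x||_1.
        x = soft_threshold([xj - step * gj for xj, gj in zip(x, g)], step * lam)
    return x


def objective(A, b, x, lam):
    """Full regularized empirical risk, used only to monitor progress."""
    m = len(A)
    loss = sum((sum(a * xj for a, xj in zip(row, x)) - bi) ** 2 for row, bi in zip(A, b)) / (2 * m)
    return loss + lam * sum(abs(xj) for xj in x)


# Synthetic noise-free sparse regression problem (illustrative data, not from the paper).
rng = random.Random(1)
x_true = [1.0, -2.0, 0.0, 0.0, 0.5]
A = [[rng.gauss(0, 1) for _ in x_true] for _ in range(200)]
b = [sum(a * xj for a, xj in zip(row, x_true)) for row in A]

lam = 0.01
x_hat = minibatch_prox_sgd(A, b, lam=lam, step=0.02, batch=8, iters=2000)
```

A constant stepsize keeps the sketch short; the abstract also covers variable stepsize policies, which would replace `step` with a per-iteration schedule.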

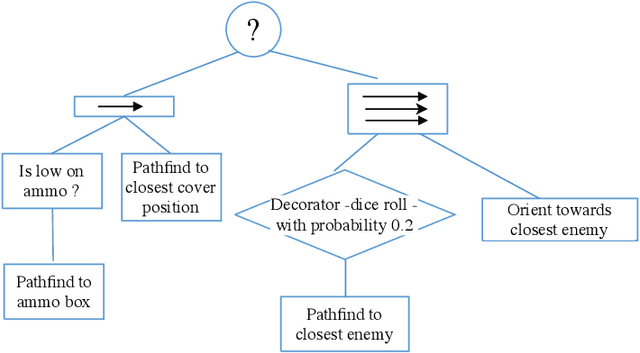

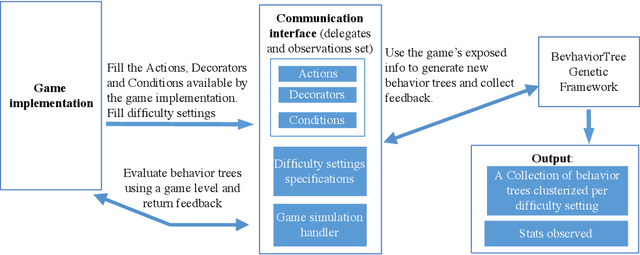

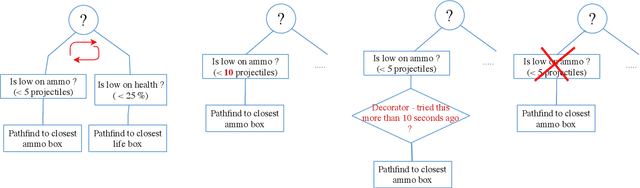

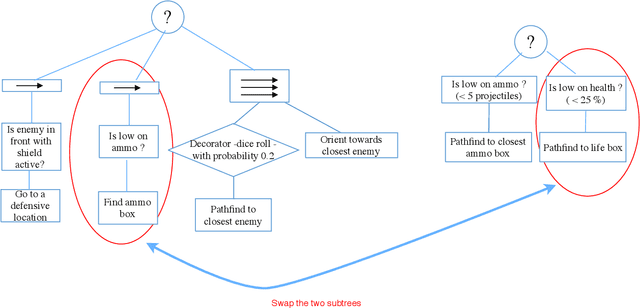

Automatic difficulty management and testing in games using a framework based on behavior trees and genetic algorithms

Sep 10, 2019

Abstract: The diversity of agent behaviors is an important factor in the quality of video games and of virtual environments in general. Offering the most compelling experience to users with different skill levels is difficult and usually requires substantial manual effort to tune existing code, a task that becomes even harder with adaptive difficulty systems. The main purpose of our paper is to create a framework that automatically generates sufficiently diverse behaviors for game agents across different difficulty classes. A second, parallel purpose is to create more automated tests that reveal defects in the source code or possible logic exploits with less human effort.
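The abstract does not describe the framework's internals. The following is a hypothetical toy that only illustrates how behavior trees and a genetic algorithm can combine. A two-gene genome (`flee_hp`, `attack_range`) parameterizes a small behavior tree, a toy duel against a scripted player measures a "difficulty" score (damage dealt), and a simple GA with truncation selection and elitism evolves genomes toward a target score for each difficulty class. The duel rules, the score, the GA settings, and all names are illustrative assumptions, not the paper's method.

```python
import random


# Minimal behavior tree: each node is a callable that returns True (success) or False (failure).
def sequence(*children):
    return lambda s: all(c(s) for c in children)  # all() short-circuits like a Sequence node


def selector(*children):
    return lambda s: any(c(s) for c in children)  # any() short-circuits like a Selector node


def condition(pred):
    return lambda s: pred(s)


def action(fn):
    def run(s):
        fn(s)
        return True
    return run


def approach(s): s["dist"] = max(0, s["dist"] - 1)
def flee(s): s["dist"] += 1
def attack(s): s["dealt"] += 2 if s["dist"] <= 1 else 1  # melee hits harder than ranged hits


def build_tree(genome):
    flee_hp, attack_range = genome
    return selector(
        sequence(condition(lambda s: s["hp"] < flee_hp), action(flee)),
        sequence(condition(lambda s: s["dist"] <= attack_range), action(attack)),
        action(approach),
    )


def simulate(genome, ticks=30):
    """Toy duel: returns damage dealt to a scripted player, used here as the difficulty score."""
    s = {"hp": 10, "dist": 8, "dealt": 0}
    tree = build_tree(genome)
    for _ in range(ticks):
        tree(s)
        if s["dist"] <= 2:  # the scripted player hits back at close range
            s["hp"] -= 1
        if s["hp"] <= 0:
            break
    return s["dealt"]


def evolve(target, pop_size=20, generations=30, seed=0):
    """GA with truncation selection and elitism; fitness is closeness of the score to the target."""
    rng = random.Random(seed)

    def fitness(g):
        return -abs(simulate(g) - target)

    def mutate(g):
        f, r = g
        if rng.random() < 0.5:
            f = min(10, max(0, f + rng.choice((-1, 1))))
        else:
            r = min(6, max(0, r + rng.choice((-1, 1))))
        return (f, r)

    pop = [(rng.randint(0, 10), rng.randint(0, 6)) for _ in range(pop_size)]
    for _ in range(generations):
        elite = sorted(pop, key=fitness, reverse=True)[: pop_size // 4]
        children = []
        while len(elite) + len(children) < pop_size:
            a, b = rng.sample(elite, 2)
            children.append(mutate((a[0], b[1])))  # crossover: flee gene from a, range gene from b
        pop = elite + children
    return max(pop, key=fitness)


easy = evolve(target=5)
hard = evolve(target=25)
```

Each difficulty class is just a different target score, so the same search produces one behavior per class. Replays of evolved genomes could also be used as automated test scenarios.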
