Christopher Lazarus

Deep Binary Reinforcement Learning for Scalable Verification

Mar 11, 2022

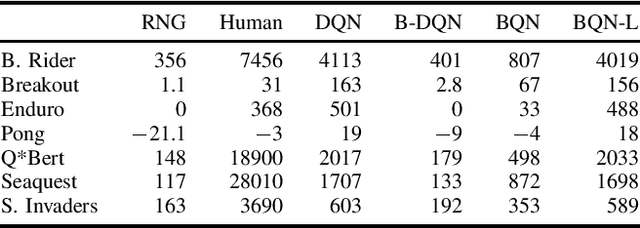

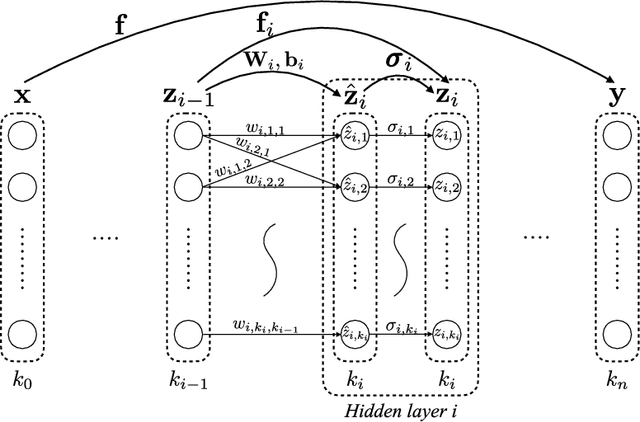

Abstract: The use of neural networks as function approximators has enabled many advances in reinforcement learning (RL). The generalization power of neural networks combined with advances in RL algorithms has reignited the field of artificial intelligence. Despite their power, neural networks are considered black boxes, and their use in safety-critical settings remains a challenge. Recently, neural network verification has emerged as a way to certify safety properties of networks. Verification is a hard problem, and it is difficult to scale to large networks such as the ones used in deep reinforcement learning. We provide an approach to train RL policies that are more easily verifiable. We use binarized neural networks (BNNs), a type of network with mostly binary parameters. We present an RL algorithm tailored specifically for BNNs. After training BNNs for the Atari environments, we verify robustness properties.
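To make the notion of a binarized network concrete, the following is a minimal sketch of a single binarized dense layer, not the architecture used in the paper. It assumes weights and activations in {-1, +1}, integer biases, and the convention that a pre-activation of zero maps to +1; the names `sign` and `bnn_layer` are illustrative.

```python
def sign(v):
    """Binarize a value to {-1, +1}; zero maps to +1 (an assumed convention)."""
    return 1 if v >= 0 else -1


def bnn_layer(weights, bias, x):
    """Forward pass of one binarized dense layer.

    weights: list of rows with entries in {-1, +1}
    bias:    list of integers, one per output unit
    x:       input vector with entries in {-1, +1}
    """
    return [
        sign(sum(w * xi for w, xi in zip(row, x)) + b)
        for row, b in zip(weights, bias)
    ]


# Toy layer with three inputs and two outputs (values chosen for illustration).
W = [[1, -1, 1], [-1, -1, 1]]
b = [0, -1]
out = bnn_layer(W, b, [1, 1, 1])
print(out)
```

Because every pre-activation is a sum of integers, a solver can reason about such a layer with discrete variables only, which is one intuition for why BNNs may be easier to verify.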

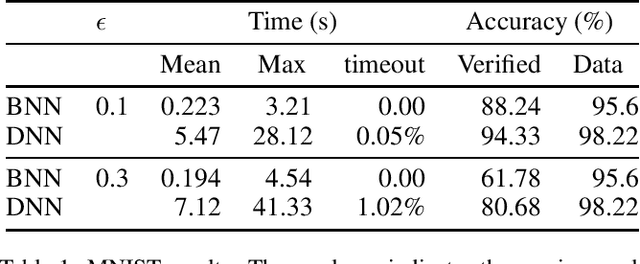

A Mixed Integer Programming Approach for Verifying Properties of Binarized Neural Networks

Mar 11, 2022

Abstract: Many approaches for verifying input-output properties of neural networks have been proposed recently. However, existing algorithms do not scale well to large networks. Recent work in the field of model compression studied binarized neural networks (BNNs), whose parameters and activations are binary. BNNs tend to exhibit a slight decrease in performance compared to their full-precision counterparts, but they can be easier to verify. This paper proposes a simple mixed integer programming formulation for BNN verification that leverages network structure. We demonstrate our approach by verifying properties of BNNs trained on the MNIST dataset and an aircraft collision avoidance controller. We compare the runtime of our approach against state-of-the-art verification algorithms for full-precision neural networks. The results suggest that the difficulty of training BNNs might be worth the reduction in runtime achieved by our verification algorithm.
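As an illustration of how a sign activation can be expressed with mixed integer constraints, here is a generic big-M encoding of one binarized neuron. It is a textbook-style sketch, not necessarily the formulation proposed in the paper. It assumes inputs $x_j \in \{-1, +1\}$, fixed weights $w_j \in \{-1, +1\}$, an integer bias $b$, and the convention $\operatorname{sign}(0) = +1$; the symbols $a$, $z$, $y$, and $M$ are introduced here for illustration.

$$
\begin{aligned}
a &= \sum_{j=1}^{n} w_j x_j + b, \\
a &\ge -M\,(1 - z), \\
a &\le -1 + (M + 1)\,z, \\
y &= 2z - 1, \qquad z \in \{0, 1\}, \qquad M \ge n + |b|.
\end{aligned}
$$

Since $a$ is an integer, $z = 1$ forces $a \ge 0$ and $z = 0$ forces $a \le -1$, so $y = \operatorname{sign}(a)$ in every feasible solution. Stacking these constraints layer by layer and adding constraints for the negation of the property yields a feasibility problem whose infeasibility certifies the property.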

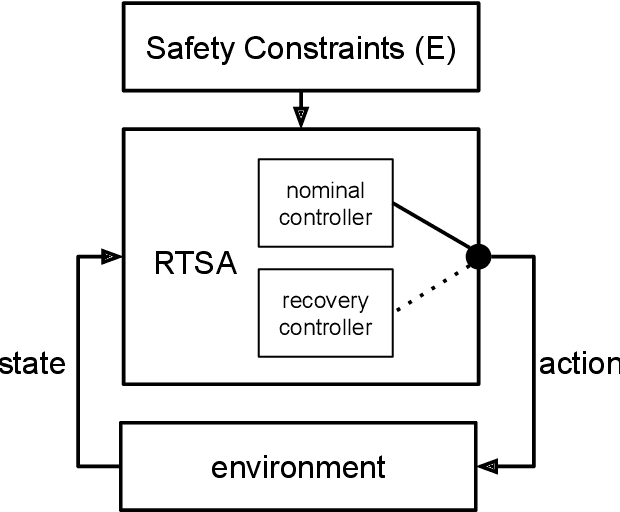

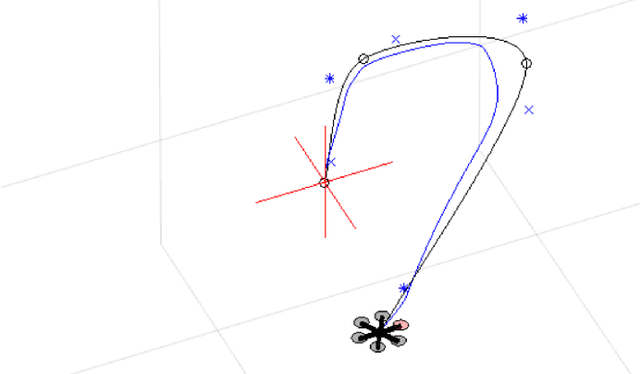

Runtime Safety Assurance Using Reinforcement Learning

Oct 20, 2020

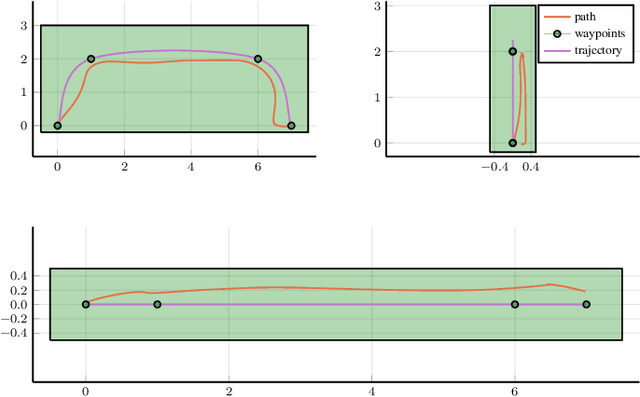

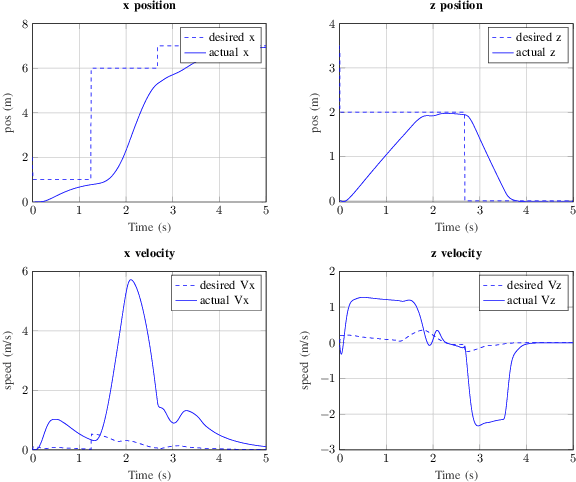

Abstract: The airworthiness and safety of a non-pedigreed autopilot must be verified, but the cost to formally do so can be prohibitive. We can bypass formal verification of non-pedigreed components by incorporating Runtime Safety Assurance (RTSA) as a mechanism to ensure safety. RTSA consists of a meta-controller that observes the inputs and outputs of a non-pedigreed component and verifies formally specified behavior as the system operates. When the system is triggered, a verified recovery controller is deployed. Recovery controllers are designed to be safe but very likely disruptive to the operational objective of the system, and thus RTSA systems must balance safety and efficiency. The objective of this paper is to design a meta-controller capable of identifying unsafe situations with high accuracy. The high-dimensional and non-linear dynamics in which modern controllers are deployed, along with the black-box nature of the nominal controllers, make this a difficult problem. Current approaches rely heavily on domain expertise and human engineering. We frame the design of RTSA with the Markov decision process (MDP) framework and use reinforcement learning (RL) to solve it. Our learned meta-controller consistently exhibits superior performance in our experiments compared to our baseline, human-engineered approach.
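The switching logic described in this abstract can be sketched as follows. This is a toy illustration under stated assumptions, not the paper's learned meta-controller: the hand-written threshold `is_unsafe` stands in for the RL-trained trigger, the one-dimensional altitude dynamics are invented for the example, and latching the recovery controller once triggered is an assumption.

```python
def rtsa_step(state, nominal, recovery, is_unsafe, triggered):
    """Choose an action for one time step.

    Returns (action, triggered). Once the meta-controller triggers,
    the recovery controller stays engaged (an assumed latching policy).
    """
    if not triggered and is_unsafe(state):
        triggered = True
    controller = recovery if triggered else nominal
    return controller(state), triggered


# Hypothetical toy system: the state is an altitude, actions change it by +/-1.
nominal = lambda altitude: -1          # unverified controller keeps descending
recovery = lambda altitude: +1         # verified controller always climbs
is_unsafe = lambda altitude: altitude < 3  # stand-in for the learned trigger

altitude, triggered, actions = 5, False, []
for _ in range(5):
    action, triggered = rtsa_step(altitude, nominal, recovery, is_unsafe, triggered)
    actions.append(action)
    altitude += action

print(actions, altitude)
```

Triggering earlier makes the system safer but interrupts the nominal controller more often, which is the safety versus efficiency trade-off the abstract describes.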

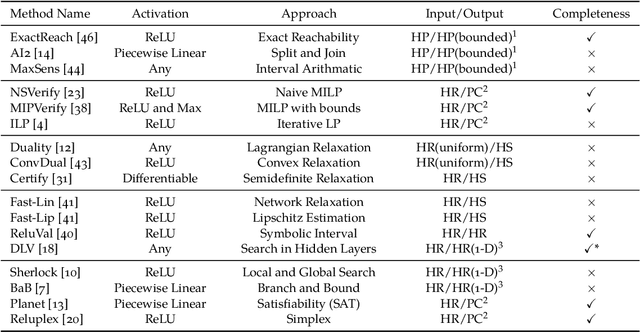

Algorithms for Verifying Deep Neural Networks

Mar 15, 2019

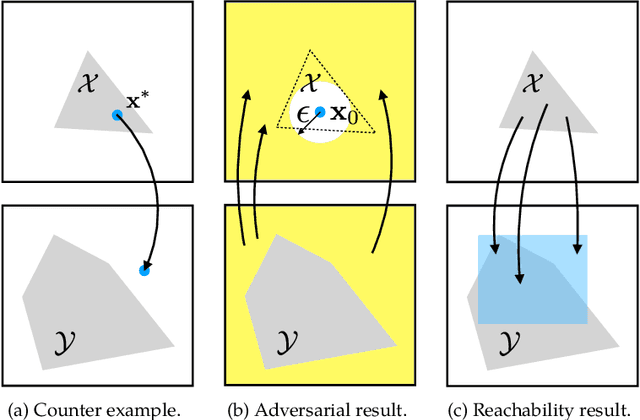

Abstract: Deep neural networks are widely used for nonlinear function approximation with applications ranging from computer vision to control. Although these networks involve the composition of simple arithmetic operations, it can be very challenging to verify whether a particular network satisfies certain input-output properties. This article surveys methods that have emerged recently for soundly verifying such properties. These methods borrow insights from reachability analysis, optimization, and search. We discuss fundamental differences and connections between existing algorithms. In addition, we provide pedagogical implementations of existing methods and compare them on a set of benchmark problems.
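One of the simplest reachability-style checks is interval bound propagation, sketched below for a tiny ReLU network. This is an illustrative example and not one of the survey's own implementations; the weights, the input box, and the function names `affine_bounds` and `relu_bounds` are made up for the example.

```python
def affine_bounds(W, b, lo, hi):
    """Propagate the box [lo, hi] through y = W x + b.

    For the lower bound, positive weights take the input's lower bound and
    negative weights take its upper bound; the upper bound is the mirror case.
    """
    out_lo, out_hi = [], []
    for row, bias in zip(W, b):
        out_lo.append(bias + sum(w * (l if w >= 0 else h) for w, l, h in zip(row, lo, hi)))
        out_hi.append(bias + sum(w * (h if w >= 0 else l) for w, l, h in zip(row, lo, hi)))
    return out_lo, out_hi


def relu_bounds(lo, hi):
    """ReLU is monotone, so it can be applied to each bound directly."""
    return [max(0.0, l) for l in lo], [max(0.0, h) for h in hi]


# Hypothetical two-layer network and input set x in [0, 1]^2.
W1, b1 = [[1, -1], [1, 1]], [0, 0]
W2, b2 = [[1, -1]], [0.5]

lo, hi = affine_bounds(W1, b1, [0, 0], [1, 1])
lo, hi = relu_bounds(lo, hi)
out_lo, out_hi = affine_bounds(W2, b2, lo, hi)

# Property to check: the output never exceeds 2 on the input box.
verified = out_hi[0] <= 2
print(out_lo, out_hi, verified)
```

The computed interval over-approximates the true output range, so a passing check is sound, but a failing check would be inconclusive because the bounds may be loose.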
