Christopher Granade

Bayesian inference via rejection filtering

Dec 03, 2015

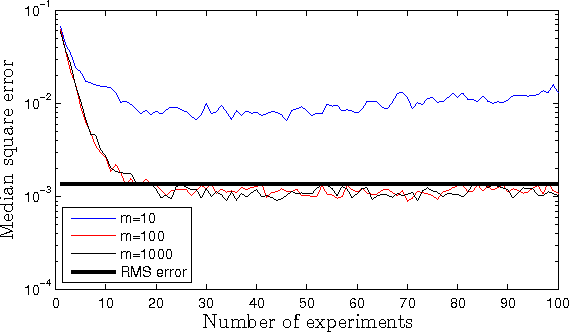

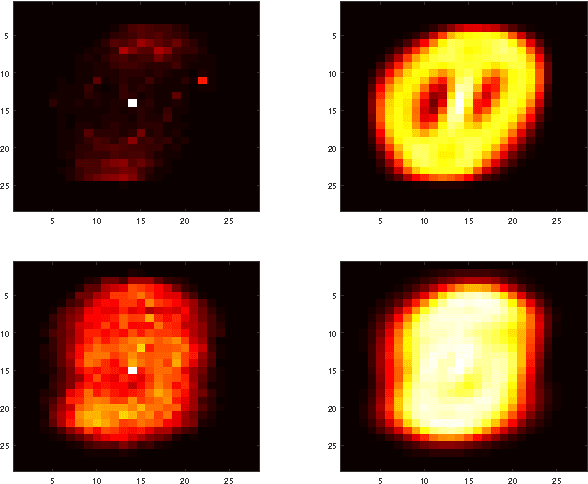

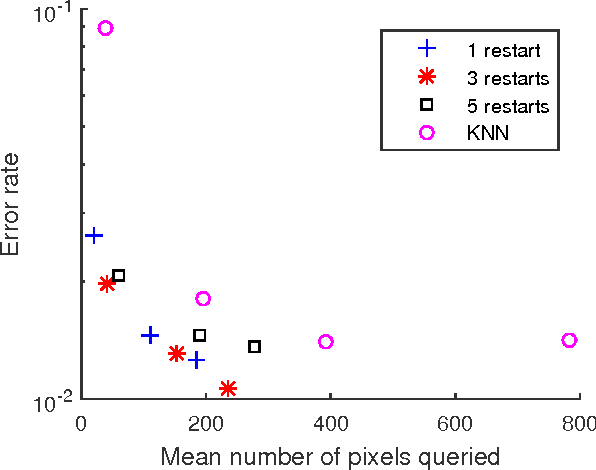

Abstract:We provide a method for approximating Bayesian inference using rejection sampling. We not only make the process efficient, but also dramatically reduce the memory required relative to conventional methods by combining rejection sampling with particle filtering. We also provide an approximate form of rejection sampling that makes rejection filtering tractable in cases where exact rejection sampling is not efficient. Finally, we present several numerical examples of rejection filtering that show its ability to track time dependent parameters in online settings and also benchmark its performance on MNIST classification problems.

Quantum Inspired Training for Boltzmann Machines

Jul 09, 2015

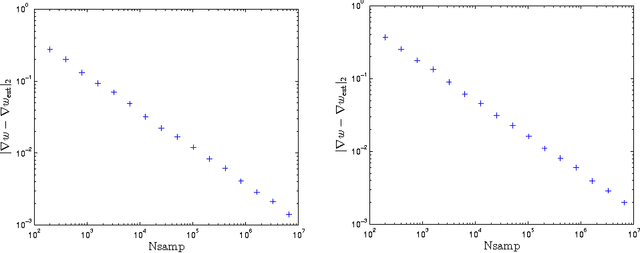

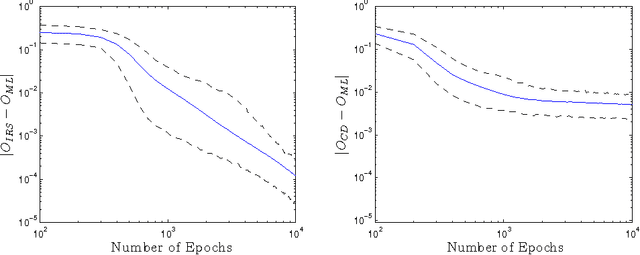

Abstract:We present an efficient classical algorithm for training deep Boltzmann machines (DBMs) that uses rejection sampling in concert with variational approximations to estimate the gradients of the training objective function. Our algorithm is inspired by a recent quantum algorithm for training DBMs. We obtain rigorous bounds on the errors in the approximate gradients; in turn, we find that choosing the instrumental distribution to minimize the alpha=2 divergence with the Gibbs state minimizes the asymptotic algorithmic complexity. Our rejection sampling approach can yield more accurate gradients than low-order contrastive divergence training and the costs incurred in finding increasingly accurate gradients can be easily parallelized. Finally our algorithm can train full Boltzmann machines and scales more favorably with the number of layers in a DBM than greedy contrastive divergence training.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge