Christian Agrell

Gaussian Process Surrogate Models for Efficient Estimation of Structural Response Distributions and Order Statistics

Mar 03, 2025

Abstract: Engineering disciplines often rely on extensive simulations to ensure that structures are designed to withstand harsh conditions while avoiding over-engineering for unlikely scenarios. Assessments such as Serviceability Limit State (SLS) involve evaluating weather events, including estimating loads not expected to be exceeded more than a specified number of times (e.g., 100) throughout the structure's design lifetime. Although physics-based simulations provide robust and detailed insights, they are computationally expensive, making it challenging to generate statistically valid representations of a wide range of weather conditions. To address these challenges, we propose an approach using Gaussian Process (GP) surrogate models trained on a limited set of simulation outputs to directly generate the structural response distribution. We apply this method to an SLS assessment for estimating the order statistic \(Y_{100}\), representing the 100th highest response, of a structure exposed to 25 years of historical weather observations. Our results indicate that the GP surrogate models provide results comparable to full simulations at a fraction of the computational cost.
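The idea of reading an order statistic such as \(Y_{100}\) off a surrogate can be illustrated with a minimal sketch. This is not the paper's implementation: the `simulate` function, the synthetic `weather` inputs, the fixed RBF kernel hyperparameters and the use of the posterior mean alone are all assumptions made for illustration.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=1.0, variance=1.0):
    """Squared-exponential kernel between 1-D input arrays a and b."""
    d = a[:, None] - b[None, :]
    return variance * np.exp(-0.5 * (d / length_scale) ** 2)

def gp_posterior_mean(x_train, y_train, x_query, noise=1e-6):
    """Posterior mean of a zero-mean GP with a fixed RBF kernel."""
    K = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    K_s = rbf_kernel(x_query, x_train)
    return K_s @ np.linalg.solve(K, y_train)

def kth_largest(values, k):
    """Return the k-th largest value (k=1 is the maximum)."""
    return np.partition(values, -k)[-k]

def simulate(x):
    """Hypothetical cheap stand-in for an expensive structural simulation."""
    return np.sin(x) + 0.1 * x

rng = np.random.default_rng(0)
weather = rng.uniform(0.0, 10.0, size=5000)  # synthetic "weather" inputs
x_train = np.linspace(0.0, 10.0, 25)         # small simulation budget
y_train = simulate(x_train)

# Predict the response for every weather state, then take the 100th highest.
y_pred = gp_posterior_mean(x_train, y_train, weather)
y100_surrogate = kth_largest(y_pred, 100)
y100_reference = kth_largest(simulate(weather), 100)
```

Here only 25 calls to `simulate` are needed to approximate the order statistic over 5000 inputs. A fuller treatment would presumably also propagate the GP's predictive uncertainty rather than rely on the mean.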

The Probabilistic Tsetlin Machine: A Novel Approach to Uncertainty Quantification

Oct 23, 2024

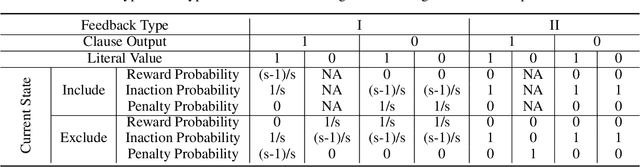

Abstract: Tsetlin Machines (TMs) have emerged as a compelling alternative to conventional deep learning methods, offering notable advantages such as smaller memory footprint, faster inference, fault-tolerant properties, and interpretability. Although various adaptations of TMs have expanded their applicability across diverse domains, a fundamental gap remains in understanding how TMs quantify uncertainty in their predictions. In response, this paper introduces the Probabilistic Tsetlin Machine (PTM) framework, aimed at providing a robust, reliable, and interpretable approach for uncertainty quantification. Unlike the original TM, the PTM learns the probability of staying on each state of each Tsetlin Automaton (TA) across all clauses. These probabilities are updated using the feedback tables that are part of the TM framework: Type I and Type II feedback. During inference, TAs decide their actions by sampling states based on learned probability distributions, akin to Bayesian neural networks when generating weight values. In our experimental analysis, we first illustrate the spread of the probabilities across TA states for the noisy-XOR dataset. Then we evaluate the PTM alongside benchmark models using both simulated and real-world datasets. The experiments on the simulated dataset reveal the PTM's effectiveness in uncertainty quantification, particularly in delineating decision boundaries and identifying regions of high uncertainty. Moreover, when applied to multiclass classification tasks using the Iris dataset, the PTM demonstrates competitive performance in terms of predictive entropy and expected calibration error, showcasing its potential as a reliable tool for uncertainty estimation. Our findings underscore the importance of selecting appropriate models for accurate uncertainty quantification in predictive tasks, with the PTM offering a particularly interpretable and effective solution.
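The two evaluation metrics named in the abstract, predictive entropy and expected calibration error (ECE), can be sketched from their standard definitions. The equal-width binning with `n_bins=10` is an assumption; the paper may use a different scheme, and nothing here reproduces the PTM itself.

```python
import numpy as np

def predictive_entropy(probs):
    """Shannon entropy (in nats) of each row of class probabilities."""
    p = np.clip(probs, 1e-12, 1.0)  # avoid log(0)
    return -np.sum(p * np.log(p), axis=1)

def expected_calibration_error(probs, labels, n_bins=10):
    """ECE with equal-width confidence bins (binning is an assumption)."""
    confidence = probs.max(axis=1)
    correct = (probs.argmax(axis=1) == labels).astype(float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    ece = 0.0
    for lo, hi in zip(edges[:-1], edges[1:]):
        in_bin = (confidence > lo) & (confidence <= hi)
        if in_bin.any():
            # Gap between empirical accuracy and mean confidence in this bin,
            # weighted by the fraction of samples that fall in the bin.
            gap = abs(correct[in_bin].mean() - confidence[in_bin].mean())
            ece += in_bin.mean() * gap
    return ece
```

A model that predicts with full confidence and is right half the time has an ECE of 0.5 under this definition, while a uniform two-class prediction has entropy ln 2.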

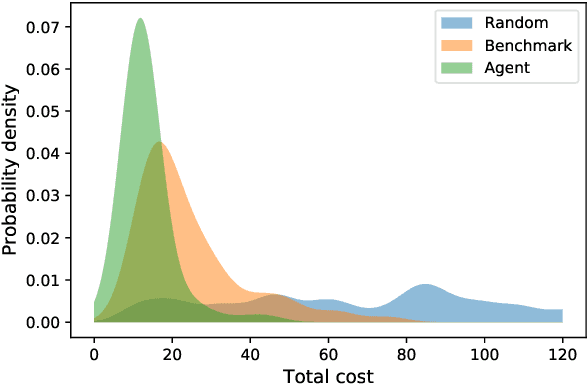

Optimal sequential decision making with probabilistic digital twins

Mar 12, 2021

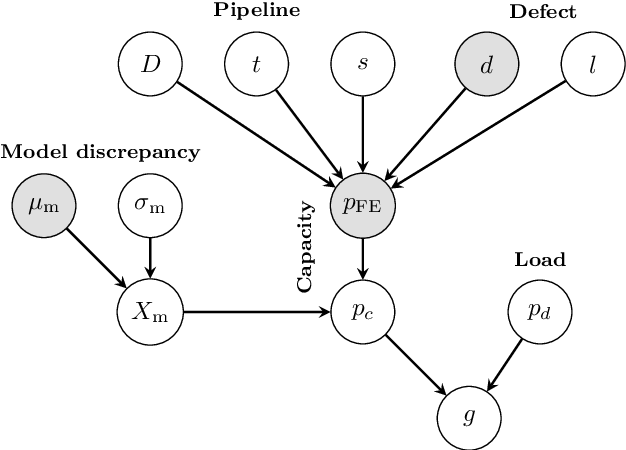

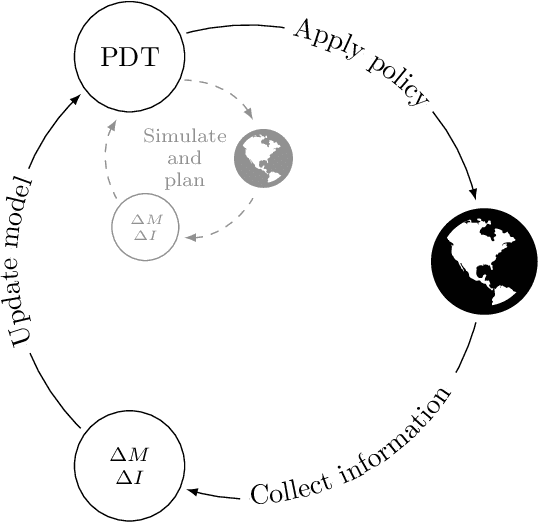

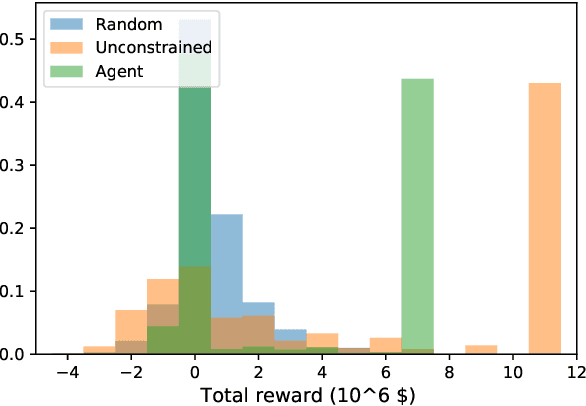

Abstract: Digital twins are emerging in many industries, typically consisting of simulation models and data associated with a specific physical system. One of the main reasons for developing a digital twin is to enable the simulation of possible consequences of a given action, without the need to interfere with the physical system itself. Physical systems of interest, and the environments they operate in, do not always behave deterministically. Moreover, information about the system and its environment is typically incomplete or imperfect. Probabilistic representations of systems and environments may therefore be called for, especially to support decisions in application areas where actions may have severe consequences. In this paper we introduce the probabilistic digital twin (PDT). We start by discussing how epistemic uncertainty can be treated using measure theory, by modelling epistemic information via $\sigma$-algebras. Based on this, we give a formal definition of how epistemic uncertainty can be updated in a PDT. We then study the problem of optimal sequential decision making, that is, the case where the outcome of each decision may inform the next. Within the PDT framework, we formulate this optimization problem. We discuss how this problem may be solved (at least in theory) via the maximum principle method or the dynamic programming principle. However, due to the curse of dimensionality, these methods are often not tractable in practice. To address this, we propose a generic approximate solution using deep reinforcement learning together with neural networks defined on sets. We illustrate the method on a practical problem, considering optimal information gathering for the estimation of a failure probability.

Gaussian processes with linear operator inequality constraints

Jan 10, 2019

Abstract: This paper presents an approach for constrained Gaussian Process (GP) regression where we assume that a set of linear transformations of the process are bounded. It is motivated by machine learning applications for high-consequence engineering systems, where this kind of information is often made available from phenomenological knowledge, and the resulting constraints may be essential to achieve the level of confidence needed. We consider a GP $f$ over functions on $\mathcal{X} \subset \mathbb{R}^{n}$ taking values in $\mathbb{R}$, where the process $\mathcal{L}f$ is still Gaussian when $\mathcal{L}$ is a linear operator. Our goal is to model $f$ under the constraint that realizations of $\mathcal{L}f$ are confined to a convex set of functions. In particular, we require that $a \leq \mathcal{L}f \leq b$ given two functions $a$ and $b$ where $a < b$ pointwise. This formulation provides a consistent way of encoding multiple linear constraints, such as shape constraints based on, e.g., boundedness, monotonicity, or convexity. We adopt the approach of using a sufficiently dense set of virtual observation locations where the constraint is required to hold, and derive the exact posterior for a conjugate likelihood. The results needed for stable numerical implementation are derived, together with an efficient sampling scheme for estimating the posterior process which is exact in the limit. A few numerical examples focusing on noiseless observations are given. This is relevant for computer code emulation and is also more computationally demanding than the alternative scenario with i.i.d. Gaussian noise.
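As a reading aid, the virtual-observation idea can be written out as follows. The symbols $x^{v}_{1}, \dots, x^{v}_{S}$ for the virtual observation locations and $S$ for their number are notation assumed here, not taken from the abstract. The functional constraint $a \leq \mathcal{L}f \leq b$ is replaced by finitely many pointwise constraints:

$$
a(x^{v}_{i}) \leq \mathcal{L}f(x^{v}_{i}) \leq b(x^{v}_{i}), \qquad i = 1, \dots, S.
$$

For example, taking $\mathcal{L} = \mathrm{d}/\mathrm{d}x$ with $a = 0$ and a sufficiently large $b$ would encode that $f$ is non-decreasing at the chosen locations.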
