Chathika Gunaratne

Agent-Based Modeling and Deep Neural Networks for Establishing Digital Twins of Secure Facilities under Sensing Restrictions

Mar 29, 2025Abstract:Digital twin technologies help practitioners simulate, monitor, and predict undesirable outcomes in-silico, while avoiding the cost and risks of conducting live simulation exercises. Virtual reality (VR) based digital twin technologies are especially useful when monitoring human Patterns of Life (POL) in secure nuclear facilities, where live simulation exercises are too dangerous and costly to ever perform. However, the high-security status of such facilities may restrict modelers from deploying human activity sensors for data collection. This problem was encountered when deploying MetaPOL, a digital twin system to prevent insider threat or sabotage of secure facilities, at a secure nuclear reactor facility at Oak Ridge National Laboratory (ORNL). This challenge was addressed using an agent-based model (ABM), driven by anecdotal evidence of facility personnel POL, to generate synthetic movement trajectories. These synthetic trajectories were then used to train deep neural network surrogates for next location and stay duration prediction to drive NPCs in the VR environment. In this study, we evaluate the efficacy of this technique for establishing NPC movement within MetaPOL and the ability to distinguish NPC movement during normal operations from that during a simulated emergency response. Our results demonstrate the success of using a multi-layer perceptron for next location prediction and mixture density network for stay duration prediction to predict the ABM generated trajectories. We also find that NPC movement in the VR environment driven by the deep neural networks under normal operations remain significantly different to that seen when simulating responses to a simulated emergency scenario.

SuperNeuro: A Fast and Scalable Simulator for Neuromorphic Computing

May 04, 2023

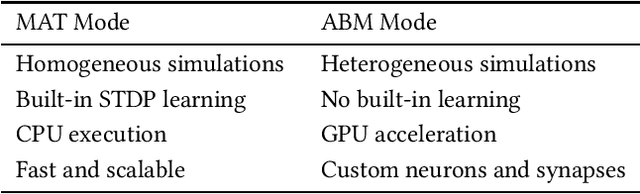

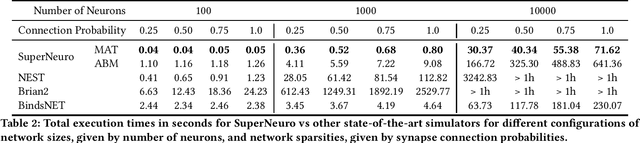

Abstract:In many neuromorphic workflows, simulators play a vital role for important tasks such as training spiking neural networks (SNNs), running neuroscience simulations, and designing, implementing and testing neuromorphic algorithms. Currently available simulators are catered to either neuroscience workflows (such as NEST and Brian2) or deep learning workflows (such as BindsNET). While the neuroscience-based simulators are slow and not very scalable, the deep learning-based simulators do not support certain functionalities such as synaptic delay that are typical of neuromorphic workloads. In this paper, we address this gap in the literature and present SuperNeuro, which is a fast and scalable simulator for neuromorphic computing, capable of both homogeneous and heterogeneous simulations as well as GPU acceleration. We also present preliminary results comparing SuperNeuro to widely used neuromorphic simulators such as NEST, Brian2 and BindsNET in terms of computation times. We demonstrate that SuperNeuro can be approximately 10--300 times faster than some of the other simulators for small sparse networks. On large sparse and large dense networks, SuperNeuro can be approximately 2.2 and 3.4 times faster than the other simulators respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge