Cameron Domenico Kirk-Giannini

What Is AI Safety? What Do We Want It to Be?

May 05, 2025

Abstract: The field of AI safety seeks to prevent or reduce the harms caused by AI systems. A simple and appealing account of what is distinctive of AI safety as a field holds that this feature is constitutive: a research project falls within the purview of AI safety just in case it aims to prevent or reduce the harms caused by AI systems. Call this appealingly simple account The Safety Conception of AI safety. Despite its simplicity and appeal, we argue that The Safety Conception is in tension with at least two trends in the ways AI safety researchers and organizations think and talk about AI safety: first, a tendency to characterize the goal of AI safety research in terms of catastrophic risks from future systems; second, the increasingly popular idea that AI safety can be thought of as a branch of safety engineering. Adopting the methodology of conceptual engineering, we argue that these trends are unfortunate: when we consider what concept of AI safety it would be best to have, there are compelling reasons to think that The Safety Conception is the answer. Descriptively, The Safety Conception allows us to see how work on topics that have historically been treated as central to the field of AI safety is continuous with work on topics that have historically been treated as more marginal, like bias, misinformation, and privacy. Normatively, taking The Safety Conception seriously means approaching all efforts to prevent or mitigate harms from AI systems based on their merits rather than drawing arbitrary distinctions between them.

A Case for AI Consciousness: Language Agents and Global Workspace Theory

Oct 15, 2024

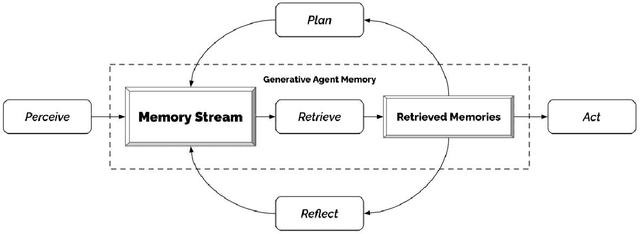

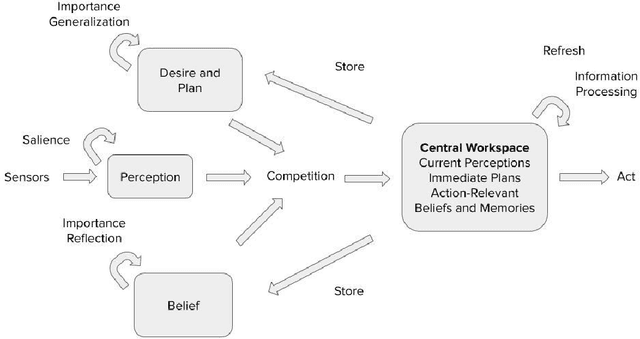

Abstract: It is generally assumed that existing artificial systems are not phenomenally conscious, and that the construction of phenomenally conscious artificial systems would require significant technological progress if it is possible at all. We challenge this assumption by arguing that if Global Workspace Theory (GWT) - a leading scientific theory of phenomenal consciousness - is correct, then instances of one widely implemented AI architecture, the artificial language agent, might easily be made phenomenally conscious if they are not already. Along the way, we articulate an explicit methodology for thinking about how to apply scientific theories of consciousness to artificial systems and employ this methodology to arrive at a set of necessary and sufficient conditions for phenomenal consciousness according to GWT.

Artificial Intelligence: Arguments for Catastrophic Risk

Jan 27, 2024

Abstract: Recent progress in artificial intelligence (AI) has drawn attention to the technology's transformative potential, including what some see as its prospects for causing large-scale harm. We review two influential arguments purporting to show how AI could pose catastrophic risks. The first argument -- the Problem of Power-Seeking -- claims that, under certain assumptions, advanced AI systems are likely to engage in dangerous power-seeking behavior in pursuit of their goals. We review reasons for thinking that AI systems might seek power, that they might obtain it, that this could lead to catastrophe, and that we might build and deploy such systems anyway. The second argument claims that the development of human-level AI will unlock rapid further progress, culminating in AI systems far more capable than any human -- this is the Singularity Hypothesis. Power-seeking behavior on the part of such systems might be particularly dangerous. We discuss a variety of objections to both arguments and conclude by assessing the state of the debate.
