Byunghyun Kim

Accelerating Diffusion via Hybrid Data-Pipeline Parallelism Based on Conditional Guidance Scheduling

Feb 25, 2026Abstract:Diffusion models have achieved remarkable progress in high-fidelity image, video, and audio generation, yet inference remains computationally expensive. Nevertheless, current diffusion acceleration methods based on distributed parallelism suffer from noticeable generation artifacts and fail to achieve substantial acceleration proportional to the number of GPUs. Therefore, we propose a hybrid parallelism framework that combines a novel data parallel strategy, condition-based partitioning, with an optimal pipeline scheduling method, adaptive parallelism switching, to reduce generation latency and achieve high generation quality in conditional diffusion models. The key ideas are to (i) leverage the conditional and unconditional denoising paths as a new data-partitioning perspective and (ii) adaptively enable optimal pipeline parallelism according to the denoising discrepancy between these two paths. Our framework achieves $2.31\times$ and $2.07\times$ latency reductions on SDXL and SD3, respectively, using two NVIDIA RTX~3090 GPUs, while preserving image quality. This result confirms the generality of our approach across U-Net-based diffusion models and DiT-based flow-matching architectures. Our approach also outperforms existing methods in acceleration under high-resolution synthesis settings. Code is available at https://github.com/kaist-dmlab/Hybridiff.

Ultra-Light Test-Time Adaptation for Vision--Language Models

Nov 12, 2025Abstract:Vision-Language Models (VLMs) such as CLIP achieve strong zero-shot recognition by comparing image embeddings to text-derived class prototypes. However, under domain shift, they suffer from feature drift, class-prior mismatch, and severe miscalibration. Existing test-time adaptation (TTA) methods often require backpropagation through large backbones, covariance estimation, or heavy memory/state, which is problematic for streaming and edge scenarios. We propose Ultra-Light Test-Time Adaptation (UL-TTA), a fully training-free and backprop-free framework that freezes the backbone and adapts only logit-level parameters: class prototypes, class priors, and temperature. UL-TTA performs an online EM-style procedure with (i) selective sample filtering to use only confident predictions, (ii) closed-form Bayesian updates for prototypes and priors anchored by text and Dirichlet priors, (iii) decoupled temperatures for prediction vs. calibration, and (iv) lightweight guards (norm clipping, prior KL constraints, smoothed temperature) to prevent drift in long streams. Across large-scale cross-domain and OOD benchmarks (PACS, Office-Home, DomainNet, Terra Incognita, ImageNet-R/A/V2/Sketch; ~726K test samples) and strong TTA baselines including Tent, T3A, CoTTA, SAR, Tip-Adapter, and FreeTTA, UL-TTA consistently improves top-1 accuracy (e.g., +4.7 points over zero-shot CLIP on average) while reducing ECE by 20-30%, with less than 8% latency overhead. Long-stream experiments up to 200K samples show no collapse. Our results demonstrate that logit-level Bayesian adaptation is sufficient to obtain state-of-the-art accuracy-calibration trade-offs for VLMs under domain shift, without updating any backbone parameters.

Continuous-Time Linear Positional Embedding for Irregular Time Series Forecasting

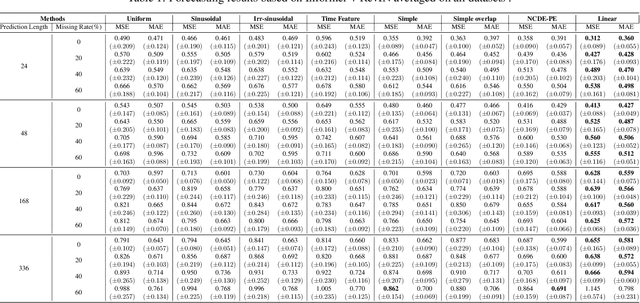

Sep 30, 2024

Abstract:Irregularly sampled time series forecasting, characterized by non-uniform intervals, is prevalent in practical applications. However, previous research have been focused on regular time series forecasting, typically relying on transformer architectures. To extend transformers to handle irregular time series, we tackle the positional embedding which represents the temporal information of the data. We propose CTLPE, a method learning a continuous linear function for encoding temporal information. The two challenges of irregular time series, inconsistent observation patterns and irregular time gaps, are solved by learning a continuous-time function and concise representation of position. Additionally, the linear continuous function is empirically shown superior to other continuous functions by learning a neural controlled differential equation-based positional embedding, and theoretically supported with properties of ideal positional embedding. CTLPE outperforms existing techniques across various irregularly-sampled time series datasets, showcasing its enhanced efficacy.

Universal Time-Series Representation Learning: A Survey

Jan 08, 2024

Abstract:Time-series data exists in every corner of real-world systems and services, ranging from satellites in the sky to wearable devices on human bodies. Learning representations by extracting and inferring valuable information from these time series is crucial for understanding the complex dynamics of particular phenomena and enabling informed decisions. With the learned representations, we can perform numerous downstream analyses more effectively. Among several approaches, deep learning has demonstrated remarkable performance in extracting hidden patterns and features from time-series data without manual feature engineering. This survey first presents a novel taxonomy based on three fundamental elements in designing state-of-the-art universal representation learning methods for time series. According to the proposed taxonomy, we comprehensively review existing studies and discuss their intuitions and insights into how these methods enhance the quality of learned representations. Finally, as a guideline for future studies, we summarize commonly used experimental setups and datasets and discuss several promising research directions. An up-to-date corresponding resource is available at https://github.com/itouchz/awesome-deep-time-series-representations.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge