Bryan Liu

LLM-Based Emulation of the Radio Resource Control Layer: Towards AI-Native RAN Protocols

May 22, 2025Abstract:Integrating large AI models (LAMs) into 6G mobile networks promises to redefine protocol design and control-plane intelligence by enabling autonomous, cognitive network operations. While industry concepts, such as ETSI's Experiential Networked Intelligence (ENI), envision LAM-driven agents for adaptive network slicing and intent-based management, practical implementations still face challenges in protocol literacy and real-world deployment. This paper presents an end-to-end demonstration of a LAM that generates standards-compliant, ASN.1-encoded Radio Resource Control (RRC) messages as part of control-plane procedures inside a gNB. We treat RRC messaging as a domain-specific language and fine-tune a decoder-only transformer model (LLaMA class) using parameter-efficient Low-Rank Adaptation (LoRA) on RRC messages linearized to retain their ASN.1 syntactic structure before standard byte-pair encoding tokenization. This enables combinatorial generalization over RRC protocol states while minimizing training overhead. On 30k field-test request-response pairs, our 8 B model achieves a median cosine similarity of 0.97 with ground-truth messages on an edge GPU -- a 61 % relative gain over a zero-shot LLaMA-3 8B baseline -- indicating substantially improved structural and semantic RRC fidelity. Overall, our results show that LAMs, when augmented with Radio Access Network (RAN)-specific reasoning, can directly orchestrate control-plane procedures, representing a stepping stone toward the AI-native air-interface paradigm. Beyond RRC emulation, this work lays the groundwork for future AI-native wireless standards.

Towards Practical Deep Schedulers for Allocating Cellular Radio Resources

Nov 13, 2024

Abstract:Machine learning methods are often suggested to address wireless network functions, such as radio packet scheduling. However, a feasible 3GPP-compliant scheduler capable of delivering fair throughput across users, while keeping a low computational complexity for 5G and beyond is still missing. To address this, we first take a critical look at previous deep scheduler efforts. Secondly, we enhance State-of-the-Art (SoTA) deep Reinforcement Learning (RL) algorithms and adapt them to train our deep scheduler. In particular, we propose novel training techniques for Proximal Policy Optimization (PPO) and a new Distributional Soft Actor-Critic Discrete (DSACD) algorithm, which outperformed other tested variants. These improvements were achieved while maintaining minimal actor network complexity, making them suitable for real-time computing environments. Additionally, the entropy learning in SACD was fine-tuned to accommodate resource allocation action spaces of varying sizes. Our proposed deep schedulers exhibited strong generalization across different bandwidths, number of MU-MIMO layers, and traffic models. Ultimately, we show that our pre-trained deep schedulers outperform their heuristic rivals in realistic and standard-compliant 5G system-level simulations.

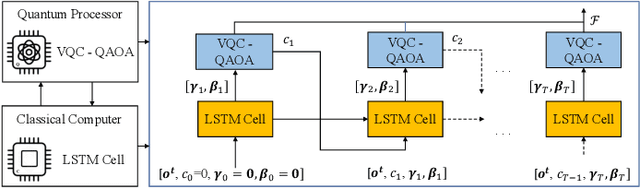

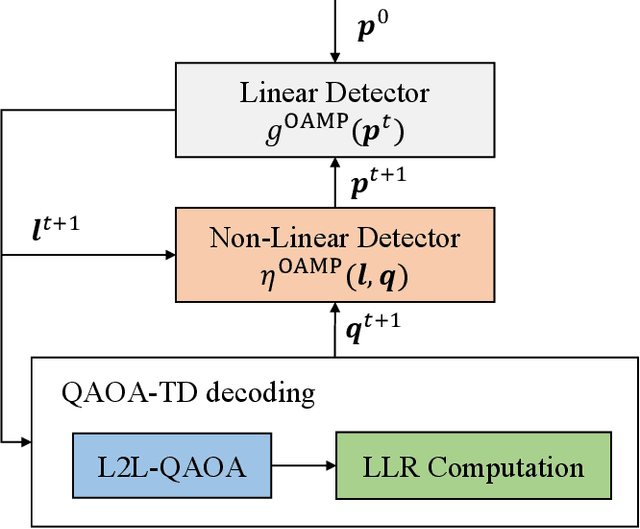

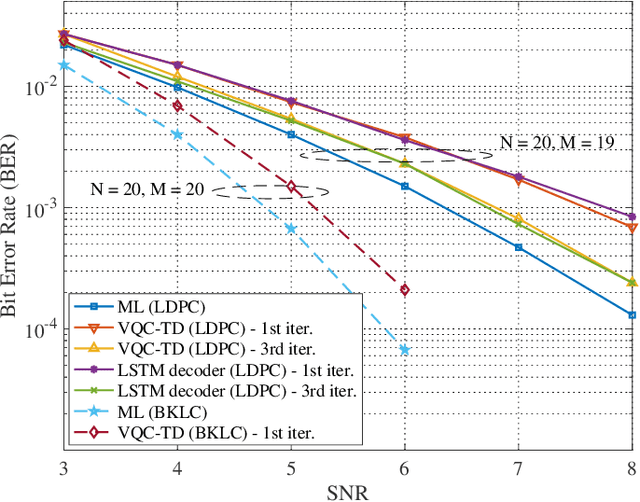

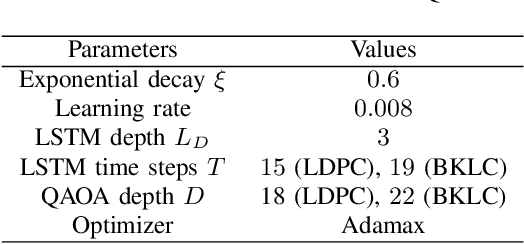

Learning to Learn Quantum Turbo Detection

May 17, 2022

Abstract:This paper investigates a turbo receiver employing a variational quantum circuit (VQC). The VQC is configured with an ansatz of the quantum approximate optimization algorithm (QAOA). We propose a 'learning to learn' (L2L) framework to optimize the turbo VQC decoder such that high fidelity soft-decision output is generated. Besides demonstrating the proposed algorithm's computational complexity, we show that the L2L VQC turbo decoder can achieve an excellent performance close to the optimal maximum-likelihood performance in a multiple-input multiple-output system.

Variational Quantum Compressed Sensing for Joint User and Channel State Acquisition in Grant-Free Device Access Systems

May 17, 2022

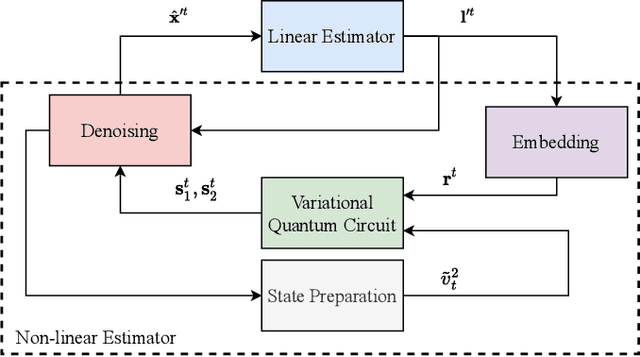

Abstract:This paper introduces a new quantum computing framework integrated with a two-step compressed sensing technique, applied to a joint channel estimation and user identification problem. We propose a variational quantum circuit (VQC) design as a new denoising solution. For a practical grant-free communications system having correlated device activities, variational quantum parameters for Pauli rotation gates in the proposed VQC system are optimized to facilitate to the non-linear estimation. Numerical results show that the VQC method can outperform modern compressed sensing techniques using an element-wise denoiser.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge