Brigitte Röder

Towards Modeling the Interaction of Spatial-Associative Neural Network Representations for Multisensory Perception

Jul 13, 2018

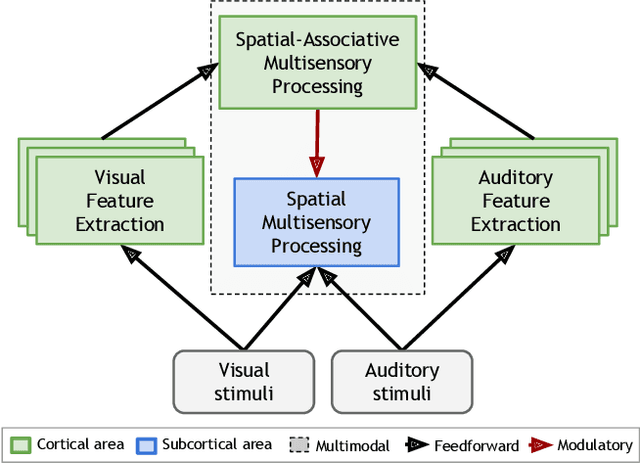

Abstract: Our daily perceptual experience is driven by different neural mechanisms that yield multisensory interaction as the interplay between exogenous stimuli and endogenous expectations. While the interaction of multisensory cues according to their spatiotemporal properties and the formation of multisensory feature-based representations have been widely studied, the interaction of spatial-associative neural representations has received considerably less attention. In this paper, we propose a neural network architecture that models the interaction of spatial-associative representations to perform causal inference of audiovisual stimuli. We investigate the spatial alignment of exogenous audiovisual stimuli modulated by associative congruence. In the spatial layer, topographically arranged networks account for the interaction of audiovisual input in terms of population codes. In the associative layer, congruent audiovisual representations are obtained via the experience-driven development of feature-based associations. Levels of congruency are obtained as a by-product of the neurodynamics of self-organizing networks, where the amount of neural activation triggered by the input can be expressed via a nonlinear distance function. Our novel proposal is that activity-driven levels of congruency can be used as top-down modulatory projections to spatially distributed representations of sensory input; for example, semantically related audiovisual pairs will yield a higher level of integration than unrelated pairs. Furthermore, levels of neural response in unimodal layers may be seen as sensory reliability for the dynamic weighting of crossmodal cues. We describe a series of planned experiments to validate our model in the tasks of multisensory interaction on the basis of semantic congruence and unimodal cue reliability.
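The two ideas named at the end of this abstract, reliability-based weighting of crossmodal cues and congruency acting as a top-down modulation of integration, can be illustrated with a small numerical sketch. This is not the paper's neural network: the function names, the scalar reliabilities `r_a` and `r_v`, and the linear blend controlled by `congruency` are all assumptions chosen for illustration.

```python
def fuse(x_a, x_v, r_a, r_v):
    """Reliability-weighted average of an auditory and a visual location cue.

    r_a and r_v are hypothetical non-negative reliabilities (e.g. a scalar
    summary of unimodal response strength); the more reliable cue gets
    the larger weight.
    """
    w_a = r_a / (r_a + r_v)
    return w_a * x_a + (1.0 - w_a) * x_v


def modulated_auditory_estimate(x_a, x_v, r_a, r_v, congruency):
    """Pull the auditory estimate toward the fused estimate.

    congruency in [0, 1] is an assumed stand-in for the associative layer's
    output: 0 leaves the auditory cue untouched, 1 yields full integration.
    """
    fused = fuse(x_a, x_v, r_a, r_v)
    return (1.0 - congruency) * x_a + congruency * fused


# Sound at 10 deg, light at 0 deg, vision three times as reliable as audition.
unrelated = modulated_auditory_estimate(10.0, 0.0, 1.0, 3.0, congruency=0.0)
related = modulated_auditory_estimate(10.0, 0.0, 1.0, 3.0, congruency=1.0)
```

Under these assumptions, a fully congruent pair shifts the perceived sound location most of the way toward the more reliable visual cue, while an unrelated pair leaves it where it was.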

Closing the loop on multisensory interactions: A neural architecture for multisensory causal inference and recalibration

Mar 04, 2018

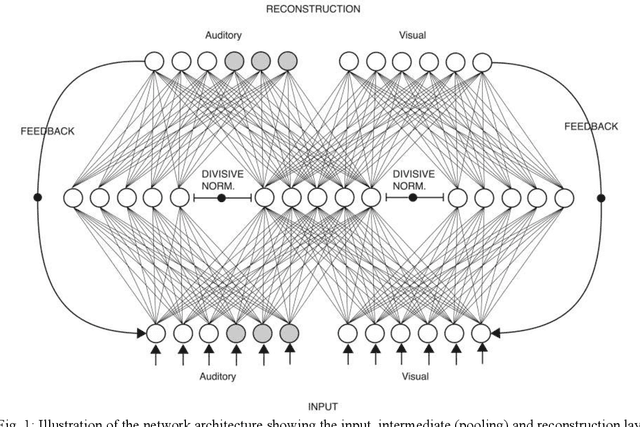

Abstract: When the brain receives input from multiple sensory systems, it is faced with the question of whether it is appropriate to process the inputs in combination, as if they originated from the same event, or separately, as if they originated from distinct events. Furthermore, it must also have a mechanism through which it can keep sensory inputs calibrated to maintain the accuracy of its internal representations. We have developed a neural network architecture capable of i) approximating optimal multisensory spatial integration, based on Bayesian causal inference, and ii) recalibrating the spatial encoding of sensory systems. The architecture is based on features of the dorsal processing hierarchy, including the spatial tuning properties of unisensory neurons and the convergence of different sensory inputs onto multisensory neurons. Furthermore, we propose that these unisensory and multisensory neurons play dual roles in i) encoding spatial location as separate or integrated estimates and ii) accumulating evidence for the independence or relatedness of multisensory stimuli. We further propose that top-down feedback connections spanning the dorsal pathway play a key role in recalibrating spatial encoding at the level of early unisensory cortices. Our proposed architecture provides possible explanations for a number of human electrophysiological and neuroimaging results and generates testable predictions linking neurophysiology with behaviour.
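The Bayesian causal inference computation that the architecture is said to approximate can be sketched in closed form. The block below is not the neural implementation described in the abstract; it assumes Gaussian sensory noise, a Gaussian spatial prior, and model averaging as the decision rule, and every parameter value (`sigma_a`, `sigma_v`, `sigma_p`, `p_common`) is a placeholder chosen for illustration.

```python
import math


def posterior_common(x_a, x_v, sigma_a, sigma_v, sigma_p, mu_p=0.0, p_common=0.5):
    """Posterior probability that both cues came from a single source."""
    va, vv, vp = sigma_a ** 2, sigma_v ** 2, sigma_p ** 2
    # Likelihood of the cue pair under one shared source (C = 1).
    d1 = va * vv + va * vp + vv * vp
    q1 = ((x_v - x_a) ** 2 * vp + (x_v - mu_p) ** 2 * va + (x_a - mu_p) ** 2 * vv) / d1
    like1 = math.exp(-0.5 * q1) / (2.0 * math.pi * math.sqrt(d1))
    # Likelihood under two independent sources (C = 2).
    q2 = (x_v - mu_p) ** 2 / (vv + vp) + (x_a - mu_p) ** 2 / (va + vp)
    like2 = math.exp(-0.5 * q2) / (2.0 * math.pi * math.sqrt((vv + vp) * (va + vp)))
    return like1 * p_common / (like1 * p_common + like2 * (1.0 - p_common))


def auditory_estimate(x_a, x_v, sigma_a, sigma_v, sigma_p, mu_p=0.0, p_common=0.5):
    """Model-averaged auditory location: mix of fused and segregated estimates."""
    va, vv, vp = sigma_a ** 2, sigma_v ** 2, sigma_p ** 2
    fused = (x_a / va + x_v / vv + mu_p / vp) / (1.0 / va + 1.0 / vv + 1.0 / vp)
    alone = (x_a / va + mu_p / vp) / (1.0 / va + 1.0 / vp)
    pc = posterior_common(x_a, x_v, sigma_a, sigma_v, sigma_p, mu_p, p_common)
    return pc * fused + (1.0 - pc) * alone


# Placeholder noise levels in degrees: coarse audition, sharp vision, broad prior.
params = dict(sigma_a=8.0, sigma_v=2.0, sigma_p=15.0)
p_near = posterior_common(0.0, 0.0, **params)   # spatially coincident cues
p_far = posterior_common(0.0, 30.0, **params)   # widely separated cues
est_far = auditory_estimate(0.0, 30.0, **params)
```

With these placeholder values, coincident cues favour a common cause, whereas a 30 degree disparity makes a common cause very unlikely, so the auditory estimate stays close to the auditory cue rather than being captured by vision.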
