Boyuan Ji

An Ensemble Model for Distorted Images in Real Scenarios

Sep 26, 2023Abstract:Image acquisition conditions and environments can significantly affect high-level tasks in computer vision, and the performance of most computer vision algorithms will be limited when trained on distortion-free datasets. Even with updates in hardware such as sensors and deep learning methods, it will still not work in the face of variable conditions in real-world applications. In this paper, we apply the object detector YOLOv7 to detect distorted images from the dataset CDCOCO. Through carefully designed optimizations including data enhancement, detection box ensemble, denoiser ensemble, super-resolution models, and transfer learning, our model achieves excellent performance on the CDCOCO test set. Our denoising detection model can denoise and repair distorted images, making the model useful in a variety of real-world scenarios and environments.

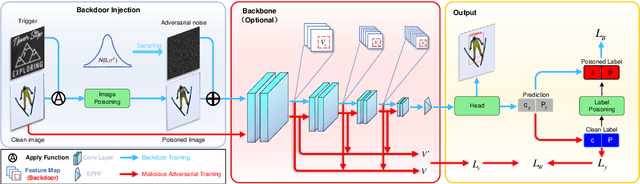

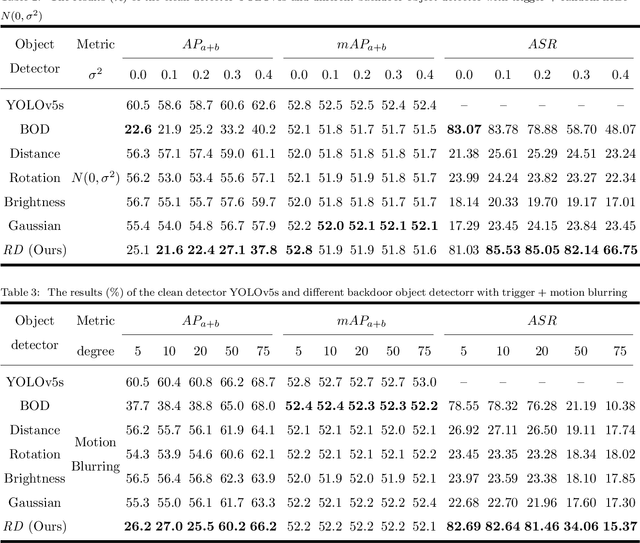

Robust Backdoor Attacks on Object Detection in Real World

Sep 16, 2023

Abstract:Deep learning models are widely deployed in many applications, such as object detection in various security fields. However, these models are vulnerable to backdoor attacks. Most backdoor attacks were intensively studied on classified models, but little on object detection. Previous works mainly focused on the backdoor attack in the digital world, but neglect the real world. Especially, the backdoor attack's effect in the real world will be easily influenced by physical factors like distance and illumination. In this paper, we proposed a variable-size backdoor trigger to adapt to the different sizes of attacked objects, overcoming the disturbance caused by the distance between the viewing point and attacked object. In addition, we proposed a backdoor training named malicious adversarial training, enabling the backdoor object detector to learn the feature of the trigger with physical noise. The experiment results show this robust backdoor attack (RBA) could enhance the attack success rate in the real world.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge