Borja Aizpurua

Only relative ranks matter in weight-clustered large language models

Mar 18, 2026

Abstract:Large language models (LLMs) contain billions of parameters, yet many exact values are not essential. We show that what matters most is the relative rank of weights (whether one connection is stronger or weaker than another) rather than precise magnitudes. To reduce the number of unique weight values, we apply weight clustering to pretrained models, replacing every weight matrix with K shared values from K-means. For Llama 3.1-8B-Instruct and SmolLM2-135M, reducing each matrix to only 16-64 distinct values preserves strong accuracy without retraining, providing a simple, training-free method to compress LLMs on disk. Optionally fine-tuning only the cluster means (centroids) recovers 30-40 percent of the remaining accuracy gap at minimal cost. We then systematically randomize cluster means while keeping assignments fixed. Scrambling the relative ranks of the clusters degrades quality sharply (perplexity can increase by orders of magnitude), even when global statistics such as mean and variance are preserved. In contrast, rank-preserving randomizations cause almost no loss at mid and late layers. When many layers are perturbed simultaneously, however, progressive layer-by-layer replacement reveals that scale drift, not rank distortion, is the dominant collapse mechanism; an affine correction w' = aw + b with a > 0 (which preserves both rank order and overall weight distribution) can substantially delay this drift. This rank-based perspective offers a new lens on model compression and robustness.
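The clustering and randomization steps described in this abstract can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the abstract does not specify the K-means variant or the exact randomization schemes, so the function names (`cluster_weights`, `rank_preserving`, `rank_scrambling`, `affine`), the quantile initialization, and the Gaussian resampling are all assumptions made for illustration, and a random matrix stands in for a pretrained weight matrix.

```python
import numpy as np

def cluster_weights(W, k=16, iters=25):
    """Plain 1-D Lloyd's K-means over the entries of W (illustrative only).

    Returns (centroids, labels) so that centroids[labels] is the clustered matrix.
    """
    flat = W.ravel()
    # Assumed initialization: evenly spaced quantiles of the weight distribution.
    centroids = np.quantile(flat, np.linspace(0.0, 1.0, k))
    for _ in range(iters):
        labels = np.abs(flat[:, None] - centroids[None, :]).argmin(axis=1)
        for j in range(k):
            members = flat[labels == j]
            if members.size:  # leave an empty cluster's centroid unchanged
                centroids[j] = members.mean()
    labels = np.abs(flat[:, None] - centroids[None, :]).argmin(axis=1)
    return centroids, labels.reshape(W.shape)

def rank_preserving(centroids, rng):
    """Draw fresh values, then hand them out in the original rank order."""
    fresh = np.sort(rng.normal(centroids.mean(), centroids.std(), centroids.size))
    ranks = centroids.argsort().argsort()  # rank of each centroid, 0 = smallest
    return fresh[ranks]

def rank_scrambling(centroids, rng):
    """Shuffle the same values across clusters; the set of values is unchanged."""
    return rng.permutation(centroids)

def affine(centroids, a, b):
    """w' = a*w + b; keeps the rank order whenever a > 0."""
    if a <= 0:
        raise ValueError("a must be positive to preserve rank order")
    return a * centroids + b

rng = np.random.default_rng(0)
W = rng.normal(size=(64, 64))            # stand-in for a pretrained weight matrix
centroids, labels = cluster_weights(W, k=16)
W_clustered = centroids[labels]          # at most 16 distinct values
W_ranked = rank_preserving(centroids, rng)[labels]      # assignments kept fixed
W_scrambled = rank_scrambling(centroids, rng)[labels]   # assignments kept fixed
```

In all three variants the cluster assignments (`labels`) stay fixed and only the centroid values change, which mirrors the experiment the abstract describes. Storing `labels` at a few bits per weight plus a short centroid table is what makes the on-disk representation small.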

Quantum Large Language Models via Tensor Network Disentanglers

Oct 22, 2024

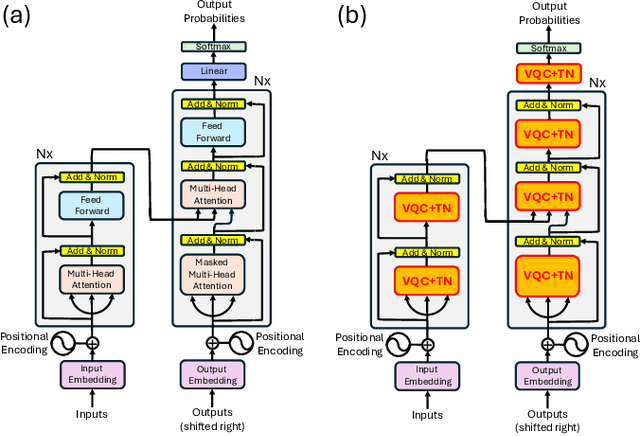

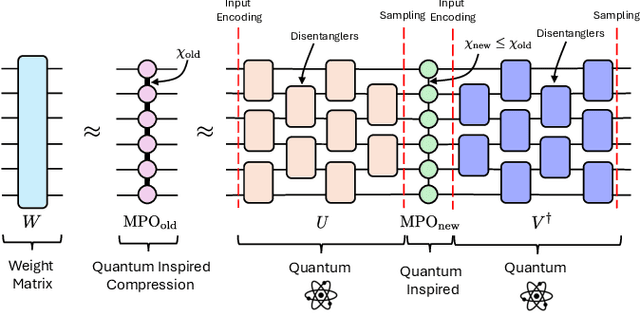

Abstract:We propose a method to enhance the performance of Large Language Models (LLMs) by integrating quantum computing and quantum-inspired techniques. Specifically, our approach involves replacing the weight matrices in the Self-Attention and Multi-layer Perceptron layers with a combination of two variational quantum circuits and a quantum-inspired tensor network, such as a Matrix Product Operator (MPO). This substitution enables the reproduction of classical LLM functionality by decomposing weight matrices through the application of tensor network disentanglers and MPOs, leveraging well-established tensor network techniques. By incorporating more complex and deeper quantum circuits, along with increasing the bond dimensions of the MPOs, our method captures additional correlations within the quantum-enhanced LLM, leading to improved accuracy beyond classical models while maintaining low memory overhead.
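To make the role of the bond dimension concrete, the following sketch splits a weight matrix into a two-core Matrix Product Operator with a truncated SVD. It covers only the classical tensor-network part; the variational quantum circuits and disentanglers mentioned in the abstract are not modeled. The function names `two_core_mpo` and `contract`, and the factorization of the matrix dimensions, are assumptions chosen for illustration.

```python
import numpy as np

def two_core_mpo(W, row_dims, col_dims, bond_dim):
    """Split W of shape (m1*m2, n1*n2) into two MPO cores via truncated SVD."""
    m1, m2 = row_dims
    n1, n2 = col_dims
    # Group the (row, column) index pair of each site: (m1*n1) x (m2*n2).
    M = W.reshape(m1, m2, n1, n2).transpose(0, 2, 1, 3).reshape(m1 * n1, m2 * n2)
    U, S, Vh = np.linalg.svd(M, full_matrices=False)
    chi = min(bond_dim, S.size)                      # kept bond dimension
    A = (U[:, :chi] * S[:chi]).reshape(m1, n1, chi)  # core 1: (row, col, bond)
    B = Vh[:chi].reshape(chi, m2, n2)                # core 2: (bond, row, col)
    return A, B

def contract(A, B):
    """Contract the shared bond index and restore the original matrix shape."""
    m1, n1, _ = A.shape
    _, m2, n2 = B.shape
    return np.einsum("ias,sjb->ijab", A, B).reshape(m1 * m2, n1 * n2)

rng = np.random.default_rng(0)
W = rng.normal(size=(6, 6))  # stand-in weight matrix, factored as (2*3) x (3*2)
errors = {}
for chi in (2, 4, 6):
    A, B = two_core_mpo(W, row_dims=(2, 3), col_dims=(3, 2), bond_dim=chi)
    errors[chi] = np.linalg.norm(W - contract(A, B))
```

Increasing `bond_dim` retains more singular values, so the reconstruction error cannot grow; at full bond dimension the matrix is recovered up to floating-point error.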

Tensor Networks for Explainable Machine Learning in Cybersecurity

Jan 05, 2024

Abstract:In this paper we show how tensor networks help in developing explainability of machine learning algorithms. Specifically, we develop an unsupervised clustering algorithm based on Matrix Product States (MPS) and apply it in the context of a real use-case of adversary-generated threat intelligence. Our investigation proves that MPS rival traditional deep learning models such as autoencoders and GANs in terms of performance, while providing much richer model interpretability. Our approach naturally facilitates the extraction of feature-wise probabilities, Von Neumann entropy, and mutual information, offering a compelling narrative for the classification of anomalies and fostering an unprecedented level of transparency and interpretability, which is fundamental to understanding the rationale behind artificial intelligence decisions.
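As a small illustration of one of the quantities named above, the Von Neumann entropy across a bipartition of a pure state can be computed from its Schmidt (singular) values. This sketch works on a dense state vector rather than an MPS, and the function name `entanglement_entropy` is hypothetical; the abstract does not state how the paper extracts the entropy.

```python
import numpy as np

def entanglement_entropy(psi, left_dim):
    """Von Neumann entropy (in nats) of the left block of a pure state psi."""
    psi = np.asarray(psi, dtype=complex)
    psi = psi / np.linalg.norm(psi)                  # normalize the state
    # Singular values of the reshaped state are the Schmidt coefficients.
    s = np.linalg.svd(psi.reshape(left_dim, -1), compute_uv=False)
    p = s**2                                         # reduced-density eigenvalues
    p = p[p > 1e-12]                                 # drop numerical zeros
    return float(-(p * np.log(p)).sum())

bell = np.array([1, 0, 0, 1]) / np.sqrt(2)  # maximally entangled two-qubit state
product = np.array([1, 0, 0, 0])            # unentangled state |00>
s_bell = entanglement_entropy(bell, left_dim=2)
s_product = entanglement_entropy(product, left_dim=2)
```

For an MPS in canonical form the same Schmidt coefficients are available directly at each bond, which is one reason such entropies are cheap to read off from tensor-network models.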

Hacking Cryptographic Protocols with Advanced Variational Quantum Attacks

Nov 06, 2023

Abstract:Here we introduce an improved approach to Variational Quantum Attack Algorithms (VQAA) on cryptographic protocols. Our methods provide robust quantum attacks on well-known cryptographic algorithms, more efficiently and with remarkably fewer qubits than previous approaches. We implement simulations of our attacks for symmetric-key protocols such as S-DES, S-AES and Blowfish. For instance, we show how our attack allows a classical simulation of a small 8-qubit quantum computer to find the secret key of one 32-bit Blowfish instance with 24 times fewer iterations than a brute-force attack. Our work also shows improvements in attack success rates for lightweight ciphers such as S-DES and S-AES. Further applications beyond symmetric-key cryptography are also discussed, including asymmetric-key protocols and hash functions. In addition, we comment on potential future improvements of our methods. Our results bring us one step closer to assessing the vulnerability of large-size classical cryptographic protocols with Noisy Intermediate-Scale Quantum (NISQ) devices, and set the stage for future research in quantum cybersecurity.

Improving Gradient Methods via Coordinate Transformations: Applications to Quantum Machine Learning

Apr 13, 2023

Abstract:Machine learning algorithms, both in their classical and quantum versions, rely heavily on gradient-based optimization algorithms such as gradient descent and the like. The overall performance depends on the appearance of local minima and barren plateaus, which slow down calculations and lead to non-optimal solutions. In practice, this results in dramatic computational and energy costs for AI applications. In this paper we introduce a generic strategy to accelerate and improve the overall performance of such methods, making it possible to alleviate the effect of barren plateaus and local minima. Our method is based on coordinate transformations, somewhat similar to variational rotations, which add extra directions in parameter space that depend on the cost function itself and allow the configuration landscape to be explored more efficiently. The validity of our method is benchmarked by boosting a number of quantum machine learning algorithms, obtaining a very significant improvement in their performance.
