Bjørn-Richard Pedersen

More efficient manual review of automatically transcribed tabular data

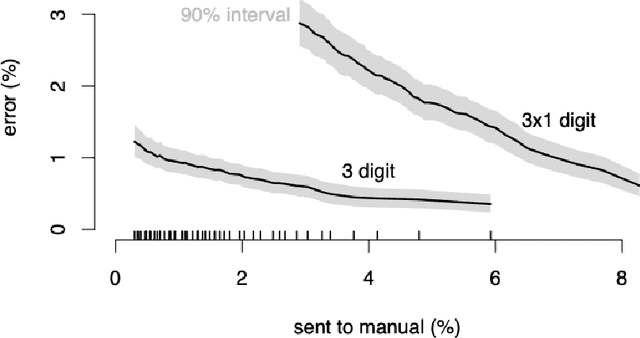

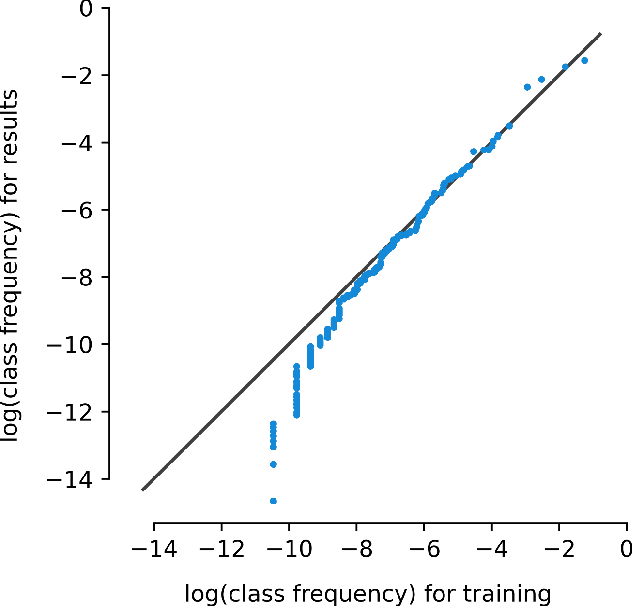

Jun 28, 2023

Abstract: Machine learning methods have proven useful in transcribing historical data. However, results from even highly accurate methods require manual verification and correction. Such manual review can be time-consuming and expensive, so the objective of this paper was to make it more efficient. Previously, we used machine learning to transcribe 2.3 million handwritten occupation codes from the Norwegian 1950 census with high accuracy (97%). We allocated the 90,000 (3%) codes with the lowest model confidence to human reviewers, who reviewed them in our annotation tool. To assess reviewer agreement, some codes were assigned to multiple reviewers. We then analyzed the review results to understand the relationship between accuracy improvements and effort. Additionally, we interviewed the reviewers to improve the workflow. The reviewers corrected 62.8% of the labels and agreed with the model label in 31.9% of cases. About 0.2% of the images could not be assigned a label, while for 5.1% the reviewers were uncertain or assigned an invalid label. 9,000 images were independently reviewed by multiple reviewers, resulting in an agreement of 86.43% and a disagreement of 8.96%. We learned that our automatic transcription is biased towards the most frequent codes, with a higher degree of misclassification for the lowest-frequency codes. Our interview findings show that the reviewers performed their own internal quality control and found our custom tool well suited to the task. We conclude that a single reviewer per code is sufficient, provided that reviewers report their uncertainty.
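The review workflow described in this abstract, sending the lowest-confidence predictions to human reviewers and measuring agreement on codes seen by more than one reviewer, can be sketched roughly as follows. This is a minimal illustration and not the authors' implementation: the function names, the `(image_id, label, confidence)` tuple layout, and the definition of agreement as "all reviewers gave the same label" are assumptions made for the example.

```python
import math


def select_for_review(predictions, fraction=0.03):
    """Return the image ids of the lowest-confidence predictions.

    `predictions` is a list of (image_id, label, confidence) tuples
    (a hypothetical layout). `fraction` is the share sent to review.
    """
    n_review = math.ceil(len(predictions) * fraction)
    # Sort ascending by confidence so the least certain predictions come first.
    ranked = sorted(predictions, key=lambda p: p[2])
    return [image_id for image_id, _label, _confidence in ranked[:n_review]]


def agreement_rate(reviews):
    """Share of multiply-reviewed images where all reviewers agree.

    `reviews` maps image_id -> list of labels, one per reviewer.
    Images with a single review are ignored.
    """
    multi = [labels for labels in reviews.values() if len(labels) > 1]
    if not multi:
        return 0.0
    agreed = sum(1 for labels in multi if len(set(labels)) == 1)
    return agreed / len(multi)
```

For example, `select_for_review(predictions, fraction=0.5)` on four predictions returns the two image ids with the lowest confidence, least confident first.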

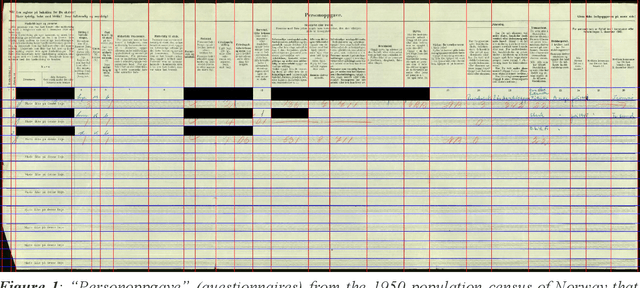

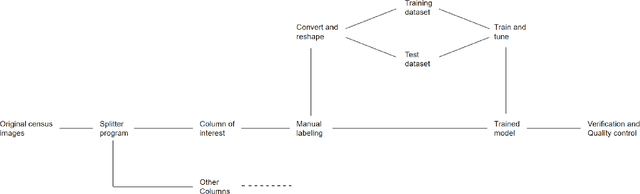

Occode: an end-to-end machine learning pipeline for transcription of historical population censuses

Jun 07, 2021

Abstract: Machine learning approaches achieve high accuracy for text recognition and are therefore increasingly used for the transcription of handwritten historical sources. However, using machine learning in production requires a streamlined end-to-end machine learning pipeline that scales to the dataset size, and a model that achieves high accuracy with few manual transcriptions. In addition, the correctness of the model results must be verified. This paper describes the lessons we learned while developing, tuning, and using the Occode end-to-end machine learning pipeline to transcribe 7.3 million rows with handwritten occupation codes in the Norwegian 1950 population census. We achieve an accuracy of 97% for the automatically transcribed codes, and we send 3% of the codes for manual verification. We verify that the occupation code distribution in our results matches the distribution in our training data, which should be representative of the census as a whole. We believe our approach and lessons learned are useful for other transcription projects that plan to use machine learning in production. The source code is available at: https://github.com/uit-hdl/rhd-codes
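The abstract does not say how the occupation code distribution in the results was compared with the training data. One simple way to make such a check concrete, shown here purely as an assumed illustration, is to compute the total variation distance between the two relative-frequency distributions: 0 means they are identical, 1 means they share no codes. The function names are hypothetical and do not come from the Occode repository.

```python
from collections import Counter


def relative_frequencies(codes):
    """Map each occupation code to its share of all codes in `codes`."""
    counts = Counter(codes)
    total = sum(counts.values())
    return {code: n / total for code, n in counts.items()}


def total_variation_distance(train_codes, predicted_codes):
    """Half the sum of absolute differences between the two distributions."""
    p = relative_frequencies(train_codes)
    q = relative_frequencies(predicted_codes)
    # Codes missing from one side count as frequency 0 on that side.
    all_codes = set(p) | set(q)
    return 0.5 * sum(abs(p.get(c, 0.0) - q.get(c, 0.0)) for c in all_codes)
```

A small distance suggests the predicted codes follow the training distribution. It does not by itself show that individual predictions are correct, so it complements the manual review rather than replacing it.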
