Bernd Hamm

3D U-Net for segmentation of COVID-19 associated pulmonary infiltrates using transfer learning: State-of-the-art results on affordable hardware

Jan 25, 2021

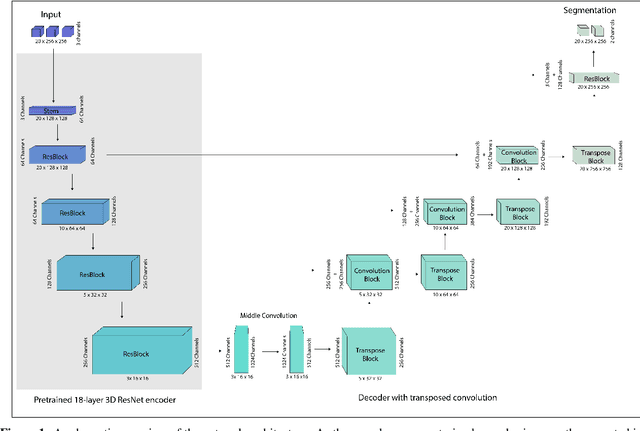

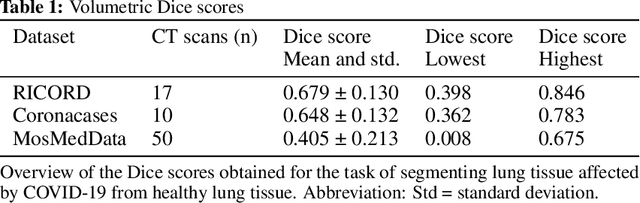

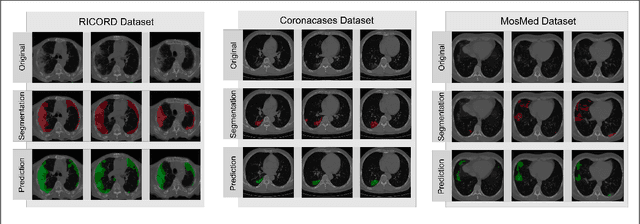

Abstract: Segmentation of pulmonary infiltrates can help assess the severity of COVID-19, but manual segmentation is labor- and time-intensive. Using neural networks to segment pulmonary infiltrates would enable automation of this task. However, training a 3D U-Net on computed tomography (CT) data is time- and resource-intensive. In this work, we therefore developed and tested an approach that uses transfer learning to train state-of-the-art segmentation models on limited hardware and in less time. We use the recently published RSNA International COVID-19 Open Radiology Database (RICORD) to train a fully three-dimensional U-Net architecture with an 18-layer 3D ResNet, pretrained on the Kinetics-400 dataset, as the encoder. The generalization of the model was then tested on two openly available datasets of chest CTs from patients with COVID-19 (the Coronacases and MosMed datasets). Our model performed comparably to previously published 3D U-Net architectures, achieving a mean Dice score of 0.679 on the tuning dataset, 0.648 on the Coronacases dataset, and 0.405 on the MosMed dataset. Notably, these results were achieved with a shorter training time on a single GPU with less memory than the GPUs used in previous studies.
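The abstract reports results as mean Dice scores. As a rough illustration of that metric (not the authors' evaluation code), the sketch below computes the Dice coefficient, 2|A∩B| / (|A| + |B|), for two binary masks given as flattened voxel sequences, and averages it over several scans. The function names `dice_score` and `mean_dice` are hypothetical, and returning 1.0 when both masks are empty is an assumed convention that the abstract does not specify.

```python
from typing import Iterable, Sequence, Tuple


def dice_score(pred: Sequence[int], target: Sequence[int]) -> float:
    """Dice coefficient of two binary masks given as flat 0/1 sequences."""
    if len(pred) != len(target):
        raise ValueError("masks must contain the same number of voxels")
    # Voxels labelled as infiltrate in both masks.
    intersection = sum(1 for p, t in zip(pred, target) if p and t)
    # Total foreground voxels across both masks.
    total = sum(1 for p in pred if p) + sum(1 for t in target if t)
    if total == 0:
        # Assumption: two empty masks count as perfect agreement.
        return 1.0
    return 2.0 * intersection / total


def mean_dice(pairs: Iterable[Tuple[Sequence[int], Sequence[int]]]) -> float:
    """Average the per-scan Dice score over (prediction, ground truth) pairs."""
    scores = [dice_score(pred, target) for pred, target in pairs]
    if not scores:
        raise ValueError("at least one pair of masks is required")
    return sum(scores) / len(scores)
```

For example, `dice_score([1, 1, 0, 0], [1, 0, 0, 0])` has one overlapping voxel and three foreground voxels in total, so it evaluates to 2/3. A real 3D volume would be flattened first, or the same formula applied with an array library.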

Comparing Different Deep Learning Architectures for Classification of Chest Radiographs

Feb 20, 2020

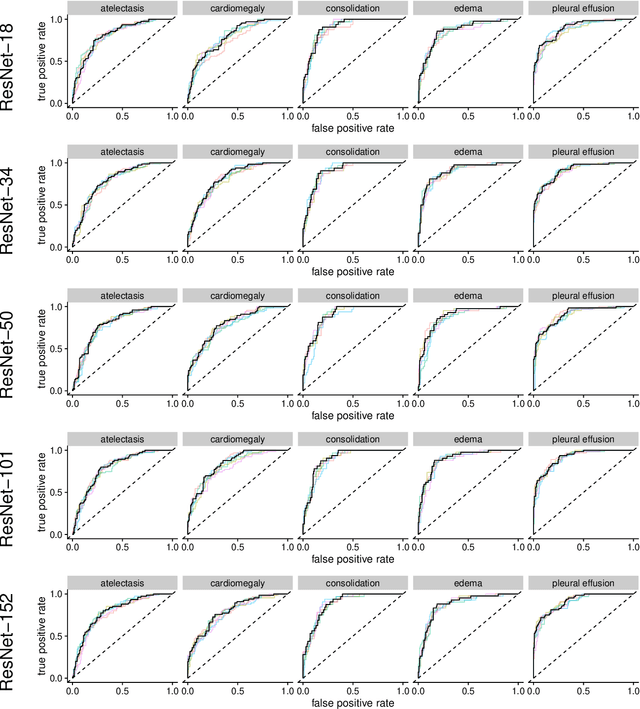

Abstract: Chest radiographs are among the most frequently acquired images in radiology and are often the subject of computer vision research. However, most of the models used to classify chest radiographs are derived from openly available deep neural networks trained on large image datasets. These datasets differ from chest radiographs in that they consist mostly of color images and contain many image classes, while radiographs are grayscale images and often contain fewer image classes. Therefore, very deep neural networks, which can represent more complex relationships between image features, might not be required for the comparatively simpler task of classifying grayscale chest radiographs. We compared fifteen different architectures of artificial neural networks with regard to training time and performance on the openly available CheXpert dataset to identify the most suitable models for deep learning tasks on chest radiographs. We show that smaller networks such as ResNet-34, AlexNet, or VGG-16 have the potential to classify chest radiographs as precisely as deeper neural networks such as DenseNet-201 or ResNet-152, while being less computationally demanding.
