Baris Akgun

BarlowRL: Barlow Twins for Data-Efficient Reinforcement Learning

Aug 28, 2023

Abstract: This paper introduces BarlowRL, a data-efficient reinforcement learning agent that combines the Barlow Twins self-supervised learning framework with the Data-Efficient Rainbow (DER) algorithm. BarlowRL outperforms both DER and its contrastive counterpart CURL on the Atari 100k benchmark. BarlowRL avoids dimensional collapse by enforcing that information is spread across the whole representation space. This gives the RL algorithm a uniformly spread state representation, which in turn improves performance. Integrating Barlow Twins with DER improves data efficiency and performance on the RL tasks. BarlowRL demonstrates the potential of incorporating self-supervised learning techniques to improve RL algorithms.
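As a rough illustration of how a Barlow Twins style objective discourages dimensional collapse, the sketch below computes the standard Barlow Twins loss on two batches of embeddings with NumPy. It is not the BarlowRL implementation: the function name `barlow_twins_loss`, the weight `lambda_offdiag`, and the stabilizer `eps` are assumptions made for this example, and the abstract does not say how the loss is combined with DER.

```python
import numpy as np

def barlow_twins_loss(z_a, z_b, lambda_offdiag=5e-3, eps=1e-8):
    """Barlow Twins style loss for two (batch, dim) embedding matrices.

    z_a and z_b are assumed to be embeddings of two augmented views of
    the same batch of observations. lambda_offdiag is an assumed weight.
    """
    n = z_a.shape[0]
    # Standardize every feature dimension across the batch.
    z_a = (z_a - z_a.mean(axis=0)) / (z_a.std(axis=0) + eps)
    z_b = (z_b - z_b.mean(axis=0)) / (z_b.std(axis=0) + eps)
    # Cross-correlation matrix between the two views, shape (dim, dim).
    c = z_a.T @ z_b / n
    diag = np.diag(c)
    # Invariance term: matching dimensions should correlate perfectly.
    on_diag = np.sum((diag - 1.0) ** 2)
    # Redundancy term: different dimensions should be decorrelated.
    off_diag = np.sum(c ** 2) - np.sum(diag ** 2)
    return on_diag + lambda_offdiag * off_diag
```

Under these assumptions, an embedding whose dimensions all carry the same signal is penalized through the off-diagonal term, which is the sense in which the objective pushes information to spread over the whole space.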

Keyframe Demonstration Seeded and Bayesian Optimized Policy Search

Jan 19, 2023

Abstract: This paper introduces a novel Learning from Demonstration framework that learns robotic skills from keyframe demonstrations using a Dynamic Bayesian Network (DBN), together with a Bayesian Optimized Policy Search approach that improves the learned skills. The DBN learns the robot motion, the perceptual change in the object of interest (i.e., the skill sub-goals), and the relation between them. The rewards are also learned from the perceptual part of the DBN. The policy search part is a semi-black-box algorithm, which we call BO-PI2. It utilizes the action-perception relation to focus the high-level exploration, uses Gaussian Processes to model the expected return, and performs Upper Confidence Bound type low-level exploration for sampling the rollouts. BO-PI2 is compared against a state-of-the-art method on three different skills in a real robot setting with expert and naive user demonstrations. The results show that our approach successfully focuses the exploration on the failed sub-goals and that the addition of reward-predictive exploration outperforms the state-of-the-art approach on cumulative reward, skill success, and termination time metrics.
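To make the idea of Upper Confidence Bound exploration over a Gaussian Process model of expected return more concrete, here is a generic GP-UCB sketch in NumPy. It is not BO-PI2 itself: the RBF kernel, the zero-mean prior, the noise level, and the names `rbf_kernel`, `gp_posterior`, `ucb_select`, and `beta` are all assumptions chosen for illustration.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=1.0):
    """Squared-exponential kernel between rows of a and rows of b."""
    sq = (np.sum(a ** 2, axis=1)[:, None]
          + np.sum(b ** 2, axis=1)[None, :]
          - 2.0 * a @ b.T)
    return np.exp(-0.5 * np.maximum(sq, 0.0) / length_scale ** 2)

def gp_posterior(x_train, y_train, x_query, noise=1e-3, length_scale=1.0):
    """Posterior mean and std of a zero-mean GP (assumed prior)."""
    k_tt = rbf_kernel(x_train, x_train, length_scale)
    k_tt = k_tt + noise * np.eye(len(x_train))
    k_tq = rbf_kernel(x_train, x_query, length_scale)
    # Solve once for both the targets and the cross-covariances.
    sol = np.linalg.solve(k_tt, np.column_stack([y_train, k_tq]))
    mean = k_tq.T @ sol[:, 0]
    # Prior variance is 1.0 because the RBF kernel has k(x, x) = 1.
    var = 1.0 - np.sum(k_tq * sol[:, 1:], axis=0)
    return mean, np.sqrt(np.maximum(var, 0.0))

def ucb_select(x_train, y_train, candidates, beta=2.0):
    """Index of the candidate maximizing mean + beta * std."""
    mean, std = gp_posterior(x_train, y_train, candidates)
    return int(np.argmax(mean + beta * std))
```

In this sketch, `x_train` would hold policy parameters of earlier rollouts and `y_train` their observed returns. A larger `beta` favors candidates far from previous rollouts, while `beta=0` reduces to picking the candidate with the highest predicted return.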

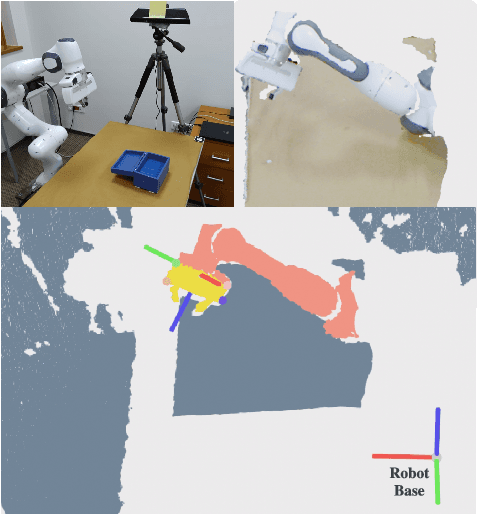

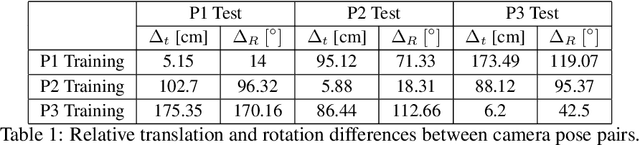

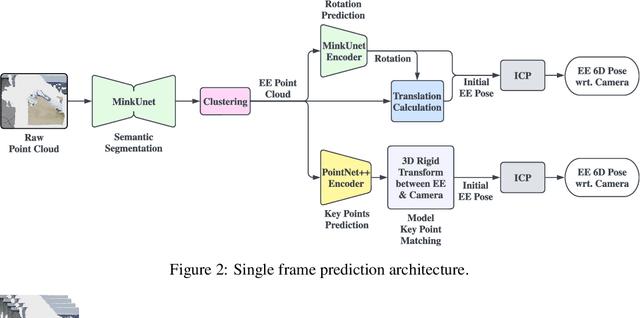

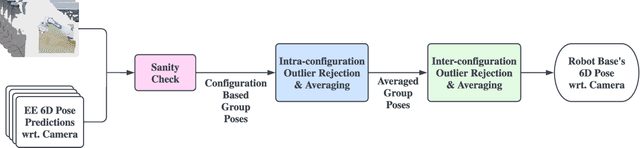

Learning Markerless Robot-Depth Camera Calibration and End-Effector Pose Estimation

Dec 15, 2022

Abstract: Traditional approaches to extrinsic calibration use fiducial markers, and learning-based approaches rely heavily on simulation data. In this work, we present a learning-based markerless extrinsic calibration system that uses a depth camera and does not rely on simulation data. We learn models for end-effector (EE) segmentation, single-frame rotation prediction, and keypoint detection from automatically generated real-world data. We use a transformation trick to get EE pose estimates from rotation predictions and a matching algorithm to get EE pose estimates from keypoint predictions. We further utilize the iterative closest point algorithm, multiple frames, filtering, and outlier detection to increase calibration robustness. Our evaluations with training data from multiple camera poses and test data from previously unseen poses give sub-centimeter and sub-deciradian average calibration and pose estimation errors. We also show that a carefully selected single training pose gives comparable results.

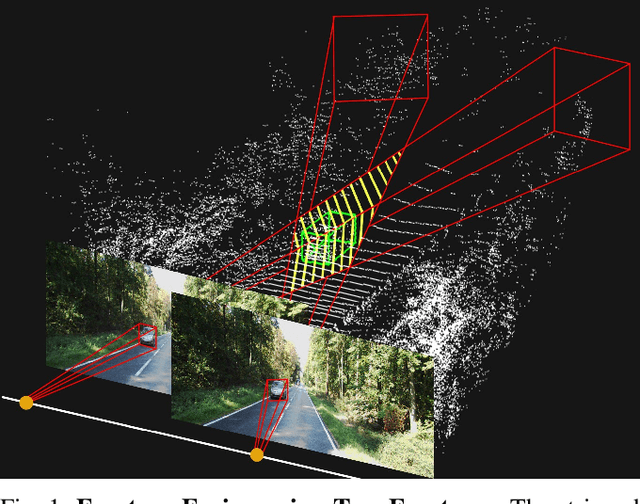

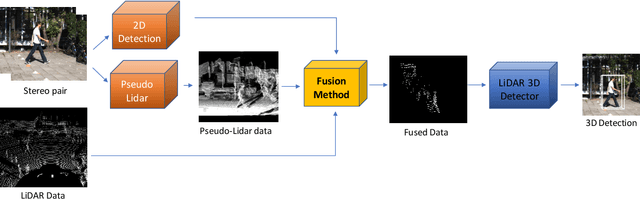

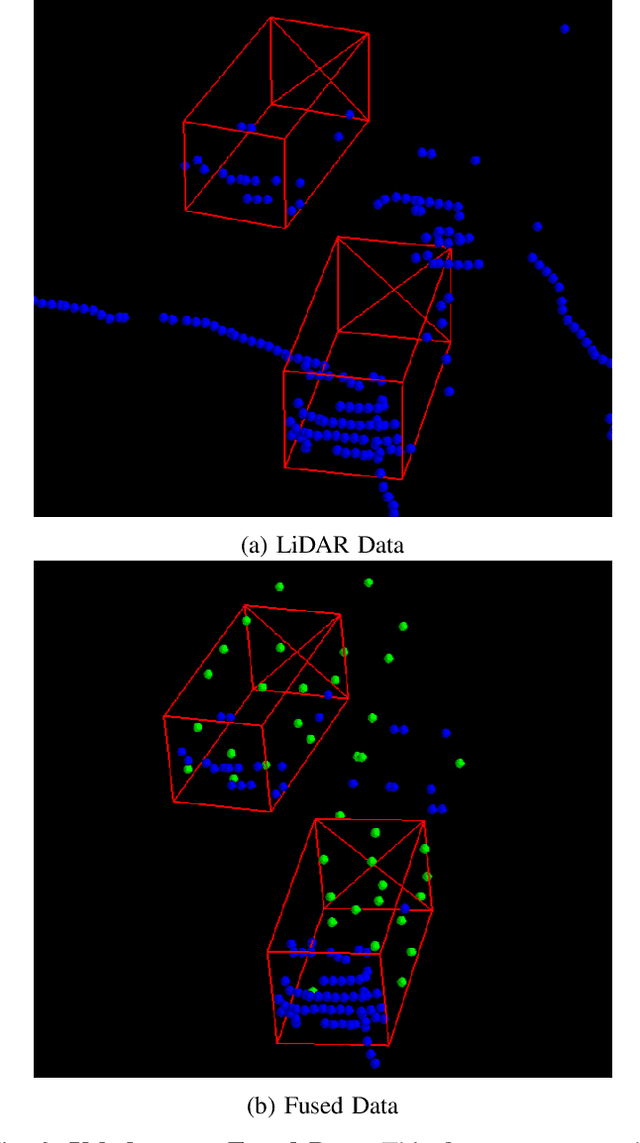

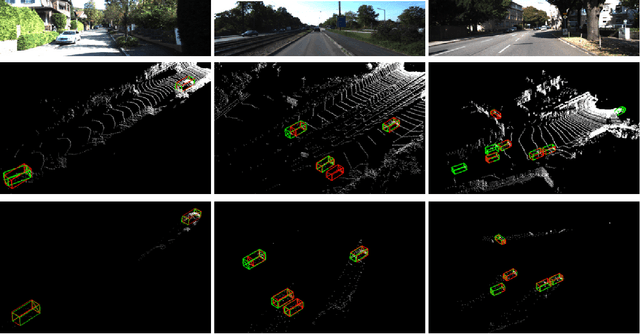

Frustum Fusion: Pseudo-LiDAR and LiDAR Fusion for 3D Detection

Nov 08, 2021

Abstract: Most autonomous vehicles are equipped with LiDAR sensors and stereo cameras. The former are very accurate but generate sparse data, whereas the latter provide dense data with rich texture and color information, from which it is difficult to extract robust 3D representations. In this paper, we propose a novel data fusion algorithm to combine accurate point clouds with dense but less accurate point clouds obtained from stereo pairs. We develop a framework to integrate this algorithm into various 3D object detection methods. Our framework starts with 2D detections from both of the RGB images, calculates frustums and their intersection, creates Pseudo-LiDAR data from the stereo images, and fills in the parts of the intersection region where the LiDAR data is lacking with the dense Pseudo-LiDAR points. We train multiple 3D object detection methods and show that our fusion strategy consistently improves the performance of detectors.
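The Pseudo-LiDAR step can be pictured as back-projecting a stereo disparity map into 3D points. The sketch below shows that standard pinhole-stereo computation only; it does not cover the frustum intersection or the fill-in rule, which the abstract does not specify. The function name `disparity_to_points` and the parameters `focal_px`, `baseline_m`, `cx`, and `cy` are assumptions for this example, as is the use of one focal length for both image axes.

```python
import numpy as np

def disparity_to_points(disparity, focal_px, baseline_m, cx, cy):
    """Back-project a disparity map into camera-frame 3D points.

    disparity: (H, W) array in pixels; non-positive values are skipped.
    focal_px: focal length in pixels (assumed equal for x and y).
    baseline_m: distance between the two stereo cameras in meters.
    cx, cy: principal point in pixels.
    Returns an (N, 3) array of (x, y, z) points in meters.
    """
    # Pixel rows (v) and columns (u) that have a valid disparity.
    v, u = np.nonzero(disparity > 0)
    # Depth from stereo geometry: z = f * B / d.
    z = focal_px * baseline_m / disparity[v, u]
    # Pinhole back-projection of each pixel at its depth.
    x = (u - cx) * z / focal_px
    y = (v - cy) * z / focal_px
    return np.column_stack([x, y, z])
```

Points produced this way are dense but inherit the depth error of the disparity estimate, which grows with distance. That is consistent with the abstract's description of stereo-derived point clouds as dense but less accurate than LiDAR.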

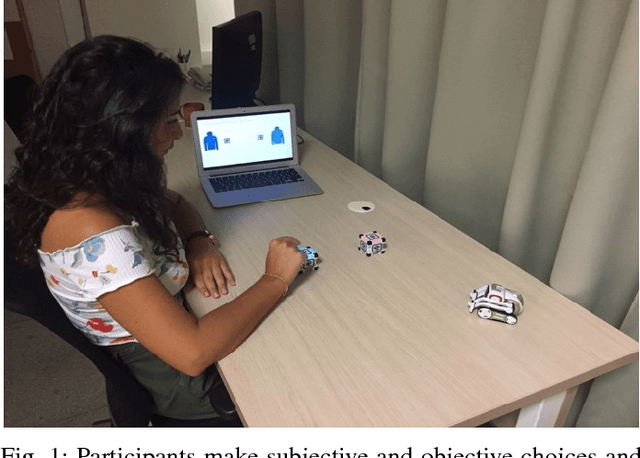

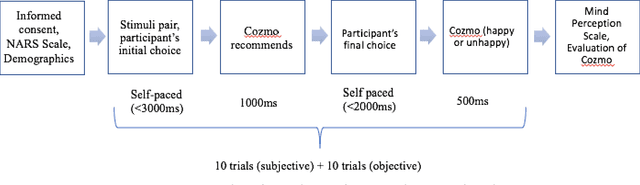

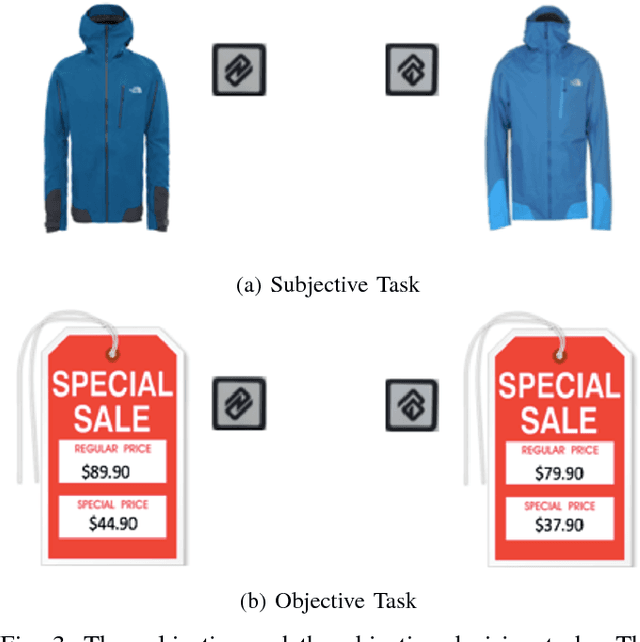

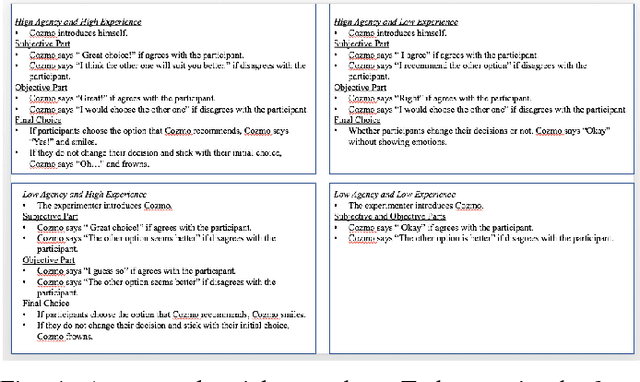

Mind in the Machine: Perceived Minds Induce Decision Change

Nov 02, 2018

Abstract: Recent research on human-robot interaction explored whether people's tendency to conform to others extends to artificial agents (Hertz & Wiese, 2016). However, little is known about the extent to which perceiving a robot as having a mind affects people's decisions. Grounded in the theory of mind perception, the current study proposes that artificial agents can induce decision change to the extent to which individuals perceive them as having minds. By varying the degree to which robots expressed the ability to act (agency) or feel (experience), we specifically investigated the underlying mechanisms of mind attribution to robots and social influence. Our results show an interactive effect of perceived experience and perceived agency on social influence induced by artificial agents. The findings provide preliminary insights regarding autonomous robots' influence on individuals' decisions and form a basis for understanding the underlying dynamics of decision making with robots.