Aswin Suresh

Studying Lobby Influence in the European Parliament

Sep 20, 2023

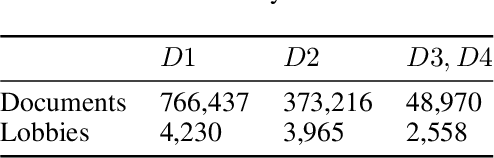

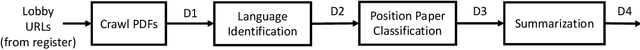

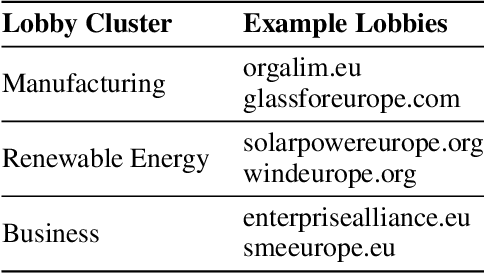

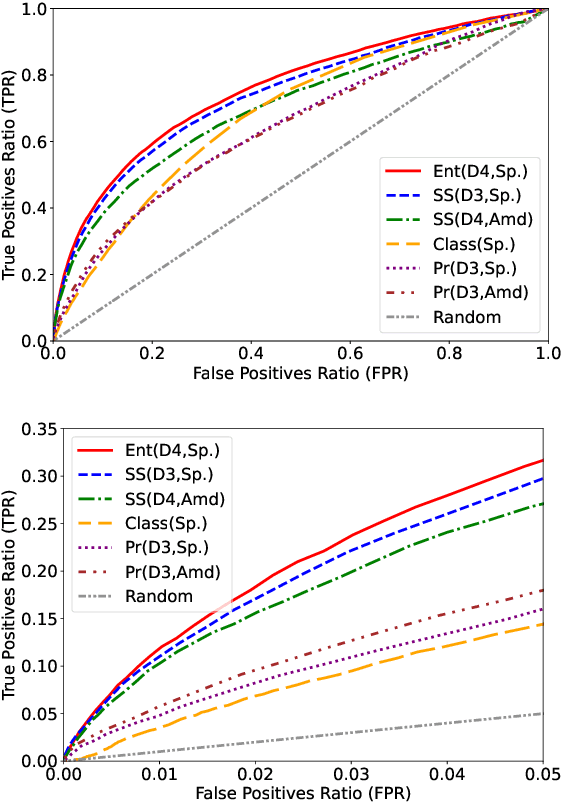

Abstract:We present a method based on natural language processing (NLP), for studying the influence of interest groups (lobbies) in the law-making process in the European Parliament (EP). We collect and analyze novel datasets of lobbies' position papers and speeches made by members of the EP (MEPs). By comparing these texts on the basis of semantic similarity and entailment, we are able to discover interpretable links between MEPs and lobbies. In the absence of a ground-truth dataset of such links, we perform an indirect validation by comparing the discovered links with a dataset, which we curate, of retweet links between MEPs and lobbies, and with the publicly disclosed meetings of MEPs. Our best method achieves an AUC score of 0.77 and performs significantly better than several baselines. Moreover, an aggregate analysis of the discovered links, between groups of related lobbies and political groups of MEPs, correspond to the expectations from the ideology of the groups (e.g., center-left groups are associated with social causes). We believe that this work, which encompasses the methodology, datasets, and results, is a step towards enhancing the transparency of the intricate decision-making processes within democratic institutions.

It's All Relative: Interpretable Models for Scoring Bias in Documents

Jul 16, 2023

Abstract:We propose an interpretable model to score the bias present in web documents, based only on their textual content. Our model incorporates assumptions reminiscent of the Bradley-Terry axioms and is trained on pairs of revisions of the same Wikipedia article, where one version is more biased than the other. While prior approaches based on absolute bias classification have struggled to obtain a high accuracy for the task, we are able to develop a useful model for scoring bias by learning to perform pairwise comparisons of bias accurately. We show that we can interpret the parameters of the trained model to discover the words most indicative of bias. We also apply our model in three different settings - studying the temporal evolution of bias in Wikipedia articles, comparing news sources based on bias, and scoring bias in law amendments. In each case, we demonstrate that the outputs of the model can be explained and validated, even for the two domains that are outside the training-data domain. We also use the model to compare the general level of bias between domains, where we see that legal texts are the least biased and news media are the most biased, with Wikipedia articles in between. Given its high performance, simplicity, interpretability, and wide applicability, we hope the model will be useful for a large community, including Wikipedia and news editors, political and social scientists, and the general public.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge