Ashwin Sanjay Lele

Fusing Frame and Event Vision for High-speed Optical Flow for Edge Application

Jul 21, 2022

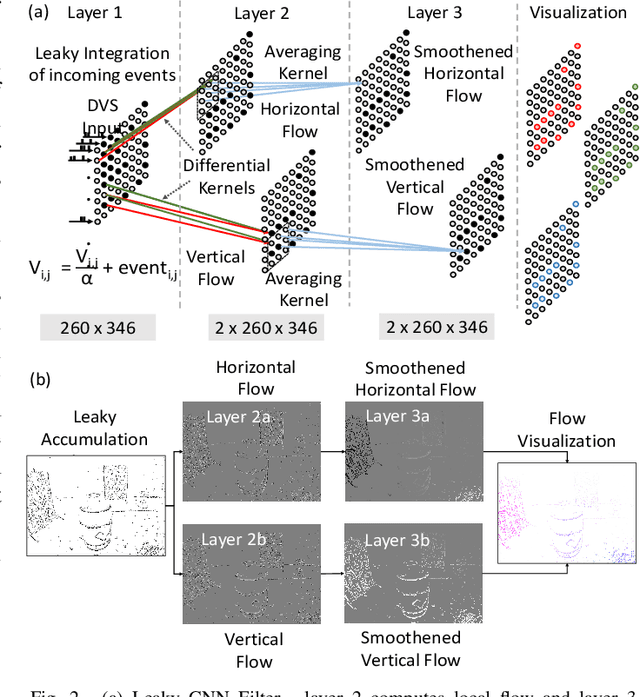

Abstract: Optical flow computation with frame-based cameras provides high accuracy, but the speed is limited either by the model size of the algorithm or by the frame rate of the camera, which makes it inadequate for high-speed applications. Event cameras provide continuous, asynchronous event streams that overcome the frame-rate limitation. However, the algorithms for processing these data either borrow a frame-like setup, which limits the speed, or suffer from lower accuracy. We fuse the complementary accuracy and speed advantages of the frame- and event-based pipelines to provide high-speed optical flow while maintaining a low error rate. Our bio-mimetic network is validated on the MVSEC dataset, showing 19% error degradation at a 4x speedup. We then demonstrate the system in a high-speed drone flight scenario, where a high-speed event camera computes the flow even before the optical camera sees the drone, making it suited for applications like tracking and segmentation. This work shows that the fundamental trade-offs in frame-based processing may be overcome by fusing data from other modalities.

Circuit and System Technologies for Energy-Efficient Edge Robotics

Feb 22, 2022

Abstract: As we march towards the age of ubiquitous intelligence, we note that AI is progressively moving from the cloud to the edge. The success of Edge-AI hinges on innovative circuits and hardware that can enable inference and limited learning in resource-constrained autonomous edge systems. This paper introduces a series of ultra-low-power accelerator and system designs for enabling intelligence in edge robotic platforms, including reinforcement learning neuromorphic control, swarm intelligence, and simultaneous localization and mapping. We emphasize the impact of mixed-signal circuits, neuro-inspired computing systems, benchmarking and software infrastructure, and algorithm-hardware co-design in realizing the most energy-efficient Edge-AI ASICs for next-generation intelligent and autonomous systems.

Bio-inspired Gait Imitation of Hexapod Robot Using Event-Based Vision Sensor and Spiking Neural Network

Apr 11, 2020

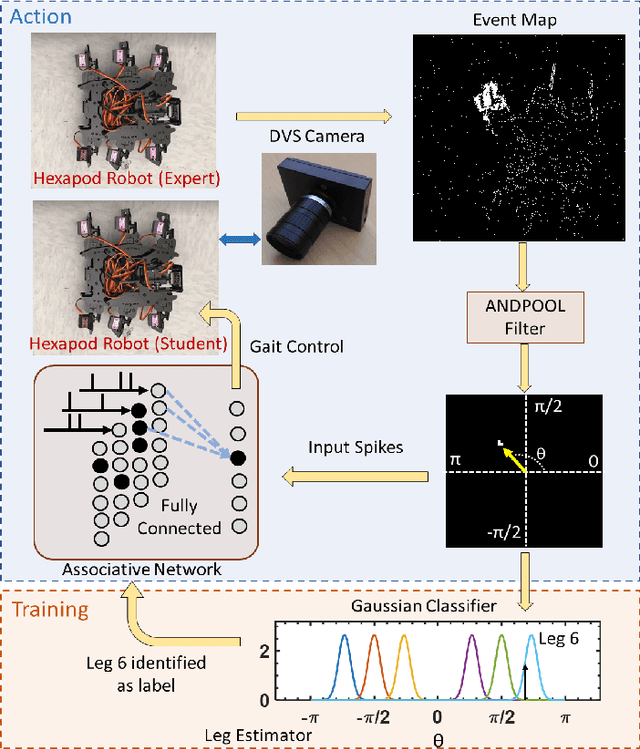

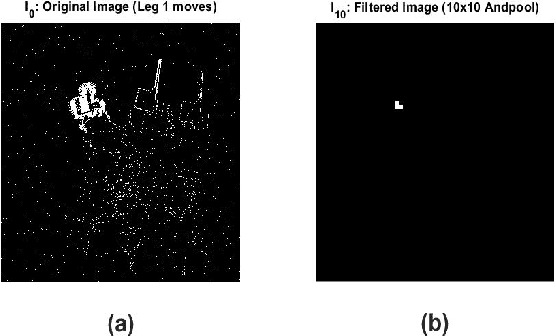

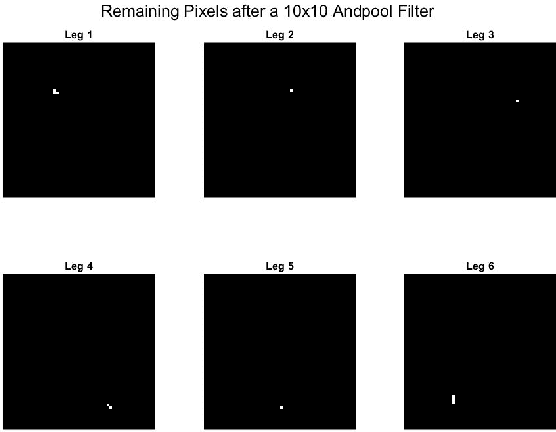

Abstract: Learning how to walk is a sophisticated neurological task for most animals. To walk, the brain must integrate multiple cortices, neural circuits, and diverse sensory inputs. Some animals, like humans, imitate surrounding individuals to speed up their learning. When humans watch their peers, visual data is processed by the visual cortex. This complex problem of imitation-based learning forms associations between visual data and muscle actuation through Central Pattern Generation (CPG). Reproducing this imitation phenomenon on low-power, energy-constrained robots that are learning to walk remains challenging and unexplored. We propose a bio-inspired feed-forward approach based on neuromorphic computing and event-based vision to address the gait imitation problem. The proposed method trains a "student" hexapod to walk by watching an "expert" hexapod moving its legs. The student processes the flow of Dynamic Vision Sensor (DVS) data with a one-layer Spiking Neural Network (SNN). The student's SNN successfully imitates the expert within a short convergence time of ten iterations and exhibits energy efficiency at the sub-microjoule level.
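To make "processing the flow of DVS data with a one-layer SNN" concrete, here is a minimal sketch of one generic way such a layer can consume events. It is an illustration, not the paper's implementation. The following are all assumptions introduced for this example:

- leaky integrate-and-fire (LIF) neurons;
- a fully connected pixel-to-neuron weight matrix;
- the time constant and threshold values;
- ignoring event polarity;
- the hypothetical names `Event`, `LIFLayer`, and `run`.

```python
import math
from dataclasses import dataclass


@dataclass
class Event:
    """A single DVS event (hypothetical container; polarity omitted in this sketch)."""
    x: int
    y: int
    t_us: int  # timestamp in microseconds


class LIFLayer:
    """One fully connected layer of leaky integrate-and-fire (LIF) neurons.

    Each input is one sensor pixel; an event at that pixel adds the matching
    weight to every output neuron's membrane potential.
    """

    def __init__(self, weights, tau_us=20_000.0, threshold=1.0):
        self.weights = weights          # weights[j][i]: pixel i -> output neuron j
        self.tau_us = tau_us            # membrane time constant (assumed value)
        self.threshold = threshold      # firing threshold (assumed value)
        self.v = [0.0] * len(weights)   # membrane potentials
        self.last_t = None              # timestamp of the previous event

    def step(self, pixel, t_us):
        """Process one event and return the indices of the neurons that fired."""
        if self.last_t is not None:
            # Exponential leak over the time elapsed since the previous event.
            decay = math.exp(-(t_us - self.last_t) / self.tau_us)
            self.v = [v * decay for v in self.v]
        self.last_t = t_us
        fired = []
        for j, row in enumerate(self.weights):
            self.v[j] += row[pixel]
            if self.v[j] >= self.threshold:
                fired.append(j)
                self.v[j] = 0.0         # reset the membrane after a spike
        return fired


def run(events, layer, width):
    """Feed a time-ordered event stream through the layer; return (t_us, neuron) spikes."""
    spikes = []
    for ev in events:
        for j in layer.step(ev.y * width + ev.x, ev.t_us):
            spikes.append((ev.t_us, j))
    return spikes


# Toy 2x2 sensor: neuron 0 watches the left column, neuron 1 the right column.
w = [[0.6, 0.0, 0.6, 0.0],
     [0.0, 0.6, 0.0, 0.6]]
events = [Event(0, 0, 0), Event(0, 1, 1_000), Event(1, 0, 100_000)]
print(run(events, LIFLayer(w), width=2))  # [(1000, 0)]
```

Two closely spaced events in the left column push neuron 0 over threshold. The lone right-column event arrives after the membranes have leaked and does not fire neuron 1. This event-driven update (work only when an event arrives) is the property that makes SNNs attractive for the energy budgets mentioned above.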

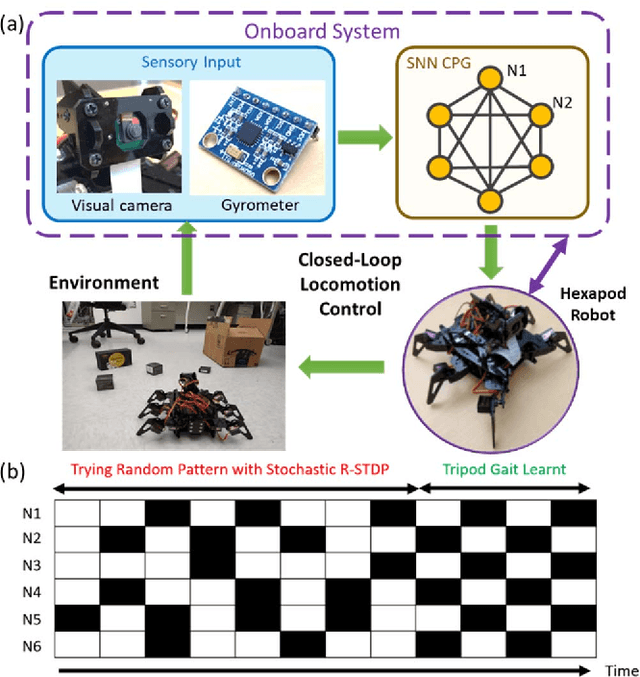

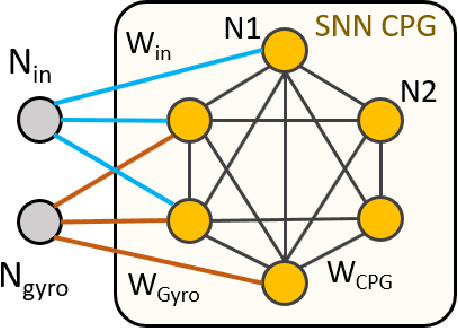

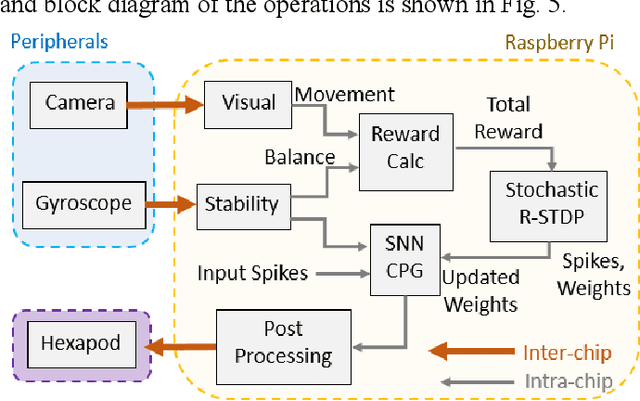

Learning to Walk: Spike Based Reinforcement Learning for Hexapod Robot Central Pattern Generation

Mar 22, 2020

Abstract: Learning to walk, i.e., learning locomotion under performance and energy constraints, continues to be a challenge in legged robotics. Techniques such as stochastic gradient methods and deep reinforcement learning (RL) have been explored for bipeds, quadrupeds, and hexapods. These techniques are computationally intensive and often prohibitive for edge applications. They also rely on complex sensors and data pre-processing, which further increases energy and latency. Recent advances in spiking neural networks (SNNs) promise a significant reduction in computation owing to the sparse firing of neurons, and SNNs have been shown to integrate reinforcement learning mechanisms with biologically observed spike-timing-dependent plasticity (STDP). However, training a legged robot to walk by learning the synchronization patterns of central pattern generators (CPG) in an SNN framework has not been shown. Doing so could marry the efficiency of SNNs with the synchronized locomotion of CPG-based systems, providing breakthrough end-to-end learning in mobile robotics. In this paper, we propose a reinforcement-based stochastic weight update technique for training a spiking CPG. The whole system is implemented on a lightweight Raspberry Pi platform with integrated sensors, opening up new possibilities for energy-efficient learning on edge robots.
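The abstract does not spell out the update rule, so the sketch below is only a generic stand-in showing what "reward-driven stochastic weight updates on a spiking CPG" can look like. It uses a plain accept-if-better random weight perturbation, i.e. a (1+1) hill climber, which is not the authors' technique.

The following are all hypothetical:

- the toy discrete-time LIF network;
- the parameter values;
- the reward (favouring steps where exactly one neuron fires, loosely mimicking the two alternating leg groups of a hexapod tripod gait);
- the names `simulate_cpg`, `alternation_reward`, and `train`.

```python
import random


def simulate_cpg(w, steps=200, drive=0.15, leak=0.9, threshold=1.0):
    """Simulate a toy discrete-time spiking CPG of len(w) LIF neurons.

    w[i][j] is the synaptic weight from neuron j to neuron i (negative = inhibitory).
    All neurons get the same constant drive. Returns one 0/1 spike vector per step.
    """
    n = len(w)
    v = [0.1 * i for i in range(n)]  # staggered start potentials break symmetry
    prev = [0] * n
    raster = []
    for _ in range(steps):
        spikes = [0] * n
        for i in range(n):
            syn = sum(w[i][j] * prev[j] for j in range(n))
            v[i] = leak * v[i] + drive + syn
            if v[i] >= threshold:
                spikes[i] = 1
                v[i] = 0.0  # reset after firing
        raster.append(spikes)
        prev = spikes
    return raster


def alternation_reward(raster):
    """Hypothetical reward: +1 per step where exactly one neuron fires, -1 when several fire together."""
    return sum(1 if sum(s) == 1 else -1 if sum(s) > 1 else 0 for s in raster)


def train(w, episodes=200, sigma=0.1, seed=0):
    """Stochastic weight update: add Gaussian noise, keep the candidate only if the reward improves.

    Self-connections (the diagonal) are held at zero in every candidate.
    """
    rng = random.Random(seed)
    best = alternation_reward(simulate_cpg(w))
    for _ in range(episodes):
        candidate = [
            [wij + rng.gauss(0.0, sigma) if i != j else 0.0 for j, wij in enumerate(row)]
            for i, row in enumerate(w)
        ]
        r = alternation_reward(simulate_cpg(candidate))
        if r > best:
            w, best = candidate, r
    return w, best


# Two initially uncoupled neurons, e.g. one per tripod leg group.
w0 = [[0.0, 0.0], [0.0, 0.0]]
print("initial reward:", alternation_reward(simulate_cpg(w0)))
w, best = train(w0)
print("trained reward:", best, "weights:", w)
```

Because a candidate is accepted only when it strictly improves the reward, the returned reward is never worse than the starting one. Richer schemes, such as reward-modulated STDP, would replace the accept/reject step with local, spike-timing-based updates.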
