Ashish Jaiswal

Understanding Cognitive Fatigue from fMRI Scans with Self-supervised Learning

Jun 30, 2021

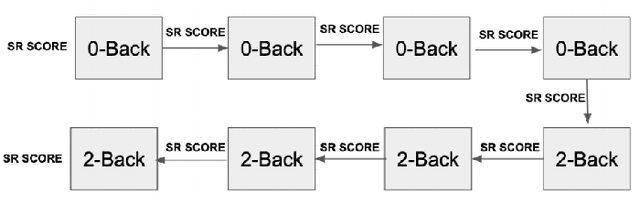

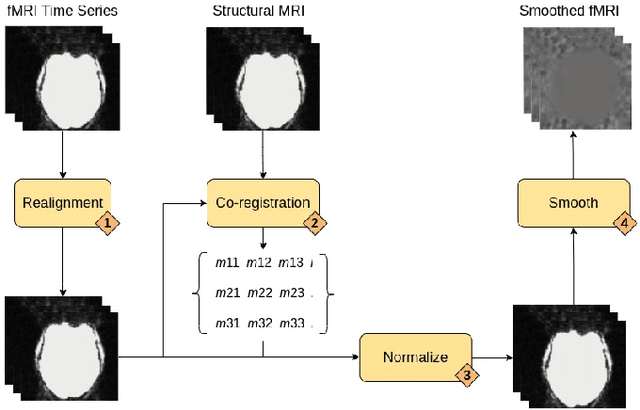

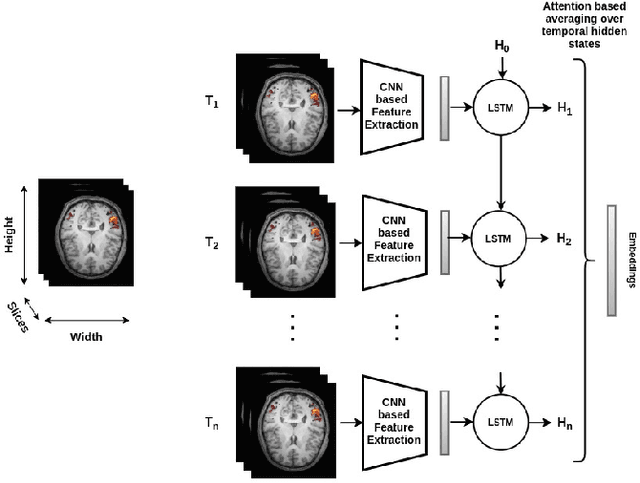

Abstract: Functional magnetic resonance imaging (fMRI) is a neuroimaging technique that records neural activations in the brain by capturing blood oxygen levels in different regions while a subject performs a task. The problem of predicting a person's state of cognitive fatigue from fMRI data has not been investigated to its full extent. This paper proposes tackling this issue as a multi-class classification problem by dividing cognitive fatigue into six levels, ranging from no fatigue to extreme fatigue. We built a spatio-temporal model that uses convolutional neural networks (CNNs) for spatial feature extraction and a long short-term memory (LSTM) network for temporal modeling of 4D fMRI scans. We also applied a self-supervised method called MoCo to pre-train our model on the public BOLD5000 dataset and then fine-tuned it on our labeled dataset to classify cognitive fatigue. Our novel dataset contains fMRI scans from Traumatic Brain Injury (TBI) patients and healthy controls (HCs) recorded while they performed a series of cognitive tasks. This method establishes a state-of-the-art technique for analyzing cognitive fatigue from fMRI data and outperforms previous approaches to this problem.
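
MoCo pre-training rests on two mechanisms: a key encoder whose weights are a momentum (exponential moving) average of the query encoder, and a first-in, first-out queue of encoded keys that supplies the negatives. The sketch below illustrates both in plain Python. It is not the authors' implementation: encoder parameters are flattened into a list for simplicity, the names `momentum_update` and `KeyQueue` are hypothetical, and `m = 0.999` is the default from the original MoCo paper rather than a value stated in this abstract.

```python
from collections import deque
from typing import Deque, List

Vector = List[float]


def momentum_update(key_params: Vector, query_params: Vector, m: float = 0.999) -> Vector:
    """Return key-encoder parameters updated as m * key + (1 - m) * query.

    Only the query encoder receives gradients in MoCo; the key encoder
    follows it as an exponential moving average. Parameters are flattened
    into a plain list here for illustration (assumption).
    """
    if len(key_params) != len(query_params):
        raise ValueError("both encoders must have the same number of parameters")
    return [m * k + (1.0 - m) * q for k, q in zip(key_params, query_params)]


class KeyQueue:
    """Hypothetical FIFO dictionary of negative keys (newest in, oldest out)."""

    def __init__(self, max_size: int) -> None:
        # A bounded deque drops the oldest keys automatically once it is full.
        self.keys: Deque[Vector] = deque(maxlen=max_size)

    def enqueue(self, batch_keys: List[Vector]) -> None:
        self.keys.extend(batch_keys)

    def negatives(self) -> List[Vector]:
        return list(self.keys)


if __name__ == "__main__":
    print(momentum_update([0.0, 1.0], [1.0, 1.0], m=0.5))  # [0.5, 1.0]
    queue = KeyQueue(max_size=3)
    queue.enqueue([[1.0], [2.0]])
    queue.enqueue([[3.0], [4.0]])
    print(queue.negatives())  # [[2.0], [3.0], [4.0]]
```

In a full training loop, the query encoder is updated by gradient descent, the momentum update is applied to the key encoder after each step, and the current batch's keys are enqueued once the contrastive loss has been computed.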

Automated system to measure Tandem Gait to assess executive functions in children

Dec 28, 2020

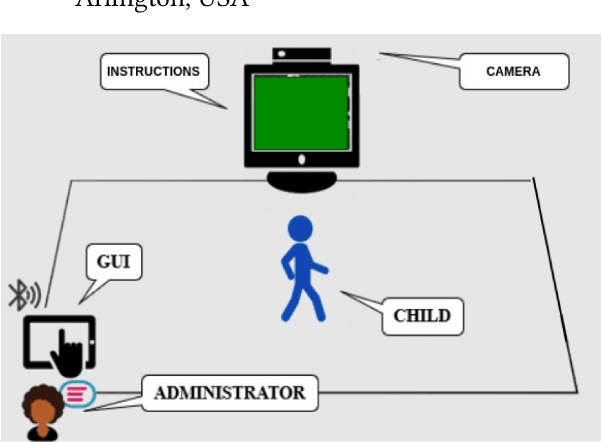

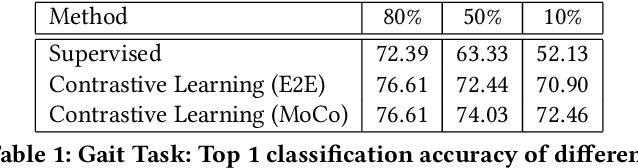

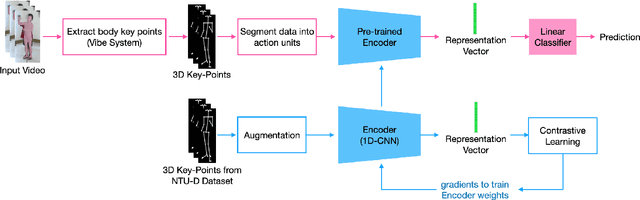

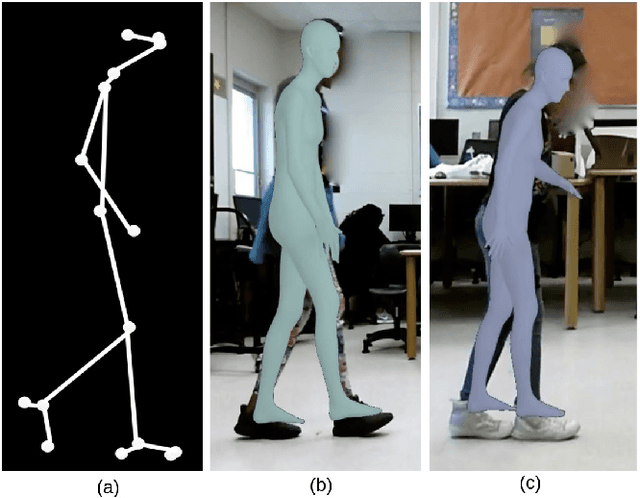

Abstract: As mobile technologies have become ubiquitous in recent years, computer-based cognitive tests have become more popular and efficient. In this work, we focus on assessing motor function in children by analyzing their gait movements. Although there has been a lot of research on designing automated assessment systems for gait analysis, most of these efforts use obtrusive wearable sensors to measure body movements. We have devised a computer vision-based assessment system that requires only a camera, which makes it easier to deploy in school or home environments. We created a dataset of 27 children performing the test. Furthermore, to improve the accuracy of the system, a deep learning model was pre-trained on the NTU-RGB+D 120 dataset and then fine-tuned on our gait dataset. The results highlight the efficacy of the proposed work for automating the assessment of children's performance, achieving 76.61% classification accuracy.

A Survey on Contrastive Self-supervised Learning

Oct 31, 2020

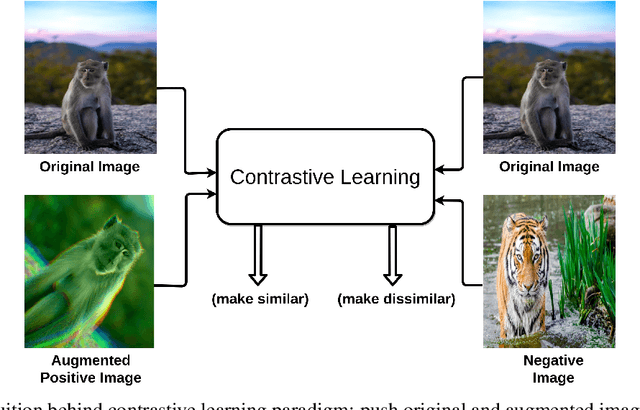

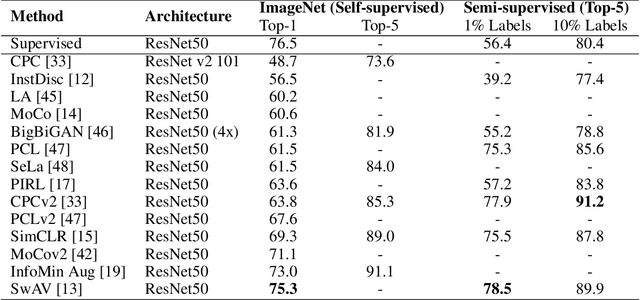

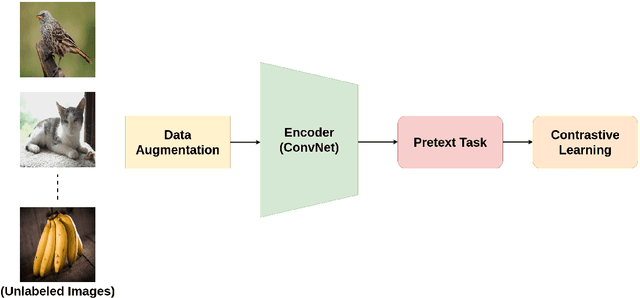

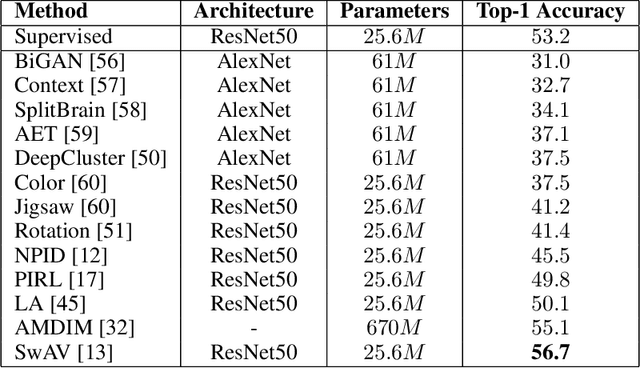

Abstract: Self-supervised learning has gained popularity because of its ability to avoid the cost of annotating large-scale datasets. It can adopt self-defined pseudo-labels as supervision and use the learned representations for several downstream tasks. In particular, contrastive learning has recently become a dominant component of self-supervised learning methods for computer vision, natural language processing (NLP), and other domains. It aims to embed augmented versions of the same sample close to each other while pushing apart embeddings of different samples. This paper provides an extensive review of self-supervised methods that follow the contrastive approach. It explains the pretext tasks commonly used in a contrastive learning setup, followed by the different architectures that have been proposed so far. Next, we compare the performance of different methods on multiple downstream tasks such as image classification, object detection, and action recognition. Finally, we conclude with the limitations of current methods and the need for further techniques and future directions to make substantial progress.
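
The objective of pulling augmented views of the same sample together while pushing other samples apart is commonly implemented as the InfoNCE loss: a cross-entropy over similarity scores in which the positive view is the correct class and the other samples are negatives. The following is a minimal single-anchor sketch in plain Python, assuming cosine similarity and an illustrative temperature of 0.1; the function names are hypothetical and this is not code from the survey.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("embeddings must be non-zero vectors")
    return sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)


def info_nce_loss(
    anchor: Sequence[float],
    positive: Sequence[float],
    negatives: Sequence[Sequence[float]],
    temperature: float = 0.1,  # illustrative value (assumption)
) -> float:
    """InfoNCE for one anchor: cross-entropy where the positive is the correct class."""
    logits = [cosine_similarity(anchor, positive) / temperature]
    logits += [cosine_similarity(anchor, neg) / temperature for neg in negatives]
    # Numerically stable log-sum-exp over the positive and negative logits.
    max_logit = max(logits)
    log_sum_exp = max_logit + math.log(sum(math.exp(z - max_logit) for z in logits))
    return log_sum_exp - logits[0]


if __name__ == "__main__":
    anchor = [1.0, 0.0]
    close = info_nce_loss(anchor, [0.9, 0.1], [[-1.0, 0.0], [0.0, 1.0]])
    far = info_nce_loss(anchor, [0.0, 1.0], [[0.9, 0.1], [-1.0, 0.0]])
    print(close < far)  # True: the loss is lower when the positive is the most similar
```

Because the loss is a cross-entropy, it can only reach zero when the positive dominates every negative, which is what drives embeddings of augmented views of the same sample together.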

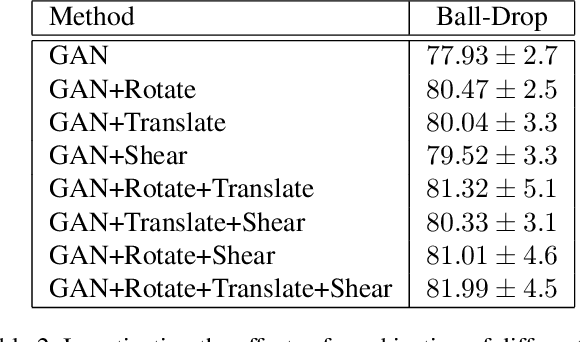

Self-Supervised Human Activity Recognition by Augmenting Generative Adversarial Networks

Aug 26, 2020

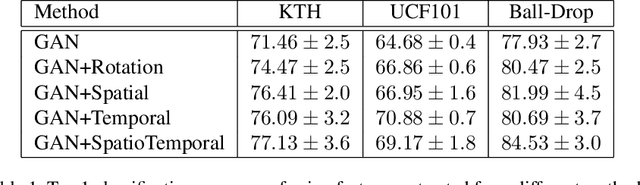

Abstract: This article proposes a novel approach for augmenting a generative adversarial network (GAN) with a self-supervised task in order to improve its ability to encode video representations that are useful in downstream tasks such as human activity recognition. In the proposed method, input video frames are randomly transformed by spatial transformations, such as rotation, translation, and shearing, or by temporal transformations, such as shuffling the temporal order of frames. The discriminator is then encouraged to predict the applied transformation through an auxiliary loss. Results on the KTH, UCF101, and Ball-Drop datasets show that the proposed method outperforms baseline methods in providing useful video representations for human activity recognition. Ball-Drop is a dataset specifically designed for measuring executive functions in children through physically and cognitively demanding tasks. Using features from the proposed method instead of the baseline methods increased top-1 classification accuracy by more than 4%. Moreover, an ablation study was performed to investigate the contribution of the different transformations to the downstream task.
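
The self-supervised augmentation described above can be sketched as a generator of pretext labels plus an extra loss term on the discriminator. This is an illustrative sketch only, not the article's code: it assumes grayscale frames stored as nested lists, a 90-degree clockwise rotation and a one-pixel translation (the abstract gives no magnitudes), omits shearing for brevity, and weights the auxiliary term with a hypothetical `aux_weight`. The discriminator's transformation logits are taken as given rather than produced by a real network.

```python
import math
import random
from typing import Callable, List, Sequence, Tuple

Frame = List[List[float]]  # one grayscale frame as rows of pixel values (assumption)
Video = List[Frame]
Transform = Callable[[Video, random.Random], Video]


def rotate_90(video: Video, rng: random.Random) -> Video:
    # Spatial: rotate every frame 90 degrees clockwise (angle is an assumption).
    # rng is unused; it is kept so all transforms share one signature.
    return [[list(row) for row in zip(*frame[::-1])] for frame in video]


def translate_right(video: Video, rng: random.Random) -> Video:
    # Spatial: shift every frame one pixel right, zero-filling the left column.
    return [[[0.0] + row[:-1] for row in frame] for frame in video]


def shuffle_frames(video: Video, rng: random.Random) -> Video:
    # Temporal: permute the frame order; each frame's pixels are unchanged.
    shuffled = list(video)
    rng.shuffle(shuffled)
    return shuffled


# The index in this list is the pretext label the discriminator must predict.
TRANSFORMS: List[Transform] = [rotate_90, translate_right, shuffle_frames]


def make_pretext_example(video: Video, rng: random.Random) -> Tuple[Video, int]:
    """Apply one randomly chosen transformation and return (video, label)."""
    label = rng.randrange(len(TRANSFORMS))
    return TRANSFORMS[label](video, rng), label


def cross_entropy(logits: Sequence[float], label: int) -> float:
    max_logit = max(logits)
    log_z = max_logit + math.log(sum(math.exp(z - max_logit) for z in logits))
    return log_z - logits[label]


def discriminator_loss(
    adversarial_loss: float,
    transform_logits: Sequence[float],
    label: int,
    aux_weight: float = 1.0,  # hypothetical weighting of the auxiliary term
) -> float:
    """Usual real/fake GAN loss plus the auxiliary transformation-prediction loss."""
    return adversarial_loss + aux_weight * cross_entropy(transform_logits, label)


if __name__ == "__main__":
    rng = random.Random(0)
    clip = [[[1.0, 2.0], [3.0, 4.0]]]  # a single 2x2 frame
    print(rotate_90(clip, rng))  # [[[3.0, 1.0], [4.0, 2.0]]]
    print(translate_right(clip, rng))  # [[[0.0, 1.0], [0.0, 3.0]]]
    transformed, label = make_pretext_example(clip, rng)
    print(label, discriminator_loss(0.7, [2.0, 0.5, -1.0], label))
```

Attaching the pretext task to the discriminator, rather than training a separate network, means the same features that separate real from generated videos must also encode spatial and temporal structure, which is what makes them reusable for activity recognition.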
