Ashfaq Khokhar

Root Cause Analysis of Hydrogen Bond Separation in Spatio-Temporal Molecular Dynamics using Causal Models

Aug 17, 2025Abstract:Molecular dynamics simulations (MDS) face challenges, including resource-heavy computations and the need to manually scan outputs to detect "interesting events," such as the formation and persistence of hydrogen bonds between atoms of different molecules. A critical research gap lies in identifying the underlying causes of hydrogen bond formation and separation -understanding which interactions or prior events contribute to their emergence over time. With this challenge in mind, we propose leveraging spatio-temporal data analytics and machine learning models to enhance the detection of these phenomena. In this paper, our approach is inspired by causal modeling and aims to identify the root cause variables of hydrogen bond formation and separation events. Specifically, we treat the separation of hydrogen bonds as an "intervention" occurring and represent the causal structure of the bonding and separation events in the MDS as graphical causal models. These causal models are built using a variational autoencoder-inspired architecture that enables us to infer causal relationships across samples with diverse underlying causal graphs while leveraging shared dynamic information. We further include a step to infer the root causes of changes in the joint distribution of the causal models. By constructing causal models that capture shifts in the conditional distributions of molecular interactions during bond formation or separation, this framework provides a novel perspective on root cause analysis in molecular dynamic systems. We validate the efficacy of our model empirically on the atomic trajectories that used MDS for chiral separation, demonstrating that we can predict many steps in the future and also find the variables driving the observed changes in the system.

GCN-Driven Reinforcement Learning for Probabilistic Real-Time Guarantees in Industrial URLLC

Jun 17, 2025

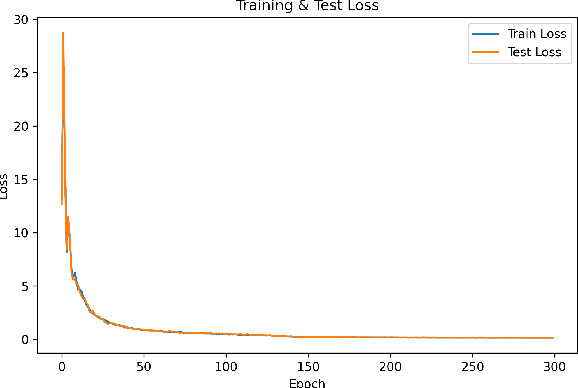

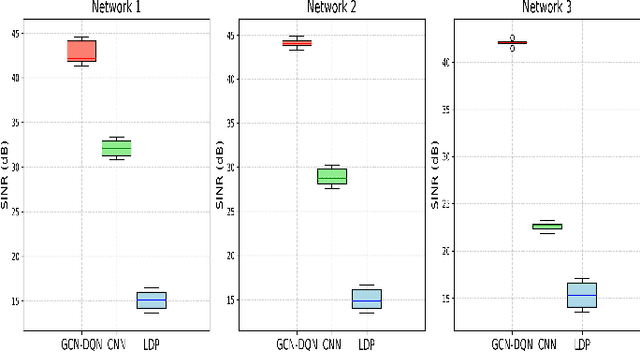

Abstract:Ensuring packet-level communication quality is vital for ultra-reliable, low-latency communications (URLLC) in large-scale industrial wireless networks. We enhance the Local Deadline Partition (LDP) algorithm by introducing a Graph Convolutional Network (GCN) integrated with a Deep Q-Network (DQN) reinforcement learning framework for improved interference coordination in multi-cell, multi-channel networks. Unlike LDP's static priorities, our approach dynamically learns link priorities based on real-time traffic demand, network topology, remaining transmission opportunities, and interference patterns. The GCN captures spatial dependencies, while the DQN enables adaptive scheduling decisions through reward-guided exploration. Simulation results show that our GCN-DQN model achieves mean SINR improvements of 179.6\%, 197.4\%, and 175.2\% over LDP across three network configurations. Additionally, the GCN-DQN model demonstrates mean SINR improvements of 31.5\%, 53.0\%, and 84.7\% over our previous CNN-based approach across the same configurations. These results underscore the effectiveness of our GCN-DQN model in addressing complex URLLC requirements with minimal overhead and superior network performance.

CNN-Enabled Scheduling for Probabilistic Real-Time Guarantees in Industrial URLLC

Jun 17, 2025Abstract:Ensuring packet-level communication quality is vital for ultra-reliable, low-latency communications (URLLC) in large-scale industrial wireless networks. We enhance the Local Deadline Partition (LDP) algorithm by introducing a CNN-based dynamic priority prediction mechanism for improved interference coordination in multi-cell, multi-channel networks. Unlike LDP's static priorities, our approach uses a Convolutional Neural Network and graph coloring to adaptively assign link priorities based on real-time traffic, transmission opportunities, and network conditions. Assuming that first training phase is performed offline, our approach introduced minimal overhead, while enabling more efficient resource allocation, boosting network capacity, SINR, and schedulability. Simulation results show SINR gains of up to 113\%, 94\%, and 49\% over LDP across three network configurations, highlighting its effectiveness for complex URLLC scenarios.

OSI Stack Redesign for Quantum Networks: Requirements, Technologies, Challenges, and Future Directions

Jun 13, 2025Abstract:Quantum communication is poised to become a foundational element of next-generation networking, offering transformative capabilities in security, entanglement-based connectivity, and computational offloading. However, the classical OSI model-designed for deterministic and error-tolerant systems-cannot support quantum-specific phenomena such as coherence fragility, probabilistic entanglement, and the no-cloning theorem. This paper provides a comprehensive survey and proposes an architectural redesign of the OSI model for quantum networks in the context of 7G. We introduce a Quantum-Converged OSI stack by extending the classical model with Layer 0 (Quantum Substrate) and Layer 8 (Cognitive Intent), supporting entanglement, teleportation, and semantic orchestration via LLMs and QML. Each layer is redefined to incorporate quantum mechanisms such as enhanced MAC protocols, fidelity-aware routing, and twin-based applications. This survey consolidates over 150 research works from IEEE, ACM, MDPI, arXiv, and Web of Science (2018-2025), classifying them by OSI layer, enabling technologies such as QKD, QEC, PQC, and RIS, and use cases such as satellite QKD, UAV swarms, and quantum IoT. A taxonomy of cross-layer enablers-such as hybrid quantum-classical control, metadata-driven orchestration, and blockchain-integrated quantum trust-is provided, along with simulation tools including NetSquid, QuNetSim, and QuISP. We present several domain-specific applications, including quantum healthcare telemetry, entangled vehicular networks, and satellite mesh overlays. An evaluation framework is proposed based on entropy throughput, coherence latency, and entanglement fidelity. Key future directions include programmable quantum stacks, digital twins, and AI-defined QNet agents, laying the groundwork for a scalable, intelligent, and quantum-compliant OSI framework for 7G and beyond.

Enhanced Position Estimation in Tactile Internet-Enabled Remote Robotic Surgery Using MOESP-Based Kalman Filter

Jan 27, 2025

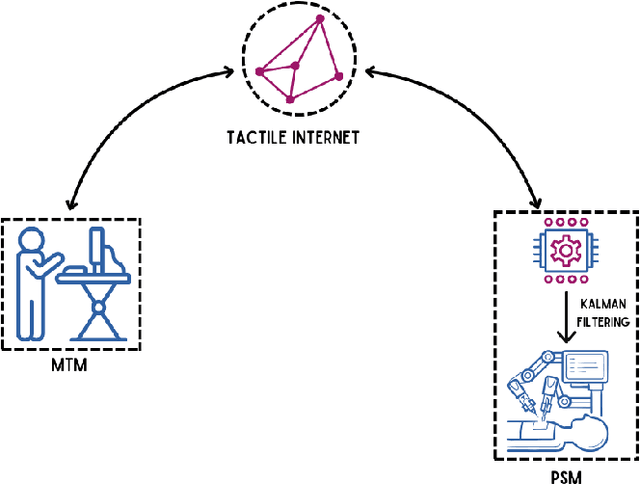

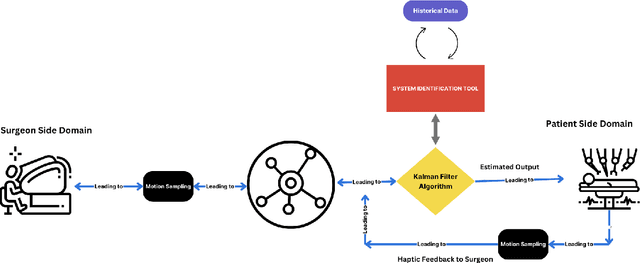

Abstract:Accurately estimating the position of a patient's side robotic arm in real time during remote surgery is a significant challenge, especially within Tactile Internet (TI) environments. This paper presents a new and efficient method for position estimation using a Kalman Filter (KF) combined with the Multivariable Output-Error State Space (MOESP) method for system identification. Unlike traditional approaches that require prior knowledge of the system's dynamics, this study uses the JIGSAW dataset, a comprehensive collection of robotic surgical data, along with input from the Master Tool Manipulator (MTM) to derive the state-space model directly. The MOESP method allows accurate modeling of the Patient Side Manipulator (PSM) dynamics without prior system models, improving the KF's performance under simulated network conditions, including delays, jitter, and packet loss. These conditions mimic real-world challenges in Tactile Internet applications. The findings demonstrate the KF's improved resilience and accuracy in state estimation, achieving over 95 percent accuracy despite network-induced uncertainties.

A Predictive Approach for Enhancing Accuracy in Remote Robotic Surgery Using Informer Model

Jan 24, 2025Abstract:Precise and real-time estimation of the robotic arm's position on the patient's side is essential for the success of remote robotic surgery in Tactile Internet (TI) environments. This paper presents a prediction model based on the Transformer-based Informer framework for accurate and efficient position estimation. Additionally, it combines a Four-State Hidden Markov Model (4-State HMM) to simulate realistic packet loss scenarios. The proposed approach addresses challenges such as network delays, jitter, and packet loss to ensure reliable and precise operation in remote surgical applications. The method integrates the optimization problem into the Informer model by embedding constraints such as energy efficiency, smoothness, and robustness into its training process using a differentiable optimization layer. The Informer framework uses features such as ProbSparse attention, attention distilling, and a generative-style decoder to focus on position-critical features while maintaining a low computational complexity of O(L log L). The method is evaluated using the JIGSAWS dataset, achieving a prediction accuracy of over 90 percent under various network scenarios. A comparison with models such as TCN, RNN, and LSTM demonstrates the Informer framework's superior performance in handling position prediction and meeting real-time requirements, making it suitable for Tactile Internet-enabled robotic surgery.

Adaptive Context-Aware Multi-Path Transmission Control for VR/AR Content: A Deep Reinforcement Learning Approach

Dec 27, 2024

Abstract:This paper introduces the Adaptive Context-Aware Multi-Path Transmission Control Protocol (ACMPTCP), an efficient approach designed to optimize the performance of Multi-Path Transmission Control Protocol (MPTCP) for data-intensive applications such as augmented and virtual reality (AR/VR) streaming. ACMPTCP addresses the limitations of conventional MPTCP by leveraging deep reinforcement learning (DRL) for agile end-to-end path management and optimal bandwidth allocation, facilitating path realignment across diverse network environments.

Efficient VoIP Communications through LLM-based Real-Time Speech Reconstruction and Call Prioritization for Emergency Services

Dec 09, 2024Abstract:Emergency communication systems face disruptions due to packet loss, bandwidth constraints, poor signal quality, delays, and jitter in VoIP systems, leading to degraded real-time service quality. Victims in distress often struggle to convey critical information due to panic, speech disorders, and background noise, further complicating dispatchers' ability to assess situations accurately. Staffing shortages in emergency centers exacerbate delays in coordination and assistance. This paper proposes leveraging Large Language Models (LLMs) to address these challenges by reconstructing incomplete speech, filling contextual gaps, and prioritizing calls based on severity. The system integrates real-time transcription with Retrieval-Augmented Generation (RAG) to generate contextual responses, using Twilio and AssemblyAI APIs for seamless implementation. Evaluation shows high precision, favorable BLEU and ROUGE scores, and alignment with real-world needs, demonstrating the model's potential to optimize emergency response workflows and prioritize critical cases effectively.

Enhancing Precision in Tactile Internet-Enabled Remote Robotic Surgery: Kalman Filter Approach

Jun 06, 2024Abstract:Accurately estimating the position of a patient's side robotic arm in real time in a remote surgery task is a significant challenge, particularly in Tactile Internet (TI) environments. This paper presents a Kalman Filter (KF) based computationally efficient position estimation method. The study also assume no prior knowledge of the dynamic system model of the robotic arm system. Instead, The JIGSAW dataset, which is a comprehensive collection of robotic surgical data, and the Master Tool Manipulator's (MTM) input are utilized to learn the system model using System Identification (SI) toolkit available in Matlab. We further investigate the effectiveness of KF to determine the position of the Patient Side Manipulator (PSM) under simulated network conditions that include delays, jitter, and packet loss. These conditions reflect the typical challenges encountered in real-world Tactile Internet applications. The results of the study highlight KF's resilience and effectiveness in achieving accurate state estimation despite network-induced uncertainties with over 90\% estimation accuracy.

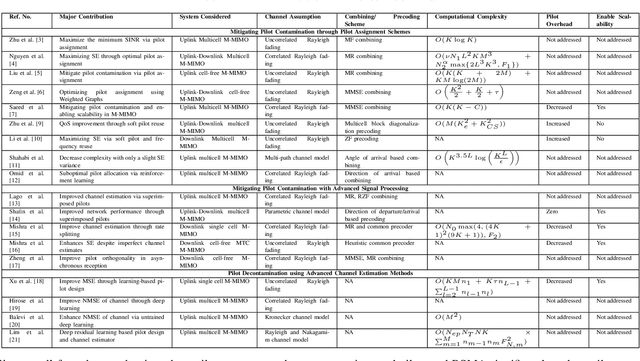

Pilot Contamination in Massive MIMO Systems: Challenges and Future Prospects

Apr 30, 2024

Abstract:Massive multiple input multiple output (M-MIMO) technology plays a pivotal role in fifth-generation (5G) and beyond communication systems, offering a wide range of benefits, from increased spectral efficiency (SE) to enhanced energy efficiency and higher reliability. However, these advantages are contingent upon precise channel state information (CSI) availability at the base station (BS). Ensuring precise CSI is challenging due to the constrained size of the coherence interval and the resulting limitations on pilot sequence length. Therefore, reusing pilot sequences in adjacent cells introduces pilot contamination, hindering SE enhancement. This paper reviews recent advancements and addresses research challenges in mitigating pilot contamination and improving channel estimation, categorizing the existing research into three broader categories: pilot assignment schemes, advanced signal processing methods, and advanced channel estimation techniques. Salient representative pilot mitigation/assignment techniques are analyzed and compared in each category. Lastly, possible future research directions are discussed.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge