Arsenii Gorin

Learning domain-invariant classifiers for infant cry sounds

Nov 30, 2023

Abstract: Domain shift remains a problem in most real-world datasets, and clinical audio is no exception. In this work, we study the nature of domain shift in a clinical database of infant cry sounds acquired across different geographies. We find that although the pitches of infant cries are similarly distributed regardless of the place of birth, other characteristics introduce peculiar biases into the data. We explore methodologies for mitigating the impact of domain shift in a model for identifying neurological injury from cry sounds. We adapt unsupervised domain adaptation methods from computer vision that learn an audio representation which is invariant to the hospital domain yet discriminative for the task. We also propose a new approach, target noise injection (TNI), for unsupervised domain adaptation which requires neither labels nor training data from the target domain. Our best-performing model significantly improves target accuracy by 7.2%, without negatively affecting the source domain.

A cry for help: Early detection of brain injury in newborns

Oct 13, 2023

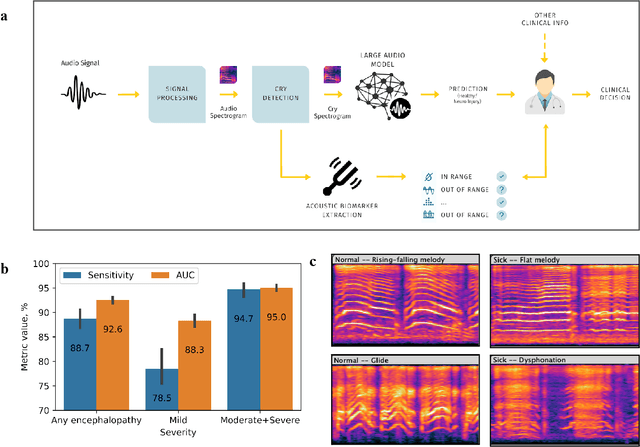

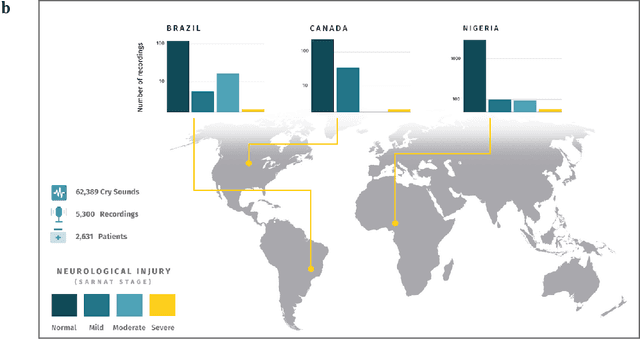

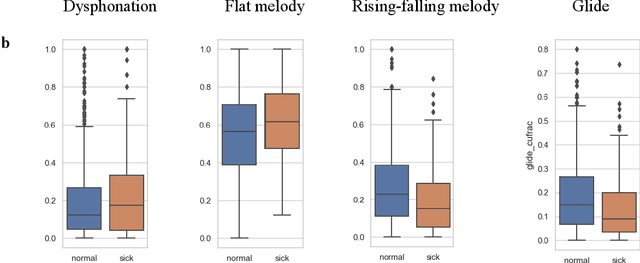

Abstract: Since the 1960s, neonatal clinicians have known that newborns suffering from certain neurological conditions exhibit altered crying patterns, such as the high-pitched cry in birth asphyxia. Despite an annual burden of over 1.5 million infant deaths and disabilities, early detection of neonatal brain injuries due to asphyxia remains a challenge, particularly in developing countries where the majority of births are not attended by a trained physician. Here we report on the first inter-continental clinical study to demonstrate that neonatal brain injury can be reliably determined from recorded infant cries using an AI algorithm we call Roseline. Previous work has been limited by the lack of a large, high-quality clinical database of cry recordings, constraining the application of state-of-the-art machine learning. We develop a new training methodology for audio-based pathology detection models and evaluate this system on a large database of newborn cry sounds acquired from geographically diverse settings -- 5 hospitals across 3 continents. Our system extracts interpretable acoustic biomarkers that support clinical decisions and is able to accurately detect neurological injury from newborns' cries with an AUC of 92.5% (88.7% sensitivity at 80% specificity). Cry-based neurological monitoring opens the door for low-cost, easy-to-use, non-invasive and contact-free screening of at-risk babies, especially when integrated into simple devices like smartphones or neonatal ICU monitors. This would provide a reliable tool where there are no alternatives, and would also curtail the need to regularly subject newborns to physically exhausting or radiation-exposing assessments such as brain CT scans. This work sets the stage for embracing the infant cry as a vital sign and indicates the potential of AI-driven sound monitoring for the future of affordable healthcare.
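The operating point quoted above ("sensitivity at 80% specificity") can be read as: choose a decision threshold on the model's score such that at least 80% of healthy newborns are correctly ruled out, then report the fraction of injured newborns detected at that threshold. The following is a minimal sketch of that calculation, not the paper's evaluation code; the function name, the score convention (higher means more likely injured), and the toy data are assumptions made for illustration.

```python
def sensitivity_at_specificity(scores, labels, target_specificity=0.80):
    """Best sensitivity over thresholds whose specificity meets the target.

    Assumed convention (not from the paper): label 1 = injury, 0 = healthy,
    and a recording is flagged positive when score >= threshold.
    """
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative example")

    best = 0.0
    # Candidate thresholds: every observed score, plus +inf (flag nothing).
    for threshold in sorted(set(scores)) + [float("inf")]:
        specificity = sum(s < threshold for s in negatives) / len(negatives)
        if specificity >= target_specificity:
            sensitivity = sum(s >= threshold for s in positives) / len(positives)
            best = max(best, sensitivity)
    return best


# Hypothetical toy scores: five healthy recordings, four injured ones.
toy_scores = [0.1, 0.2, 0.3, 0.4, 0.9, 0.35, 0.6, 0.7, 0.8]
toy_labels = [0, 0, 0, 0, 0, 1, 1, 1, 1]
toy_result = sensitivity_at_specificity(toy_scores, toy_labels, 0.80)
```

On this toy data the threshold has to sit above 0.4 to keep four of the five healthy scores below it, which leaves three of the four injured recordings detected.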

CryCeleb: A Speaker Verification Dataset Based on Infant Cry Sounds

May 15, 2023

Abstract: This paper describes the Ubenwa CryCeleb dataset - a labeled collection of infant cries, and the accompanying CryCeleb 2023 task - a public speaker verification challenge based on infant cry sounds. We release for academic use more than 6 hours of manually segmented cry sounds from 786 newborns to encourage research in infant cry analysis.

Self-supervised learning for infant cry analysis

May 02, 2023

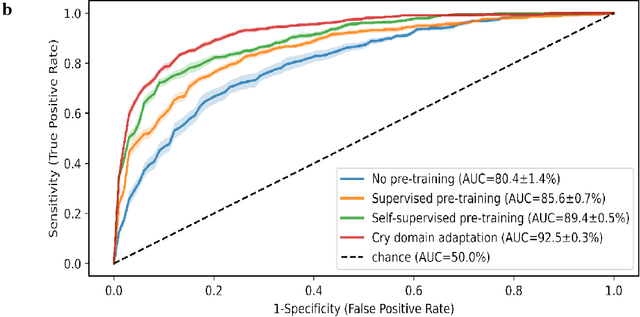

Abstract: In this paper, we explore self-supervised learning (SSL) for analyzing a first-of-its-kind database of cry recordings containing clinical indications of more than a thousand newborns. Specifically, we target cry-based detection of neurological injury as well as identification of cry triggers such as pain, hunger, and discomfort. Annotating a large database in the medical setting is expensive and time-consuming, typically requiring the collaboration of several experts over years. Leveraging large amounts of unlabeled audio data to learn useful representations can lower the cost of building robust models and, ultimately, clinical solutions. In this work, we experiment with self-supervised pre-training of a convolutional neural network on large audio datasets. We show that pre-training with an SSL contrastive loss (SimCLR) performs significantly better than supervised pre-training for both neurological injury detection and cry trigger identification. In addition, we demonstrate further performance gains through SSL-based domain adaptation using unlabeled infant cries. We also show that using such SSL-based pre-training for adaptation to cry sounds reduces the overall system's need for labeled data.
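For readers unfamiliar with the SimCLR contrastive loss named above, the sketch below shows the standard NT-Xent objective on plain Python lists: two augmented views of the same recording should have similar embeddings, while all other embeddings in the batch act as negatives. This is an illustration of the general technique only; the abstract does not state the temperature, batch construction, or augmentations the authors used, so the function names, the `temperature=0.5` default, and the toy vectors are assumptions.

```python
import math


def l2_normalize(vector):
    """Scale a vector to unit length so dot products are cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vector))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vector]


def nt_xent_loss(view_a, view_b, temperature=0.5):
    """NT-Xent loss for N pairs; view_a[i] and view_b[i] are two views of item i.

    The temperature default is an illustrative assumption, not a value
    taken from the paper.
    """
    if len(view_a) != len(view_b) or not view_a:
        raise ValueError("views must be non-empty and the same length")
    n = len(view_a)
    z = [l2_normalize(v) for v in list(view_a) + list(view_b)]

    total = 0.0
    for i in range(2 * n):
        j = (i + n) % (2 * n)  # index of the positive partner of i
        positive = sum(a * b for a, b in zip(z[i], z[j])) / temperature
        # Similarities to every other embedding (the positive included).
        logits = [
            sum(a * b for a, b in zip(z[i], z[k])) / temperature
            for k in range(2 * n)
            if k != i
        ]
        # Log-sum-exp with the max subtracted for numerical stability.
        peak = max(logits)
        log_denominator = peak + math.log(sum(math.exp(l - peak) for l in logits))
        total += log_denominator - positive
    return total / (2 * n)


# Hypothetical 2-D embeddings: matched views versus deliberately mismatched views.
aligned_loss = nt_xent_loss([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
mismatched_loss = nt_xent_loss([[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]])
```

The loss is lower when the paired views agree than when they are swapped, which is the signal that drives the encoder toward augmentation-invariant representations during pre-training.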
